Intel Haswell Info: Single Chip for Ultrabooks, GT3 GPU for Mobile, LGA-1150 for Desktop

by Anand Lal Shimpi on November 9, 2011 5:37 PM EST

VR-Zone spotted a bunch of very interesting slides about Haswell over at Chiphell. The slides and information both look fairly believable, so let's get to the analyzing, shall we?

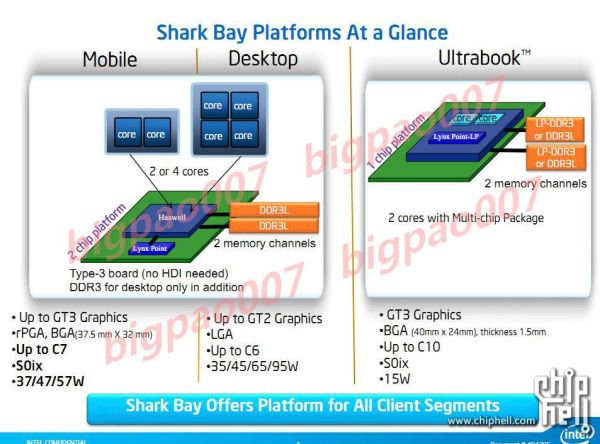

Haswell is Intel's next tock, meaning it's a brand new architecture. Haswell will debut sometime in 2013 on Intel's 22nm process, first introduced with Ivy Bridge at the beginning of 2012. The information on Haswell spans three major platforms: notebooks, desktops and Ultrabooks.

Integration

Haswell for Ultrabooks will be available in a 15W TDP, similar to where SNB-based Ultrabooks are today. The big news here is that Intel will move the PCH (Platform Controller Hub) onto the same package as the CPU, making the Ultrabook version of Haswell a single-chip solution. With Sandy Bridge you needed two parts from Intel, the CPU and the PCH; with Haswell you only need the Haswell MCP (multi-chip package). That's two individual dies on a single package, often the precursor to outright die integration (perhaps at 14nm?). The combined MCP should require a smaller footprint than the CPU + PCH arrangement we have today, allowing for less cramped (or smaller) motherboards and potentially even larger batteries in Ultrabooks. This is a huge move as it really starts to blur the line between Ultrabook and tablet hardware.

While Haswell for Ultrabooks tops out at two cores, you can get 2 or 4 core versions in notebooks and desktops.

Faster Graphics

Both the Ultrabook and notebook Haswell platforms will feature one of three different on-die GPU configurations: GT1, GT2 or GT3. Desktop Haswell will only be offered (as of now) in GT1 or GT2 configurations. No word on the differences between each configuration, but the fact that there are three in Haswell (vs 2 in SNB/IVB) indicates Intel may be exploring an ultra high performance GPU option to further encroach on discrete mobile GPU territory. An even higher performance GPU option for Haswell is something we hinted at in our Ivy Bridge architecture discussion.

Lower Power Memory & A New Socket

The list of memory support is also fairly power optimized. All three Haswell targets will support DDR3L, while the desktop version can use regular DDR3 and the Ultrabook version can use LPDDR3. All three implementations feature two memory channels.

It's important to note that despite Haswell's retarget to focus on 10 - 20W TDPs, the architecture appears to be capable of scaling nearly as high as Sandy Bridge (95W desktop parts will be available, although TDPs may not be directly comparable). This makes sense given that a single architecture can usually span an order of magnitude of TDPs without losing its edge.

Other Haswell features include integrated voltage regulators (should simplify things on the motherboard side), AVX 2.0 instruction support and of course things like AES-NI and Hyper Threading. Haswell will require a new socket: LGA-1150 for desktops.

Final Words

Nothing here is really all that surprising. We knew that faster integrated graphics were coming, but I am curious to see just how powerful this GT3 option will be. The true test is whether or not it will be enough to steer customers like Apple away from including a discrete GPU in, for example, their 15-inch MacBook Pro. From what I've heard, much of Intel's integrated graphics roadmap has been strongly "encouraged" by Apple.

The move to a single-chip solution for a high-end x86 CPU is a pretty significant step. That line between tablets and notebooks is going to become mighty blurry come 2013.

36 Comments

Roland00Address - Wednesday, November 9, 2011

either built into the MacBook Pros themselves, or available via Thunderbolt and an external GPU.

bhima - Monday, January 16, 2012

Thunderbolt does not have the bandwidth to push the kind of graphics one would want from an external graphics card. In fact, I don't think it even has PCI-E v1.0 bandwidth let alone 2.0 or now 3.0.

nofumble62 - Wednesday, November 9, 2011

I can date that back to 486. I had never upgraded the CPU on the following systems: Pentium 2 (Slot), Pentium 3, Athlon, Pentium 4, LGA775, LGA1156. In fact, I always buy the motherboard and CPU as a combo.

CPU upgrading is just a distant memory of those good old days, folks.

Kjella - Friday, November 11, 2011

Duron 700 to Athlon 1.2 here, that was a kick-ass upgrade on the same mobo. Another opportunity was blocked because I wanted PCI Express for graphics, and at the final chance for an upgrade I switched to an Intel Q6600 instead. Intel changes too fast to make sense; even if, say, Sandy Bridge and Ivy Bridge are the same, who upgrades every generation? Even if you upgrade every two years, which is rapid, it still won't happen: Q6600 = LGA775 (2007), Core i7-860 = LGA1156 (2009), Sandy Bridge = LGA1155 (2011), Haswell = LGA1150 (2013). Still, after Bulldozer I'm pretty sure my next computer will be an Intel all the same...

GreenEnergy - Friday, November 11, 2011

The issue is component integration. We want it, we need it. But it also comes with a side effect. Plus voltage control and so on is much more refined.

AMD's FM1 and FM2 are incompatible as well, and you simply get a lot more when they aren't compatible. LGA1156->LGA1155 changed power supply and a few other things. LGA1150 gets on-die VRM, so it's obviously incompatible.

AMD's FX chips didn't work in older boards, so you had to get a new board anyway, should you for whatever reason want one of those CPUs.

We might get reuse of sockets again in the future, as you ask for. There are only the PCH components left for Intel to integrate. A bit longer road for AMD. But essentially it's just a matter of time before boards are some dummy PCB with a few slots on and nothing else, if everything is integrated by that time.

The question is simply, what will be integrated on-die next? Memory to kill discrete GFX? Or SATA, USB, Thunderbolt etc. controllers to yet again make boards smaller and cheaper?

8steve8 - Thursday, November 10, 2011

Why do desktop versions of these chips top off at GT2, while the Ultrabook gets GT3... It seems strange. Good enough graphics in IGP are a good thing, and desktops can spend the heat cost easier than Ultrabooks/notebooks.

Yes, desktop owners can buy PCIe GPUs, but that's missing the point... integration is good, even when there are options. Cost, energy, resources would all be saved if the IGP bar was higher in desktops.

anactoraaron - Thursday, November 10, 2011

Perhaps screen res is an issue here. It's the only thing that really stands out. Maybe the GT3 only outperforms GT2 at your typical low res 1280x800? Otherwise I agree with you. It should at least appear on the i3 or i5 non-K chips and leave the GT2 for the Pentium, Celeron and unlocked K lines.

Arnulf - Thursday, November 10, 2011

Or perhaps the naming isn't meant to indicate that higher number equals higher performance. Take touring car racing series for example: GT1 (incidentally the same name!) is the top-of-the-top and even purpose-built stuff while GT3 is the class closest to production cars (with GT2 in between). So GT1 > GT2 > GT3.

Lonbjerg - Thursday, November 10, 2011

You can put in a discrete GPU if you need the added power on a desktop... not so much in an Ultrabook...

anactoraaron - Thursday, November 10, 2011

IIRC there wasn't really a big enough gain in bandwidth with DDR4 (it was too close to DDR3), which is why GPUs just jumped from DDR3 to DDR5. I would think desktop RAM will follow this as well.