NVIDIA's Tegra 3 Launched: Architecture Revealed

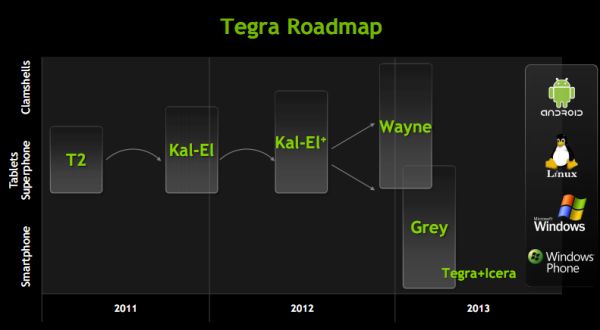

by Anand Lal Shimpi on November 9, 2011 12:34 AM EST

Originally announced in February of this year at MWC, NVIDIA is finally officially launching its next-generation SoC. Previously known under the code name Kal-El, the official name is Tegra 3 and we'll see it in at least one product before the end of the year.

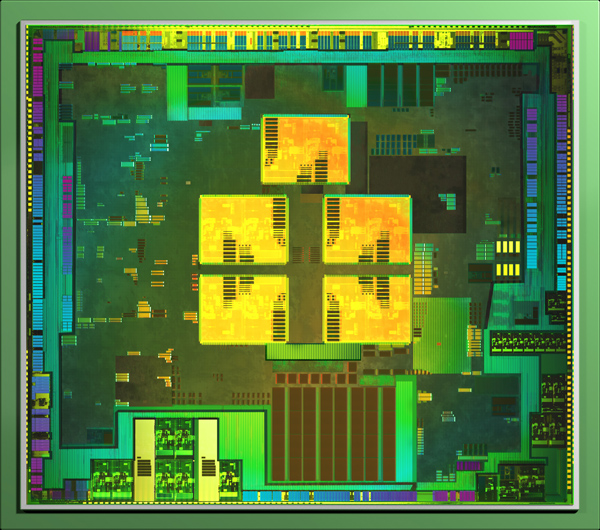

Like Tegra 2 before it, NVIDIA's Tegra 3 is an SoC built on TSMC's 40nm LPG process and aimed at both smartphones and tablets. Die size has almost doubled from 49mm^2 to somewhere in the 80mm^2 range.

The Tegra 3 design is unique in the industry as it is the first to put four ARM Cortex A9s on a chip aimed at the bulk of the high end Android market. NVIDIA's competitors have focused on ramping up the performance of their dual-core solutions either through higher clocks (Samsung Exynos) or through higher performing microarchitectures (Qualcomm Krait, ARM Cortex A15). While other companies have announced quad-core ARM based solutions, Tegra 3 will likely be the first (and only) to ship in an Android tablet and smartphone in the 2011 to 2012 timeframe.

NVIDIA will eventually focus on improving per-core performance with subsequent iterations of the Tegra family (perhaps starting with Wayne in 2013), but until then Tegra 3 attempts to increase performance by exploiting thread level parallelism in Android.

GPU performance also gets a boost thanks to a larger and more efficient GPU in Tegra 3, but first let's talk about the CPU.

Tegra 3's ~~Four~~ Five Cores

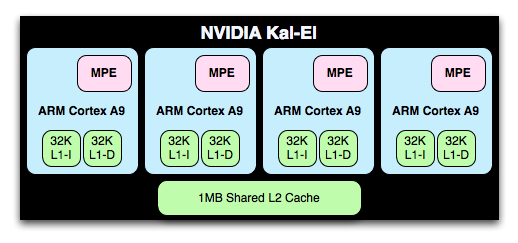

The Cortex A9 implementation in Tegra 3 is an improvement over Tegra 2; each core now includes full NEON support via an ARM MPE (Media Processing Engine). Tegra 2 lacked any support for NEON instructions in order to keep die size small.

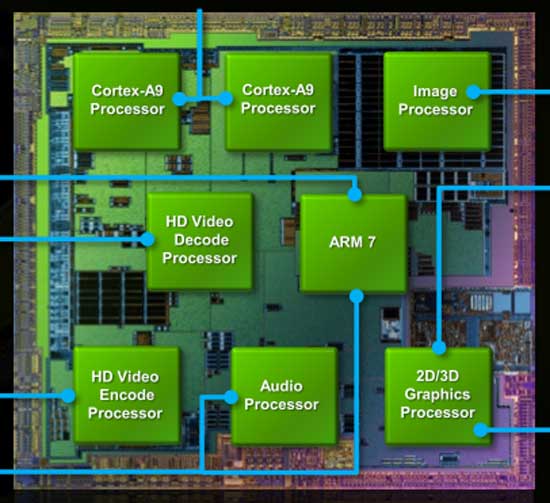

NVIDIA's Tegra 2 die

NVIDIA's Tegra 3 die, A9 cores highlighted in yellow

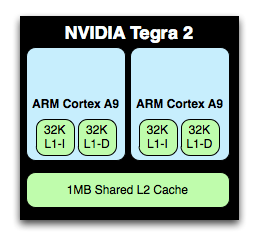

L1 and L2 cache sizes remain unchanged. Each core has a 32KB/32KB (instruction/data) L1 and all four share a 1MB L2 cache. Doubling core count over Tegra 2 without a corresponding increase in L2 cache size is a bit troubling, but it does indicate that NVIDIA doesn't expect the majority of use cases to saturate all four cores. L2 cache latency is 2 cycles faster on Tegra 3 than on Tegra 2, while L1 cache latencies haven't changed. NVIDIA isn't commenting on L2 frequencies at this point.
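To put the unchanged L2 in perspective, here is a quick back-of-the-envelope calculation in Python. It assumes the 1MB L2 is divided evenly among the active cores, which is a simplification: a shared cache isn't statically partitioned, and real behavior depends on the workload. The function name is ours for illustration, not anything from NVIDIA.

```python
L2_SIZE_KB = 1024  # 1MB shared L2 on both Tegra 2 and Tegra 3 (per the article)


def l2_share_kb(active_cores: int) -> float:
    """Average L2 capacity per core if all active cores compete equally.

    Assumption: an even split. A real shared cache is not partitioned this way.
    """
    if active_cores < 1:
        raise ValueError("need at least one active core")
    return L2_SIZE_KB / active_cores


tegra2_share = l2_share_kb(2)  # dual-core Tegra 2, both cores busy
tegra3_share = l2_share_kb(4)  # quad-core Tegra 3, all four cores busy

print(f"Tegra 2: {tegra2_share:.0f}KB per core")
print(f"Tegra 3: {tegra3_share:.0f}KB per core")
```

Under that assumption each core's average share halves when all four cores are busy, which is why the unchanged L2 only matters for workloads that actually load every core.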

The A9s in Tegra 3 can run at a higher max frequency than those in Tegra 2. With 1 core active, the max clock is 1.4GHz (up from 1.0GHz in the original Tegra 2 SoC). With more than one core active however the max clock is 1.3GHz. Each core can be power gated in Tegra 3, which wasn't the case in Tegra 2. This should allow for lightly threaded workloads to execute on Tegra 3 in the same power envelope as Tegra 2. It's only in those applications that fully utilize more than two cores that you'll see Tegra 3 drawing more power than its predecessor.

The increase in clock speed and the integration of MPE should improve performance a bit over Tegra 2 based designs, but obviously the real hope for performance improvement comes from using four of Tegra 3's cores. Android is already well threaded so we should see gains in portions of things like web page rendering.

It's an interesting situation that NVIDIA finds itself in. Tegra 3 will show its biggest performance advantage in applications that can utilize all four cores, yet it will be most power efficient in applications that use as few cores as possible.

There's of course a fifth Cortex A9 on Tegra 3, limited to a maximum clock speed of 500MHz and built using LP transistors like the rest of the chip (and unlike the four-core A9 cluster). NVIDIA intends for this companion core to be used for the processing of background tasks, for example when your phone is locked and in your pocket. In light use cases where the companion core is active, the four high performance A9s will be power gated and overall power consumption should be tangibly lower than Tegra 2.

Despite Tegra 3 featuring a total of five Cortex A9 cores, only four can be active at one time. Furthermore, the companion core cannot be active alongside any of the high performance A9s. Either the companion core is enabled and the quad-core cluster disabled or the opposite.

NVIDIA handles all of the core juggling through its own firmware. Depending on the level of performance Android requests, NVIDIA will either enable the companion core or one or more of the four remaining A9s. The transition should be seamless to the OS and as all of the cores are equally capable, any apps you're running shouldn't know the difference between them.
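The rules above can be captured in a small Python sketch. To be clear, this is not NVIDIA's firmware logic, which hasn't been disclosed. The `CoreState` type, the `choose_state` function, and the idea of expressing demand as a single aggregate MHz figure are all our own assumptions for illustration. Only the limits (500MHz companion core, 1.4GHz with one core active, 1.3GHz with more than one, and companion and main cores never active together) come from the details above.

```python
import math
from dataclasses import dataclass

COMPANION_MAX_MHZ = 500     # companion core clock ceiling
SINGLE_CORE_MAX_MHZ = 1400  # max clock with exactly one main core active
MULTI_CORE_MAX_MHZ = 1300   # max clock with more than one main core active
MAIN_CORES = 4


@dataclass(frozen=True)
class CoreState:
    """Hypothetical snapshot of which Tegra 3 cores are powered."""
    companion_on: bool
    main_cores_on: int

    def __post_init__(self):
        if not 0 <= self.main_cores_on <= MAIN_CORES:
            raise ValueError("main_cores_on must be between 0 and 4")
        # The companion core and the quad-core cluster are mutually exclusive.
        if self.companion_on and self.main_cores_on > 0:
            raise ValueError("companion core cannot run alongside main cores")


def choose_state(demand_mhz: float) -> CoreState:
    """Pick a core configuration for an abstract performance demand.

    Assumption: demand is a single aggregate MHz figure and scales
    perfectly across cores. Real workloads do not behave this way.
    """
    if demand_mhz <= COMPANION_MAX_MHZ:
        return CoreState(companion_on=True, main_cores_on=0)
    if demand_mhz <= SINGLE_CORE_MAX_MHZ:
        return CoreState(companion_on=False, main_cores_on=1)
    needed = math.ceil(demand_mhz / MULTI_CORE_MAX_MHZ)
    return CoreState(companion_on=False, main_cores_on=min(needed, MAIN_CORES))


for demand in (300, 1400, 2000, 10000):
    print(demand, choose_state(demand))
```

Constructing `CoreState(companion_on=True, main_cores_on=1)` raises a `ValueError`, mirroring the restriction that the companion core can never be active alongside any of the high performance A9s.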

94 Comments

psychobriggsy - Friday, November 11, 2011

By using 40nm NVIDIA has achieved a first to market advantage in the high-end quad-core SoC for tablets. Obviously this comes at the cost of a larger die, higher power consumption and/or slower clock speeds.

The larger die will add some cost to the product, but it's hardly a problem given that it is still quite small in the grand scheme of things. I believe it is smaller than the A5 for example. In addition mature yields on the 40nm process may allow NVIDIA to ship millions without worry rather than risk early 28nm yields.

Tegra 3 was meant to clock to over 1.5GHz, and this hasn't been achieved; 1.3GHz was probably the better option for power consumption. 28nm will fix this for Tegra 3+ next year, hopefully.

In addition the low power core gives NVIDIA an early entry into the low-power companion core market a year or two before the ARM Cortex A15 + ARM Cortex A7 combos arrive. This is another reason it is 40nm - TSMC don't have the ability to fab 28nm dies with a combination of processes (LP and HP) on the same die yet.

So the die might cost a couple of dollars more to make vs Tegra 2, but I'm sure they can charge a premium for the product until the competitors arrive.

Paulman - Wednesday, November 9, 2011

Wow, I'm amazed by the response times. It looks pretty seamless (i.e. the switching to and from the low-power transistor companion core). From a GUI perspective, there doesn't appear to be any stutter at all.

Looks like a good job, NVIDIA :O

P.S. Speaking of low-power transistors, that's ingenious to build an entire core out of low-power transistors on the same die as the four regular cores. I wonder if that's an idea that's been floating around in the field for awhile...

dagamer34 - Wednesday, November 9, 2011

You think using LP transistors is something, see big.LITTLE coming from ARM in 2012-2013. ARM designed an entire core to be specifically low power (the Cortex A7) to fit perfectly with the more powerful Cortex A15, so that you get even greater performance with even greater power savings.

Mugur - Wednesday, November 9, 2011

Yes, but Tegra 3 is already here...

Draiko - Wednesday, November 9, 2011

It seems like nVidia and ARM co-developed this kind of architecture. nVidia is implementing it in the Tegra 3 and ARM is making it available for license with big.LITTLE.

I'm just blown away with how smooth the dynamic threading is on the Tegra 3. This is going to be an absolute game-changer.

JonnyDough - Wednesday, November 9, 2011

That's because it isn't loaded down with crapware like the Blockbuster app...yet.

metafor - Wednesday, November 9, 2011

IIRC, Marvell's Sheeva processors use this method (came out ~2010 I believe).

jcompagner - Thursday, November 10, 2011

Intel has also been doing this for quite some time.

Wasn't the SATA bug they had a result of something like this?

There, too, the wrong type of transistor was used.

Omega215D - Wednesday, November 9, 2011

Seeing that the architecture has a sound processor in it, is there any chance that nVidia could revive SoundStorm for the mobile platform? That would be great for things like the Transformer and other tablets as well as smart phones for multimedia purposes. Just a thought.

ggathagan - Wednesday, November 9, 2011

Given the Tegra 3 already includes HD audio and 7.1 support, I'm not clear on what feature you think SoundStorm would add.