Bulldozer for Servers: Testing AMD's "Interlagos" Opteron 6200 Series

by Johan De Gelas on November 15, 2011 5:09 PM EST

Maxwell Render Suite

Next Limit, the developer of Maxwell Render Suite, aims to deliver a renderer that is physically correct and capable of simulating light exactly as it behaves in the real world. As a result, the software has developed a reputation for being powerful but slow. And "powerful but slow" always attracts our interest, as such software can make for an interesting benchmark on the latest CPU platforms. Maxwell Render 2.6 was released less than two weeks ago, on November 2, and that is the version we used.

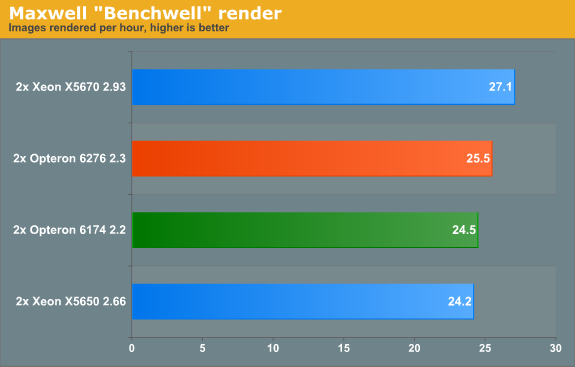

We used the "Benchwell" benchmark, a scene with HDRI (high dynamic range imaging) developed by the user community. Note that we used the "30 day trial" version of Maxwell. We converted the reported render time into images rendered per hour to make the numbers easier to interpret.
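The conversion from render time to images per hour can be sketched as follows. This is a minimal illustration that assumes the reported time is in seconds; the 150-second render time is a made-up input, not a measured result.

```python
def images_per_hour(render_seconds: float) -> float:
    """Convert the time needed to render one image into images rendered per hour."""
    if render_seconds <= 0:
        raise ValueError("render time must be positive")
    return 3600.0 / render_seconds


# Hypothetical render time, chosen only to illustrate the conversion.
example_seconds = 150.0
print(images_per_hour(example_seconds))
```

Because the score is the reciprocal of the render time, a shorter render gives a higher number, which is why higher is better in the charts.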

Since Magny-Cours made its entrance, AMD has done rather well in the rendering benchmarks, and Maxwell is no different. The Bulldozer-based Opteron 6276 gives decent but hardly stunning performance: about 4% faster than its predecessor. Interestingly, the Maxwell renderer is not limited by SSE (floating point) performance. When we disabled CMT, the AMD Opteron 6276 delivered only 17 images per hour. In other words, the extra integer cluster delivers 44% higher performance. Disabling CMT also disables the second load/store unit, and there is a good chance that this explains much of the gain that the second integer cluster delivers.
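The CMT comparison is simple arithmetic and can be sketched as below. The score of 17 with CMT disabled and the 44% gain come from the text; the score with CMT enabled is not quoted there, so the value computed here (roughly 24.5) is only what those two numbers imply, not a separately measured result. The function names are illustrative.

```python
def percent_gain(baseline: float, improved: float) -> float:
    """Relative improvement of `improved` over `baseline`, in percent."""
    return (improved - baseline) / baseline * 100.0


def apply_gain(baseline: float, gain_percent: float) -> float:
    """Score obtained when `baseline` improves by `gain_percent` percent."""
    return baseline * (1.0 + gain_percent / 100.0)


score_without_cmt = 17.0  # from the text: CMT disabled
cmt_gain_percent = 44.0   # from the text: gain from the second integer cluster

# Implied score with CMT enabled (derived, not quoted in the article).
implied_with_cmt = apply_gain(score_without_cmt, cmt_gain_percent)
print(round(implied_with_cmt, 1))
```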

Rendering: Blender 2.6.0

Blender is a very popular open source renderer with a large community. We tested with the 64-bit Windows version 2.6.0a. If you like, you can easily run this benchmark yourself. We used the metallic robot, a scene with rather complex lighting (reflections) and raytracing. To make the benchmark more repeatable, we changed the following parameters:

- The resolution was set to 2560x1600

- Antialias was set to 16

- We disabled compositing in post processing

- Tiles were set to 8x8 (X=8, Y=8)

- Threads were set to auto (one thread per CPU).
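For readers who want to reproduce the setup, the parameters above could be collected in a small script along these lines. This is only a sketch: the property names are assumed to match the render settings in Blender 2.6x's Python API and are not taken from the article, and a stand-in object is used so the code runs outside Blender. Inside Blender, one would presumably pass the scene's render settings object instead.

```python
from types import SimpleNamespace

# Assumption: these attribute names mirror Blender 2.6x render settings.
# Verify them against the API documentation of the Blender build in use.
BENCHMARK_SETTINGS = {
    "resolution_x": 2560,          # resolution 2560x1600
    "resolution_y": 1600,
    "use_antialiasing": True,
    "antialiasing_samples": "16",  # antialias set to 16
    "use_compositing": False,      # compositing disabled in post processing
    "parts_x": 8,                  # tiles X=8
    "parts_y": 8,                  # tiles Y=8
    "threads_mode": "AUTO",        # threads set to auto
}


def apply_settings(render, settings=BENCHMARK_SETTINGS):
    """Copy every benchmark setting onto a render-settings-like object."""
    for name, value in settings.items():
        setattr(render, name, value)
    return render


# Stand-in for the real render settings object (hypothetical, for illustration).
render = apply_settings(SimpleNamespace())
print(render.resolution_x, render.resolution_y, render.parts_x, render.parts_y)
```

Keeping the settings in one dictionary makes it easy to confirm that every test system rendered the scene with identical parameters.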

To make the results easier to read, we again converted the reported render time into images rendered per hour, so higher is better.

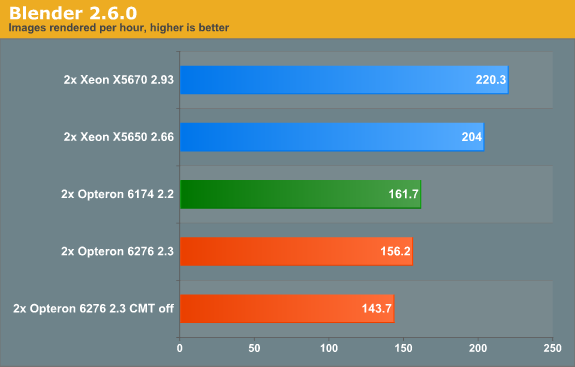

The last time we checked (Blender 2.5a2) in Windows, the Xeon X5670 was capable of 136 images per hour, while the Opteron 6174 did 113. So the Xeon was about 20% faster. Now the gap has widened: the Xeon is 36% faster. The interesting thing that we discovered is that the Opteron is quite a bit faster when benchmarked in Linux. We will follow up with some Linux numbers in the next article. In this benchmark, the Opteron 6276 is 4% slower than its older brother, again likely due in part to the newness of its architecture.

106 Comments

mino - Wednesday, November 16, 2011

It most likely had to do with you running it on NetBurst (judging by no VT-x moniker). As much to do with VT-x as with a crappy CPU ... with bus architecture. Ah, thank god they are dead.

JustTheFacts - Wednesday, November 16, 2011

Please explain why there is no comparison between the latest AMD processors and Intel's flagship two-way server processors: the Intel Westmere-EX E7-28xx processor family? Lest you forget about them, you can find your own benchmarks of this flagship Intel processor here: http://www.anandtech.com/show/4285/westmereex-inte...

Take the gloves off and compare flagship against flagship please, and then scale the results to reflect the price difference if you have to, but there's no good reason not to compare them that I can see. Thanks.

duploxxx - Thursday, November 17, 2011

Westmere-EX 2-socket is dead; it will be killed by Intel's own platform, called Romley, which will have 2P and 4P. It was a stupid platform from the start and overrated by sales/consultants with their so-called huge memory support.

aka_Warlock - Wednesday, November 16, 2011

I think you should have done a more thorough VM test than you did. 64GB RAM? We all know single-threaded performance is weak, but I still feel the servers are underutilized in your test.

These CPUs are screaming for heavy multithreaded workloads. Many VMs. Many vCPUs.

What would the performance be if you had, say, at least 192GB of RAM and 50 (maybe more) VMs on it?

And of course, storage should not be a bottleneck.

I think this is where this 8 modules/16 threads CPU would shine.

A dual socket rack/blade. 16 modules/32 threads.

Loads of RAM and a bunch of VMs.

iwod - Wednesday, November 16, 2011

It is power hungry, isn't any better than Intel, and it is only slightly cheaper, at the cost of a higher electricity bill. So unless some software optimization magically shows AMD is good at something, I think they are pretty much doomed.

It is like the Pentium 4, except Intel can afford to make one or two mistakes, but AMD cannot.

mino - Wednesday, November 16, 2011

Then the article served its purpose well.

SunLord - Wednesday, November 16, 2011

So is the AMD system running 8GB DDR3-1600 DIMMs or 4GB DDR3-1333? Because you list the same DDR3-1333 model for both systems, and if the server supports 16 DIMMs, well, 16*4 is 64GB.

JohanAnandtech - Thursday, November 17, 2011

Copy and paste error, fixed. We used DDR3-1600 (Samsung).

Johnmcl7 - Wednesday, November 16, 2011

I have wondered about this: with more cores per socket and virtualisation (organising a new set of servers and buying far less hardware for the same functionality), I'd have thought that in total less server hardware is being purchased. Clearly that isn't the case though; is the money made back from more expensive servers?

John

bruce24 - Wednesday, November 16, 2011

Sure, with each new generation of servers you need much less hardware to do the same amount of work; however, worldwide, people are looking for servers to do much more work. Each year companies like Google, Facebook, Amazon, Microsoft and Apple add much more computing power than they could get by refreshing their current servers.