Bulldozer for Servers: Testing AMD's "Interlagos" Opteron 6200 Series

by Johan De Gelas on November 15, 2011 5:09 PM EST

Virtualization Performance: ESX + Windows

vApus Mark II is our own virtualization benchmark suite; it tests how well servers cope with virtualizing "heavy duty applications" on top of Windows Server 2008. We explained the benchmark methodology here. The vApus Mark II tile consists of five VMs:

- Three IIS web servers running Windows 2003 R2, each getting two vCPUs

- One MS SQL Server 2008 x64 running on top of Windows 2008 R2 x64. This VM has eight vCPUs, which makes EPT/RVI (Hardware Assisted Paging) very important.

- An OLTP Oracle 11g R2 database VM on top of Windows 2008 R2 x64. The VM runs the Swingbench 2.2 "Calling Circle" benchmark.

We test with two tiles, good for 36 vCPUs.
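The vCPU total can be checked with a small sketch. Note that the text does not state how many vCPUs the Oracle VM gets; the value of four below is an assumption, inferred only from the stated total of 36 vCPUs across two tiles. The dictionary keys are illustrative labels, not names used by the benchmark.

```python
# vCPUs assigned to each VM in one vApus Mark II tile.
TILE_VCPUS = {
    "iis_web_1": 2,        # stated: each IIS VM gets two vCPUs
    "iis_web_2": 2,
    "iis_web_3": 2,
    "mssql_2008": 8,       # stated: eight vCPUs
    "oracle_11g_oltp": 4,  # ASSUMPTION: not stated, inferred from the 36 vCPU total
}


def total_vcpus(tile, tile_count):
    """Return the number of vCPUs scheduled when running `tile_count` tiles."""
    return sum(tile.values()) * tile_count


if __name__ == "__main__":
    print(total_vcpus(TILE_VCPUS, 1))  # vCPUs in a single tile
    print(total_vcpus(TILE_VCPUS, 2))  # vCPUs in the two-tile test
```

Under that assumption, one tile accounts for 18 vCPUs and the two-tile run for 36, which matches the figure quoted above.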

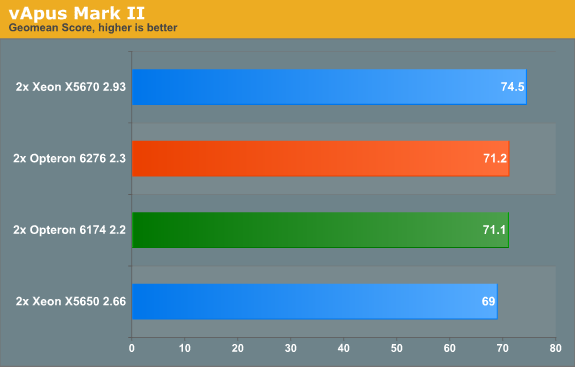

Again, the Opteron 6276 delivers very respectable performance per dollar, offering 96% of the performance of a Xeon that costs almost twice as much. But the fact remains that the new Opteron fails to open up a meaningful performance gap over the old Opteron.
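To put the performance-per-dollar remark in numbers, here is a rough sketch. The 96% figure comes from the text; the price ratios are hypothetical placeholders for "almost twice as much", not actual list prices, and the function name is our own.

```python
def perf_per_dollar_advantage(relative_perf, price_ratio):
    """Performance-per-dollar advantage of the cheaper chip.

    relative_perf: cheaper chip's performance as a fraction of the pricier chip's.
    price_ratio:   pricier chip's price divided by the cheaper chip's price.
    """
    if relative_perf <= 0 or price_ratio <= 0:
        raise ValueError("both arguments must be positive")
    return relative_perf * price_ratio


if __name__ == "__main__":
    OPTERON_RELATIVE_PERF = 0.96  # from the text: 96% of the Xeon's score
    # ASSUMPTION: hypothetical price ratios bracketing "almost twice as much".
    for price_ratio in (1.6, 1.8, 2.0):
        advantage = perf_per_dollar_advantage(OPTERON_RELATIVE_PERF, price_ratio)
        print(f"price ratio {price_ratio:.1f}x -> {advantage:.2f}x performance per dollar")
```

Even at the low end of these assumed ratios the cheaper chip comes out well ahead per dollar, which is the point the paragraph makes; the exact advantage depends on the real prices.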

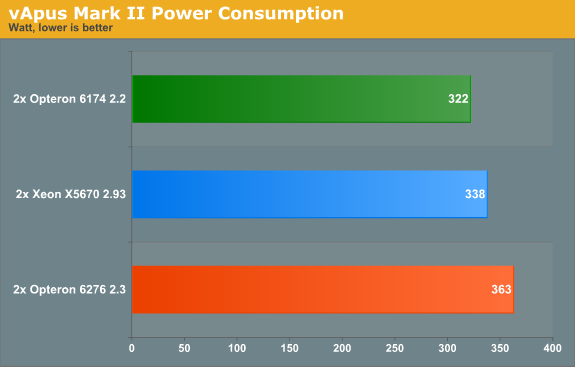

The Xeon has the lower TDP on paper (95W), so let us check the measured power consumption.

After this benchmark we were convinced that, for some reason, the power management features of the Opteron 6276 are not being used properly under ESX. We investigated the matter in more detail.

106 Comments

Kevin G - Tuesday, November 15, 2011

I'm curious whether CPU-Z polls the hardware for this information or queries a database to fetch it. If it is getting the core and thread count from hardware, it may be configurable. So while the chip itself does not use Hyperthreading, it may be reporting to the OS that it does by default. This would have an impact on performance scaling as well as power consumption as load increases.

MrSpadge - Tuesday, November 15, 2011

They are integer cores, which share few resources besides the FPU. On the Intel side there are two threads running concurrently (always, @Stuka87) which share a few less resources.

Arguing which one deserves the name "core" and which one doesn't is almost a moot point. However, both designs are not that different regarding integer workloads. They're just using a different amount of shared resources.

People should also keep in mind that a core does not necessarily equal a core. Each Bulldozer core (or half module) is actually weaker than in Athlon 64 designs. It got some improvements but lost in some other areas. On the other hand Intel's current integer cores are quite strong and fat - and it's much easier to share resources (between 2 hyperthreaded threads) if you've got a lot of them.

MrS

leexgx - Wednesday, November 16, 2011

But on the Intel side there are only 4 real cores with HT off or on (on an i7 920 HT seems to give a benefit, but in results for the second-gen 2600K HT seems less important), whereas on AMD there are 4 cores with each core having 2 FP in them (desktop CPU). The issue is that the FPs are 10-30% slower than a Phenom CPU clocked at the same speed.

anglesmith - Tuesday, November 15, 2011

Which version of Windows 2008 R2 SP1 x64 was used: Enterprise, Datacenter, or Standard?

Lord 666 - Tuesday, November 15, 2011

People who are purchasing SB-E will be doing similar stuff on workstations. Where are those numbers?

Kevin G - Tuesday, November 15, 2011

Probably waiting in the pipeline for SB-E based Xeons. Socket LGA-2011 based Xeons are still several months away.

Sabresiberian - Tuesday, November 15, 2011

I'm not so sure I'd fault AMD too much because 95% of the people who use their product, in this case, won't go through the effort of upgrading their software to get a significant performance increase, at least at first. Sometimes, you have to "force" people to get out of their rut and use something that's actually better for them.

I freely admit that I don't know much about running business apps; I build gaming computers for personal use. I can't help but think of my Father though, complaining about Vista and Win 7 and how they won't run his old, freeware apps properly. Hey, Dad, get the people that wrote those apps to upgrade them, won't you? It's not Microsoft's fault that they won't bring them up to date.

Backwards compatibility can be a stone around the neck of progress.

I've tended to be disappointed in AMD's recent CPU releases as well, but maybe they really do have an eye focused on the future that will bring better things for us all. If that's the case, though, they need to prove it now, and stop releasing biased press reports that don't hold up when these things are benched outside of their labs.

;)

JohanAnandtech - Tuesday, November 15, 2011

The problem is that a lot of server folks buy new servers to run the current or older software faster. It is a matter of TCO: they have invested a lot of work into getting web application x.xx to work optimally with interface y.yy and database zz.z. The vendor wants to offer a service, not the latest technology. Only if the service gets added value from the newest technology might they consider upgrading.

And you should tell your dad to run his old software in VirtualBox :-).

Sabresiberian - Wednesday, November 16, 2011

Ah I hadn't thought of it in terms of services, which is obvious now that you say it. Thanks for educating me!

;)

IlllI - Tuesday, November 15, 2011

amd was shooting to capture 25% of the market? (this was like when the first amd64 chips came out)