Bulldozer for Servers: Testing AMD's "Interlagos" Opteron 6200 Series

by Johan De Gelas on November 15, 2011 5:09 PM EST

Maxwell Render Suite

Next Limit, the developers of Maxwell Render Suite, aim to deliver a renderer that is physically correct and capable of simulating light exactly as it behaves in the real world. As a result, their software has developed a reputation for being powerful but slow. And "powerful but slow" always attracts our interest, as such software can make for an interesting benchmark on the latest CPU platforms. Maxwell Render 2.6 was released less than two weeks ago, on November 2, and that's what we used.

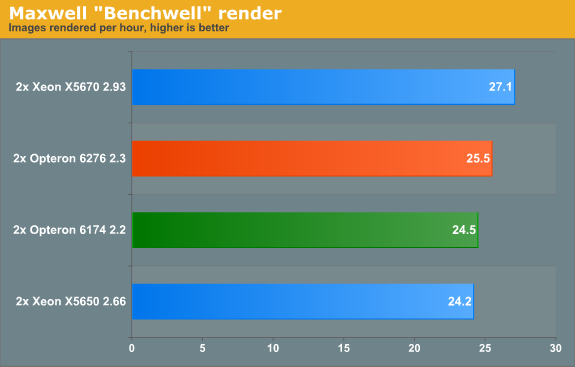

We used the "Benchwell" benchmark, a scene with HDRI (high dynamic range imaging) developed by the user community. Note that we used the "30 day trial" version of Maxwell. We converted the reported render time into images rendered per hour to make the numbers easier to interpret.
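The conversion from render time to images per hour is simple arithmetic. A minimal sketch, assuming the render time is reported in seconds per image; the function name and the sample times are hypothetical and are not measurements from this review:

```python
def images_per_hour(render_seconds: float) -> float:
    """Convert the render time of one image (in seconds) to images per hour."""
    if render_seconds <= 0:
        raise ValueError("render time must be positive")
    return 3600.0 / render_seconds


# Hypothetical render times, used only to illustrate the conversion.
for seconds in (120.0, 150.0):
    rate = images_per_hour(seconds)
    print(f"{seconds:.0f} s per image = {rate:.1f} images per hour")
```

Expressing the result as a rate means that a higher number is better, which reads more naturally in a bar chart than raw render times.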

Since Magny-Cours made its entrance, AMD has done rather well in the rendering benchmarks, and Maxwell is no different. The Bulldozer-based Opteron 6276 gives decent but hardly stunning performance: about 4% faster than its predecessor. Interestingly, the Maxwell renderer is not limited by SSE (floating point) performance. When we disabled CMT, the AMD Opteron 6276 delivered only 17 images per hour. In other words, the second integer cluster delivers 44% higher performance. Disabling CMT also disables the second load/store unit, and there is a good chance that this is why the second integer cluster contributes so much.
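The two CMT figures quoted above imply a score for the default, CMT-enabled configuration. A rough sketch of that arithmetic, assuming the 44% gain is measured relative to the CMT-disabled score of 17; the function and variable names are illustrative only:

```python
def score_with_gain(baseline: float, gain_percent: float) -> float:
    """Return the score obtained by improving `baseline` by `gain_percent`."""
    return baseline * (1.0 + gain_percent / 100.0)


cmt_off_score = 17.0     # score quoted in the text with CMT disabled
cmt_gain_percent = 44.0  # gain attributed in the text to the second integer cluster

# Implied score with CMT enabled; derived from the two numbers above,
# not read from the benchmark chart.
cmt_on_score = score_with_gain(cmt_off_score, cmt_gain_percent)
print(f"Implied score with CMT enabled: {cmt_on_score:.1f}")
```

Under that assumption the CMT-enabled result works out to roughly 24.5 in the same unit as the quoted score.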

Rendering: Blender 2.6.0

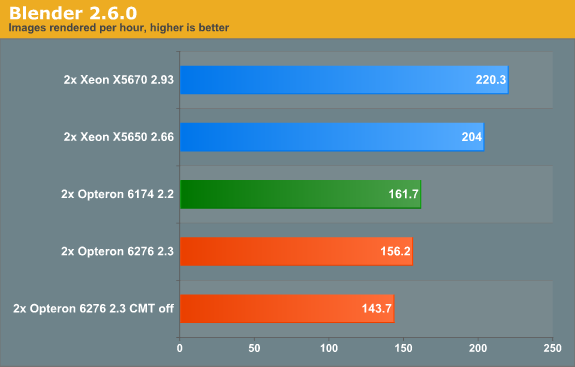

Blender is a very popular open source renderer with a large community. We tested with the 64-bit Windows version 2.6.0a. If you like, you can easily run this benchmark yourself. We used the metallic robot, a scene with rather complex lighting (reflections) and raytracing. To make the benchmark more repeatable, we changed the following parameters:

- The resolution was set to 2560x1600

- Antialias was set to 16

- We disabled compositing in post processing

- Tiles were set to 8x8 (X=8, Y=8)

- Threads was set to auto (one thread per CPU)

To make the results easier to read, we again converted the reported render time into images rendered per hour, so higher is better.

Last time we checked (Blender 2.5a2) in Windows, the Xeon X5670 was capable of 136 images per hour, while the Opteron 6174 did 113, so the Xeon was about 20% faster. Now the gap has widened: the Xeon is 36% faster. Interestingly, we discovered that the Opteron is quite a bit faster when benchmarked in Linux. We will follow up with some Linux numbers in the next article. In this benchmark the Opteron 6276 is 4% slower than its older brother, again likely due in part to the newness of its architecture.
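The "about 20% faster" figure for the older Blender run follows directly from the two scores quoted above. A small sketch of that comparison, using only the 136 and 113 images per hour from the text; the helper name is hypothetical:

```python
def percent_faster(score_a: float, score_b: float) -> float:
    """Return how much faster `score_a` is than `score_b`, in percent."""
    return (score_a / score_b - 1.0) * 100.0


xeon_x5670 = 136.0    # images per hour, Blender 2.5a2 on Windows (from the text)
opteron_6174 = 113.0  # images per hour, same run (from the text)

gap = percent_faster(xeon_x5670, opteron_6174)
print(f"Xeon X5670 lead over Opteron 6174: {gap:.1f}%")
```

Note that the percentage depends on which score is the baseline: the Xeon being about 20% faster is not the same as the Opteron being 20% slower.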

106 Comments

Kevin G - Tuesday, November 15, 2011 - link

I'm curious if CPU-Z polls the hardware for this information or if it queries a database to fetch it. If it is getting the core and thread count from hardware, it may be configurable. So while the chip itself does not use Hyperthreading, it may be reporting to the OS that it does by default. This would have an impact on performance scaling as well as power consumption as load increases.

MrSpadge - Tuesday, November 15, 2011 - link

They are integer cores, which share few resources besides the FPU. On the Intel side there are two threads running concurrently (always, @Stuka87) which share a few less resources.

Arguing which one deserves the name "core" and which one doesn't is almost a moot point. However, both designs are not that different regarding integer workloads. They're just using a different amount of shared resources.

People should also keep in mind that a core does not necessarily equal a core. Each Bulldozer core (or half module) is actually weaker than in Athlon 64 designs. It got some improvements but lost in some other areas. On the other hand Intel's current integer cores are quite strong and fat - and it's much easier to share resources (between 2 hyperthreaded threads) if you've got a lot of them.

MrS

leexgx - Wednesday, November 16, 2011 - link

But on the Intel side there are only 4 real cores with HT off or on (on an i7 920 it seems to give a benefit, but on results for the second-gen 2600K HT seems less important), whereas on AMD there are 4 cores with each core having 2 FP in them (desktop CPU). The issue is the FPs are 10-30% slower than a Phenom CPU clocked at the same speed.

anglesmith - Tuesday, November 15, 2011 - link

Which version of Windows 2008 R2 SP1 x64 was used: Enterprise, Datacenter, or Standard?

Lord 666 - Tuesday, November 15, 2011 - link

People who are purchasing SB-E will be doing similar stuff on workstations. Where are those numbers?

Kevin G - Tuesday, November 15, 2011 - link

Probably waiting in the pipeline for SB-E based Xeons. Socket LGA-2011 based Xeons are still several months away.

Sabresiberian - Tuesday, November 15, 2011 - link

I'm not so sure I'd fault AMD too much because 95% of their product's users, in this case, won't go through the effort of upgrading their software to get a significant performance increase, at least at first. Sometimes, you have to "force" people to get out of their rut and use something that's actually better for them.

I freely admit that I don't know much about running business apps; I build gaming computers for personal use. I can't help but think of my Father though, complaining about Vista and Win 7 and how they won't run his old, freeware apps properly. Hey, Dad, get the people that wrote those apps to upgrade them, won't you? It's not Microsoft's fault that they won't bring them up to date.

Backwards compatibility can be a stone around the neck of progress.

I've tended to be disappointed in AMD's recent CPU releases as well, but maybe they really do have an eye focused on the future that will bring better things for us all. If that's the case, though, they need to prove it now, and stop releasing biased press reports that don't hold up when these things are benched outside of their labs.

;)

JohanAnandtech - Tuesday, November 15, 2011 - link

The problem is that a lot of server folks buy new servers to run the current or older software faster. It is a matter of TCO: they have invested a lot of work into getting web application x.xx to work optimally with interface y.yy and database zz.z. The vendor wants to offer a service, not the latest technology. Only if the service gets added value from the newest technology might they consider upgrading.

And you should tell your dad to run his old software in VirtualBox :-).

Sabresiberian - Wednesday, November 16, 2011 - link

Ah, I hadn't thought of it in terms of services, which is obvious now that you say it. Thanks for educating me!

;)

IlllI - Tuesday, November 15, 2011 - link

amd was shooting to capture 25% of the market? (this was like when the first amd64 chips came out)