OCZ Z-Drive R4 CM88 (1.6TB PCIe SSD) Review

by Anand Lal Shimpi on September 27, 2011 2:02 PM EST

Posted in: Storage, SSDs, OCZ, Z-Drive R4, PCIe SSD

Random Read/Write Speed

The four corners of SSD performance are random read, random write, sequential read and sequential write speed. Random accesses are generally small, while sequential accesses tend to be larger, which is why we run these four Iometer tests in all of our reviews.

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data for each write and fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
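To make the test parameters concrete, here is a minimal sketch of the access pattern and the throughput calculation described above. It is not Iometer's implementation: the function names are hypothetical, the offsets are assumed to be aligned to the 4KB transfer size, and MB is assumed to mean 10^6 bytes.

```python
import random

BLOCK_SIZE = 4 * 1024        # 4KB transfers, as in the test description
SPAN_BYTES = 8 * 1024**3     # 8GB region of the drive
TEST_SECONDS = 3 * 60        # 3 minute run


def random_offsets(count, seed=0):
    """Return `count` block-aligned byte offsets chosen uniformly in the span.

    Alignment to BLOCK_SIZE is an assumption made for this sketch.
    """
    rng = random.Random(seed)
    blocks_in_span = SPAN_BYTES // BLOCK_SIZE
    return [rng.randrange(blocks_in_span) * BLOCK_SIZE for _ in range(count)]


def average_mb_per_s(completed_ios, seconds=TEST_SECONDS):
    """Average throughput over the whole run, assuming 1 MB = 10**6 bytes."""
    return completed_ios * BLOCK_SIZE / seconds / 1e6


if __name__ == "__main__":
    print(random_offsets(5))
    # A hypothetical run that completed 10,000 IOs per second for 3 minutes.
    print(average_mb_per_s(10_000 * TEST_SECONDS))
```

Because the result is averaged over the full three minutes, short bursts at the start of a run do not inflate the reported number.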

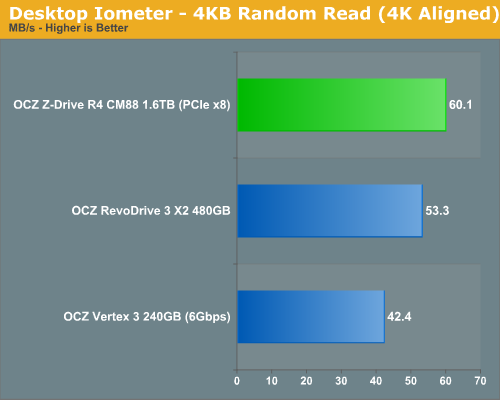

As we saw in our RevoDrive 3 X2 review, low queue depth random read performance doesn't really show much of an advantage on these multi-controller PCIe RAID SSDs. The Z-Drive R4 comes in a little faster than the RevoDrive 3 X2 but not by much at all. Even a single Vertex 3 does just fine here.

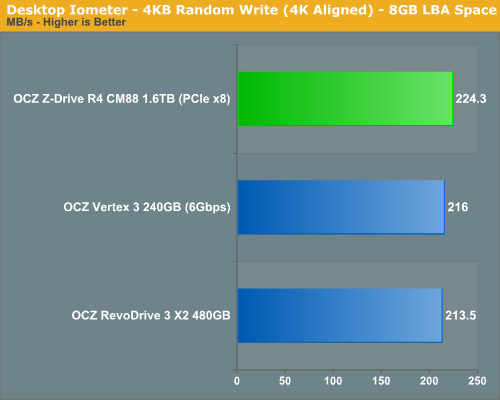

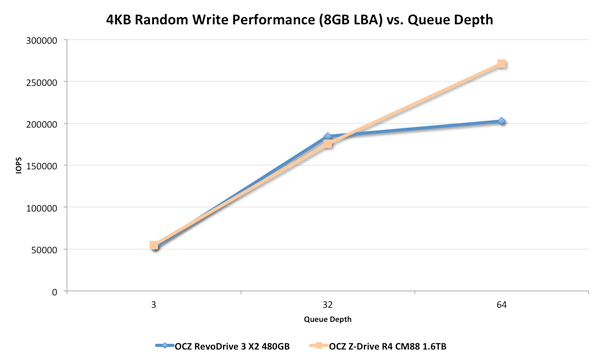

Random write performance tells a similar story: at such low queue depths, most of the controllers aren't doing any work at all. Let's see what happens when we start ramping up queue depth, however:

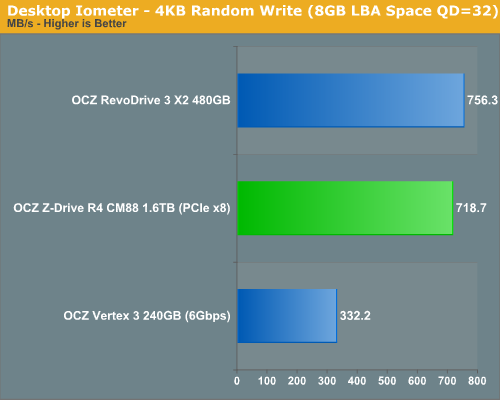

Surprisingly enough, even at a queue depth of 32 the Z-Drive R4 is no faster than the RevoDrive 3 X2. In fact, it's a bit slower (presumably due to the extra overhead of having to split the workload between 8 controllers vs just 4). In our RevoDrive review we ran a third random write test with two QD=32 threads in parallel; it's here that we can start to see a difference between these drives:

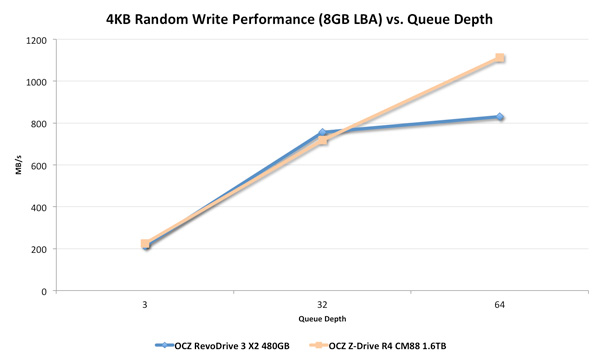
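One way to see why queue depth matters so much here is to count how many outstanding IOs each controller could possibly be given. The sketch below is a back-of-the-envelope model, not a description of how OCZ's RAID logic actually distributes work: it assumes each IO is serviced by exactly one controller and that IOs are spread evenly. The function names are hypothetical; the controller counts (4 and 8) and queue depths (3, 32, and 2 x 32) come from the text above.

```python
def busy_controllers(outstanding_ios, controllers):
    """Upper bound on controllers that can be working at the same time.

    Assumes one IO occupies exactly one controller (a simplification).
    """
    return min(outstanding_ios, controllers)


def ios_per_controller(outstanding_ios, controllers):
    """Average outstanding IOs per controller under an even spread."""
    return outstanding_ios / controllers


if __name__ == "__main__":
    drives = {"RevoDrive 3 X2": 4, "Z-Drive R4 CM88": 8}
    queue_depths = {"QD=3": 3, "QD=32": 32, "2 x QD=32": 64}

    for drive, controllers in drives.items():
        for label, qd in queue_depths.items():
            busy = busy_controllers(qd, controllers)
            per_ctrl = ios_per_controller(qd, controllers)
            print(f"{drive:16s} {label:10s} "
                  f"busy<= {busy}/{controllers}, avg {per_ctrl:.2f} IOs each")
```

Under this simplified model, three outstanding IOs can keep at most three of the Z-Drive's eight controllers busy, and only the heaviest test leaves each of the eight controllers with a queue as deep as the RevoDrive's four controllers see at QD=32.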

It's only at ultra-high queue depths that the Z-Drive can begin to distance itself from the RevoDrive 3 X2. It looks like we may need some really stressful tests to tax this thing. The chart below represents the same data as above but in IOPS instead of MB/s:

271K IOPS...not bad.
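For readers who want to move between the two units, the conversion is simple arithmetic. This is a minimal sketch, assuming 4KB transfers (as in the random tests above) and decimal megabytes (10^6 bytes). Iometer's own definition of MB may differ, so treat the result as approximate. The function names are illustrative.

```python
BLOCK_SIZE = 4 * 1024  # bytes per IO; assumes the 4KB random test


def iops_to_mb_per_s(iops, block_size=BLOCK_SIZE):
    """Convert IOs per second to MB/s, assuming 1 MB = 10**6 bytes."""
    return iops * block_size / 1e6


def mb_per_s_to_iops(mb_per_s, block_size=BLOCK_SIZE):
    """Inverse conversion, under the same assumptions."""
    return mb_per_s * 1e6 / block_size


if __name__ == "__main__":
    print(f"{iops_to_mb_per_s(271_000):.1f} MB/s")
```

With these assumptions, 271K IOPS of 4KB transfers works out to roughly 1,110 MB/s.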

57 Comments

jdietz - Tuesday, September 27, 2011

I looked up the prices for these on Google Shopping - $7 / GB.

These offer extreme performance, but probably only an enterprise server can ever benefit from this much performance. Enthusiast users of single-user machines should probably stick with RevoDrive X2 for around $2 / GB.

NCM - Tuesday, September 27, 2011

Anand writes: "During periods of extremely high queuing the Z-Drive R4 is a few orders of magnitude faster than a single drive."

Umm, a bit hyperbolic! With "a few" meaning three or more, the R4 would need to be at least 1000 times faster. That's nowhere near the case.

JarredWalton - Tuesday, September 27, 2011

Correct. I've edited the text slightly, though even a single order of magnitude is huge, and we're looking at over 30x faster with the R4 CM88 (and over two orders of magnitude faster on the service times for the weekly stats update).

Casper42 - Tuesday, September 27, 2011

Where do you plan on testing it? (EU vs US)

Have you tried asking HP for an "IO Accelerator"? (It's a Fusion card)

I worked with a customer a few weeks ago near me and they were testing 10 x 1.28TB Fusion IO cards in 2 different DB Server upgrade projects. 8 in a DL980 for one project and 2 in a DL580g7 for a separate project.

Movieman420 - Tuesday, September 27, 2011

I see all these posts taking all kinds of punishment. Please try and remember that ANY company that uses SandForce has the SAME issues, but since OCZ is the largest they catch all the flak. If anything, SF needs to beef up validation testing first and foremost.

josephjpeters - Wednesday, September 28, 2011

Like I said before, it's really more of a motherboard issue with the SATA ports than it is a SF/OCZ issue. They designed to spec...

Yabbadooo - Tuesday, September 27, 2011

I note that on the Windows Live Team blog they write that they are moving to flash based blob storage for their file systems.

Maybe they will use a few of these? That would definitely be a big vote of confidence, and the testimonials from that would be influential.

Guspaz - Tuesday, September 27, 2011

I have to wonder at the utility of these drives. They're not really PCIe drives, they're four or eight RAID-0 SAS drives and a SAS controller on a single PCB. They're still going to be bound by the limitations of RAID-0 and SAS. There are proper PCIe SSDs on the market (Fusion-io makes some), but considering the price-per-gig, these Z-Drives seem to offer little benefit other than saving space.

Why should I spend $11,200 on a 1600GB Z-Drive when I can spend about the same on eight OCZ Talos SAS drives and a SAS RAID controller, and get 3840GB of capacity? Or spend half as much on eight OCZ Vertex 3 drives and a SATA RAID controller, and get 1920GB of capacity?

I'm just trying to see the value proposition here. Even with enterprise-grade SSDs (like the Talos) and RAID controllers, the Z-Drive seems to cost twice as much per gig as OCZ's own products.

lorribot - Tuesday, September 27, 2011

I'm with you on this.

What happens if a controller toasts itself? Where's your data then?

I would rather have smaller hot swap units sitting behind a raid controller.

It is a shame OCZ couldn't supply such a setup for you to compare performance, or perhaps they know it would be comparable.

Yes, it is a great bit of kit, but if I can't RAID it then it is of no more use to me than as a cache, and RAM is better at that and a lot cheaper; $11000 buys some big quantities of DDR3.

In the enterprise space security of data is king, speed is secondary. Losing data means a new job; with slow data you just get moaned at. That is why SANs are so well used. Having all your storage in one basket that could fail easily is a big no-no and has been for many years.

Guspaz - Tuesday, September 27, 2011

To be fair, you can RAID it in software if required. You could RAID a bunch of USB sticks if you really wanted to. There are more than a few enterprise-grade SAN solutions out there that ultimately rely on Linux's software RAID, after all.