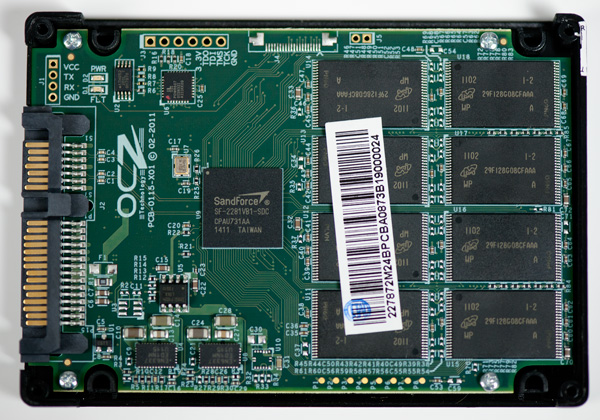

The SandForce Roundup: Corsair, Kingston, Patriot, OCZ, OWC & MemoRight SSDs Compared

by Anand Lal Shimpi on August 11, 2011 12:01 AM EST

It's a depressing time to be covering the consumer SSD market. Although performance is higher than it has ever been, we're still seeing far too many compatibility and reliability issues from all of the major players. Intel used to be our safe haven, but even the extra reliable Intel SSD 320 is plagued by a firmware bug that may crop up unexpectedly, limiting your drive's capacity to only 8MB. Then there are the infamous BSOD issues that affect SandForce SF-2281 drives like the OCZ Vertex 3 or the Corsair Force 3. Despite OCZ and SandForce believing they were on to the root cause of the problem several weeks ago, there are still reports of issues. I've even been able to duplicate the issue internally.

It's been three years since the introduction of the X25-M and SSD reliability is still an issue, but why?

For the consumer market it ultimately boils down to margins. If you're a regular SSD maker then you don't make the NAND and you don't make the controller.

A 120GB SF-2281 SSD uses 128GB of 25nm MLC NAND. The NAND market is volatile but a 64Gb 25nm NAND die will set you back somewhere from $10 - $20. If we assume the best case scenario that's $160 for the NAND alone. Add another $25 for the controller and you're up to $185 without the cost of the other components, the PCB, the chassis, packaging and vendor overhead. Let's figure another 15% for everything else needed for the drive bringing us up to $222. You can buy a 120GB SF-2281 drive in e-tail for $250, putting the gross profit on a single SF-2281 drive at $28 or 11%.

Even if we assume I'm off in my calculations and the profit margin is 20%, that's still not a lot to work with.
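The estimate above can be reproduced with a short sketch. The dollar figures are the ones quoted in the text; the function and parameter names are illustrative only. The overhead percentage is left as a parameter: on the $185 subtotal, 20% gives the $222 quoted above, while 15% gives about $213.

```python
def drive_margin(dies=16, die_cost=10.0, controller_cost=25.0,
                 overhead=0.20, retail_price=250.0):
    """Return (build_cost, gross_profit, margin) for one 120GB drive.

    dies: 128GB of NAND built from 64Gb (8GB) dies -> 16 dies.
    overhead: fractional adder for PCB, chassis, packaging, etc. (assumed knob).
    """
    silicon = dies * die_cost + controller_cost      # NAND plus controller
    build_cost = silicon * (1 + overhead)            # add everything else
    gross_profit = retail_price - build_cost
    return build_cost, gross_profit, gross_profit / retail_price


for overhead in (0.15, 0.20):
    cost, profit, margin = drive_margin(overhead=overhead)
    print(f"overhead {overhead:.0%}: cost ${cost:.2f}, "
          f"profit ${profit:.2f}, margin {margin:.1%}")
```

Either way the margin lands in the low teens, which is the point of the argument: there is little room left to fund validation.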

Things aren't that much easier for the bigger companies either. Intel has the luxury of (sometimes) making both the controller and the NAND. But the amount of NAND you need for a single 120GB drive is huge. Let's do the math.

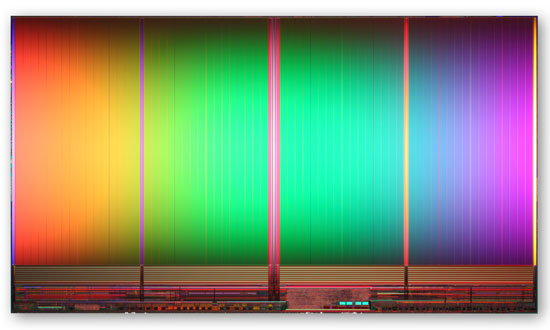

8GB IMFT 25nm MLC NAND die - 167mm²

The largest 25nm MLC NAND die you can get has an 8GB capacity. A single 8GB 25nm IMFT die measures 167mm². That's bigger than a dual-core Sandy Bridge die and 77% the size of a quad-core SNB. And that's just for 8GB.

A 120GB drive needs sixteen of these dies for a total area of 2672mm². Now we're at over 12 times the die area of a single quad-core Sandy Bridge CPU. And that's just for a single 120GB drive.
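As a quick check on those ratios, here is a minimal sketch. The 167mm² die size and the count of sixteen dies come from the text; the 216mm² figure for a quad-core Sandy Bridge die is an assumption not stated in the article (it is the commonly cited size and is consistent with the 77% ratio above).

```python
NAND_DIE_MM2 = 167          # 8GB IMFT 25nm MLC die, from the text
DIES_PER_120GB_DRIVE = 16   # 128GB of raw NAND / 8GB per die
QUAD_SNB_MM2 = 216          # assumed quad-core Sandy Bridge die area

nand_area = NAND_DIE_MM2 * DIES_PER_120GB_DRIVE
die_vs_cpu = NAND_DIE_MM2 / QUAD_SNB_MM2
drive_vs_cpu = nand_area / QUAD_SNB_MM2

print(f"NAND area per drive: {nand_area} mm^2")
print(f"one NAND die vs quad-core SNB: {die_vs_cpu:.0%}")
print(f"one drive's NAND vs quad-core SNB: {drive_vs_cpu:.1f}x")
```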

This 25nm NAND is built on 300mm wafers just like modern microprocessors, giving us 70,685mm² of area per wafer. Assuming you can use every single square mm of the wafer (which you can't), that works out to 26 120GB SSDs per 300mm wafer. Wafer costs are somewhere in the four-digit range - let's assume $3000. That's $115 worth of NAND for a drive that will sell for $230, and we're not including controller costs, the other components on the PCB, the PCB itself, the drive enclosure, shipping and profit margins. Intel, as an example, likes to maintain gross margins north of 60%. For its consumer SSD business to not be a drain on the bottom line, sacrifices have to be made. While Intel's SSD validation is believed to be the best in the industry, it's likely not as good as it could be as a result of pure economics. So mistakes are made and bugs slip through.
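The wafer math can be sketched the same way. This is an idealized upper bound that, like the text, pretends every square millimeter of the wafer is usable and ignores yield. The $3000 wafer cost is the article's assumed figure. Treating the $230 selling price as Intel's revenue is a further simplification made here for illustration.

```python
import math

WAFER_DIAMETER_MM = 300
DRIVE_NAND_AREA_MM2 = 16 * 167   # sixteen 167mm^2 dies per 120GB drive
WAFER_COST_USD = 3000            # assumed in the text
DRIVE_PRICE_USD = 230
TARGET_GROSS_MARGIN = 0.60       # "north of 60%"

wafer_area = math.pi * (WAFER_DIAMETER_MM / 2) ** 2
drives_per_wafer = int(wafer_area // DRIVE_NAND_AREA_MM2)
nand_cost_per_drive = WAFER_COST_USD / drives_per_wafer

# Highest total cost per drive that still leaves a 60% gross margin.
cost_ceiling = DRIVE_PRICE_USD * (1 - TARGET_GROSS_MARGIN)

print(f"wafer area: {wafer_area:.0f} mm^2")
print(f"drives per wafer (ideal): {drives_per_wafer}")
print(f"NAND cost per drive: ${nand_cost_per_drive:.0f}")
print(f"cost ceiling at 60% margin: ${cost_ceiling:.0f}")
```

Under these assumptions the NAND alone costs more than the whole drive could cost at a 60% margin, before the controller, PCB or enclosure are counted.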

I hate to say it but it's just not that attractive to be in the consumer SSD business. When these drives were selling for $600+ things were different, but it's not too surprising to see that we're still having issues today. What makes it even worse is that these issues are usually caught by end users. Intel's microprocessor division would never stand for the sort of track record its consumer SSD group has delivered in terms of show-stopping bugs in the field, and Intel has one of the best track records in the industry!

It's not all about money though. Experience plays a role here as well. If you look at the performance leaders in the SSD space, none of them had any prior experience in the HDD market. Three years ago I would've predicted that Intel, Seagate and Western Digital would be duking it out for control of the SSD market. That obviously didn't happen and as a result you have a lot of players that are still fairly new to this game. It wasn't too long ago that we were hearing about premature HDD failures due to firmware problems, so I suspect it'll be a few more years before the current players get to where they need to be. Samsung may be one to watch here going forward as it has done very well in the OEM space. Apple had no issues adopting Samsung controllers, while it won't go anywhere near Marvell or SandForce at this point.

90 Comments

rigged - Sunday, August 14, 2011 - link

are you using the SF-2281 or SF-2282 based OWC drive?

only the new 240GB and 480GB drives from OWC use this controller.

http://eshop.macsales.com/item/Other+World+Computi...

Under Specs

Controller: SandForce 2282 Series

Justin Case - Sunday, August 14, 2011 - link

It's not just the BSOD. Even systems that don't crash have frequent freezes for anything up to 90 seconds. That's enough to make network transfers abort, connections to game servers drop, etc.

I've tried three Corsair drives on multiple platforms and I know people who have used those and also OCZ. Not a single drive was 100% stable on any platform. They tended to crash more on Intel chipsets and freeze more on AMD chipsets (sometimes recoverable, sometimes a hard lock), but NOT A SINGLE ONE was problem-free for more than 2 or 3 days in a row (often you'll get two or three freezes within the same hour).

The first job of a drive is to reliably hold your data. People use SSDs to install their OS and applications. It takes days to reinstall and recover from errors. It's irrelevant if some drive gives you 500 happybytes in some benchmark when the same drive keeps losing your thesis or getting you killed in Team Fortress. I have systems with Raptors that have been running for 5 years without a single error.

If you have any problems (which you will), don't let them string you along with nonsense about your cables or obscure BIOS options or promises about future fixes. Return the drive and demand a refund. Both OCZ and Corsair are still selling drives that they KNOW to be defective, and removing any reference to those problems from the support section of their sites (you'll still find thousands of complaints in their user forums, though). Demanding refunds (or starting a class action suit) seems to be the only language they understand.

SjarbaDarba - Sunday, August 14, 2011 - link

I experience some hard locks too, mainly during gaming only since upgrading to a 120GB Vertex 3.

System is an X58-UD7 + i7-960, 2 GTX570 OC Sli, Seasonic X-850 80+ GOLD, 6GB Corsair DDR3 1600C8.

Was originally using 2 x 300GB Velociraptors in RAID 0 with WD1002FAEX and Seagate 2TB XT, stable for 1-2 months before upgrading to the V3. Storage configuration since upgrade is 120GB Vertex3, WD1002FAEX and Raptors RAID 0.

System perfectly stable under 600GB RAID 0 OS with Crysis 2, CS:S, LoL, L4D, L4D2 and Borderlands all playing stable at all loads with hardware monitoring active, no problems found with any hardware or software at this point, system performed flawlessly for all tasks.

Dropped the V3 in with the typical "It's fast - but it could be faster" attitude we all know and love and instantly started experiencing ... whackness. System works flawlessly 99% of the time, however, a few times a week I will lock up and need to power cycle - I have the SSD running in AHCI with TRIM etc. enabled, page file, defrag etc. turned off and pretty much every detail of the drive perfectly specced for optimum performance.

If I lock up and have to cycle, upon restart the SATA controller the SSD is attached to will hang at BIOS and not detect the V3 - however - cycling again at this point allows the SSD to be detected within ~1 second and Windows boots normally.

At this point, however (and using nVidia 275.33 drivers) returning to desktop boots me in 800x600 resolution with no nVidia control panel and a further power cycle is required again to reset the resolution.

Yet to test this problem with nVidia 280.16 drivers but haven't had stability problems since then.

Sorry for any tl;dr, just thought Anand might like to hear about a strange error I've encountered in the SF controller.

P.S: System is 3DMark, Furmark and Prime stable, it just has some whack locks randomly and the SSD disappears completely for a power cycle.

readyrover - Monday, August 15, 2011 - link

I was going to dive into my first SSD with a Bulldozer build on the upcoming horizon...until this all shakes out...absolutely no way. My usage is for processing large music files on a Digital Audio Workstation with multiple time based effects and multi-tracks of instruments. I have been experiencing some latency bottlenecks and thought "wow" ssd is an instant fix!

If they have ironed out the problems and the reviews' negative percentages drop back below an astounding 20% of my recent research..then perhaps a year from now...Bulldozers should be less expensive then as well..

Just my humble opinion, but I can't roll the dice on a hit and miss crash...."Please Mr. $120 hour guitarist...would you wait an hour for me to fix the computer and replay that absolutely inspired, one of a kind improvisation...AGAIN!"

Brrrr...shiver...run away fast!

Gothmoth - Friday, August 19, 2011 - link

i have a few asus z68- v pro boards (three to be exact).

all of them have a vertex3 120 GB SSD as C drive.

all have 16 GB g.skill ram and run win 7 64 bit sp1.

i had not a single issue with the vertex 3 since i bought them (13. april 2011).

i have still the first firmware running.

thank god i have avoided updating to firmware v2.06 or v2.09.

i have put the vertex3 240 GB from a friend in my system with firmware 2.06.

we could reproduce the BSOD after 1 hour.

he has constant crashes on his gigabyte motherboard based system.

we put one of my vertex3 120GB SSD in his system and it was running flawlessly for 2 days.

twindragon6 - Friday, August 26, 2011 - link

I know the market sucks! But I would rather pay more for something that actually works than pay less for something that doesn't and be stuck with an expensive paperweight!

alpha754293 - Friday, September 2, 2011 - link

Anand:

Does that BSOD bug only affect drives that are boot drives? i.e. What would happen if the test drives were slave/data/non-OS-containing drives? Does it still do the same BSOD thing?

Keith2468 - Monday, December 12, 2011 - link

Digital people tend to think of digital issues when looking for the causes of computer hardware and software failure. But sometimes the failures are not digital in origin.

The power supply may well be critical to SSD failures.

What causes SSD failures? Largely power disturbances to the SSD.

Why are SSDs with smaller IOPS and smaller caches less likely to fail?

Less data to move from volatile RAM cache to Flash when power disturbances occur.

Why should you not use a notebook SSD in a desktop?

A notebook SSD designer will typically assume that the notebook's battery means he doesn't have to design for power disturbances.

"The design of an SSD's power down management system is a fundamental characteristic of the SSD which can determine its suitability and compatibility with user operational environments. Systems integrators must take this into account when qualifying SSDs in new applications - because subtle differences in OS timings, rack power loading and rack logic affect some types of SSDs more than others. Users should be aware that power management inside the SSD (a factor which doesn't get much space in most product datasheets) is as important to reliable operation as management of endurance, IOPS, cost and other headline parameters."

http://www.storagesearch.com/ssd-power-going-down....

jfraser7 - Friday, November 14, 2014 - link

This article is very useful because Mac OS X 10.10 Yosemite dropped all support for third-party Solid State Drives, except for those which use SandForce controllers.

jfraser7 - Friday, November 14, 2014 - link

Also, all three of Kingston's recent Solid State Drive lines (V300, KC300 & HyperX) use SandForce controllers.