The AMD Llano Notebook Review: Competing in the Mobile Market

by Jarred Walton & Anand Lal Shimpi on June 14, 2011 12:01 AM EST

What Took So Long?

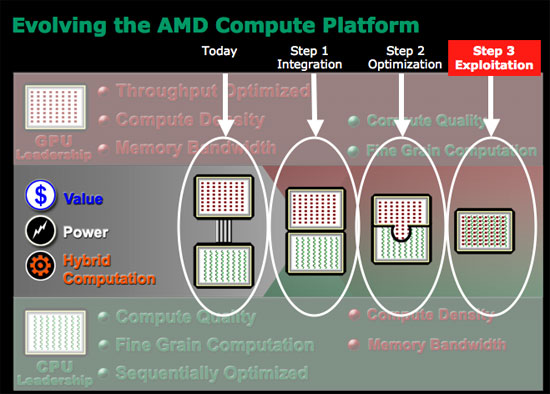

AMD announced the acquisition of ATI in 2006. By 2007 AMD had a plan for CPU/GPU integration, and it looked like this. The red blocks in the diagram below were GPUs; the green blocks were CPUs. Stage 1 was supposed to be dumb integration of the two (putting a CPU and GPU on the same die). The original plan called for AMD to release the first Fusion APU sometime in 2008 or 2009. Of course, that didn't happen.

Brazos, AMD's very first Fusion platform, came out in Q4 of last year. At best AMD was two years behind schedule, at worst three. So what happened?

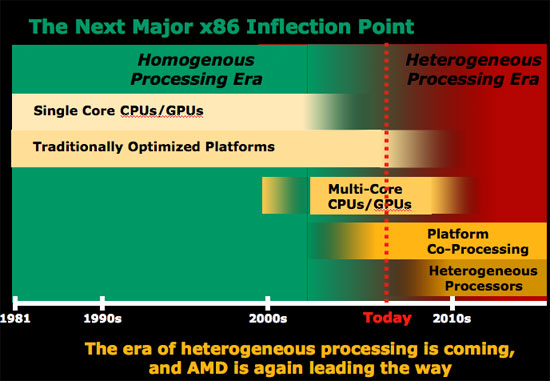

AMD and ATI both knew that designing CPUs was incredibly different from designing GPUs. CPUs, at least for AMD back then, were built on a five-year architecture cadence. Designers used tons of custom logic and hand layout in order to optimize for clock speed. In a general-purpose microprocessor, instruction latency is everything, so reducing latency wherever possible was the top priority.

GPUs, on the other hand, come from a very different world. Drastically new architectures ship every two years, with major introductions made yearly. Very little custom logic is employed in GPU design by comparison; the architectures are highly synthesizable. Clock speed is important, but it's not the be-all and end-all. GPUs get their performance from being massively parallel, and you can always hide latency with a wide enough machine (and a parallel workload to take advantage of it).

The manufacturing strategy was also very different. Remember that at the time of the ATI acquisition, ATI was a fabless semiconductor company while AMD still owned its own fabs. ATI was used to building chips at TSMC, while AMD was fabbing everything in Dresden at what would eventually become GlobalFoundries. While the folks at GlobalFoundries have done their best to make their libraries portable for existing TSMC customers, it's not as simple as showing up with a chip design and having it work on the first go.

As much sense as AMD made when it talked about the acquisition, the two companies that came together in 2006 couldn't have been more different. The past five years have really been spent trying to make the two work together, both as organizations and as architectures.

The result holds a lot of potential and hope for the new, unified AMD. The CPU folks learn from the GPU folks and vice versa. Let's start with APU refresh cycles. AMD CPU architectures were updated once every four or five years (K7 1999, K8 2003, K10 2007), while ATI GPUs received substantial updates yearly. The GPU folks won this battle, as all AMD APUs are now built on a yearly cadence.

Chip design is also now more GPU-inspired. With a yearly design cadence there's a greater focus on building easily synthesizable chips. Time to design and manufacture goes down, but so do maximum clock speeds. Given how important clock speed can be to the x86 side of the business, AMD will take more of a hybrid approach, where some elements of APU designs are built the old GPU way while others use custom logic and more CPU-like layout flows.

The past few years have been very difficult for AMD, but we're at the beginning of what may be a brand new company. Without the burden of expensive fabs and with the combined knowledge of two great chip companies, the new AMD has a chance, but it also has a very long road ahead. Brazos was the first hint of success along that road, and today we have the second. Her name is Llano.

177 Comments


Brian23 - Tuesday, June 14, 2011

Jarred/Anand,

Based on the benchmarks you've posted, it's not very clear to me how this CPU performs in "real world" CPU usage. Perhaps you have it covered with one of your synthetic benchmarks, but from the names alone it's not clear which ones stress the integer vs. floating point portions of the processor.

IMO, a test I'd REALLY like to see is how this APU compares in a compile benchmark against a C2D 8400 and an i3 380M. Those are both common CPUs that can be used to compare against other benchmarks.

Could you compile something like Chrome or Firefox on this system and a couple others and update the review?

Thanks! I appreciate the work you guys do!

ET - Tuesday, June 14, 2011

PCMark tests common applications. You can read more details here: http://www.pcmark.com/wp-content/uploads/2011/05/P...

While I would find a compilation benchmark interesting, are you suggesting that it would be more "real world"? How many people would do that compared to browsing, video, or gaming? Probably not a lot.

Brian23 - Tuesday, June 14, 2011

Thanks for the link. I was looking for something that described what the synthetic benchmarks mean.

As for "real world," it really depends from one user to the next. What I was really trying to say is that no one buys a PC just to run benchmarks. Obviously the benchmark companies try to make their benchmarks simulate real-world scenarios, but there's no way they can truly simulate a given person's exact workload, because it's going to be different from someone else's workload.

If we're going down the synthetic benchmark path, what I'd like to see is a set of benchmarks that each stress one aspect of a system (e.g., the integer unit or the FPU). That way you can compare processor differences directly without worrying about how other aspects of the system affect what you're looking at. In the case of this review, I was looking at the Computation benchmark listed. After reading the whitepaper, I found out that benchmark stresses both the CPU and the GPU, so it's not telling me just about the CPU, which is the part I'm interested in.

Switching gears to actual real-world tests, seeing a compile will tell me what I'm interested in: CPU performance. Like you said, most people aren't going to be doing this, but it's interesting because it will truly test just the CPU.

JarredWalton - Tuesday, June 14, 2011

Hi Brian,

I haven't looked into compiling code in a while, but can you give me a quick link to a recommended (free) Windows compiler for Chrome? I can then run that on all the laptops and add it to my benchmark list. I would venture to say that an SSD will prove more important than the CPU for compiling, though.

Brian23 - Tuesday, June 14, 2011

Jarred,

This link is a user's quick how-to for compiling Chrome:

http://cotsog.wordpress.com/2009/11/08/how-to-comp...

These are the official Chrome build instructions:

http://dev.chromium.org/developers/how-tos/build-i...

Both use Visual Studio Express which is free.

I really appreciate this extra work. :-)

krumme - Tuesday, June 14, 2011

The first links at the top are sponsored: 3 times exactly the same i7 + 460! ROFL

Then 1 i7 with a 540

Damn, looks funny, but at least it's not 1024x768 like the preview, but the most relevant resolution for the market. Thank you for that.

Shadowmaster625 - Tuesday, June 14, 2011

Man, what is it with this dumb yuppie nonsense? No, I don't want to save $200 because I don't actually work for my money. Hell, if you're even reading this site, then it is highly likely that the two places you want more performance from your notebook are games and internet battery life. All this preening about Intel's crippled CPU being 50% faster don't mean jack because... well, it's a crippled CPU. It can't play games, yet it has a stupid IGP. Why get all yuppity about such an obvious design failure, so much so that you'd be willing to sneeze at a $200 savings like it means nothing? It actually means something to people who work for a living. Most people just don't need the extra 50% CPU speed from a notebook. But having a game that runs actually does mean something tangible.

madseven7 - Tuesday, June 14, 2011

I'm not sure why people think this is such a crappy CPU. Am I missing something? Wasn't the Llano APU that was tested the lowest of the A8 series, with DDR3-1333? Doesn't it give up 500MHz-800MHz to the SB notebooks that were tested? Wouldn't the A8-3530MX perform much better? I for one would love to see a review of the A8-3530MX.

ET - Tuesday, June 14, 2011

Good comment. This is the highest-end 35W CPU, but not the highest-end Llano. So it gets commended for battery life but not performance. It will be interesting to see the A8-3530MX results for performance and battery life. It would still lose to Sandy Bridge quite soundly on many tests, I'm sure, but it's still a significant step up in clock speed over the A8-3500M.

Jasker - Tuesday, June 14, 2011

One thing that is really interesting that isn't brought up here is the amount of power used during gaming. Not only do you get much better gaming performance than Intel, but you also get much lower power draw. Double whammy.