Z68 SSD Caching with Corsair's F40 SandForce SSD

by Anand Lal Shimpi on May 13, 2011 3:06 AM EST

Random/Sequential Read & Write Performance

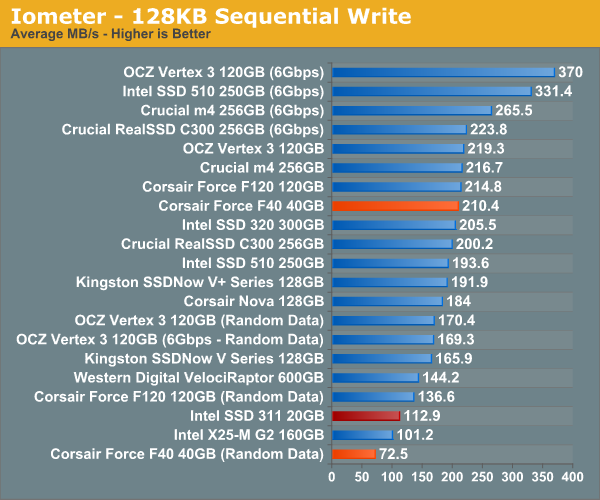

To start with, let's look at how the Corsair Force F40 and Intel SSD 311 stack up. Remember that the F40 is based on SandForce's SF-1200 controller, meaning it gains its high performance by using real-time compression and deduplication techniques to reduce what it actually writes to NAND. Data that can easily be compressed is written as quickly as possible, while data that isn't as compressible is written much more slowly. As a cache the drive is likely to encounter data from both camps, although Intel's SRT driver does filter out sequential file operations, so large, incompressible movies and images should be kept out of the cache altogether.
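To get a feel for the two camps of data described above, here is a minimal Python sketch. It is only an illustration under stated assumptions: `zlib` stands in for SandForce's compression, which is proprietary and not modeled here, and the buffer size and the `compression_ratio` helper are hypothetical choices made for this example. It shows how differently repetitive and fully random data compress, not how fast either is written to NAND.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data)) / len(data)


BLOCK = 1 << 20  # roughly 1 MiB per test buffer (arbitrary size for illustration)

# Highly repetitive data: the easy case for a compressing controller.
compressible = b"AnandTech " * (BLOCK // 10)

# Fully random data: the worst case, comparable to already-compressed media.
incompressible = os.urandom(BLOCK)

ratio_easy = compression_ratio(compressible)
ratio_hard = compression_ratio(incompressible)

print(f"repetitive data ratio: {ratio_easy:.4f}")
print(f"random data ratio:     {ratio_hard:.4f}")
```

The repetitive buffer shrinks to a small fraction of its size, while the random buffer does not shrink at all. A controller that writes less to NAND when data compresses well would see a similar split between its best-case and worst-case numbers.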

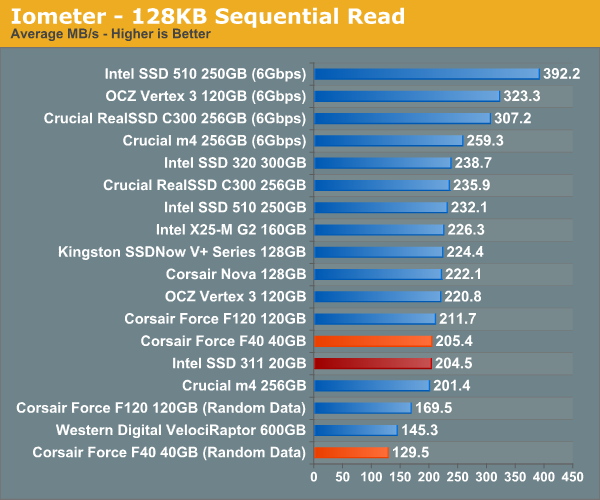

Peak sequential write performance is nearly double that of Intel's SSD 311. Toss incompressible (fully random) data at the drive, however, and it's noticeably slower. I'd say in practice the F40 is probably about the speed of the 311, perhaps a bit quicker in sequential writes.

For only having five NAND devices on board, Intel's SSD 311 boasts extremely high sequential read performance. At best the F40 equals it, but in reality the sequential read performance is likely a bit lower.

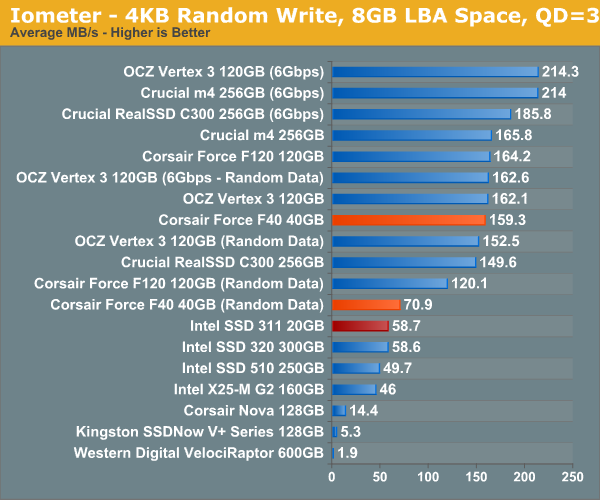

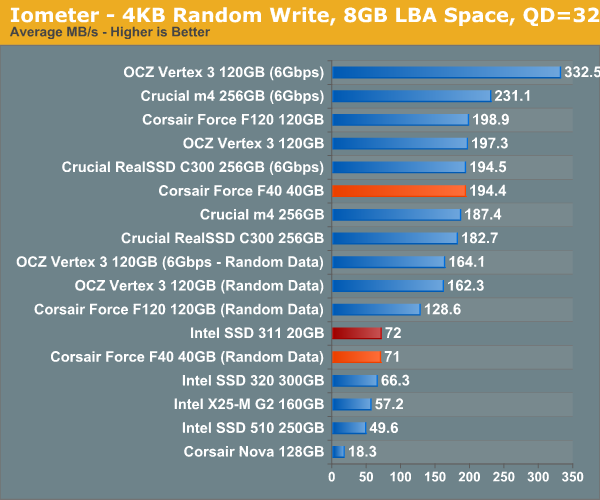

Random write performance is higher on the F40 across the board, even with incompressible data. Random read/write performance is incredibly important for a cache, especially if most sequential data is kept off the cache to begin with. Things could be quite good for the F40 drive here.

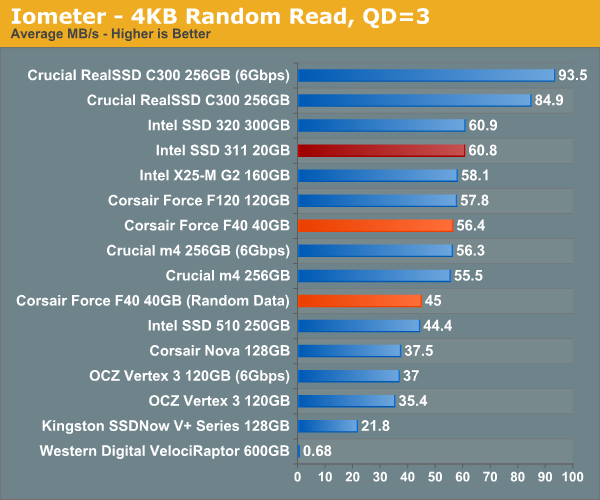

Random read performance unfortunately doesn't look as good for the F40. Again, Intel's SSD 311 performs a lot like an X25-M G2, which happens to do very well in our random read test. At best the F40 is an equal performer, but at worst it's about 75% of the performance of the SSD 311.

Without a clear victory here, we'll likely see mixed results in our storage benchmark suite.

81 Comments


iwod - Friday, May 13, 2011 - link

If 20GB is really what most of us do use. What happens when you have 8GB x 4 RAM? With Windows 7 Superfetch, most of your data will be fetched inside memory.

And with $110, would I be better off with a RAM drive, and fake that as a drive for use with Intel SRT?

Araemo - Friday, May 13, 2011 - link

Since this SSD caching is software-driven.. it doesn't help any with boot performance (Until the service is running anyways), right?

Stahn Aileron - Friday, May 13, 2011 - link

I'm pretty sure it's built into the chipset's RAID BIOS/firmware, not used at the OS-level. The OS-level stuff is probably more for management and monitoring than anything else.

In fact, I think you have to set it up in the BIOS/firmware, not within the OS. I'd have to look at the Z68 review again to be sure about the wording though.

cbass64 - Friday, May 13, 2011 - link

In the last SRT review (http://www.anandtech.com/show/4329/intel-z68-chips... a 3TB drive took 55+ seconds to boot and a 3TB drive with SRT booted in 33 seconds...

micksh - Friday, May 13, 2011 - link

Can you do TRIM on a cache SSD?

I expect that SSD performance would degrade over time regardless of whether it's a cache or standalone drive.

It looks like this aspect was missed in the original Z68 review as well.

Stahn Aileron - Friday, May 13, 2011 - link

Out of curiosity, do Intel's SRT and RAID drivers support TRIM-command passthrough? I believe it was mentioned that SRT only works with the chipset in RAID mode. Last I recall, no RAID drivers support TRIM yet (I might be out of the loop.)

Also, in a somewhat related matter: Have you considered looking at SRT's effect on an SSD's long-term read/write capability? I don't think it'll matter TOO much if someone is using a dedicated SSD for the cache. I'm asking this in the scenario that a user has a large capacity SSD (say 160GB) and allocates part of it (say 20GB - 40GB) as a cache while the rest is used for something else. So, for example:

120GB = OS install and frequently used programs/applications (Office, Adobe, etc.)

40GB = Cache for larger storage volume meant for infrequently used or very large installation size apps/programs (like a gaming drive/partition/volume.)

I think the above example may be what many power users/enthusiasts will want to do. Would this have an effect on an SSD's long-term read/write performance? Especially in relation to the support of TRIM with Intel's SRT/RAID drivers. Or will the garbage collection algorithm be the only thing available to maintain its performance in the long run? Do users have to start looking at the garbage collection performance of an SSD as well when choosing one to use as a cache? Or has Intel devised a RAID driver for the Z68 that supports TRIM?

Oh, and would my above usage scenario (splitting and sharing of the SSD) affect data eviction/retention for the cache in any noticeable way, if at all?

micksh - Friday, May 13, 2011 - link

RAID drivers support TRIM. Just one condition - the SSD has to be a single drive in order for TRIM to work. If the RAID is made of SSDs TRIM won't work, but you can have HDD RAID and a single SSD with TRIM on the same controller.

SRT is different from RAID so the question is still valid. And your scenario is very interesting too.

I think it's believed that SLC SSDs don't need TRIM. I don't even know if 311 supports it. Intel might not even bother with adding TRIM to SRT if 311 is its main usage target, I'm not sure.

But if you are going to use your own MLC drive it is important.

And yes, defragmentation from the next post is also an important question.

Stahn Aileron - Friday, May 13, 2011 - link

All flash NAND can use TRIM. Lack of it for SLC just has a lower overhead penalty on (re)write performance than it does for MLC. Though you did remind me that Intel's Gen 1 (50nm) SLC SSD, the X25-E, never got TRIM support, far as I recall.

I didn't realize RAID drivers allowed TRIM passthrough on single drives. I always thought SSDs had to be on AHCI-mode drivers to support TRIM properly, regardless of configuration. I wonder if/when they will fix it for RAID arrays, then?

Lastly, yeah, forgot about the fragmentation problem as well. I wonder if Intel's SRT caching algorithm is smart enough to ignore defrag activity?

Actually, I have been curious as to what Diskeeper's SSD-related feature actually does for an SSD. I own a copy of DK with HyperFAST and I've been wondering what it does for quite some time now. Otherwise, I've been using DK for a long time now (since '05, at least).

Anand, have you ever looked at DK's impact on SSD performance? Or at least found out its role and what it actually DOES on a system?

Henk Poley - Monday, May 16, 2011 - link

HyperFast does not much at all. At best it caches some writes, that can improve SSDs that lack in the 4k write department (old disks). I also read it helps with file consolidation, which can help an OS/SSD without TRIM (old disks). Never used space can be used for write leveling by the SSD. Real world benchmarks appear to find nothing changes or that the performance slightly deteriorates.

The product was built when 8GB PATA SSDs were pretty nifty.

Besides all that, if you can write less to an SSD, that is in general better. So don't move files around willy nilly (don't defrag).

Dribble - Friday, May 13, 2011 - link

So I have my big HD that obviously needs regularly defragged attached to my SSD.

How do I get the defragger to not wear out the SSD?