OCZ Vertex 3 (240GB) Review

by Anand Lal Shimpi on May 6, 2011 1:50 AM EST

Three months ago we previewed the first client focused SF-2200 SSD: OCZ's Vertex 3. The 240GB sample OCZ sent for the preview was four firmware revisions older than what ended up shipping to retail last month, but we hoped that the preview numbers were indicative of final performance.

The first drives off the line when OCZ went to production were 120GB capacity models. These drives have 128GiB of NAND on board and about 111.8GiB of user accessible space. The remaining 12.7% is used for redundancy in the event of NAND failure and as spare area for bad block allocation and block recycling.
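The spare area figure can be checked with a little arithmetic. The sketch below assumes the advertised 120GB is counted in decimal gigabytes (10^9 bytes) while the NAND is counted in binary GiB (2^30 bytes); the function name is made up for illustration.

```python
GIB = 2**30  # bytes in one binary gigabyte (GiB)
GB = 10**9   # bytes in one decimal gigabyte (GB); assumed unit of the advertised capacity


def spare_area(advertised_gb, raw_nand_gib):
    """Return (user capacity in GiB, fraction of raw NAND held back as spare)."""
    user_gib = advertised_gb * GB / GIB
    spare_fraction = 1 - user_gib / raw_nand_gib
    return user_gib, spare_fraction


user_gib, spare = spare_area(advertised_gb=120, raw_nand_gib=128)
print(f"user capacity: {user_gib:.1f} GiB, spare area: {spare:.1%}")
```

Under that assumption the 120GB drive works out to roughly 111.8GiB of user space and about 12.7% spare area.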

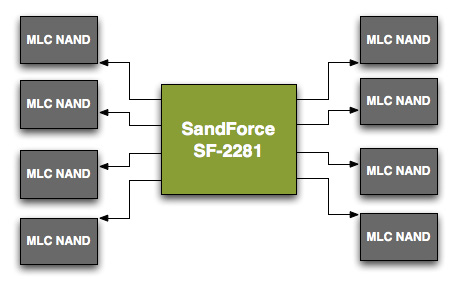

Unfortunately the 120GB models didn't perform as well as the 240GB sample we previewed. To understand why, we need to understand a bit about basic SSD architecture. SandForce's SF-2200 controller has 8 channels that it can access concurrently. It looks sort of like this:

Each arrowed line represents a single 8-bit channel. In reality, SF's NAND channels are routed from one side of the chip, so you'll actually see all NAND devices to the right of the controller on actual shipping hardware.

Even though there are 8 NAND channels on the controller, you can put multiple NAND devices on a single channel. Two NAND devices on the same channel can't be actively transferring data at the same time. Instead, one chip is accessed while another is either idle or busy with internal operations.

When you read from or write to NAND you don't access the pages directly. Instead you deal with an intermediate register that holds the data as it comes from or goes to a page in NAND. Reading/programming is a multi-step process that doesn't complete in a single cycle. Thus you can hand off a read request to one NAND device and then, while it's fetching the data from an internal page, go off and program a separate NAND device on the same channel.

Because of this parallelism that's akin to pipelining, with the right workload and a controller that's smart enough to interleave operations across NAND devices, an 8-channel drive with 16 NAND devices can outperform the same drive with 8 NAND devices. Note that the advantage can't be double since ultimately you can only transfer data to/from one device at a time, but there's room for a meaningful improvement. Confused?

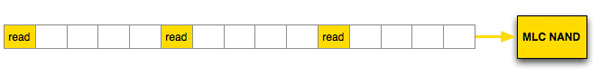

Let's look at a hypothetical SSD where a read operation takes 5 cycles. With a single die per channel, 8-byte wide data bus and no interleaving that gives us peak bandwidth of 8 bytes every 5 clocks. With a large workload, after 15 clock cycles at most we could get 24 bytes of data from this NAND device.

Hypothetical single channel SSD, 1 read can be issued every 5 clocks, data is received on the 5th clock

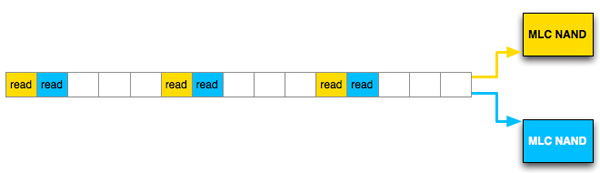

Let's take the same SSD, with the same latency but double the number of NAND devices per channel and enable interleaving. Assuming we have the same large workload, after 15 clock cycles we would've read 40 bytes, an increase of roughly 67%.

Hypothetical single channel SSD, 1 read can be issued every 5 clocks, data is received on the 5th clock, interleaved operation

This example is overly simplified and it makes a lot of assumptions, but it shows you how you can make better use of a single channel through interleaving requests across multiple NAND die.
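The two hypothetical cases can be reproduced with a few lines of Python. This is only a sketch of the simplified model above, not of how any real controller schedules NAND: the function names are invented here, and the one-clock stagger between the two devices is an assumption chosen so that their transfers never land on the same clock.

```python
def transfer_cycles(total_cycles, read_latency, start_offset):
    """Clocks on which one device delivers data, given when its first read is issued."""
    first = start_offset + read_latency
    return list(range(first, total_cycles + 1, read_latency))


def channel_bytes(total_cycles, read_latency, bus_bytes, start_offsets):
    """Bytes moved over one shared channel; each entry in start_offsets is one NAND device."""
    busy = []
    for offset in start_offsets:
        busy.extend(transfer_cycles(total_cycles, read_latency, offset))
    # Only one device may use the channel on any given clock.
    if len(busy) != len(set(busy)):
        raise ValueError("two devices would transfer on the same clock")
    return len(busy) * bus_bytes


# One device: data arrives on clocks 5, 10 and 15.
single = channel_bytes(15, 5, 8, start_offsets=[0])
# Assumption: the second device's first read is issued one clock after the first device's.
interleaved = channel_bytes(15, 5, 8, start_offsets=[0, 1])
gain = (interleaved - single) / single
print(single, interleaved, gain)
```

With these inputs the single device delivers 24 bytes in 15 clocks and the interleaved pair delivers 40, a gain of two thirds. The second device only completes two reads in the window, which is why the result falls short of double.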

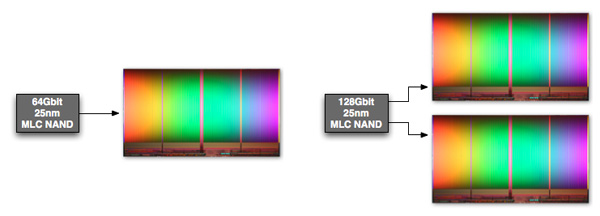

The same sort of parallelism applies within a single NAND device. The whole point of the move to 25nm was to increase NAND density, thus you can now get a 64Gbit NAND device with only a single 64Gbit die inside. If you need more than 64Gbit per device however you have to bundle multiple die in a single package. Just as we saw at the 34nm node, it's possible to offer configurations with 1, 2 and 4 die in a single NAND package. With multiple die in a package, it's possible to interleave read/program requests within the individual package as well. Again you don't get 2 or 4x performance improvements since only one die can be transferring data at a time, but interleaving requests across multiple die does help fill any bubbles in the pipeline resulting in higher overall throughput.

Intel's 128Gbit 25nm MLC NAND features two 64Gbit die in a single package

Now that we understand the basics of interleaving, let's look at the configurations of a couple of Vertex 3s.

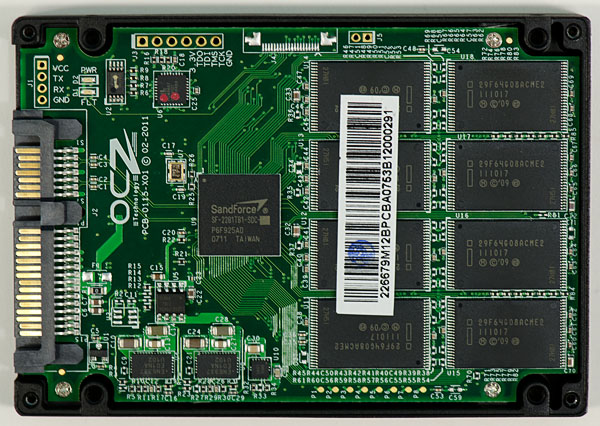

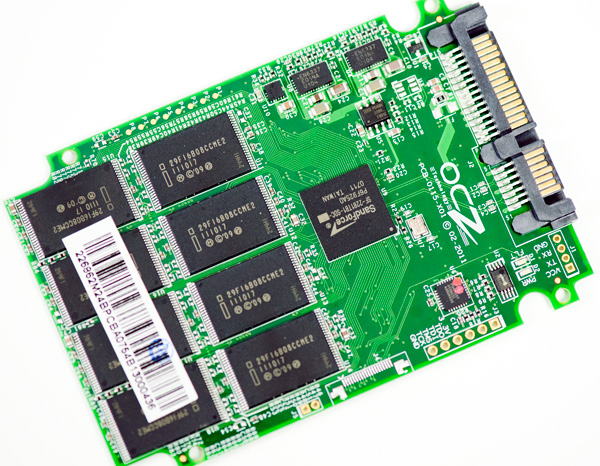

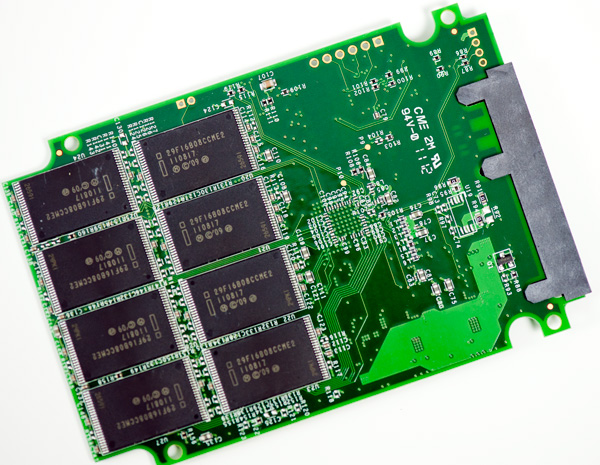

The 120GB Vertex 3 we reviewed a while back has sixteen NAND devices, eight on each side of the PCB:

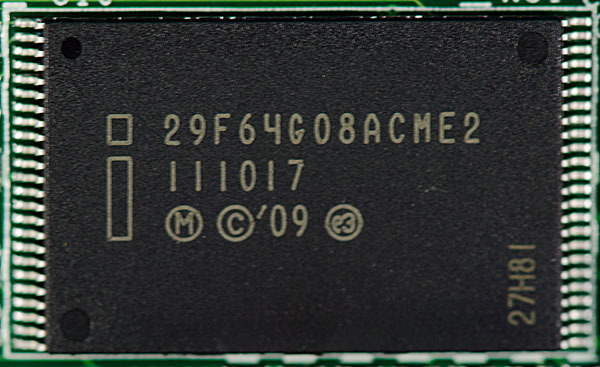

These are Intel 25nm NAND devices, and the part number tells us a little bit about them.

You can ignore the first three characters in the part number; they tell you that you're looking at Intel NAND. Characters 4 - 6 (if you sin and count at 1) indicate the density of the package; in this case 64G means 64Gbits or 8GB. The next two characters indicate the device bus width (8 bits). Now the ninth character is the important one: it tells you the number of die inside the package. These parts are marked A, which corresponds to one die per device. The second to last character is also important; here E stands for 25nm.

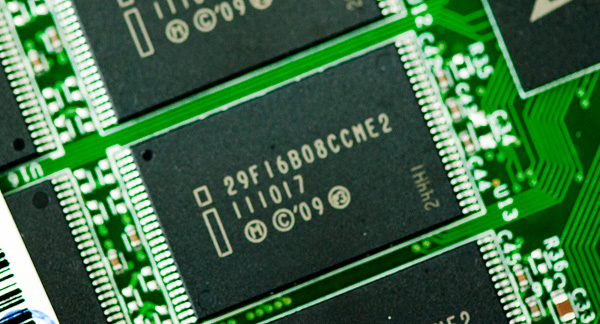

Now let's look at the 240GB model:

Once again we have sixteen NAND devices, eight on each side. OCZ standardized on Intel 25nm NAND for both capacities initially. The density string on the 240GB drive is 16B for 16Gbytes (128 Gbit), which makes sense given the drive has twice the capacity.

A look at the ninth character on these chips and you see the letter C, which in Intel NAND nomenclature stands for 2 die per package (J is for 4 die per package if you were wondering).
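The decoding rules described above can be written down as a small lookup. The sketch below covers only the codes mentioned in the text, and the example strings are placeholders built to match the described layout, not real Intel part numbers; the function and table names are likewise made up for illustration.

```python
# Lookup tables built only from the codes mentioned in the text.
DENSITY = {"64G": "64Gbit (8GB)", "16B": "16GB (128Gbit)"}
DIE_PER_PACKAGE = {"A": 1, "C": 2, "J": 4}
PROCESS = {"E": "25nm"}


def decode_part_number(part):
    """Split a part number into the fields described above (positions counted from 1)."""
    return {
        "vendor_prefix": part[0:3],                       # characters 1-3
        "density": DENSITY.get(part[3:6]),                # characters 4-6
        "bus_width": int(part[6:8]),                      # characters 7-8
        "die_per_package": DIE_PER_PACKAGE.get(part[8]),  # character 9
        "process": PROCESS.get(part[-2]),                 # second to last character
    }


# Placeholder strings, NOT real part numbers: 'XXX' and '000' are filler.
single_die = decode_part_number("XXX64G08A000E0")
dual_die = decode_part_number("XXX16B08C000E0")
print(single_die)
print(dual_die)
```

Codes that are not in the tables decode to `None` rather than a guess, since the text only documents a handful of values.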

While OCZ's 120GB drive can interleave read/program operations across two NAND die per channel, the 240GB drive can interleave across a total of four NAND die per channel. The end result is a significant improvement in performance as we noticed in our review of the 120GB drive.
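The die-per-channel counts follow directly from the device and die counts. As a rough sketch, assuming the die are spread evenly across the controller's 8 channels (the helper name is invented for illustration):

```python
CHANNELS = 8  # SF-2200 NAND channels, per the text


def die_per_channel(nand_devices, die_per_device, channels=CHANNELS):
    """Number of NAND die available for interleaving on each channel."""
    total_die = nand_devices * die_per_device
    # Assumption: die are spread evenly across the channels.
    return total_die // channels


# 16 NAND devices on every model; die per device taken from the lineup specs.
for capacity, die_count in (("120GB", 1), ("240GB", 2), ("480GB", 4)):
    print(capacity, die_per_channel(16, die_count))
```

This gives 2 die per channel for the 120GB drive and 4 for the 240GB drive, matching the figures above, and 8 for the 480GB model.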

OCZ Vertex 3 Lineup

| Specs (6Gbps) | 120GB | 240GB | 480GB |
|---|---|---|---|
| Raw NAND Capacity | 128GB | 256GB | 512GB |
| Spare Area | ~12.7% | ~12.7% | ~12.7% |
| User Capacity | 111.8GB | 223.5GB | 447.0GB |
| Number of NAND Devices | 16 | 16 | 16 |
| Number of die per Device | 1 | 2 | 4 |
| Max Read | Up to 550MB/s | Up to 550MB/s | Up to 530MB/s |
| Max Write | Up to 500MB/s | Up to 520MB/s | Up to 450MB/s |
| 4KB Random Read | 20K IOPS | 40K IOPS | 50K IOPS |
| 4KB Random Write | 60K IOPS | 60K IOPS | 40K IOPS |
| MSRP | $249.99 | $499.99 | $1799.99 |

The big question we had back then was how much of the 120/240GB performance delta came from the final firmware reducing performance vs. a lack of physical die. With a final, shipping 240GB Vertex 3 in hand I can say that the performance is identical to our preview sample. In other words, the performance advantage is purely due to the benefits of intra-device die interleaving.

If you want to skip ahead to the conclusion, feel free to. The results on the following pages are nearly identical to what we saw in our preview of the 240GB drive. I won't be offended :)

The Test

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011, AS SSD & ATTO |
| Motherboard: | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 |

90 Comments


spudit99 - Friday, May 6, 2011

Because these drives are so new and right on the cutting edge, this is probably not going to happen. The enthusiast community is going to be doing this job for them, letting them both sell drives and fix issues along the way. I'm still mucking around with a year old CS300 that took months to get running as desired... Anand went through the same debacle.

If you are looking for a super stable SSD with a wide compatibility range, the OCZ Vertex 3 probably is not the best choice. I would suggest either Samsung or Intel SSDs if stability is key.

sor - Friday, May 6, 2011

Unfortunately there seems to be some sort of incompatibility between Linux and the Vertex 3, specifically with newer kernels, such as those in the most recent versions of Ubuntu. Symptoms include the disk not being found on boot about 80% of the time.

From the logs and reports, it looks like the disk doesn't return from the ATA 'identify' command the way Linux expects it to. It's a real bummer for me since Linux is more than just a hobby; at this point I've got a 240G Vertex 3 that I can't return for anything but a replacement. Wish I would have just gone with a C300/M4, or 520.

http://www.ocztechnologyforum.com/forum/showthread...

https://bugs.launchpad.net/ubuntu/+source/linux/+b...

sor - Friday, May 6, 2011

I should add that we use hundreds of SSDs at work. We deploy maybe 8-10 a week. We've been eagerly awaiting the new Sandforce controllers to come out in order to try to use them in our servers, but since they don't play nice with Linux it's out of the question. Maybe another brand would work, but my impression was that the firmwares are mostly the same and come from Sandforce.

DanaG - Friday, May 6, 2011

If the Vertex 3 is anywhere near as buggy as the Vertex 2, I don't want one.

It seems the drive can't handle ATA Security, even though it claims support for it. The drive fails to respond if given non-data (such as "unlock") commands after resume from suspend!

http://www.ocztechnologyforum.com/forum/showthread...

jcompagner - Saturday, May 7, 2011

Ah, then this is what I had with an older Intel driver:

BSOD always when resuming.

With the latest Intel driver I don't have that problem anymore, but I guess that it now doesn't send that command or something.

But yes, I already told OCZ on their forum that they really should look into this. It is not a real driver issue (yes, the driver can fix it by not sending those commands); it is really an issue that the Vertex 2 or 3 doesn't work well with all the SATA commands. I think many of the problems people have reported come down to that.

OCZ/SandForce should really, really be looking into that!

DanaG - Monday, May 9, 2011

For me, it's not the driver doing it -- the same happens in both Windows and Linux. It's my laptop's firmware that's sending "non-data commands".

How can you claim to "support" ATA Security if the drive becomes unresponsive when told to unlock? If you suspend with drive locked, you can be nearly 100% certain you'll want to unlock the drive at resume.

For some people, it doesn't even take lock/unlock to make the drive crap out -- perhaps the BIOS calls an IDENTIFY command, or such.

Anand, can you please try to get OCZ and/or Sandforce to do something about this buggy firmware? My old Indilinx drive handled suspend/resume (while locked) perfectly fine!

Check out that forum post for info on how to reproduce the issue.

danjw - Friday, May 6, 2011

I am considering switching to an SSD on my desktop. Currently, I have a regular defrag scheduled via Windows scheduler. Should I get rid of this for an SSD?

evilspoons - Friday, May 6, 2011

Yes, definitely. The Intel SSD toolbox actually does this automatically if it detects a scheduled defrag.

Spacecomber - Friday, May 6, 2011

I know that you've got your hands full with keeping up with reviewing the individual SSD models that are being released, Anand, but I think it would be helpful if you or another writer could put together a guide or overview of this hardware segment for someone thinking of taking the plunge into an SSD for their system.

Things that I would be interested in are bang for your buck comparisons, which types of drives would be the best match for particular usage scenarios, and which drives are likely to be the easiest to adapt to and most trouble free to use.

As I think you suggested in an earlier comment (re: Vertex 2 vs Vertex 3), a lot of the differences between these drives covered in these detailed reviews are not necessarily differences that can be readily detected in actual use, and the big difference is between a normal HDD and an SSD. Yet, I don't think that this means we are at a stage where you simply look for the least expensive SSD that has the capacity you want (which seems to be pretty close to the case when it comes to HDDs, these days).

I've read these SSD reviews with varying degrees of scrutiny, since I'm not necessarily ready to buy one right now. If I had to simplify what I've taken away from these reviews, so far, into a rule of thumb, it would be to buy the largest capacity Intel drive that I could afford. Of course, I may be way off base in reaching that conclusion, which is why I would be interested in a guide.

spudit99 - Friday, May 6, 2011

The company I work for has deployed several SSDs, in laptops especially. Samsung and Intel drives have been trouble free, although not always at the very top of the speed list.

Best advice I can give is find a good buy and try one out. My personal experience is extremely positive, even with the 2+ year old SSD drive in my laptop. Yes, the cost is high, but IMO the performance gain is hard to beat.

They are fast, quiet, and as long as you aren't trying to be the first to have the latest product, very reliable. The only SSD trouble I've had was with a personal purchase (CS300), which took a return and several firmware updates to fix. Reading about this stuff is great, but unless you want to pave the way, I would be at least somewhat conservative.