OWC Mercury Extreme Pro 6G SSD Review (120GB)

by Anand Lal Shimpi on May 5, 2011 1:45 AM EST

I still don't get how OWC managed to beat OCZ to market last year with the Mercury Extreme SSD. The Vertex LE was supposed to be the first SF-1500 based SSD on the market, but as I mentioned in our review of OWC's offering, readers had drives in hand days before the Vertex LE even started shipping.

I don't believe the same was true this time around. The Vertex 3 was the first SF-2200 based SSD available for purchase online, but OWC was still a close second. Despite multiple SandForce partners announcing drives based on the controller, only OCZ and OWC are shipping SSDs with SandForce's SF-2200 inside.

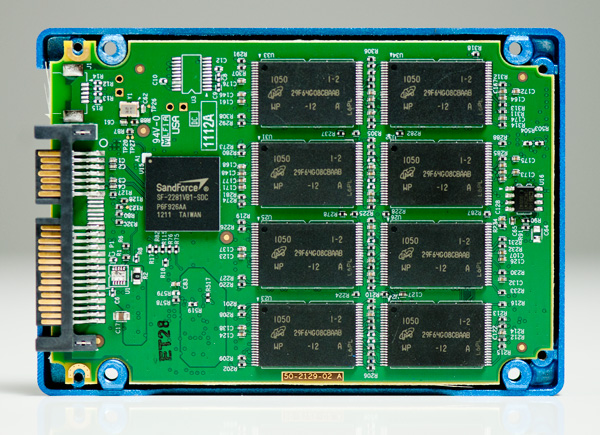

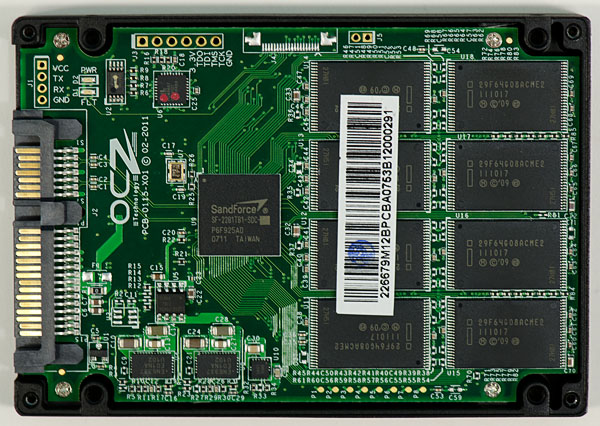

The new drive from OWC is its answer to the Vertex 3, and it's called the Mercury Extreme Pro 6G. Internally it's virtually identical to OCZ's Vertex 3, although the PCB design is a bit different and it's currently shipping with slightly different firmware:

OWC's Mercury Extreme Pro 6G 120GB

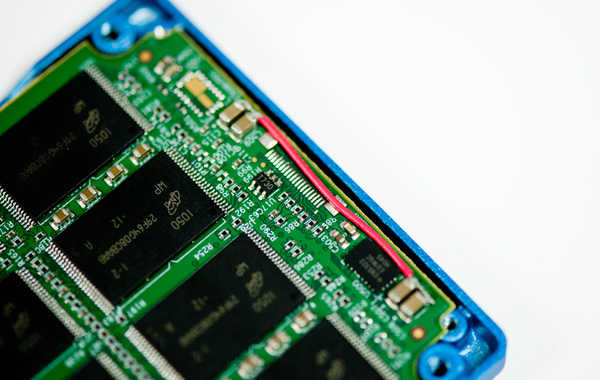

Both drives use the same SF-2281 controller; however, OCZ handles its own PCB layout. It seems whoever designed OWC's PCB made an error, as the 120GB sample I received had a rework on the board:

Reworks aren't uncommon for samples, but I'm usually uneasy when I see them in retail products. Here's a closer shot of the rework on the PCB:

Eventually the fix will be incorporated into a revised PCB design, but early adopters may be stuck with reworked boards. The drive's warranty should be unaffected, and the impact on reliability really depends on the nature of the rework and the quality of the soldering job.

Like OCZ, OWC is shipping SandForce's RC (Release Candidate) firmware on the Mercury Extreme Pro 6G. Unlike OCZ, however, OWC's version of the RC firmware has a lower cap on 4KB random writes. In our 4KB random write tests, OWC's drive manages 27K IOPS, while the Vertex 3 can push as high as 52K IOPS with a highly compressible dataset (39K IOPS with incompressible data). OCZ is still SandForce's favorite partner and thus gets preferential treatment when it comes to firmware.
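To put those IOPS figures in throughput terms, here is a small conversion sketch. It assumes each operation moves 4KB = 4,096 bytes and reports MB/s as 10^6 bytes per second; the helper name `iops_to_mb_per_s` is purely illustrative, and the IOPS values are the ones quoted above.

```python
# Minimal sketch: convert 4KB random write IOPS into throughput.
# Assumptions: 4KB = 4,096 bytes per operation, 1 MB = 10**6 bytes.
BLOCK_BYTES = 4 * 1024


def iops_to_mb_per_s(iops: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Return throughput in MB/s for a given number of fixed-size ops per second."""
    return iops * block_bytes / 1e6


# 4KB random write results quoted in the review.
RESULTS = {
    "OWC Mercury Extreme Pro 6G (RC firmware)": 27_000,
    "OCZ Vertex 3, highly compressible data": 52_000,
    "OCZ Vertex 3, incompressible data": 39_000,
}

for drive, iops in RESULTS.items():
    print(f"{drive}: {iops_to_mb_per_s(iops):.1f} MB/s")
```

Under these assumptions the capped OWC firmware works out to roughly 110.6MB/s of 4KB random writes, versus about 213.0MB/s (compressible) and 159.7MB/s (incompressible) for the Vertex 3.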

OWC has informed me that it will have mass production firmware from SandForce around Friday or Monday, which should boost 4KB random write performance on its drive to a level equal to that of the Vertex 3. If that ends up being the case, I'll of course post an update to this review. Note that as a result of the cap currently in place, OWC's published specs for the Mercury Extreme Pro 6G (specifically the 4KB random write rating) aren't accurate. I don't put much faith in manufacturer specs to begin with, but it's worth pointing out.

OWC Mercury Extreme Pro 6G Lineup

| Specs (6Gbps) | 120GB | 240GB | 480GB |
|---|---|---|---|
| Sustained Reads | 559MB/s | 559MB/s | 559MB/s |
| Sustained Writes | 527MB/s | 527MB/s | 527MB/s |
| 4KB Random Read | Up to 60K IOPS | Up to 60K IOPS | Up to 60K IOPS |
| 4KB Random Write | Up to 60K IOPS | Up to 60K IOPS | Up to 60K IOPS |
| MSRP | $319.99 | $579.99 | $1759.99 |

OWC is currently shipping only the 120GB Mercury Extreme Pro 6G SSD. Given our recent experience with variable NAND configurations, I asked OWC to disclose all shipping configurations of its SF-2200 drive. According to OWC, the only version that will ship for the foreseeable future is what I have here today:

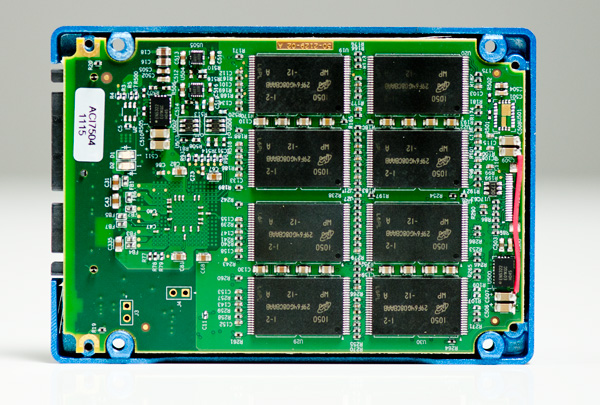

There are sixteen 64Gbit Micron 25nm NAND devices on the PCB. Each NAND device has only a single 64Gbit die inside, which results in lower performance for the 120GB drive than for 240GB configurations. My review sample of OCZ's 120GB Vertex 3 had a similar configuration but used Intel 25nm NAND instead. In my testing, I didn't notice a significant performance difference between the two configurations (4KB random write limits aside).
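The capacity and parallelism behind that single-die layout can be worked out with a bit of arithmetic. This sketch takes the die count and density from the text; the eight-channel figure for the SF-2281 and the assumption that a 240GB drive simply doubles the number of 64Gbit dies are labeled assumptions, not OWC specifications.

```python
# Minimal sketch of the NAND arithmetic for the 120GB Mercury Extreme Pro 6G.
DIE_GBIT = 64        # one 64Gbit Micron 25nm die per package (from the review)
DIES_120GB = 16      # sixteen packages x one die each (from the review)
CHANNELS = 8         # assumption: the SF-2281 drives eight NAND channels

# 64Gbit = 64 * 2**30 bits; divide by 8 for bytes.
raw_bytes = DIES_120GB * DIE_GBIT * 2**30 // 8
user_bytes = 120 * 10**9  # advertised capacity uses decimal gigabytes
spare_fraction = 1 - user_bytes / raw_bytes

# Assumption: a 240GB drive built from the same 64Gbit dies needs twice as many.
DIES_240GB = 2 * DIES_120GB

print(f"raw NAND: {raw_bytes / 2**30:.0f} GiB ({raw_bytes / 1e9:.1f} GB)")
print(f"spare area: {spare_fraction:.1%} of raw NAND")
print(f"dies per channel, 120GB: {DIES_120GB // CHANNELS}")
print(f"dies per channel, 240GB: {DIES_240GB // CHANNELS}")
```

With fewer dies per channel, the controller has fewer opportunities to interleave operations across dies, which is the usual explanation for smaller-capacity configurations trailing larger ones.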

OWC prices its 120GB drive at $319.99, which today puts it $20 above a 120GB Vertex 3. The Mercury Extreme Pro 6G comes with a 3-year warranty from OWC, the same length as OCZ's warranty.
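A quick price-per-gigabyte comparison helps frame that $20 premium. This sketch uses the MSRPs from the lineup table and infers the Vertex 3 price from the "$20 more" comparison; prices change quickly, so treat the result as a snapshot at publication, and the `price_per_gb` helper as illustrative only.

```python
# Minimal sketch: price per advertised gigabyte for the drives discussed here.
# OWC MSRPs come from the lineup table; the Vertex 3 price is inferred from
# the "$20 more" comparison in the text (assumption: $319.99 - $20 = $299.99).
DRIVES = {
    "OWC Mercury Extreme Pro 6G 120GB": (319.99, 120),
    "OWC Mercury Extreme Pro 6G 240GB": (579.99, 240),
    "OWC Mercury Extreme Pro 6G 480GB": (1759.99, 480),
    "OCZ Vertex 3 120GB (inferred)": (319.99 - 20, 120),
}


def price_per_gb(price: float, capacity_gb: int) -> float:
    """Return dollars per advertised (decimal) gigabyte."""
    return price / capacity_gb


for name, (price, capacity) in DRIVES.items():
    print(f"{name}: ${price_per_gb(price, capacity):.2f}/GB")
```

At these prices the 120GB OWC drive lands at about $2.67/GB against roughly $2.50/GB for the Vertex 3, while the 240GB model is the cheapest per gigabyte in OWC's lineup at about $2.42/GB.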

Other than the capped firmware, performance shouldn't differ between OWC's Mercury Extreme Pro 6G and the Vertex 3. Interestingly, the 4KB random write cap isn't enough to affect any of our real-world tests.

The Test

| Component | Configuration |
|---|---|
| CPU | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) for AT SB 2011, AS SSD & ATTO |
| Motherboard | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 285 |
| Video Drivers | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 7 x64 |

44 Comments


Anand Lal Shimpi - Thursday, May 5, 2011

Those drivers were only used on the X58 platform; I use Intel's RST10 on the SNB platform for all of the newer tests/results. :)

Take care,

Anand

iwod - Thursday, May 5, 2011

I've lost count of how many times I've posted this in this series. Anyway, the people who continue to worship 4K random read/write have now seen the truth: sequential read/write is much more important than you think. Since the tests cover two basically identical pieces of hardware, one of them with a random write cap, the results show the uncapped drive has no real-world advantage. We need more sequential performance!

Interestingly, we aren't limited by the controller or the NAND itself, but by the connection method: SATA 6Gbps. We need to start using PCI-Express x4 slots, as Intel has shown in the leaked roadmap. Going to PCI-E 3.0 would give us 4GB/s with an x4 slot. That should leave plenty of room for improvement. ONFI 3.0 next year should allow us to reach 2GB/s+ sequential read/write easily.
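The commenter's bandwidth figures can be sanity-checked from the line rates and encodings. This sketch assumes SATA 6Gbps and PCIe 2.0 use 8b/10b encoding and PCIe 3.0 uses 128b/130b at 8 GT/s per lane; `link_mb_per_s` is a hypothetical helper, and protocol overhead is deliberately ignored.

```python
# Minimal sketch: usable one-direction link bandwidth after line encoding.
# Ignores protocol overhead (command/packet headers, flow control), so real
# drive throughput tops out below these numbers.


def link_mb_per_s(gt_per_s: float, payload_bits: int, encoded_bits: int, lanes: int = 1) -> float:
    """Return bandwidth in MB/s (10**6 bytes) for a serial link.

    gt_per_s: raw transfer rate per lane in gigatransfers per second.
    payload_bits / encoded_bits: line-code efficiency (e.g. 8/10 for 8b/10b).
    """
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / encoded_bits
    return bits_per_s / 8 / 1e6


sata_6g = link_mb_per_s(6, 8, 10)               # SATA 6Gbps, 8b/10b encoding
pcie2_x4 = link_mb_per_s(5, 8, 10, lanes=4)     # PCIe 2.0: 5 GT/s, 8b/10b
pcie3_x4 = link_mb_per_s(8, 128, 130, lanes=4)  # PCIe 3.0: 8 GT/s, 128b/130b

print(f"SATA 6Gbps: {sata_6g:.0f} MB/s")
print(f"PCIe 2.0 x4: {pcie2_x4:.0f} MB/s")
print(f"PCIe 3.0 x4: {pcie3_x4:.0f} MB/s")
```

Under these assumptions a PCIe 3.0 x4 link offers roughly 3.9GB/s per direction, in line with the 4GB/s estimate, against 600MB/s for SATA 6Gbps.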

krumme - Thursday, May 5, 2011

I think Anand listened too much to Intel's voice in this SSD story. 4K random madness was Intel G2 business.

And everything went in the wrong direction.

Anand was, and is, the SSD review site.

Anand Lal Shimpi - Thursday, May 5, 2011

The fact of the matter is that both random and sequential performance are important. It's Amdahl's law at its best: if you simply increase the sequential read/write speed of these drives without touching random performance, you'll eventually be limited by random performance. Today I don't believe we are limited by random performance, but it still has to keep improving for us to continue to see overall gains across the board.

Take care,

Anand
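The Amdahl's law point above can be made concrete with a toy model. This sketch assumes a workload that is simply the sum of a sequential part and a 4KB random part; the helper `workload_seconds` and every number in it are hypothetical, chosen only to show the shape of the curve.

```python
# Minimal sketch of Amdahl's law applied to storage: a hypothetical workload
# split into a sequential part and a 4KB random part. All numbers are invented
# for illustration, not measurements.


def workload_seconds(seq_mb: float, seq_mb_per_s: float, rand_ops: int, rand_iops: float) -> float:
    """Total time = time spent on sequential transfers + time spent on random ops."""
    return seq_mb / seq_mb_per_s + rand_ops / rand_iops


SEQ_MB = 1000       # hypothetical: 1,000 MB of sequential transfers
RAND_OPS = 20_000   # hypothetical: 20,000 random 4KB operations
RAND_IOPS = 40_000  # random performance held fixed

baseline = workload_seconds(SEQ_MB, 500, RAND_OPS, RAND_IOPS)
random_floor = RAND_OPS / RAND_IOPS  # time left even with infinitely fast sequential I/O

for seq_rate in (500, 1000, 2000, 4000, float("inf")):
    t = workload_seconds(SEQ_MB, seq_rate, RAND_OPS, RAND_IOPS)
    print(f"sequential {seq_rate:g} MB/s: {t:.2f} s, speedup {baseline / t:.2f}x")

print(f"upper bound on speedup: {baseline / random_floor:.2f}x")
```

In this toy case, even infinitely fast sequential transfers cap the overall speedup at 5x, because the 0.5 seconds spent on random I/O never shrinks.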

Hrel - Thursday, May 5, 2011

Damn! 200 dollars too expensive for the 120GB. Stopped reading.

snuuggles - Thursday, May 5, 2011

Good lord, every single article that discusses OWC seems to include some sort of oddball tangent or half-baked excuse for some crazy s**t they are pulling. Hey, I know they have the fastest stuff around, but there's just something so lame about these guys that I have to say on principle: "never, ever, will I buy from OWC"

nish0323 - Thursday, August 11, 2011

What crazy s**t are they pulling? I've got 5 drives from them, all SSDs, and all perform great. The 6G ones have a 5-year warranty, 2 years longer than all other SSD manufacturers right now.

neotiger - Thursday, May 5, 2011

A lot of people and hosting companies use consumer SSDs for server workloads such as MySQL and Solr. Can you benchmark these SSDs' performance on server workloads?

Anand Lal Shimpi - Thursday, May 5, 2011

It's on our roadmap to do just that... :)

Take care,

Anand

rasmussb - Saturday, May 7, 2011

Perhaps you have answered this elsewhere, or it will be answered in your future tests. If so, please forgive me.

As you point out, the drive's performance depends in large part on the compressibility of the source data. Relatively incompressible data results in slower speeds. What happens when you put a pair (or more) of these in a RAID 0 array? Since units of data alternate between drives, how does the SF compression work then? Does previously compressible data become less compressible because any given drive is only getting, at best (in a 2-drive array), half of the original data?

Conversely, does incompressible data happen to become more compressible when you're splitting it among two or more drives in an array?

Server workloads on a single drive versus, say, a RAID 5 array would be an interesting comparison. I'm sure your tech-savvy minds already have this on your roadmap. I'm just asking in case it isn't.