NVIDIA's GeForce GTX 550 Ti: Coming Up Short At $150

by Ryan Smith on March 15, 2011 9:00 AM EST

Throughout the lifetime of the 400 series, NVIDIA launched 4 GPUs: GF100, GF104, GF106, and GF108. Launched in that respective order, they became the GTX 480, GTX 460, GTS 450, and GT 430. One of the interesting things from the resulting products was that with the exception of the GT 430, NVIDIA launched each product with a less than fully populated GPU, shipping with different configurations of disabled shaders, ROPs, and memory controllers. NVIDIA has never fully opened up on why this is – be it for technical or competitive reasons – but ultimately GF100/GF104/GF106 never had the chance to fully spread their wings as 400 series parts.

It’s the 500 series that has corrected this. Starting with the GTX 580 in November of 2010, NVIDIA has been launching GPUs built on a refined transistor design with all functional units enabled. Coupled with a hearty boost in clockspeed, the performance gains have been quite notable given that this is still on the same 40nm process with a die size effectively unchanged. Thus after GTX 560 and the GF114 GPU in January, it’s time for the 3rd and final of the originally scaled down Fermi GPUs to be set loose: GF106. Reincarnated as GF116, it’s the fully enabled GPU that powers NVIDIA’s latest card, the GeForce GTX 550 Ti.

| | GTX 560 Ti | GTX 460 768MB | GTX 550 Ti | GTS 450 |
|---|---|---|---|---|
| Stream Processors | 384 | 336 | 192 | 192 |
| Texture Address / Filtering | 64/64 | 56/56 | 32/32 | 32/32 |
| ROPs | 32 | 24 | 24 | 16 |
| Core Clock | 822MHz | 675MHz | 900MHz | 783MHz |
| Shader Clock | 1644MHz | 1350MHz | 1800MHz | 1566MHz |
| Memory Clock | 1002MHz (4.008GHz data rate) GDDR5 | 900MHz (3.6GHz data rate) GDDR5 | 1026MHz (4.104GHz data rate) GDDR5 | 902MHz (3.608GHz data rate) GDDR5 |
| Memory Bus Width | 256-bit | 192-bit | 192-bit | 128-bit |
| RAM | 1GB | 768MB | 1GB | 1GB |
| FP64 | 1/12 FP32 | 1/12 FP32 | 1/12 FP32 | 1/12 FP32 |
| Transistor Count | 1.95B | 1.95B | 1.17B | 1.17B |
| Manufacturing Process | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |
| Price Point | $249 | ~$130 | $149 | ~$90 |

Out of the 3 scaled down 400 series cards, GTS 450 was always the most unusual in how NVIDIA went about it. GF100 and GF104 shipped with Streaming Multiprocessors (SMs) disabled, which cut down on the number of CUDA Cores/SPs and Polymorph Engines housed in them. For GTS 450, however, NVIDIA instead chose to disable a ROP/memory block, giving GTS 450 the full shader/geometry performance of GF106 (on paper at least), but reduced memory bandwidth, L2 cache, and ROP throughput. We’ve always wondered why NVIDIA built a lower-performance/high-volume GPU with an odd number of memory blocks and what the immediate implications would be of disabling one of those blocks. Now we get to find out.

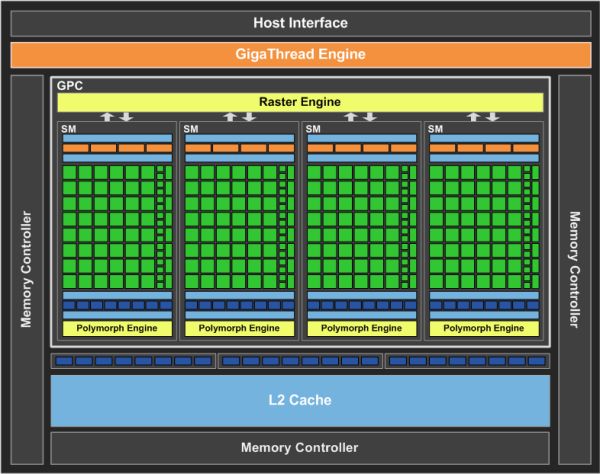

Launching today is the GTX 550 Ti, which features the GF116 GPU. As with GF114 before it, GF116 is a slight process tweak over GF106, using a new selection of transistors in order to reduce leakage, increase clocks, and improve the card’s performance per watt. With these changes in hand NVIDIA has fully unlocked GF106/GF116 for the first time, giving GTX 550 Ti the responsibility of being the first fully enabled part: 192 CUDA cores are paired with 24 ROPs, 32 texture units, 384KB of L2 cache, a 192-bit memory bus, and 1GB of GDDR5.
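One way to read these numbers is that each ROP/memory block contributes an equal share of the ROPs, L2 cache, and bus width. The sketch below assumes GF116 has three such blocks, which is an inference from the 192-bit bus and the "odd number of memory blocks" mentioned earlier rather than a figure quoted by NVIDIA; the class and function names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RopMemoryBlock:
    """One ROP/memory block; per-block values are inferred, not quoted."""
    rops: int = 8        # assumed: 24 ROPs / 3 blocks
    l2_kb: int = 128     # assumed: 384KB L2 / 3 blocks
    bus_bits: int = 64   # assumed: 192-bit bus / 3 blocks


def back_end(enabled_blocks: int, block: RopMemoryBlock = RopMemoryBlock()) -> dict:
    """Total ROPs, L2 cache, and bus width for a number of enabled blocks."""
    return {
        "rops": enabled_blocks * block.rops,
        "l2_kb": enabled_blocks * block.l2_kb,
        "bus_bits": enabled_blocks * block.bus_bits,
    }


gtx_550_ti = back_end(3)  # all three blocks enabled
gts_450 = back_end(2)     # one block disabled

print(gtx_550_ti)
print(gts_450)
```

With two blocks enabled the sketch yields 16 ROPs and a 128-bit bus, which matches the GTS 450 column in the specification table; the 256KB L2 figure it implies for GTS 450 is an extrapolation from the same assumption.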

The GTX 550 Ti will be shipping at a core clock of 900MHz and a memory clock of 1026MHz (4104MHz data rate), the odd memory speed being due to NVIDIA’s quirky PLLs. If you recall, GTS 450 was clocked at 783MHz core and 902MHz memory, giving the GTX 550 Ti an immediate 117MHz (15%) core clock and 124MHz (14%) memory clock advantage, with the latter coming on top of an additional 50% memory bandwidth advantage due to the wider memory bus (192-bit vs. 128-bit). NVIDIA puts the TDP at 116W, 10W over GTS 450. GF116 remains effectively unchanged from GF106, giving it a transistor count of 1.17B, with the power difference coming down to higher clocks and the additional functional units that have been enabled.
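The percentages above are simple arithmetic on the quoted clocks and bus widths. As a rough sketch, assuming peak memory bandwidth is the effective data rate multiplied by the bus width in bytes (the helper names here are ours, not NVIDIA's), the figures can be reproduced like this:

```python
def bandwidth_gbs(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mhz * 1e6 * bytes_per_transfer / 1e9


def pct_gain(new: float, old: float) -> float:
    """Relative advantage of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Clocks and bus widths as quoted in the article.
core_gain = pct_gain(900, 783)         # core clock, MHz
mem_clock_gain = pct_gain(1026, 902)   # memory clock, MHz

bw_550_ti = bandwidth_gbs(4104, 192)   # GTX 550 Ti
bw_gts_450 = bandwidth_gbs(3608, 128)  # GTS 450
bw_gain = pct_gain(bw_550_ti, bw_gts_450)

print(f"core clock gain:   {core_gain:.1f}%")
print(f"memory clock gain: {mem_clock_gain:.1f}%")
print(f"bandwidth: {bw_550_ti:.1f} GB/s vs {bw_gts_450:.1f} GB/s ({bw_gain:.1f}% gain)")
```

The clock and bus width advantages multiply rather than add, so under this formula the total bandwidth advantage over GTS 450 works out to roughly 70%.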

Unlike the GTS 450 launch, GTX 550 Ti is a more laid-back affair for NVIDIA; admittedly this is more often a bad sign than a good one when it comes to gauging their confidence in a product. NVIDIA is not sampling any reference cards to reviewers, instead leaving that up to its board partners. As with GF104/GF114, GF116 is pin compatible with GF106, meaning partners can mostly reuse GTS 450 designs; they need only rework the PCB to handle a 192-bit bus and meet the slightly higher power and cooling requirements. As a result a number of custom designs and overclocked cards will be launching right out of the gate, and you’re unlikely to ever see a reference card. Today we’re looking at Zotac’s GeForce GTX 550 Ti AMP, a factory overclocked card that pushes the core and memory clocks to 1000MHz and 1100MHz respectively. The MSRP on the GTX 550 Ti is $149, $20 more than where GTS 450 launched, while overclocked cards such as the Zotac model will go for more.

As was the case with the GTS 450, NVIDIA is primarily targeting the GTX 550 Ti at buyers looking at driving 1680x1050 and smaller monitors, while GTX 460/560 continues to be targeted at 1920x1080/1200. Its closest competitor in the existing NVIDIA product stack is the GTX 460 768MB. The GTX 460 768MB has not officially been discontinued, but one quick look at product supplies shows that the supply of 768MB cards is dropping fast, and we’d expect the 768MB cards to soon be de facto discontinued, making the GTX 550 Ti a much cheaper-to-build replacement for the GTX 460 768MB. In the meantime, however, this means the GTX 550 Ti launches against the remaining supply of bargain priced GTX 460 cards.

From AMD, the competition will be the Radeon HD 6850 and Radeon HD 5770. As is often the case NVIDIA is intending to target an AMD weak spot, in this case the fact that AMD doesn’t have anything between the 5770 and 6850 in spite of the sometimes wide performance gap. Pricing will be NVIDIA’s biggest problem here as the 5770 is available for around $110, while AMD has worked with manufacturers to get 6850 prices down to around $160 after rebate. Finally, to slightly spoil the review, as you may recall the GTS 450 had a great deal of trouble keeping up with the Radeon HD 5770 in performance, so NVIDIA has quite the performance gap to cover to keep up with AMD’s pricing.

March 2011 Video Card MSRPs

| NVIDIA | Price | AMD |
|---|---|---|
| | $700 | Radeon HD 6990 |
| | $480 | |
| | $320 | Radeon HD 6970 |
| | $240 | Radeon HD 6950 1GB |
| | $190 | Radeon HD 6870 |
| | $160 | Radeon HD 6850 |
| | $150 | |
| | $130 | |
| | $110 | Radeon HD 5770 |

79 Comments

Ryan Smith - Tuesday, March 15, 2011

Our experience with desktop Linux articles in the past couple of years is that there's little interest from a readership perspective. The kind of video cards we normally review are for gaming purposes, which is lacking to say the least on Linux. We could certainly try to integrate Linux into primary GPU reviews, but would it be worth the time and what we would have to give up in return? Probably not. But if you think otherwise I'm all ears.

HangFire - Tuesday, March 15, 2011

All I'm asking for is current and projected CUDA/OpenCL level support, and what OS distros and revisions are supported.

You may not realize it, but all this GPGPU stuff is really used in science, government and defense work. Developers often get the latest and greatest gaming card and when it is time for deployment, middle end cards (like this one) are purchased en masse.

NVIDIA and AMD have been crowing about CUDA and OpenCL, and then deliver spotty driver coverage for new and previous generation cards. If they are going to market it heavily, they should cough up the support information with each card release; we shouldn't have to call the corporate rep and harangue them each and every time.

Belard - Wednesday, March 16, 2011

Someone who already has a GTS 450 would be a sucker to spend $150 for a "small-boost" upgraded card.

When upgrading, a person should get a card that is 50% faster or better. A phrase that never applies to a video card is "invest" since they ALL devalue to almost nothing. Today's $400~500 cards are tomorrow's $150 cards and next week's $50.

So a current GTS 450 owner should look at a GTX 570 or ATI 6900 series card for a good noticeable bump.

mapesdhs - Wednesday, March 16, 2011

Or, as I've posted before, a 2nd card for SLI/CF, assuming their motherboard and the card support it. Whether or not this is worthwhile, and the issues which affect the outcome, is what I've been researching in recent weeks. Sorry I can't post links due to forum policy, but see my earlier longer post for refs.

Ian.

HangFire - Friday, March 18, 2011

I wasn't really suggesting such an upgrade (sidegrade). I was just saying that each generation card at a price point and naming convention (450->550) should have at least a little better performance than the card it replaces.

Calabros - Tuesday, March 15, 2011

Tell me a reason to NOT prefer 6850 over this.

7Enigma - Tuesday, March 15, 2011

So basically this is my 4870 in a slightly lower power envelope with DX11 features. I'm shocked the performance is so low honestly. Thanks for including the older cards in the review because it's always nice to see I'm still chugging along just fine at my gaming resolution (1280X1024) 19".

7Enigma - Tuesday, March 15, 2011

Forgot to add, which I bought in Jan 2009 for $180 (Sapphire Toxic 512meg VaporX, so not reference design)

mapesdhs - Tuesday, March 15, 2011

You're the target audience for the work I've been doing, comparing cards at that kind of resolution, old vs. new, and especially where one is playing older games, etc. Google for "Ian PC Benchmarks", click the 1st result, then select "PC Benchmarks, Advice and Information". I hope to be able to obtain a couple of 4870s or 4890s soon, though there's already a lot of 4890 results included.

Ian.

morphologia - Tuesday, March 15, 2011

Why in the name of all that's graphical would you use this Noah's Ark menagerie of cards but leave out the 4890? It doesn't make sense. If you're going to include 4000 series cards, you must include the top-of-the-line single-GPU card. It's proven to be quite competitive even now, against the lower-level new cards.