The MacBook Pro Review (13 & 15-inch): 2011 Brings Sandy Bridge

by Anand Lal Shimpi, Brian Klug & Vivek Gowri on March 10, 2011 4:17 PM EST - Posted in Laptops, Mac, Apple, Intel, MacBook Pro, Sandy Bridge

Turbo and the 15-inch MacBook Pro

The 15 and 13 are different enough that I'll address the two separately. Both are huge steps forward compared to their predecessors, but for completely different reasons. Let's start with the 15.

Starting with Sandy Bridge, all 15 and 17-inch MacBook Pros now feature quad-core CPUs. This is a huge deal. Unlike other notebook OEMs, Apple tends to be a one-size-fits-all sort of company. Sure, you get a choice of screen size, but the options dwindle significantly once you've decided how big a notebook you want. For the 15 and 17-inch MBPs, all you get are quad-core CPUs. Don't need four cores? Doesn't matter, you're getting them anyway.

| Evolution of the 15-inch MacBook Pro | Early 2011 | Mid 2010 | Late 2009 |
|---|---|---|---|
| CPU | Intel Core i7 2.0GHz (QC) | Intel Core i5 2.40GHz (DC) | Intel Core 2 Duo 2.53GHz (DC) |
| Memory | 4GB DDR3-1333 | 4GB DDR3-1066 | 4GB DDR3-1066 |
| HDD | 500GB 5400RPM | 320GB 5400RPM | 250GB 5400RPM |
| Video | Intel HD 3000 + AMD Radeon HD 6490M (256MB) | Intel HD Graphics + NVIDIA GeForce GT 330M (256MB) | NVIDIA GeForce 9400M (integrated) |
| Optical Drive | 8X Slot Load DL DVD +/-R | 8X Slot Load DL DVD +/-R | 8X Slot Load DL DVD +/-R |
| Screen Resolution | 1440 x 900 | 1440 x 900 | 1440 x 900 |
| USB | 2 | 2 | 2 |
| SD Card Reader | Yes | Yes | Yes |
| FireWire 800 | 1 | 1 | 1 |
| ExpressCard/34 | No | No | No |
| Battery | 77.5Wh | 77.5Wh | 73Wh |
| Dimensions (W x D x H) | 14.35" x 9.82" x 0.95" | 14.35" x 9.82" x 0.95" | 14.35" x 9.82" x 0.95" |
| Weight | 5.6 lbs | 5.6 lbs | 5.5 lbs |
| Price | $1799 | $1799 | $1699 |

Apple was able to rationalize this decision because of one feature: Intel Turbo Boost.

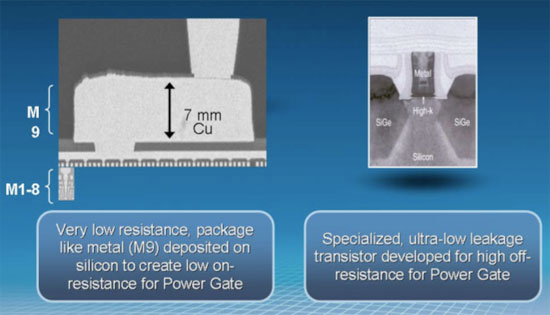

In the ramp to 90nm, Intel realized that it was expending a great deal of power in the form of leakage current. You may have heard transistors referred to as digital switches. Turn them on and current flows; turn them off and current stops flowing. The reality is that even when transistors are off, some current may still flow. This is known as leakage current, and it becomes a bigger problem the smaller your transistors become.

With Nehalem, Intel introduced a new type of transistor into its architecture: the power gate transistor. Put one of these babies between the source voltage and a large group of transistors and, at the flip of a, err, switch, you can completely shut off power to those transistors. No current going to the transistors means effectively no leakage current.

Prior to Intel's use of power gating, we had the next best thing: clock gating. Instead of cutting power to a group of transistors, you'd cut the clock signal. With no clock signal, any clocked transistors would effectively be idle. Blocks that are clock gated consume no active (switching) power; however, clock gating doesn't address leakage power. So while clock gating got you some thermal headroom, it became less effective as we moved to smaller and smaller transistors.
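A toy model makes the clock gating vs. power gating distinction concrete. It assumes the textbook split of chip power into a dynamic (switching) term, activity × C × V² × f, plus a static leakage term, V × I_leak. The `Block` class and every number below are illustrative assumptions, not Intel figures.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """A group of transistors behind one supply (illustrative values only)."""
    capacitance_f: float   # effective switched capacitance, farads
    voltage_v: float       # supply voltage, volts
    freq_hz: float         # clock frequency, hertz
    leakage_a: float       # leakage current while powered, amps
    activity: float = 1.0  # fraction of the capacitance switching each cycle


def block_power(block: Block, state: str) -> float:
    """Power in watts for the states 'active', 'clock_gated' or 'power_gated'."""
    dynamic = block.activity * block.capacitance_f * block.voltage_v ** 2 * block.freq_hz
    leakage = block.voltage_v * block.leakage_a
    if state == "active":
        return dynamic + leakage
    if state == "clock_gated":
        # No clock means no switching, but the block is still powered, so it leaks.
        return leakage
    if state == "power_gated":
        # The power gate cuts the supply, removing both terms (idealized).
        return 0.0
    raise ValueError(f"unknown state: {state}")


core = Block(capacitance_f=5e-9, voltage_v=1.0, freq_hz=2.0e9, leakage_a=3.0)
for state in ("active", "clock_gated", "power_gated"):
    print(f"{state:12s} {block_power(core, state):5.1f} W")
```

With these made-up values the core draws 13.0 W active, 3.0 W clock gated and 0.0 W power gated: clock gating removes only the switching term, while the idealized power gate removes the leakage too.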

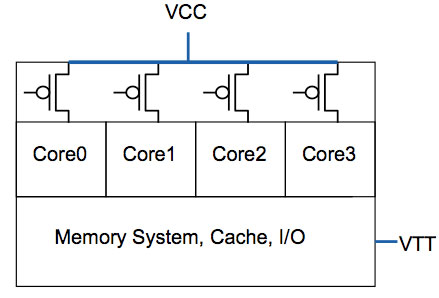

All four cores in this case share the same source voltage, but each can be turned off individually thanks to the power gate above it.

Power gating gave Intel one very important feature: the ability to truly shut off a core when not in use. Prior to power gating, Intel, like any other microprocessor company, had to make tradeoffs between core count and clock speed. The maximum power consumption/thermal output is effectively a fixed value (physics has something to do with that). If you want four cores in the same thermal envelope as two cores, you have to clock them lower. In the pre-Nehalem days you had to choose between two faster cores or four slower cores; there was no option for people who needed both.

Now, with the ability to mostly turn off idle cores, you can get around that problem. A fully loaded quad-core CPU will still run at a lower clock than a dual-core version; however, with power gating, if you are only using two cores you have the thermal headroom to ramp up the clock speed of the two active cores (since the idle ones are effectively off).
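A back-of-the-envelope model shows why gating idle cores frees up clock speed. It assumes (our simplification, not Intel's turbo algorithm) that each active core's power scales with the cube of frequency, since dynamic power goes as V² × f and voltage has to rise roughly in step with frequency, and that power-gated cores draw nothing. Under a fixed package budget, n active cores out of N can then run at f_all × (N/n)^(1/3), where f_all is the all-core clock. The 2.0GHz all-core figure is just an illustrative input.

```python
def max_freq_ghz(active_cores: int, total_cores: int, all_core_freq_ghz: float) -> float:
    """Clock each active core can sustain within the power budget that
    `total_cores` cores use at `all_core_freq_ghz`.

    Toy model: per-core power ~ f**3, gated cores draw zero power.
    Real parts are further capped by their per-SKU turbo limits.
    """
    if not 1 <= active_cores <= total_cores:
        raise ValueError("active_cores must be between 1 and total_cores")
    # Budget: total_cores * f_all**3 == active_cores * f**3, solved for f.
    return all_core_freq_ghz * (total_cores / active_cores) ** (1 / 3)


for n in (4, 2, 1):
    print(n, "active:", round(max_freq_ghz(n, 4, 2.0), 2), "GHz")
```

Under these assumptions, gating two of four cores buys about 26% more clock and gating three buys about 59%. The model ignores the rated turbo ceilings, so it overshoots what a real chip will actually do.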

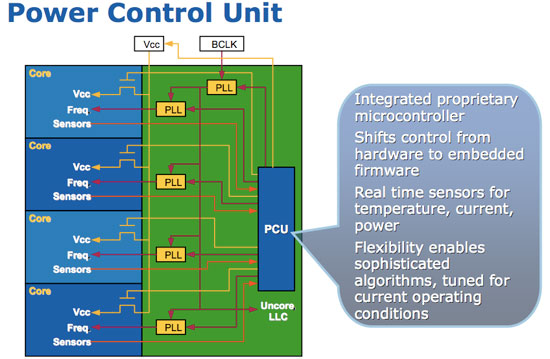

Get a little more clever and you can do this power gate and clock up dance for more configurations. Only using one core? Power gate three and run the single active core at a really, really high speed. All of this is done by a very complex piece of circuitry on the microprocessor die. Intel introduced it in Nehalem and called it the Power Control Unit (this is why engineers aren't good marketers but are great truth tellers). The PCU in Nehalem was about a million transistors, around the complexity of the old Intel 486, and all it did was monitor processor load, temperature, power consumption, active cores and clock speed. Based on all of these inputs, it would determine what to turn off and what clock speed to run the entire chip at.

Another interesting side effect of the PCU is that if you're using all cores but they're not exercising the most power-hungry parts of their circuitry (e.g. not running a bunch of floating-point workloads), the PCU could keep all four active but run them at a slightly higher frequency.
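The PCU's real algorithm isn't public, so the following is only a hypothetical sketch of the kind of decision described above: power gate idle cores, then walk up in 100MHz steps to the highest frequency whose estimated power fits the budget. A lower activity factor (lighter, non-floating-point work) leaves room for a higher step. The function name `pcu_decide`, the f³ power model, the temperature cutoff and all numbers are assumptions for illustration.

```python
from typing import List, Tuple


def pcu_decide(core_loads: List[float], activity: float, temp_c: float,
               budget_w: float, base_ghz: float, max_ghz: float,
               watts_per_ghz3: float = 1.2, temp_limit_c: float = 100.0
               ) -> Tuple[List[bool], float]:
    """Hypothetical PCU step: return (which cores stay powered, chip clock in GHz).

    core_loads: utilization per core in [0, 1]; idle cores (load 0) are gated.
    activity:   0..1 scale on per-core power (lower for light, non-FP work).
    Per-core power is modeled as activity * watts_per_ghz3 * f**3 (toy model).
    """
    powered = [load > 0.0 for load in core_loads]
    active = sum(powered)
    if active == 0:
        return powered, 0.0        # every core gated, nothing to clock
    if temp_c >= temp_limit_c:
        return powered, base_ghz   # too hot: no turbo headroom
    bins = round(base_ghz * 10)    # work in integer 100MHz bins
    max_bins = round(max_ghz * 10)
    while bins < max_bins:
        next_ghz = (bins + 1) / 10
        if active * activity * watts_per_ghz3 * next_ghz ** 3 > budget_w:
            break                  # the next bin would exceed the power budget
        bins += 1
    return powered, bins / 10


# Four busy cores: heavier vs. lighter activity, then a single busy core.
print(pcu_decide([1, 1, 1, 1], activity=1.0, temp_c=70, budget_w=45, base_ghz=2.0, max_ghz=3.4))
print(pcu_decide([1, 1, 1, 1], activity=0.8, temp_c=70, budget_w=45, base_ghz=2.0, max_ghz=3.4))
print(pcu_decide([1, 0, 0, 0], activity=1.0, temp_c=70, budget_w=45, base_ghz=2.0, max_ghz=3.4))
```

In this toy setup the lighter all-core workload earns one extra 100MHz bin over the heavy one, and the lone active core climbs much higher once the other three are gated.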

[Figure: TDP and tradeoff for single-core, dual-core and quad-core configurations]

The PCU actually works very quickly. Say you're running an application that uses only a single core, even for a very brief period. That's more than enough time for the PCU to turn off all unused cores, turbo up the single core and complete the task more quickly.

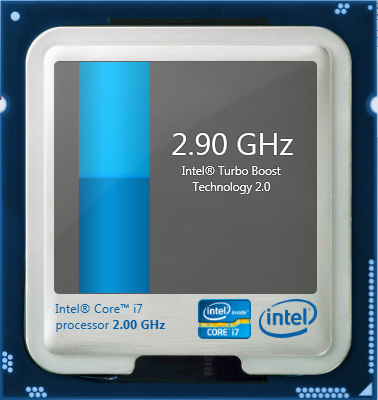

Intel calls this dynamic frequency scaling Turbo Boost (ah, this is where the marketing folks took over). The reason I went through this lengthy explanation of Turbo is that it allowed Apple to equip the 15-inch MacBook Pro with only quad-core options and not worry about it being slower than the dual-core 13-inch offering, despite its lower base clock speed (2.0GHz for the 15 vs. 2.3GHz for the 13).

13-inch MacBook Pro (left), 15-inch MacBook Pro with optional high res/anti-glare display (right)

Apple offers three CPU options in the 15-inch MacBook Pro: a 2.0GHz, 2.2GHz or 2.3GHz quad-core Core i7. These actually correspond to the Core i7-2635QM, 2720QM and 2820QM. The main differences are in the table below:

| Apple 15-inch 2011 MacBook Pro CPU Comparison | 2.0GHz quad-core | 2.2GHz quad-core | 2.3GHz quad-core |
|---|---|---|---|
| Intel Model | Core i7-2635QM | Core i7-2720QM | Core i7-2820QM |
| Base Clock Speed | 2.0GHz | 2.2GHz | 2.3GHz |
| Max Single-Core Turbo | 2.9GHz | 3.3GHz | 3.4GHz |
| Max Dual-Core Turbo | 2.8GHz | 3.2GHz | 3.3GHz |
| Max Quad-Core Turbo | 2.6GHz | 3.0GHz | 3.1GHz |
| L3 Cache | 6MB | 6MB | 8MB |
| AES-NI | No | Yes | Yes |
| VT-x | Yes | Yes | Yes |
| VT-d | No | Yes | Yes |
| TDP | 45W | 45W | 45W |

The most annoying part of all of this is that the base 2635QM doesn't support Intel's AES-NI. Apple still doesn't seem to use AES-NI anywhere in its OS, so until Lion rolls around I guess this won't be an issue. Shame on Apple for not supporting AES-NI, and shame on Intel for using it as a differentiating feature between parts. The AES instructions, introduced in Westmere, are particularly useful for accelerating full disk encryption, as we've seen under Windows 7.
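If you want to know whether your own CPU has AES-NI, the operating system exposes the CPUID feature flags as text. The sketch below only parses such a string. We assume it comes from `sysctl -n machdep.cpu.features` on OS X (which we believe lists the flag as `AES`) or from the `flags` line of `/proc/cpuinfo` on Linux (lowercase `aes`). The helper name `has_aes_ni` is hypothetical.

```python
def has_aes_ni(feature_flags: str) -> bool:
    """Return True if a space-separated CPU feature-flag string advertises AES-NI.

    Matching whole tokens, case-insensitively, handles both the "AES" and
    "aes" spellings and avoids false hits on longer flags that merely
    contain those letters.
    """
    return "aes" in {flag.lower() for flag in feature_flags.split()}


# Example strings shaped like OS X and Linux output (abbreviated, illustrative).
print(has_aes_ni("FPU VME SSE4.2 POPCNT AES PCID AVX1.0"))
print(has_aes_ni("fpu vme sse4_2 popcnt xsave avx"))
```

Parsing a string, rather than shelling out inside the function, keeps the check independent of which OS produced the flags.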

Note that all of these chips carry a 45W TDP, up from 35W in the 13-inch and last year's 15-inch model. We're talking about nearly a billion transistors fabbed on Intel's 32nm process, almost double the transistor count of the Arrandale chips found in last year's MacBook Pro. These things are going to consume more power.

Despite the fairly low base clock speeds, these CPUs can turbo up to pretty high values depending on how many cores are active. The base 2.0GHz quad-core is only good for up to 2.9GHz on paper, while the 2720QM and 2820QM can hit 3.3GHz and 3.4GHz, respectively.
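The turbo limits in the table above map directly onto active-core counts, so they can be expressed as a small lookup. The values are copied from the table; the names `SPECS`, `max_turbo_ghz` and `turbo_uplift_pct` are illustrative. The table has no three-core figure, so the sketch assumes the quad-core limit in that case.

```python
from typing import NamedTuple


class TurboSpec(NamedTuple):
    """Clock limits in GHz for one CPU model (values from the comparison table)."""
    base: float
    one_core: float
    two_core: float
    four_core: float


SPECS = {
    "Core i7-2635QM": TurboSpec(base=2.0, one_core=2.9, two_core=2.8, four_core=2.6),
    "Core i7-2720QM": TurboSpec(base=2.2, one_core=3.3, two_core=3.2, four_core=3.0),
    "Core i7-2820QM": TurboSpec(base=2.3, one_core=3.4, two_core=3.3, four_core=3.1),
}


def max_turbo_ghz(model: str, active_cores: int) -> float:
    """Rated maximum turbo frequency for the given number of active cores."""
    spec = SPECS[model]
    if active_cores == 1:
        return spec.one_core
    if active_cores == 2:
        return spec.two_core
    if active_cores in (3, 4):
        # No 3-core figure in the table; assume the quad-core limit (a guess).
        return spec.four_core
    raise ValueError("active_cores must be 1-4 on these quad-core parts")


def turbo_uplift_pct(model: str, active_cores: int) -> float:
    """Rated turbo headroom over the base clock, in percent."""
    base = SPECS[model].base
    return 100 * (max_turbo_ghz(model, active_cores) - base) / base


for model in SPECS:
    print(model, [f"{turbo_uplift_pct(model, n):.0f}%" for n in (1, 2, 4)])
```

On paper the single-core uplift works out to roughly 45 to 50% for all three SKUs, which is how a 2.0GHz quad-core can hope to keep up with a higher-clocked dual-core in lightly threaded work.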

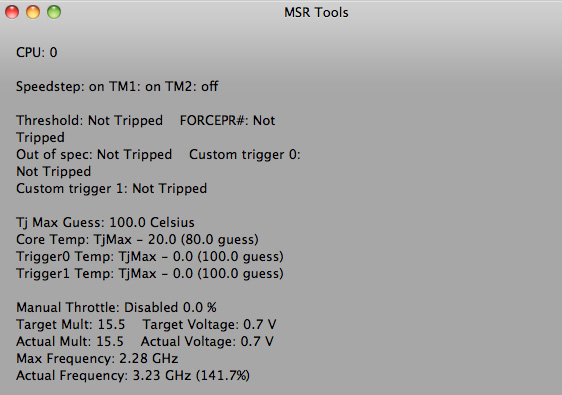

Given Apple's history of throttling CPUs without telling anyone, I was extra paranoid about finding out whether any funny business was going on with the new MacBook Pros. Unfortunately, there are very few ways of measuring turbo frequency under OS X. Ryan Smith pointed me in the direction of MSR Tools which, although not perfect, does give you an indication of what clock speed your CPU is running at.

Max single core turbo on the 2.3GHz quad-core

With only a single thread active, the 2.3GHz quad-core seemed to peak at ~3.1-3.3GHz. This is slightly lower than what I saw under Windows (3.3-3.4GHz pretty consistently running the Cinebench R10 1CPU test). Apple does handle power management differently under OS X; however, I'm not entirely sure that the MSR Tools application is reporting frequency as quickly as Intel's utilities under Windows 7.
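We don't know exactly how MSR Tools computes its numbers. One common way to estimate average frequency from model-specific registers is the ratio of two counters: IA32_MPERF (MSR 0xE7) ticks at a fixed reference rate tied to the base clock, and IA32_APERF (MSR 0xE8) ticks at the actual core clock, both only while the core is in C0. The effective frequency over an interval is then base × ΔAPERF / ΔMPERF. Because this is an average over the sampling window, a longer window smooths out short turbo spikes, which is one possible (unconfirmed) reason for lower readings. The counter values below are invented to show the arithmetic; reading real MSRs requires kernel-level access.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerfSample:
    aperf: int  # IA32_APERF (0xE8): counts at the actual core clock while in C0
    mperf: int  # IA32_MPERF (0xE7): counts at the fixed reference clock while in C0


def effective_ghz(before: PerfSample, after: PerfSample, base_ghz: float) -> float:
    """Average frequency while the core was active between two samples."""
    d_aperf = after.aperf - before.aperf
    d_mperf = after.mperf - before.mperf
    if d_mperf <= 0:
        raise ValueError("no C0 time in the interval (or the counters wrapped)")
    return base_ghz * d_aperf / d_mperf


# A 100 ms window with a 2.3GHz reference clock: MPERF advances by 230 million.
steady = effective_ghz(PerfSample(0, 0), PerfSample(330_000_000, 230_000_000), 2.3)
# Same window, but only the first half at 3.4GHz and the second half at 2.3GHz.
mixed = effective_ghz(PerfSample(0, 0), PerfSample(170_000_000 + 115_000_000, 230_000_000), 2.3)
print(f"{steady:.2f} GHz, {mixed:.2f} GHz")
```

This prints `3.30 GHz, 2.85 GHz`: a 50 ms burst at 3.4GHz shows up as 2.85GHz once it is averaged over the whole window.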

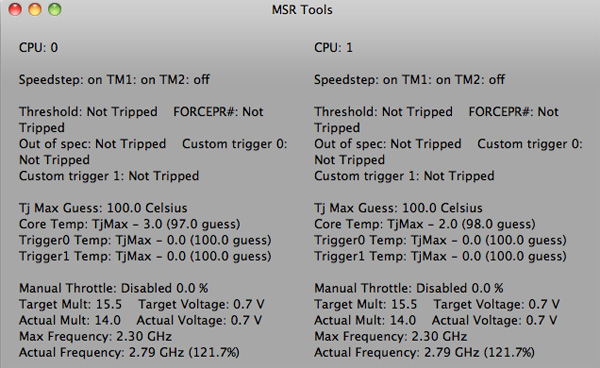

Max QC turbo on the 2.3GHz quad-core

With all cores active (once again, Cinebench R10 XCPU), the max I saw on the 2.3GHz part was 2.8GHz. Under Windows, running the same test, I saw similar results at 2.9GHz.

Max QC turbo on the 2.3GHz quad-core under Windows 7

I'm pretty confident that Apple isn't doing anything dramatic with clock speeds on these new MacBook Pros. Mac OS X may be more aggressive with power management than Windows, but max clock speed remains untouched.

Mac OS X 10.6.6 vs. Windows 7 Performance

| 15-inch 2011 MBP, 2.0GHz quad-core | Single-Threaded | Multi-Threaded |
|---|---|---|
| Mac OS X 10.6.6 | 4060 | 15249 |
| Windows 7 x64 | 4530 | 16931 |

Note that even though the operating frequencies are similar under OS X and Windows 7, Cinebench performance is still higher under Windows 7. It looks like there's still some software optimization that needs to be done under OS X.
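To put a number on the gap, the Windows 7 advantage can be worked out as a percentage of the OS X score from the table above (scores copied from the table; the helper name is illustrative).

```python
# Cinebench scores for the 2.0GHz quad-core 15-inch MBP, from the table above.
scores = {
    "Single-Threaded": {"Mac OS X 10.6.6": 4060, "Windows 7 x64": 4530},
    "Multi-Threaded": {"Mac OS X 10.6.6": 15249, "Windows 7 x64": 16931},
}


def windows_lead_pct(test: str) -> float:
    """How much higher the Windows 7 score is, as a percentage of the OS X score."""
    osx = scores[test]["Mac OS X 10.6.6"]
    win = scores[test]["Windows 7 x64"]
    return 100 * (win - osx) / osx


for test in scores:
    print(f"{test}: Windows 7 ahead by {windows_lead_pct(test):.1f}%")
```

Windows 7 leads by about 11.6% single-threaded and 11.0% multi-threaded, so the gap is roughly the same whether one core or all four are busy.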

198 Comments


IntelUser2000 - Friday, March 11, 2011

You don't know that. Testing multiple systems over the years should have shown that performance differences between manufacturers with identical hardware are minimal (<5%), meaning it's not Apple's fault. GPU bound doesn't mean the rest of the system would have zero effect. It's not like the 2820QM is 50% faster; it's 20-30% faster. That difference could have come from:

1. Quad core vs. Dual core

2. HD3000 in the 2820QM has max clock of 1.3GHz, vs. 1.2GHz in the 2410M

3. Clock speed of the 2820QM is quite a bit higher in gaming scenarios

4. LLC is shared between CPU and Graphics. 2410M has less than half the LLC of 2820QM

5. Even at 20 fps, CPU has some impact, we're not talking 3-5 fps here

It's quite reasonable to assume that 3DMark03 and 05, which are explicitly single-threaded, benefit from everything except #1, and frame rates there should be high enough for the CPU to affect them. In games with bigger gaps, quad core would contribute to the difference, even if only by as little as 5%.

JarredWalton - Friday, March 11, 2011

I should have another dual-core SNB setup shortly, with HD 3000, so we'll be able to see how that does.

Anyway, we're not really focusing on 3DMarks, because they're not games. Looking just at the games, there's a larger than expected gap in performance. Remember: we've been largely GPU limited with something like the GeForce G 310M using Core i3-330UM ULV vs. Core i3-370. That's a doubling of clock speed on the CPU, and the result (http://www.anandtech.com/bench/Product/236?vs=244) was a 2 to 14% difference, with the exception of the heavily CPU-dependent StarCraft II (which is 155% faster with the U35Jc).

Or if you want a significantly faster GPU comparison (i.e. so the onus is on the CPU), look at the Alienware M11x R2 vs. the ASUS N82JV (http://www.anandtech.com/bench/Product/246?vs=257). Again, that's a much faster GPU than the HD 3000, and we're only seeing a 10 to 25% difference in performance for low detail gaming. At medium detail, the difference between the two platforms drops to just 0 to 15% (but it grows to 28% in BFBC2 for some reason).

Compare that spread to the 15 to 33% difference between the i5-2415M and the i7-2820QM at low detail, and perhaps even more telling is that the difference remains large at medium settings (16.7 to 44% for the i7-2820QM, except in SC2, where the tables turn and the i5-2415M leads by 37%). The theoretical clock speed difference on the IGP is only 8.3%, and we're seeing two to four times that much; the average is around 22% faster, give or take. StarCraft II is a prime example of the funkiness we're talking about: the 2820QM is 31% faster at low, but the 2415M is 37% faster at medium? That's not right....

Whatever is going on, I can say this much: it's not just about the CPU performance potential. I'll wager that when I test the dual-core SNB Windows notebook (an ASUS model), the gaming scores will be a lot closer than what the MBP13 managed. We'll see....

IntelUser2000 - Saturday, March 19, 2011

I forgot one more thing. The quad-core Sandy Bridge mobile chips support DDR3-1600, and the dual-core ones only up to DDR3-1333.

mczak - Thursday, March 10, 2011

The memory bus width of the HD6490M and HD6750M is listed as 128bit/256bit. That's quite wrong; it should be 64bit/128bit.

Btw, I'm wondering what the impact on battery life is for the HD6490M? It isn't THAT much faster than the HD3000, so I'm wondering if at least the power consumption isn't that much higher either...

Anand Lal Shimpi - Thursday, March 10, 2011

Thanks for the correction :)

Take care,

Anand

gstrickler - Thursday, March 10, 2011

Anand, I would like to see heat and maximum power consumption figures for the 15" with the dGPU disabled using gfxCardStatus. For those of us who aren't gamers and don't need OpenCL, the dGPU is basically just a waste of power (and therefore battery life) and a waste of money. Those should be fairly quick tests.

Nickel020 - Thursday, March 10, 2011

The 2010 MacBooks with the Nvidia GPUs and Optimus switch back to the iGPU even if you don't close the application, right? Is this a general ATI issue that's also like this on Windows notebooks, or is it only like this on OS X? This seems like quite an unnecessary hassle, actually having to manage it yourself. Not as bad as having to log off like on my late 2008 MacBook Pro, but still inconvenient.

tipoo - Thursday, March 10, 2011

Huh? You don't have to manage it yourself.

Nickel020 - Friday, March 11, 2011

Well, if you don't want to use the dGPU when it's not necessary, you kind of have to manage it yourself. If I don't want the dGPU to power up while web browsing and make the MacBook hotter, I have to manually switch to the iGPU with gfxCardStatus. I mean, I can leave it set to iGPU, but then I'll still have to manually switch to the dGPU when I need it. So I will have to manage it manually.

I would really have liked to see more of a comparison with how the GPU switching works in the 2010 MacBook Pros. I mean, I can look it up, but I can find most of the info in the review somewhere else too; the point of the review is kind of to have all the info in one place, without having to look stuff up.

tajmahal42 - Friday, March 11, 2011

I think switching behaviour should be exactly the same for the 2010 and 2011 MacBook Pros, as the switching is done by Mac OS, not by the hardware.

Apparently, Chrome doesn't properly close down Flash when it doesn't need it anymore or something, so the OS thinks it should still be using the dGPU.