Synology DS211+ SMB NAS Review

by Ganesh T S on February 28, 2011 3:50 AM EST

Posted in: IT Computing, NAS, Synology

For the purpose of NAS reviews, we have set up a dedicated testbed with the configuration listed below. The NAS is directly connected to the testbed (using as many Cat 5E cables as there are ports on the NAS) without a switch or router in between. This minimizes the number of external factors that might influence the performance of the system.

| NAS Benchmarking Testbed Setup | |
| --- | --- |
| Processor | Intel i5-680 CPU - 3.60GHz, 4MB Cache |
| Motherboard | Asus P7H55D-M EVO |
| OS Hard Drive | Seagate Barracuda XT 2 TB |
| Secondary Drive | Kingston SSDNow 128GB |
| Memory | G.SKILL ECO Series 2GB (1 x 2GB) SDRAM DDR3 1333 (PC3 10666) F3-10666CL7D-4GBECO CAS 7-7-7-21 |
| PCI-E Slot | Quad-Port GbE Intel ESA-I340 |
| Optical Drives | ASUS 8X Blu-ray Drive Model BC-08B1ST |
| Case | Antec VERIS Fusion Remote Max |
| Power Supply | Antec TruePower New TP-550 550W |
| Operating System | Windows 7 Ultimate x64 / Ubuntu 10.04 |

In addition to the Realtek GbE NIC on board the Asus P7H55D-M EVO, four more GbE ports are enabled on the system, thanks to the Intel ESA-I340 quad-port GbE Ethernet server adapter. The card has a PCI-E x4 connector and was plugged into the PCI-E x16 slot on the Asus motherboard.

Since the Synology DS211+ has only 1 GbE port, there was no need to team the four ports in the ESA-I340.

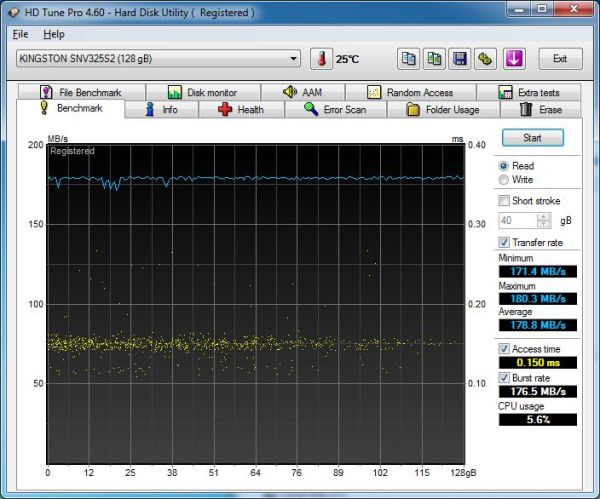

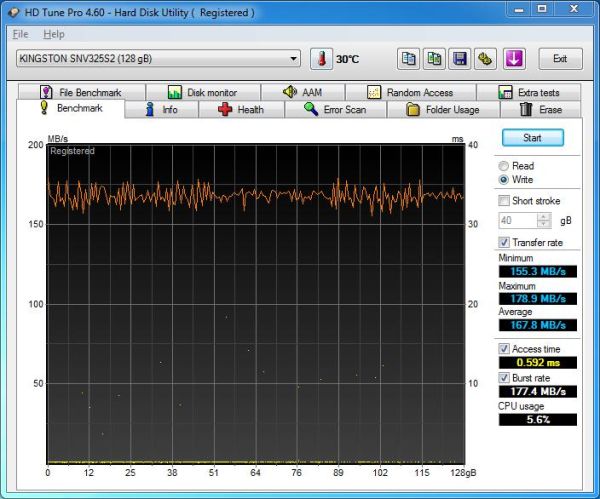

Intel NASPT is used to benchmark the NAS device. To ensure that the hard disk transfer rate is not a bottleneck, NASPT is run from the secondary drive in the testbed (the Kingston SSD). With average read and write speeds of 178.8 MB/s and 167.8 MB/s, the SSD is unlikely to limit the performance of a NAS with a single GbE link. However, a link-aggregated NAS could be affected. Fortunately, as we will see in the next few sections, this wasn't the case for the 5big storage server.

All file copy tests were also performed using the SSD. The file copy test consists of transferring a 10.7 GB Blu-Ray folder structure between the NAS and the testbed using the robocopy command in mirror mode (on Windows) and the rsync -av command (on Linux).
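As a rough sketch of how such a copy test could be scripted, the snippet below builds the two commands named above and times one run. The paths, the helper names, and the idea of deriving MB/s from wall-clock time are assumptions for illustration, not the exact procedure used in the review.

```python
import subprocess
import sys
import time


def build_copy_command(src: str, dst: str, platform: str = sys.platform) -> list[str]:
    """Build the copy command named in the text for the given platform."""
    if platform.startswith("win"):
        # /MIR mirrors the source directory tree onto the destination.
        return ["robocopy", src, dst, "/MIR"]
    # -a is archive mode (recursive, preserves attributes), -v is verbose.
    return ["rsync", "-av", src, dst]


def timed_copy_mbps(src: str, dst: str, size_mb: float) -> float:
    """Run one copy and return the average transfer rate in MB/s.

    `size_mb` is supplied by the caller (e.g. the size of the test folder).
    check=False because robocopy uses non-zero exit codes for success too.
    """
    start = time.perf_counter()
    subprocess.run(build_copy_command(src, dst), check=False)
    elapsed = time.perf_counter() - start
    return size_mb / elapsed


if __name__ == "__main__":
    # Hypothetical paths; replace with the real share and local SSD folder.
    print(build_copy_command("/mnt/nas/bluray", "/mnt/ssd/bluray", "linux"))
```

Note that with rsync, whether the source path ends in a trailing slash changes whether the folder itself or only its contents are copied, so the paths should be chosen consistently across iterations.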

There are three important sharing protocols we investigated in the course of our evaluation of the DS211+. In the next few sections, you will find NASPT and/or robocopy/rsync benchmarking results for Samba, NFS and iSCSI sharing protocols. Each section also has a small description of how the shares were set up on the NAS. The NASPT benchmarks were run in Batch mode thrice, giving us 15 distinct data points. The average of these 15 values is recorded in the graphs presented in the following sections. The robocopy and rsync benchmarks were run thrice, and the average transfer rate of the three iterations is presented alongside the NASPT benchmarks.
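The averaging described above is simple to express in code. The sketch below assumes that each Batch-mode run yields five values for a given test, so three runs give 15 data points; the numbers are placeholders for illustration only, not measurements from this review.

```python
from statistics import mean


def average_result(batches: list[list[float]]) -> float:
    """Average every data point across all batch runs for one test."""
    points = [value for batch in batches for value in batch]
    return mean(points)


# Placeholder values only (MB/s); NOT results from the review.
# Assumption: three Batch-mode runs, five values per run.
example_batches = [
    [10.0, 20.0, 30.0, 40.0, 50.0],
    [10.0, 20.0, 30.0, 40.0, 50.0],
    [10.0, 20.0, 30.0, 40.0, 50.0],
]

if __name__ == "__main__":
    total_points = sum(len(batch) for batch in example_batches)
    print(f"{total_points} data points, average = {average_result(example_batches)}")
```

The same helper works for the robocopy and rsync runs by passing three single-value lists, one per iteration.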

49 Comments


ganeshts - Monday, February 28, 2011 - link

Let me talk to Synology PR and get back to you.

ganeshts - Monday, February 28, 2011 - link

Here is the official response from Synology:

Pandamonium - Monday, February 28, 2011 - link

Thanks Ganesh- I'm glad that you were able to get an actual response from them.

Customers have posted far more eloquent explanations for why they want scheduled/automated SMART testing than what I posted here and on Synology's forums, and neither they nor I have gotten satisfactory responses.

I'll be the first to say that I am quite happy with my Synology DS209; but I'll also be the first to tell you that this lack of customer service is driving me towards QNAP when it comes time to replace my NAS.

I fail to see the problem with scheduling a quick SMART test every week and a long test every 3 months or so. Synology's canned response to your inquiry seems pretty lackluster. Off-site backups are primarily used to protect against catastrophic failures from theft/disasters/etc. They are not used to protect against bit rot. AFAIK, only checksummed filesystems like ZFS and SMART testing help protect against bit rot. I'm sure Amazon S3 has something like this in place. While licensing issues may understandably prevent Synology from implementing ZFS, I see no reason stopping them from giving users the option to schedule regular SMART tests.

Maybe I've got a fundamental misunderstanding about data integrity and backup. But I'm a customer with a concern shared by other customers, and this concern could be remedied with a relatively easy fix. Synology's responses really rub me the wrong way, so I plan to put my money where my mouth is and go with a different manufacturer in the future.

Penti - Monday, February 28, 2011 - link

As always with NAS boxes, they simply use the Linux kernel's soft RAID-1 or some other (their own) software RAID scheme, which of course doesn't protect against anything other than pure drive failures.

If you want to use ZFS you could simply build your own box with OpenSolaris.

Makers like Synology could also build their boxes with FreeBSD if they like ZFS support.

Penti - Monday, February 28, 2011 - link

Of course they could also use ZFS on FUSE. So nothing is really stopping them.

LTG - Monday, February 28, 2011 - link

I think one thing to consider when deciding between buying a NAS unit vs. a traditional PC is that you won't get transcoding with NAS.

For example, want to have all your DVDs/Blu-Rays/Home Movies stored and watch them on any mobile device, tablet or remote location automagically? Not gonna happen without creating/managing special copies for every possible device.

Secondly, I think Drobo and QNAP should be mentioned here even if they haven't been reviewed yet. The reason is most people usually end up wanting to compare Synology/Drobo/QNAP. So without a review it's still helpful to know the most discussed players.

Finally anyone considering a multi-disk setup should look very carefully at the RAID technology used.

Forget what you know about RAID 0/1/5/6. For home users, the newer quasi-RAID technologies are much more practical. Some let you remove a single disk and view its files on a separate system, which is totally impossible with traditional enterprise RAID (other than straight mirroring). Some let you upgrade 1 disk at a time; I believe the Synology requires you to upgrade 2 at a time to see a storage bump.

So I'm not making a recommendation here, but trying to point out some of the bigger differences that may drive you one way or another.

ganeshts - Monday, February 28, 2011 - link

Some members in the ReadyNAS lineup have transcoding using Orb. We will hopefully be evaluating it shortly.

I did mention Drobo and QNAP as competitors :) Of course, with no unit to compare with, there is nothing much I can write about them.

Your comments add a lot of information that I should probably have put in my review itself :) Thanks for the same, and hope the readers take note!

ganeshts - Monday, February 28, 2011 - link

One note about Synology requiring upgrades of 2 drives at a time:

With SHR, this is not the case. You can upgrade one by one. In fact, if 2 are pulled, the volume could fail.

donb123 - Monday, February 28, 2011 - link

Review is OK...a little light for anandtech.com IMO.

I have been using a NAS in my home network for at least 10 years. For many, many years I built my own and either used a CentOS Linux OS or later on the FreeNAS OS. Performance varied a lot depending on my hardware selections and power consumption was always a concern with our seemingly endless rise in energy costs. Also, managing failed drives etc didn't always go as planned. I never lost data but I did have a few very stressful moments with my DIY NAS's.

Enter Synology. I decided to go the Synology way for two main reasons:

1/ Very low power footprint

2/ At the moment in time when I purchased, there were no other comparable 4-Bay NAS's for the price point. All were significantly more. (I initially wanted a Qnap device)

I own the DS-410j configured with 4 WD green 1 TB drives. I am completely happy with this device.

What I do with it:

- SAMBA for 3 Windows 7 boxes using shared data and also backing up to the NAS device daily (using the Synology backup client) and also services my Android tablet.

- Mac support for an iMac and a Mac Mini also accessing the shared data

- NFS support supporting my Linux VMs as well as my Beagleboard also accessing the shared data

- Use download station regularly

- ssh service enabled

Now for the above features it works absolutely great! No complaints. Performance is pretty much exactly as advertised for this product averaging 28 MB/s R/W regardless of protocol.

Also of note, the DS-410j was on and running since the day I purchased it until we had an extended power failure 2 weeks ago. I was probably a month short of a year from the moment I powered it on for the first time. I don't know of many consumer devices that can make that claim as far as uptime and reliability is concerned.

It's worth noting that I've played around with many of the other features on the Synology device but they don't really fit into my workflow so I can't really offer an opinion beyond, "yeah it seems to work".

Aikouka - Monday, February 28, 2011 - link

Hi Ganesh,

My thought when looking at a NAS like this is, "how much of a performance hit do I take versus a full-blown PC?" I think this really comes into question when the diskless cost is ~$400, which you could build a decent file serving machine for only a little bit more (depending on need to buy an OS or whatever else you can scrounge up). I mean, I could look at the numbers and say, "well, I usually see 90-100MB/s transfers to my C2D-based server", but that's a poor analogy, because I don't use the same HDD as the review unit.

So, what might be helpful to see is how fast the drives are by default, and how fast they would be when shared (SMB) in a computer with a fast processor.