NVIDIA's Project Kal-El: Quad-Core A9s Coming to Smartphones/Tablets This Year

by Anand Lal Shimpi on February 15, 2011 9:05 PM EST

The Architecture

Kal-El looks a lot like NVIDIA's Tegra 2, just with more cores and some pinpointed redesigns. The architecture will first ship in a quad-core, 40nm version. These aren't NVIDIA-designed CPU cores, but rather four ARM Cortex A9s running at some presently unannounced clock speed. I asked NVIDIA if both the tablet and smartphone versions of Kal-El will feature four cores. The plan is for that to be the case, at least initially. NVIDIA expects high-end smartphone manufacturers to want to integrate four cores this year and going into 2012.

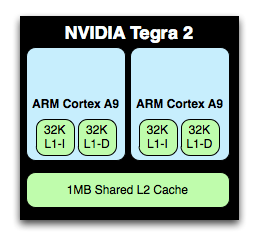

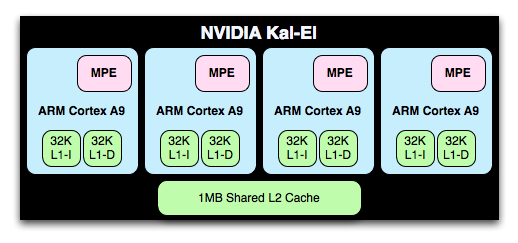

The CPU cores themselves have changed a little bit. Today NVIDIA's Tegra 2 features two Cortex A9s behind a shared 1MB L2 cache. Kal-El will use four Cortex A9s behind the same shared 1MB L2 cache.

NVIDIA also chose not to implement ARM's Media Processing Engine (MPE) with NEON support in Tegra 2. It has since added MPE to each of the cores in Kal-El. You may remember that MPE/NEON support is one of the primary differences between TI's OMAP 4 and NVIDIA's Tegra 2. As of Kal-El, it's no longer a difference.

Surprisingly enough, the memory controller is still a single 32-bit wide LPDDR2 controller. NVIDIA believes that even a pair of Cortex A9s cannot fully saturate a single 32-bit LPDDR2 channel and anything wider is a waste of power at this point. NVIDIA also said that effective/usable memory bandwidth will nearly double with Kal-El vs. Tegra 2. Some of this doubling in bandwidth will come from faster LPDDR2 (perhaps up to 1066?) while the rest will come as a result of some changes NVIDIA made to the memory controller itself.
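To put the memory speed bump in perspective, here is a rough back-of-the-envelope sketch of theoretical peak bandwidth for a single 32-bit channel. The 1066 figure is the speculative speed mentioned above; the Tegra 2 baseline of LPDDR2-600 is my assumption and is not confirmed anywhere in this article. The function name is purely illustrative. Peak numbers say nothing about effective bandwidth, which is what NVIDIA's claim is actually about.

```python
def peak_bandwidth_gbps(bus_width_bits: int, transfer_rate_mtps: float) -> float:
    """Theoretical peak bandwidth in GB/s (decimal) for one memory channel."""
    bytes_per_transfer = bus_width_bits / 8
    # bytes per transfer * million transfers per second = MB/s; divide by 1000 for GB/s
    return bytes_per_transfer * transfer_rate_mtps / 1000

BUS_WIDTH_BITS = 32   # single 32-bit LPDDR2 channel, per the article
TEGRA2_RATE = 600     # ASSUMPTION: LPDDR2-600 baseline, not stated in the article
KAL_EL_RATE = 1066    # the article's speculative "perhaps up to 1066"

tegra2_peak = peak_bandwidth_gbps(BUS_WIDTH_BITS, TEGRA2_RATE)
kal_el_peak = peak_bandwidth_gbps(BUS_WIDTH_BITS, KAL_EL_RATE)
ratio = kal_el_peak / tegra2_peak

print(f"Tegra 2 peak (assumed): {tegra2_peak:.2f} GB/s")
print(f"Kal-El peak (speculative): {kal_el_peak:.2f} GB/s")
print(f"Ratio: {ratio:.2f}x")
```

Under those assumptions the faster memory alone would account for a bit under 1.8x on paper, which would leave the remainder of the "nearly double" claim to the memory controller changes.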

Power consumption is an important aspect of Kal-El: given the same workload, it is expected to require no more power than Tegra 2. Whether it's two fully loaded cores or one fully loaded and one partially loaded core, NVIDIA believes there isn't a single situation where equal work is being done and Kal-El isn't lower power than Tegra 2. Obviously if you tax all four cores you'll likely have worse battery life than with a dual-core Tegra 2 platform, but given equal work you should see battery life that's equal to if not better than a Tegra 2 device of similar specs. Given that we're still talking about a 40nm chip, this is a pretty big claim. NVIDIA told me that some of the power savings in Kal-El are simply due to lessons learned in the design of Tegra 2, while some of it is due to some pretty significant architectural discoveries. I couldn't get any more information than that.

Kal-El vs. Tegra 2 running 3D game content today at 2 - 2.5x the frame rate

On the GPU side, Kal-El implements a larger/faster version of the ULP GeForce GPU used in Tegra 2. It's still not a unified shader architecture, but NVIDIA has upped the core count from 8 to 12. Note that in Tegra 2 the 8 cores refer to 4 vertex shaders and 4 pixel shaders. It's not clear how the 12 will be divided in Kal-El but it may not be an equal scaling to 6+6.

The GPU clock will also be increased, although it's unclear to what level.
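As a rough sanity check on the 2 - 2.5x frame rate figure quoted above, the sketch below works out how much speedup is left to explain once the jump from 8 to 12 cores is accounted for. It assumes frame rate scales linearly with GPU core count, which is a simplification of mine and not something NVIDIA has stated; CPU performance, memory bandwidth and the vertex/pixel split all play a role in practice. The helper name is illustrative only.

```python
# Figures taken from the article
TEGRA2_GPU_CORES = 8
KAL_EL_GPU_CORES = 12
CLAIMED_SPEEDUP_RANGE = (2.0, 2.5)  # demoed frame-rate gain over Tegra 2

def residual_speedup(claimed: float, old_cores: int, new_cores: int) -> float:
    """Speedup left unexplained after linear core scaling (ASSUMPTION: linear scaling)."""
    core_scaling = new_cores / old_cores
    return claimed / core_scaling

core_scaling = KAL_EL_GPU_CORES / TEGRA2_GPU_CORES
low, high = (
    residual_speedup(s, TEGRA2_GPU_CORES, KAL_EL_GPU_CORES)
    for s in CLAIMED_SPEEDUP_RANGE
)

print(f"Core count scaling: {core_scaling:.2f}x")
print(f"Remaining factor needed: {low:.2f}x to {high:.2f}x")
```

In other words, the extra cores alone would not get Kal-El to the demoed numbers under this simple model; the remainder would have to come from the higher GPU clock, the CPU side, memory bandwidth, or some combination of the three.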

The combination of the larger GPU and the four, larger A9 cores (MPE has a not insignificant impact on die area) results in an obviously larger SoC. NVIDIA measures the package of the AP30 (the smartphone version of Kal-El) at 14mm x 14mm. The die size is somewhere around 80mm^2, up from ~49mm^2 with Tegra 2.
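For a sense of scale, the quick calculation below compares the two die sizes and the AP30 package footprint using only the approximate figures quoted above. The variable names are my own, and since both die sizes are estimates the results should be read as ballpark values.

```python
# Approximate figures from the article
TEGRA2_DIE_MM2 = 49.0
KAL_EL_DIE_MM2 = 80.0
AP30_PACKAGE_SIDE_MM = 14.0  # 14mm x 14mm package

# How much larger the Kal-El die is relative to Tegra 2
die_growth = KAL_EL_DIE_MM2 / TEGRA2_DIE_MM2

# Package footprint and the share of it covered by the die
package_area_mm2 = AP30_PACKAGE_SIDE_MM ** 2
die_share_of_package = KAL_EL_DIE_MM2 / package_area_mm2

print(f"Die growth: {die_growth:.2f}x ({(die_growth - 1) * 100:.0f}% larger)")
print(f"Package area: {package_area_mm2:.0f} mm^2")
print(f"Die share of package footprint: {die_share_of_package * 100:.0f}%")
```

That works out to a die roughly 63% larger than Tegra 2's for twice the CPU cores, MPE on every core, and 50% more GPU cores, all on the same 40nm process.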

76 Comments

Lucian Armasu - Wednesday, February 16, 2011

They're working on a customized ARM chip for servers, called Project Denver, which will be released in 2013. It's mostly focused on performance so they will make it as powerful as an ARM chip can get around that time. It will also be targeted at PCs.

Enzo Matrix - Wednesday, February 16, 2011

Superman < Batman < Wolverine < Iron Man?

Kinda doing things in reverse order aren't you, Nvidia?

ltcommanderdata - Wednesday, February 16, 2011

It's interesting that nVidia's Coremark slide uses a recent GCC 4.4.1 build on their Tegras but uses a much older GCC 3.4.4 build on the Core 2 Duo. I can't help but think nVidia is trying to find a bad Core 2 Duo score in order to make their own CPU look more impressive.

Lucian Armasu - Wednesday, February 16, 2011

I think you missed their point. They're trying to say that ARM chips are quickly catching up in performance with desktop/laptop chips.

jordanp - Wednesday, February 16, 2011

On Tegra Roadmap chart.. looks like that curve is leveling at 100 on STARK... reaching the limit boundary of 11nm node?!!

Lucian Armasu - Wednesday, February 16, 2011

I think they'll reach 14 nm in 2014. IBM made a partnership with ARM to make 14 nm chips.

beginner99 - Wednesday, February 16, 2011

... in a mobile phone? Most people only have 2 in their main PC. Agreed, those 2 are much more powerful, but still, it will end up the same as on the PC side: tons of cores and only niche software using them.

I still have a very old "phone only" mobile. Yesterday I had some time to kill and looked at a few smartphones, and saw exactly what someone described here. They all seemed laggy and choppy, except the iPhone. I'm anything but an Apple fanboy (more like the opposite), but if I were a consumer with zero knowledge, just from playing with the phones I would choose to buy an iPhone.

jasperjones - Wednesday, February 16, 2011

Did anyone look at the fine print in the chart with the Coremark benchmark?

Not only do they use more aggressive compiler flags for their products than for the T7200, they also use a much more recent version of gcc. At the very least, they are comparing apples and oranges. Actually, I'm more inclined to call it cheating...

Visual - Wednesday, February 16, 2011

This looks like Moore's Law on steroids.

I guess (hope?) it is technically possible, simply because for a while now we've had the reverse thing - way slower progress than Moore's Law predicts. So for a brief period we may be able to do some catch-up sprints like this.

I don't believe it will last long though.

Another question is if it is economically feasible though. What impact will this have on the prices of the previous generation? If the competition can not catch up, wouldn't nVidia decide to simply hold on to the new one instead of releasing it, trying to milk the old one as much as they can, just like all other greedy corporations in similar industries?

And finally, will consumers find an application for that performance? It not being x86 compatible, apps will have to be made specifically for it and that will take time.

I for one can not imagine using a non-x86 machine yet. I need it to be able to run all my favorite games, i.e. WoW, EVE Online, Civ 5 or whatever. I'd love a lightweight 10-12 inch tablet that can run those on max graphics, with a wireless keyboard and touch pad for the ones that aren't well suited for tablet input. But having the same raw power without x86 compatibility will be relatively useless, for now. I guess developers may start launching cool new games for that platform too, or even release the old ones on the new platform where it makes sense (Civ 5 would make a very nice match to tablets, for example), but I doubt it will happen quickly enough.

Harry Lloyd - Wednesday, February 16, 2011

I'm sick of smartphones. All I see here are news about smartphones, just like last year all I saw were news about SSDs.

Doesn't anything else happen in this industry?