LG Optimus 2X & NVIDIA Tegra 2 Review: The First Dual-Core Smartphone

by Brian Klug & Anand Lal Shimpi on February 7, 2011 3:53 AM EST. Posted in: Smartphones, Tegra 2, LG, Optimus 2X, Mobile, NVIDIA

Battery Life

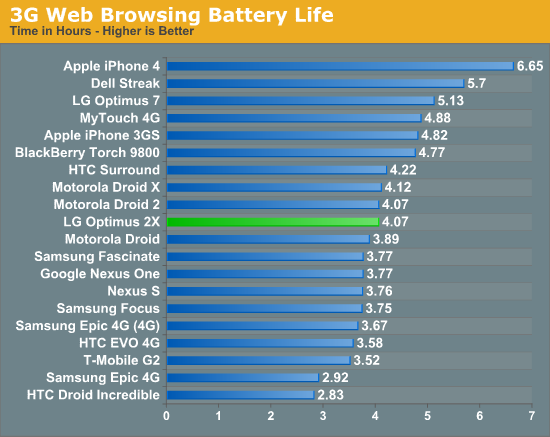

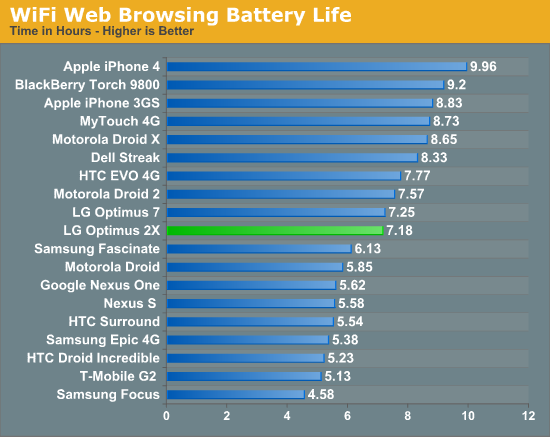

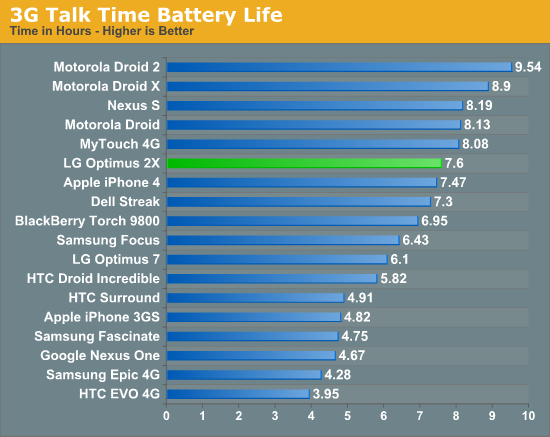

There’s been a lot of speculation about whether dual-core phones would be battery hogs. It turns out that voltage scaling does win, and P=V^2/R does indeed apply here. The 2X delivers middle-of-the-road 3G and WiFi web browsing battery life numbers, and above-average 3G talk time numbers.
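To make the P=V^2/R point concrete, here is a minimal sketch of how power changes with voltage when resistance is held fixed. The voltages are hypothetical round numbers chosen for illustration, not measured Tegra 2 operating points, and treating R as constant is a simplification.

```python
def relative_power(v_new: float, v_ref: float) -> float:
    """Return power at v_new as a fraction of power at v_ref.

    Uses P = V^2 / R with the same R in both cases, so R cancels
    and only the squared voltage ratio remains.
    """
    if v_ref <= 0 or v_new < 0:
        raise ValueError("voltages must be non-negative and v_ref must be positive")
    return (v_new / v_ref) ** 2


if __name__ == "__main__":
    # Hypothetical supply voltages, for illustration only.
    for volts in (1.0, 0.9, 0.8):
        print(f"{volts:.1f} V -> {relative_power(volts, 1.0):.0%} of reference power")
```

Under these assumptions, dropping the voltage by 20% cuts power to 64% of the reference, which is why finishing work at a lower voltage can offset the cost of a second core.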

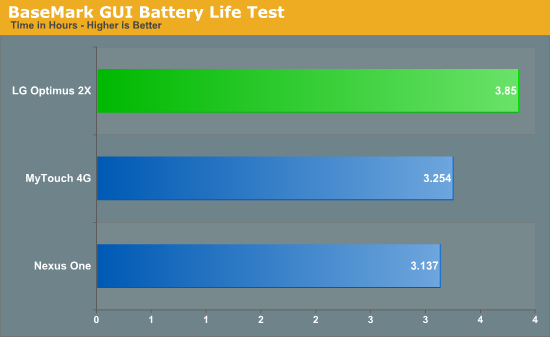

We’ve also got another new test. Gaming battery life under constant load is a usage scenario we haven’t really been able to measure in the past, but are now able to. Our BaseMark GUI benchmark includes a battery test which runs the composition feature test endlessly, simultaneously taxing the CPU and GPU. It’s an aggressive test that nearly emulates constant 3D gaming. For this test we leave WiFi and cellular interfaces enabled, Bluetooth off, and display brightness at 50%.

I’m a bit disappointed we don’t have more numbers to compare to, but the 2X does come out on top in this category. Anand and I both tested the Galaxy S devices we have kicking around (an Epic 4G and Fascinate), but both continually locked up, crashed, or displayed graphical corruption while running the test. Our constant 3D gaming test looks like a winner for sifting out platform instability.

Conclusion

The 2X is somewhat of a dichotomy. On one side, you've got moderately aesthetically pleasing hardware, class-leading performance from Tegra 2 that doesn't come at the expense of battery life, and a bunch of notable and useful extras like HDMI mirroring. On the other, you've got some serious experience-killing instability issues (which need to be fixed by launch), a relatively mundane baseband launching at a time when we're on the cusp of 4G, and perhaps most notably a host of even better-specced Tegra 2 based smartphones with more RAM, better screens, and 4G slated to arrive very soon.

It's really frustrating for me to have to make all those qualifications before talking about how much I like the 2X, because the 2X is without a doubt the best Android phone I've used to date. Android is finally fast enough that for a lot of the tasks I care about (especially web browsing) it's appreciably faster than the iPhone 4. At the same time, battery life doesn't take a gigantic hit, and the IPS display is awesome. The software instability issues (which are admittedly pre-launch bugs) are the only thing holding me back from using it 24/7. How the 2X fares when Gingerbread gets ported to it will also make a huge difference, one we're going to cover when that time comes.

The other part of the story is Tegra 2.

Google clearly chose NVIDIA’s Tegra 2 as the Honeycomb platform of choice for a reason. It is a well executed piece of hardware that beat both Qualcomm and TI’s dual-core solutions to market. The original Tegra was severely underpowered in the CPU department, which NVIDIA promptly fixed with Tegra 2. The pair of Cortex A9s in the AP20H make it the fastest general purpose SoC in an Android phone today.

NVIDIA’s GeForce ULV performance also looks pretty good. In GLBenchmark 2.0 NVIDIA manages to hold a 20% performance advantage over the PowerVR SGX 540, our previous king of the hill.

Power efficiency also appears to be competitive both in our GPU and general use battery life tests. Our initial concern about Tegra 2 battery life was unnecessary.

It’s the rest of the Tegra 2 SoC that we’re not completely sure about. Video encode quality on the LG Optimus 2X isn’t very good, and despite NVIDIA’s beefy ISP we’re only able to capture stills at 6 fps with the camera set to a 2MP resolution. NVIDIA tells us that the Tegra 2 SoC is fully capable of a faster capture rate for stills and that LG simply chose 2MP as its burst mode resolution. For comparison, other phones with burst modes capture at either 1 MP or VGA. That said, unfortunately for NVIDIA, a significant technological advantage is almost meaningless if no one takes advantage of it. It'll be interesting to see if the other Tegra 2 phones coming will enable full resolution burst capture.

Then there’s the forthcoming competition. TI’s OMAP 4 will add the missing MPE to the Cortex A9s and feed them via a wider memory bus. Qualcomm’s QSD8660 will retain its NEON performance advantages and perhaps make up for its architectural deficits with a higher clock speed, at least initially. Let’s not forget that the QSD8660 will bring a new GPU core to the table as well (Adreno 220).

Tegra 2 is a great first step for NVIDIA; however, the competition is not only experienced but also well equipped. It will be months before we can truly crown an overall winner, and then another year before we get to do this all over again with Qualcomm’s MSM8960 and TI's OMAP 5. How well NVIDIA executes Tegra 3 and 4 will determine how strong of a competitor it will be in the SoC space.

Between the performance we’re seeing and the design wins (both announced and rumored) NVIDIA is off to a great start. I will say that I’m pleasantly surprised.

75 Comments


rpmrush - Monday, February 7, 2011 - link

Solid review, but please at least use spell check. I'm not a grammar or typo freak, but there were way too many simple typos that spell check wouldn't even let you get by with. At least have someone proofread it before you publish to the public.

zowie - Tuesday, February 8, 2011 - link

who can create a new type battery, who will be the richest man in the world

uhuznaa - Tuesday, February 8, 2011 - link

Yeah, and until then those who manage to come up with some decent power management will be the richest...

Seriously, every improvement on the battery front almost always just leads to devices drawing more power. It's somewhat ironic that last year's iPhone still leads the pack when it comes to battery life. Power management (that is: don't draw more power than absolutely necessary by throttling or shutting down components that aren't needed or aren't fully needed in a given moment) is hard and boring design work nobody seems to care for. And with devices and software getting replaced with the next iteration every few months this is even understandable, it's just not worth the effort, especially when nobody seems to care and benchmarks are so much more important to the crowd.

DanNeely - Tuesday, February 8, 2011 - link

How is it typically played back: Cropped, or vertically resampled?

Wilco1 - Tuesday, February 8, 2011 - link

Tegra 3 has 4 1.5GHz Cortex-A9's according to a leaked slide.

That was a great article! A few minor corrections: The ARM11 VFP is fully pipelined (so it can beat the A8 on FP performance). Like the A8, Scorpion is 2-way in-order, not limited out-of-order. In-order cores issue instructions in-order but may complete them out-of-order. On the other hand, OoO cores use register renaming to issue instructions out-of-order but complete them in-order.

Note none of the micro benchmarks used emits Neon instructions. JIT compilers don't have enough time to generate high quality code, let alone autovectorize! For proper benchmarking you will need to run native code compiled with a quality compiler (not GCC - it is still far behind the state of the art on ARM, especially Thumb-2).

metafor - Tuesday, February 8, 2011 - link

I would argue with that definition of OoO. A design does not need register renaming in order to issue any arbitrary instruction OoO. It's simply a trade-off of whether to centralize hazard tracking on register accesses or on retirement.

PWRuser - Tuesday, February 8, 2011 - link

Excellent review. Please, in your future reviews don't stop including gems like this one:

"Generally while browsing I can feel when Flash ads are really slowing a page down - the 2X almost never felt that way."

That's what matters! Including hands on observations along with a full volley of synthetic benchmarks.

This review comes as close as humanly possible to portraying a handset's ability to readers without the said readers trying it out.

Your attention to detail puts other reviews to shame. Keep up the good work.

sarge78 - Tuesday, February 8, 2011 - link

Don't forget about ST-Ericsson's U8500 A9. They could be a major player in 2011/2012 with potential design wins from Nokia and Sony Ericsson.

warisz00r - Tuesday, February 8, 2011 - link

What equipment do you use to test the phone's audio quality with?

phut- - Tuesday, February 8, 2011 - link

"NVIDIA tells us that the Tegra 2 SoC is fully capable of a faster capture rate for stills and that LG simply chose 2MP as its burst mode resolution. For comparison, other phones with burst modes capture at either 1 MP or VGA. That said, unfortunately for NVIDIA, a significant technological advantage is almost meaningless if no one takes advantage of it. It'll be interesting to see if the other Tegra 2 phones coming will enable full resolution burst capture."

LG have probably made this decision based on the sensitivity of the invariably minuscule sensor they will have used. Having 6 frames of 12mp is pointless if they are 12 incomprehensible megapixels due to the lacklustre sensitivity of the pixels in their chosen part.

The kind of sensor you find delivering a meaningful burst in something like a 5D mk2 is enormous and power hungry, in comparison to an operating environment such as a phone.