LG Optimus 2X & NVIDIA Tegra 2 Review: The First Dual-Core Smartphone

by Brian Klug & Anand Lal Shimpi on February 7, 2011 3:53 AM EST

Posted in: Smartphones, Tegra 2, LG, Optimus 2X, Mobile, NVIDIA

NVIDIA's Tegra 2

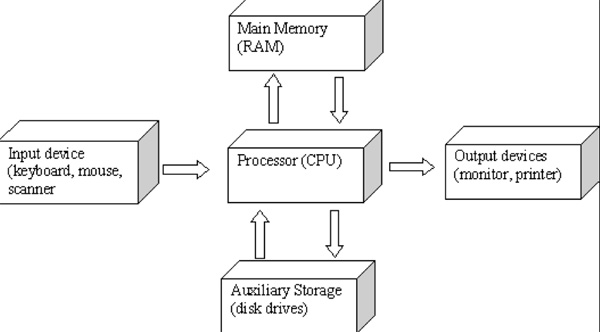

The old definition of a computer is a microprocessor, memory, storage, input and output. The modern definition expands it a bit to include coprocessors (e.g. GPUs) as well as multiple types and levels of memory and storage. The types of input and output devices have changed as well. In smartphones, keyboards are virtual and output is integrated into the phone. Although the definition of a computer has evolved, it’s not all that different.

In the old days, nearly every one of these parts of a computer was a discrete component. You had a CPU, memory, a hard drive and a video processor (before they were GPUs), all independent of one another. Go back prior to the 486 and you’ll even find that your CPU had to rely on an external FPU for all floating point math.

Moore’s Law has given us bigger, faster, better in all of these areas. Intel’s 486 was the first million transistor x86 processor, introduced in 1989. Sandy Bridge, introduced in January, is just shy of a billion transistors. Sixteen megabytes of memory was a big deal 20 years ago; today high end PCs ship with several gigabytes of memory. Another side effect of Moore’s Law, however, is integration.

At first integration brought things like the FPU and a second level cache onto the processor die. Over time more components were brought in to the fold. AMD integrated the memory controller in its Athlon 64 processor. Intel brought graphics on-package with Clarkdale/Arrandale, and later on-die with Sandy Bridge. AMD is doing the same with Fusion.

In the smartphone space, the integration is even more pronounced. With physical space and power as major constraints, smartphone chip makers have been forced to further trade performance for integration. The level of integration is so high within a smartphone that you almost never hear about what CPU a phone uses, but rather what Application Processor it uses, otherwise known as an SoC (System on Chip or System on a Chip).

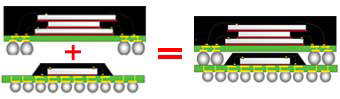

Package on Package (DRAM on top, SoC on bottom)—source: statschippack.com

Integrate a CPU, GPU, memory controller, video decoder, audio decoder, video encoder (sometimes), camera processor, system memory and maybe even a modem onto a single chip and you’ve got something that can only be described as a System on a Chip. It’s a single physical chip that integrates nearly all of the functions of the entire computer. Nearly all of the aforementioned components are on a single piece of silicon, with the exception of any integrated memory. To save board real estate and enable smaller form factors, it’s not uncommon to stack DRAM on top of the SoC package instead of beside it. The SoC in a PoP (Package on Package) stack has contacts on its top surface that line up with the balls on the DRAM for power and signaling. PoP stacks work because the SoC underneath doesn’t dissipate much heat and thus doesn’t mind being insulated by some DRAM up top.

Examples of SoCs are Qualcomm’s Snapdragon, Texas Instruments’ OMAP 4 and of course the subject at hand, NVIDIA’s Tegra 2. Although this is a review of LG’s Optimus 2X, it’s just as much a review of NVIDIA’s Tegra 2.

Tegra 2: The SoC

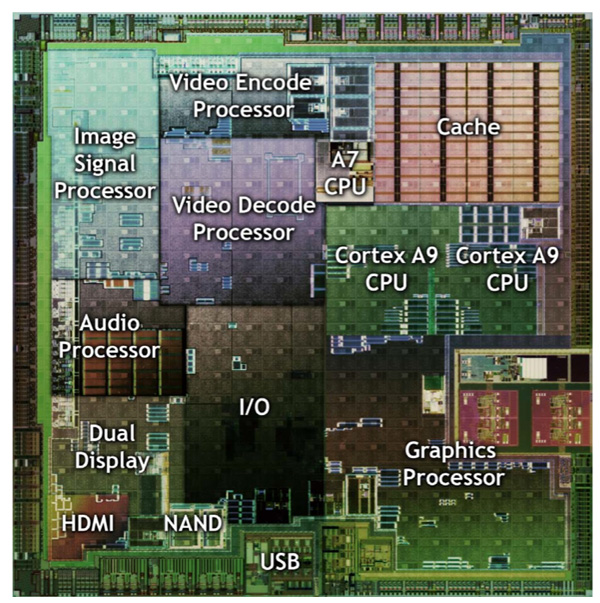

As a System on a Chip, NVIDIA’s Tegra 2 has a number of processors that make up the whole. Having its roots in the PC industry and being used to briefing inquisitive press, NVIDIA put together the handy die shot above, which shows the various parts of the Tegra 2.

With the exception of two blocks, the Tegra 2 die is entirely NVIDIA’s own creation. The ARM7 and Cortex A9 blocks are IP licensed from ARM. The entire die is manufactured at TSMC on a 40nm process, similar to NVIDIA’s high end GPUs. While its GPUs are built on TSMC’s 40nm “G” process, Tegra 2 is a slightly different beast.

Most foundries offer two variants of the same manufacturing process: general purpose (G) and low power (LP). The feature size is the same, but the transistors are tuned differently. TSMC’s general purpose process transistors are very fast and low voltage; unfortunately, they have very high leakage current. Transistors, as you may know, are electrical on/off switches. Apply a voltage to them and current flows, remove the voltage and current stops flowing. In reality, current sometimes flows when you don’t want it to, and this is referred to as leakage. High leakage is a side effect of the nice high performance transistors we need to run the fastest processors.

TSMC’s 40nm LP process uses lower voltage, slower switching transistors (they can’t run at as high a clock speed) that, as a result, have very low leakage characteristics. The lower your leakage and the lower your voltage, the lower your overall power is.
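To put rough numbers on that last sentence, here is a minimal sketch using the textbook CMOS approximation: switching power scales with capacitance × voltage² × frequency, and leakage power with voltage × leakage current. The function name and every capacitance, voltage, frequency and leakage value below are made up purely to show the trend; none of them are TSMC or NVIDIA figures.

```python
def total_power_watts(c_eff_farads, voltage, freq_hz, leakage_amps):
    """Textbook approximation: switching power plus leakage power."""
    dynamic = c_eff_farads * voltage ** 2 * freq_hz  # C * V^2 * f
    static = voltage * leakage_amps                  # V * I_leak
    return dynamic + static

# Hypothetical operating points, chosen only to illustrate the trend.
g_like = total_power_watts(1e-9, 1.0, 1.0e9, 0.20)   # fast, leaky transistors
lp_like = total_power_watts(1e-9, 0.9, 0.3e9, 0.02)  # slower, low leakage

print(f"G-like block:  {g_like:.3f} W")
print(f"LP-like block: {lp_like:.3f} W")
```

Because voltage is squared in the switching term, even a small voltage drop pays off disproportionately, and the leakage term is paid whether or not the block is doing useful work.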

For an SoC, you’d assume that the whole thing would be built at the 40nm LP process. See those two Cortex A9 cores in the diagram above? Remember how they’re licensed from ARM? Those things are pretty high performance: they run at 1GHz. Everything else in the chip runs at 300MHz or less for the most part. On top of that, the libraries ARM provides are optimized for TSMC’s 40nm G process.

As a result, Tegra 2 uses a mixture of G and LP 40nm transistors on two separate voltage rails. The two Cortex A9 cores and the L2 cache are built using TSMC’s 40nm G process transistors, while the rest of the SoC (including the GPU) is built using 40nm LP transistors. The pair of A9s can be powered down together although not independently. We’ll get to a deeper discussion of the ARM Cortex A9 shortly.

The ISP (Image Signal Processor), located in the upper left of the die shot above, is responsible for taking the output from a camera (still/video) controller and processing it into a usable state. The Tegra 2 ISP is capable of processing images at a rate of 80 megapixels per second. The ISP supports two cameras, a 12 megapixel primary and a 5 megapixel secondary. The math works out to a maximum of 6 frames per second captured from the primary sensor at 12MP. LG uses the Tegra 2’s ISP to enable a 6 fps burst mode, as you’ll see later on in the review. Unfortunately, it only works at a 2MP resolution. NVIDIA’s strong ISP looks better on paper than in practice, it seems.
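The frame rate math above is just a division, sketched here for reference. The helper name `max_fps` is ours, and the calculation assumes the ISP is the only bottleneck (it ignores sensor readout, memory bandwidth and storage speed), so treat the results as paper ceilings rather than measured numbers.

```python
ISP_THROUGHPUT_MP_PER_S = 80  # ISP processing rate quoted in the text

def max_fps(sensor_megapixels, throughput=ISP_THROUGHPUT_MP_PER_S):
    """Whole frames per second the ISP could process at a given resolution."""
    return int(throughput // sensor_megapixels)

print(max_fps(12))  # primary sensor at full resolution
print(max_fps(2))   # resolution used by LG's burst mode
```

At 12MP the ceiling comes out to 6 whole frames per second, matching the figure above. At 2MP the same ISP would in principle have headroom for far more than the 6 fps LG exposes, which is the gap between paper and practice.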

The video encode processor does real time H.264 video encoding, which is used when capturing video from the camera sensor. NVIDIA doesn’t provide any specs on what the encoder is capable of, but we’re not too impressed with the quality of its output (again, you’ll see more later).

The audio processor is dedicated hardware for audio encoding and decoding. This is used for audio capture as well as audio playback. Even MP3 playback is done on the dedicated audio processor so the Cortex A9s remain powered down to maximize battery life.

The Tegra 2 video decoder can fully accelerate the decode of 1080p H.264 Baseline profile videos at up to 20Mbps. The specs mostly look good on paper as you won’t be playing anything near that bitrate on your smartphone. NVIDIA includes dual-display capabilities with Tegra 2. The SoC can output the frame buffer to the smartphone’s display as well as an external display via HDMI out.
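For a sense of scale on that 20Mbps decode ceiling, here is a quick back-of-the-envelope sketch. The helper name and the two hour running time are our own assumptions for illustration, and audio and container overhead are ignored.

```python
def stream_size_gb(bitrate_mbps, minutes):
    """Size in gigabytes (10^9 bytes) of a stream at a constant bitrate."""
    bits = bitrate_mbps * 1_000_000 * minutes * 60
    return bits / 8 / 1_000_000_000

# A hypothetical two hour movie encoded at the decoder's 20Mbps ceiling.
print(f"{stream_size_gb(20, 120):.1f} GB")
```

A video stream of that size would struggle to fit on most of today's phones at all, which is why the bitrate limit is unlikely to matter in practice.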

The ARM7 nestled in between the video decoder and the L2 cache is used as a system management core. It handles communication between blocks, power management and general SoC management. The remaining blocks (outside of I/O) are the two CPU cores and the GPU. Those both require a lot more detail.

75 Comments

djgandy - Monday, February 7, 2011 - link

It'll be interesting to see how all the other SoC's perform with DDR2.

DanNeely - Monday, February 7, 2011 - link

Where can I find more information on this?

Anand Lal Shimpi - Monday, February 7, 2011 - link

Here's a link to the immediate mode vs. TBDR discussion in our old Kyro II review: http://www.anandtech.com/show/735/2

Take care,

Anand

silverblue - Monday, February 7, 2011 - link

I'm not sure I agree with the wording in this article about TBDR. The reason PowerVR didn't need to slap DDR RAM onto the Kyro II cards is because they simply didn't need it thanks to the reduction of traffic that comes from deferred rendering. The unknown element at the time was hardware T&L because it simply wasn't available and was thought to be impossible, however as this is yet again being performed on-die, wouldn't that also result in a marked reduction in traffic? Might need some clarification on this one.

I've never seen it confirmed that the Adreno GPU performs TBDR; some clarification would be appreciated on this one as well! :)

Onto the option for changing fonts... my vendor-agnostic Galaxy S has such a feature called "Font style" under the Display settings, allowing you to choose the "Default font", "Choco cooky", "Cool jazz" and "Rosemary", with the option of getting more online.

Exophase - Monday, February 7, 2011 - link

Qualcomm bought out AMD's mobile GPUs and hence the Adreno 200 was a rebrand of AMD z430. Here's a little more background on the tiling nature of z430:

(since apparently I can't post a link without being flagged as spam, just google for this: gdc2008_ribble_maurice_TileBasedGpus.pdf - it's the first hit)

silverblue - Monday, February 7, 2011 - link

Interesting... I'd like to see the differences between their approach and that of Imagination Technologies.

silverblue - Monday, February 7, 2011 - link

Thanks by the way... answered a lot of questions. :)

AndroidFan - Monday, February 7, 2011 - link

should be 300mhz (=600/2)

Zaitsev - Monday, February 7, 2011 - link

Is the camera really too thick to fit the width of the phone? I heard of many Evo users who have cracked the glass covering. While I haven't had this problem, it makes me wonder if it's really necessary in the first place.

Thanks

MeSh1 - Monday, February 7, 2011 - link

I can't wait until you can wirelessly shoot your phone's display to your TV a la Intel WiDi. This HDMI out is cool, but the cable kind of kills it. With wireless display your phone becomes a game controller :) or a remote when shooting movies to your TV. Plus how cool would it be if your phone could fetch movies from your home network and you shot the playback to your TV. The Sony NGP should have implemented this. Ah well.