AMD’s GTX 560 Ti Counter-Offensive: Radeon HD 6950 1GB & XFX’s Radeon HD 6870 Black Edition

by Ryan Smith on January 25, 2011 12:20 PM EST

After being AnandTech’s senior GPU editor for nearly a year and a half and through more late-night GPU launches than I care to count, there’s a very specific pattern I’ve picked up on: the GPU market may be competitive, but it’s the $200-$300 range that really brings out the insanity. I’m not sure if it’s the volume, the profit margins, or just the desire to be seen as affordable, but AMD and NVIDIA seem to pull out all the stops to one-up each other whenever either side plans on launching a new video card in this price range.

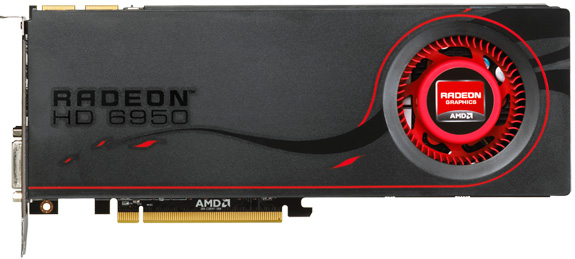

Today was originally supposed to be about the newly released GeForce GTX 560 Ti – NVIDIA’s new GF114-based $250 video card. Much as was the case with the launch of AMD’s Radeon HD 6800 series, however, AMD is itching to spoil NVIDIA’s launch with their own push. Furthermore, they intend to do so on two fronts: directly above the GTX 560 Ti at $259 is the Radeon HD 6950 1GB, and somewhere below it is one of many factory overclocked Radeon HD 6870 cards, in our case an XFX Radeon HD 6870 Black Edition. The Radeon HD 6950 1GB is effectively the GTX 560 Ti’s direct competition, while the overclocked 6870 serves as the price spoiler.

It wasn’t always meant to be this way, and indeed 5 days ago things were quite different. But before we get too far, let’s quickly discuss today’s cards.

| | AMD Radeon HD 6970 | AMD Radeon HD 6950 2GB | AMD Radeon HD 6950 1GB | XFX Radeon HD 6870 Black | AMD Radeon HD 6870 |
| --- | --- | --- | --- | --- | --- |
| Stream Processors | 1536 | 1408 | 1408 | 1120 | 1120 |
| Texture Units | 96 | 88 | 88 | 56 | 56 |
| ROPs | 32 | 32 | 32 | 32 | 32 |
| Core Clock | 880MHz | 800MHz | 800MHz | 940MHz | 900MHz |
| Memory Clock | 1.375GHz (5.5GHz effective) GDDR5 | 1.25GHz (5.0GHz effective) GDDR5 | 1.25GHz (5.0GHz effective) GDDR5 | 1.15GHz (4.6GHz effective) GDDR5 | 1.05GHz (4.2GHz effective) GDDR5 |
| Memory Bus Width | 256-bit | 256-bit | 256-bit | 256-bit | 256-bit |
| Frame Buffer | 2GB | 2GB | 1GB | 1GB | 1GB |
| FP64 | 1/4 | 1/4 | 1/4 | N/A | N/A |
| Transistor Count | 2.64B | 2.64B | 2.64B | 1.7B | 1.7B |
| Manufacturing Process | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |
| Price Point | $369 | ~$279 | $259 | $229 | ~$219 |

Back when the Radeon HD 6950 launched, AMD told us to expect 1GB cards sometime in the near future as a value option. The 6900 series uses fairly new 2Gb GDDR5 chips, which are still in short supply and cost more than the very common and readily available 1Gb variety. It’s not a massive difference once you add up the bill of materials on a video card, but if the card manufacturers can save $10 on RAM then that’s $10 they can mark down a card and snag that many more sales. Furthermore we’re not quite to the point where 2GB is essential in the sub-$300 market – where 2560x1600 monitors are rare – so the performance penalty isn’t a major concern. As a result it was only a matter of time until 1GB 6900 series cards hit the market, to fill in the gap until 2Gb GDDR5 came down in price.

The day has finally come for the Radeon HD 6950 1GB, and today is that day. Truth be told it’s actually a bit anticlimactic – the reference 6950 1GB is virtually identical to the reference 6950 2GB. It’s the same PCB attached to the same vapor chamber cooler with the same power and heat characteristics. There is one and only one difference: the 1GB card uses 8 1Gb GDDR5 chips, and the 2GB card uses 8 2Gb GDDR5 chips. Everything else is equal, and indeed when the 6950 is not RAM limited even the performance is equal.
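The capacity difference falls straight out of the chip density. As a quick sanity check, here is a minimal sketch in Python; the function name `frame_buffer_gb` is our own illustration, not anything from AMD:

```python
def frame_buffer_gb(chip_count: int, chip_density_gbit: int) -> float:
    """Total frame buffer in gigabytes, given the number of GDDR5 chips
    and the density of each chip in gigabits (Gb)."""
    bits_per_byte = 8
    return chip_count * chip_density_gbit / bits_per_byte


# Chip counts and densities are the ones quoted for the two reference cards.
print(frame_buffer_gb(8, 1))  # 6950 1GB: eight 1Gb chips
print(frame_buffer_gb(8, 2))  # 6950 2GB: eight 2Gb chips
```

Eight 1Gb chips come to 1GB and eight 2Gb chips come to 2GB, which is the entire difference between the two cards.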

The second card we’re taking a quick look at is the XFX Radeon HD 6870 Black Edition, the obligatory factory overclocked Radeon HD 6870. Utilizing XFX’s open-air custom HSF, it’s clocked at 940MHz core and 1150MHz (4.6Gbps data rate) memory, representing a 40MHz (4%) core overclock and a 100MHz (9%) memory overclock. Truth be told it’s not much of an overclock, and if it weren’t for the cooler it wouldn’t be a very remarkable card as far as factory overclocking goes, and for that reason it’s almost a footnote today. But it wasn’t meant to be, and that’s where our story begins.
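For anyone who wants to check the overclock figures, the arithmetic can be sketched as below. The stock clocks (900MHz core, 1050MHz memory) come from the spec table; the helper name `overclock_percent` is just an illustrative choice:

```python
def overclock_percent(stock_mhz: float, overclocked_mhz: float) -> float:
    """Overclock expressed as a percentage of the stock clock."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100


# Reference Radeon HD 6870 clocks vs. the XFX Black Edition clocks.
core = overclock_percent(900, 940)
memory = overclock_percent(1050, 1150)

print(f"core overclock:   {core:.1f}%")
print(f"memory overclock: {memory:.1f}%")
```

This works out to roughly 4.4% on the core and 9.5% on the memory, in line with the whole-number figures quoted above.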

111 Comments


Stuka87 - Tuesday, January 25, 2011 - link

Why does every single ATI card get the EXACT same FPS in Civilization 5? Did the company that made it get paid off by nVidia to put a frame cap on ATI cards or what? It makes zero sense that 2 year old ATI cards would get the same FPS as just released ATI cards.

So... What gives?

Ryan Smith - Tuesday, January 25, 2011 - link

Under normal circumstances it's CPU limited; apparently at the driver level. Just recently NVIDIA managed to resolve that issue in their drivers, which is why their Civ 5 scores have shot up while AMD's have not.

Stuka87 - Wednesday, January 26, 2011 - link

Hmm, interesting.

WhatsTheDifference - Wednesday, January 26, 2011 - link

please forgive me, Ryan, as I know this sounds abrasive and a little too off-topic from your response here. but speaking of 'score', what's the absolutely mind-boggling delay with including the 4890. quite frankly, if even one of any performance test over the relevant life of the 285 had not the 285 in it, nvidia would burn this site to the ground right after your nvidia-supporting readers did some leveling of their own. so honestly, for every 2xx series that finds its way into a benchmark, where in the world is ATI's top pick of that generation? site regs have posted about anandtech's nvidia-leaning ways, say, a few times, and this particularly clear evidence rather deserves an explanation - in my opinion. or, my spotty attendance contributed to missing at least one fascinating story.

7Enigma - Wednesday, January 26, 2011 - link

4870 has been in most of the tests (see launch article) which can be used as a slightly lower-performing card. Use that as a guide.

erple2 - Thursday, January 27, 2011 - link

I agree with 7Enigma - the difference between the 4870 and 4890 is no longer significant enough to warrant inclusion in the comparison. I seem to recall that the performance of the 4890 was between the 4870 (shown) and the nvidia 285 (also shown). Couple that with the relative trouncing (30%+ increase in performance) that the newer cards deliver to the GTX285, plus that the frame rates of the GTX285 aren't that high (+30% of 20 fps is 26 fps, which is still "too slow to make it relevant"), I'm not sure that it becomes relevant anymore.

cheese319 - Tuesday, January 25, 2011 - link

It should be easily possible to unlock the card through manually editing the bios to unlock the extra shaders (like what is possible with the 6950 2gb) which is a lot safer for the ram as well.

mapesdhs - Tuesday, January 25, 2011 - link

Talk about not being consistent. :\ Here we have a review that includes an oc'd 6870, yet there was the huge row before about the 460 FTW. Make your minds up guys, either include oc'd cards or don't. Personally I would definitely like to see the FTW included since I'd love to know how it compares to the new 560 Ti, given the FTW often beats a stock 470. Please add FTW results if you can.

Re those who've commented on certain tests reaching a plateau in some cases: may I ask, why are you running the i7 at such a low 3.33GHz speed?? I keep seeing this more and more these days, review articles on all sorts of sites using i7 CPU speeds well below 4, whereas just about everyone posting in forums on a wide variety of sites is using speeds of at least 4. So what gives? Please don't use less than 4, otherwise it's far too easy for some tests to become CPU-bound. You're reviewing gfx cards after all, so surely one would want any CPU bottleneck to be as low as possible?

Any 920 should be able to reach 4+ with ease. And besides, who on earth would buy a costly Rampage II Extreme and then only run the CPU at 3.33? Heck, my mbd cost 70% less than a R/II/Extreme yet it would easily outperform your review setup for these tests (I use an i7 870 @ 4270).

For a large collection of benchmark results, Google "SGI Ian", click the first result, follow the "SGI Tech Advice" link and then select, "PC Benchmarks, Advice and Information" (pity one can't post URLs here now, but I understand the rationale re spam).

Lastly, it's sad to admit but I agree with the poster who commented on the use of 1920x1200 res. The 1080 height is horrible for general use, but the industry has blatantly moved away from producing displays with 1200 height. I wanted to buy a 1920x1200 display but it was damn hard to find companies selling any models at this res at all, never mind models which were actually worth buying (I bought an HP LP2475W HIPS 24" in the end). So I'm curious, what model of display are you using for the tests? (the hw summary doesn't say, indeed your reviews never say what display is being used - please do!). Kinda seems like you're still able to find 1200-height screens, so if you've any info on recommended models I'm sure readers would be interested to know.

Thanks!!

Ian.

Ryan Smith - Tuesday, January 25, 2011 - link

Our 920 is a C0/C1 model, not D0. D0s can indeed hit near 4GHz quite regularly, but for our 920 that is not the case. As for our motherboard, it was chosen first for benchmark purposes - the R2E has some features like OC Profiles that are extremely useful for this line of work.

As for the monitor we're using, it's a Samsung 305T.

mapesdhs - Wednesday, January 26, 2011 - link

Ryan writes:

> Our 920 is a C0/C1 model, not D0. D0s can indeed hit near 4GHz quite regularly, but for our 920 that is not the case. ...

Oh!! Time to upgrade. ;) Stick in a 950, should work much better. My point still stands though, some tests could easily be CPU-bottlenecking when things get tough.

> As for our motherboard, it was chosen first for benchmark purposes - the R2E has some features like OC Profiles that are extremely useful for this line of work.

So does mine. :D No need to spend oodles to have such features. Yours would of course be better though for 3-way/4-way SLI/CF, but that's a minority audience IMO.

> As for the monitor we're using, it's a Samsung 305T.

Sweeeet! I see it received good reviews, eg. on the 'trusted' of the 'reviews' dot com.

Hmm, do you know if it supports sync-on-green? Just curious.

Ian.