The Brazos Review: AMD's E-350 Supplants ION for mini-ITX

by Anand Lal Shimpi on January 27, 2011 6:08 PM EST

Blu-ray & Flash Video Acceleration

Compatibility is obviously a strong point of Brazos. So long as what you’re decoding can be hardware accelerated, you’re pretty much in the clear. But what about CPU utilization while playing back these hardware accelerated formats? The CPU still needs to feed data to the GPU, so how many cycles are used in the process?

I fired up a few H.264/x264 tests to kick off the investigation. First we have a 1080p H.264 Blu-ray rip of Quantum of Solace, averaging around 15Mbps:

Quantum of Solace 1080p H.264 CPU Utilization (1:00 - 1:30)

| Platform | Min | Avg | Max |
| --- | --- | --- | --- |
| AMD E-350 | 22.7% | 27.8% | 35.3% |
| Intel Atom D510 | Fail | | |
| Zotac ION | 14.6% | 17.2% | 20.1% |

A standard Atom platform can’t decode the video, but ION manages a 17% average CPU utilization with an Atom 330. Remember that the Atom 330 is a dual-core CPU with SMT (four threads total), so you’re actually getting 17.2% of four hardware threads used, but 34.4% of two cores. The E-350 by comparison has 27.8% of its two cores in use during this test. Both systems have more than enough horsepower left over to do other things.
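The thread-versus-core conversion above is simple arithmetic, and a small sketch makes it explicit. The helper name `per_core_utilization` is hypothetical, and the calculation assumes each SMT thread counts as an equal share of a core, which is a simplification rather than a measured property of the Atom 330.

```python
def per_core_utilization(overall_pct, hardware_threads, physical_cores):
    """Rescale a utilization figure reported across all hardware threads
    into a percentage of the physical cores.

    Assumption: every hardware thread is weighted equally, so the
    conversion is a plain ratio of thread count to core count.
    """
    return overall_pct * hardware_threads / physical_cores


# Averages taken from the Quantum of Solace table above.
ion_avg = per_core_utilization(17.2, hardware_threads=4, physical_cores=2)
e350_avg = per_core_utilization(27.8, hardware_threads=2, physical_cores=2)

print(f"Zotac ION (Atom 330): {ion_avg:.1f}% of two cores")
print(f"AMD E-350: {e350_avg:.1f}% of two cores")
```

Under that equal-weight assumption the ION figure doubles while the E-350 figure is unchanged, since the E-350 has no SMT.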

Next up is an actual Blu-ray disc (Casino Royale) but stripped of its DRM using AnyDVD HD and played back from a folder on the SSD:

Casino Royale BD (no DRM) CPU Utilization (49:00 - 49:30)

| Platform | Min | Avg | Max |
| --- | --- | --- | --- |
| AMD E-350 | 28.1% | 33.0% | 38.4% |
| Intel Atom D510 | Fail | | |
| Zotac ION | 17.7% | 22.5% | 27.5% |

Average CPU utilization here for the E-350 was 33% of two cores.

Finally, I ran a full-blown Blu-ray disc (Star Trek) bitstreaming TrueHD on the E-350 to give you an idea of what worst-case CPU utilization would be like on Brazos:

Star Trek BD CPU Utilization (2:30 - 3:30)

| Platform | Min | Avg | Max |
| --- | --- | --- | --- |
| AMD E-350 | 29.0% | 40.1% | 57.1% |

At 40% CPU utilization on average there’s enough headroom to do something else while watching a high bitrate 1080p movie on Brazos. The GPU-based video decode acceleration does work; however, the limits here are clear. Brazos isn’t going to fare well as a platform you use for heavy multitasking while decoding video, even if the video decode is hardware accelerated. As a value/entry-level platform I doubt this needs much more explanation.

Now let’s talk about Flash.

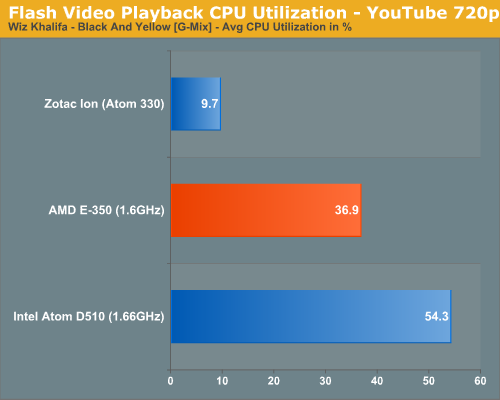

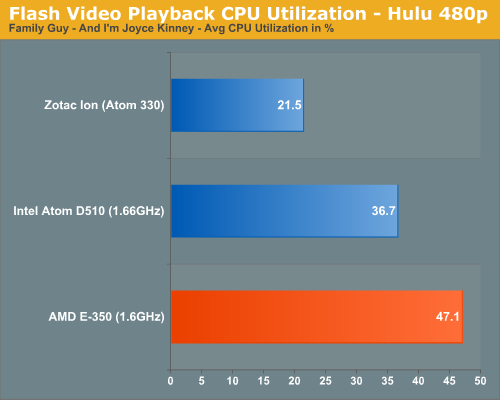

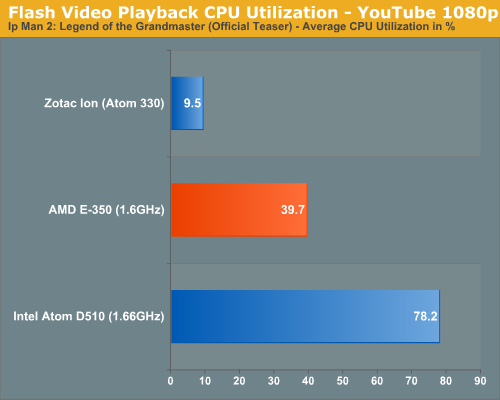

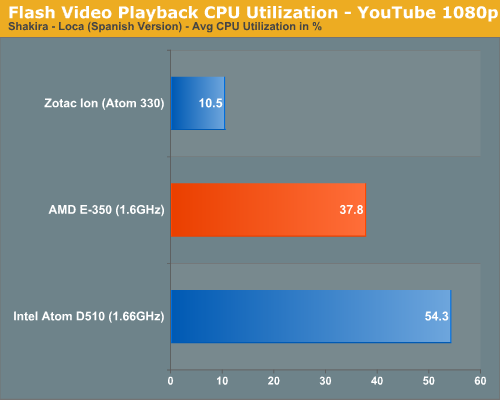

I ran through a number of Flash video tests at both YouTube and Hulu ranging in resolution from 480p all the way up to 1080p. I used Flash 10.1, 10.2 beta as well as an unreleased version of 10.2 beta provided by AMD.

For the most part GPU accelerated Flash video does work well. Performance under both YouTube and Hulu was flawless, provided that I wasn’t watching 1080p content. Watching 1080p content in YouTube wasn’t entirely smooth on Brazos, despite posting very reasonable CPU utilization numbers.

I took my concerns to AMD and was told that this was a known issue with Brazos and Flash 10.1 and that 10.2 should alleviate the issue. AMD then supplied me with an unreleased version of Flash 10.2 to allow me to verify its claims. While 1080p playback improved with AMD’s 10.2 beta, it wasn’t perfect (although it was very close). AMD wouldn’t tell me the cause of the problem, but it’s currently working on it with Adobe. At the end of the day I don’t believe it’s a dealbreaker, but early Brazos adopters should expect some stuttering when playing back 1080p YouTube videos. Note that 720p and lower resolution videos were perfectly smooth on Brazos.

176 Comments


ocre - Friday, January 28, 2011 - link

hahaha, You need to educate yourself a little more on the advantages/disadvantages of both the x86 and ARM architectures before you go around posting ignorant statements like these. If we assumed your statement is true: "E-350 has roughly 5 times the performance of Tegra 2" it would still be nothing to brag on because the same Tegra 2 uses 7-9 times less energy than the E-350. This makes a mere 5 times performance a joke.

But on top of that, there is no good reasonable way to conclude where these architectures rate towards one another. The software is radically different. There is not enough to go on for any useful conclusion and what little we do have is subject to very special cases where there is SW that in the end results in similar obtained data. But the entire process is different and while we know the x86 is pretty well optimized, any ARM based counter SW is in its beginnings. X86 has the luxury of optimizations, ARM will only get better when SW engineers find better ways to utilize the system. But this is considering an actual case where there is SW similar enough to even reasonably attempt to measure x86 vs ARM performance. For the most part the SW of each architecture is so radically different. ARM is extremely good at some things and not so good at others and this doesn't mean it can't be good, in some cases the SW just hasn't matured yet. All this matters little cause at the end of the day, everyone can see the exact opposite of your statement: the superiority of ARM is just unmatched by x86 when it comes to performance per watt. This is undisputed and x86 has a long long way to go to catch up with ARM (and many think it never will). All ARM has to do is actually build 18W CPUs, they will be 3 to 4 times more powerful than the E-350 based on the current ARM architecture.

jollyjugg - Friday, January 28, 2011 - link

Well your last statement is simply laughable. Because all we have from ARM right now is chips which run in smartphones and tablets. ARM based processors are not exactly known for their performance as much as their power. While it is true that performance/watt is great in ARM today, it is also true, as any modern microprocessor designer would say, that as you go up the performance chain (by throwing more hardware and getting more GHz and other tweaks like wider issue and out of order etc), the mileage you get out of the machine improves and so does the drop in performance/watt. There are no free lunches here. I doubt a good performance ARM architecture will be a whole lot different than an x86 architecture. Lower power is an Achilles heel for x86, as is performance an Achilles heel for ARM. You mentioned software maturity. It is laughable to even mention this in this cut throat industry. Intel showed its superiority over AMD by tweaking the free x86 compiler it gave away to developers to suit its x86 architecture compared to its rival and the users got cheated until the European Commission exposed Intel. So don't even talk about software maturity. The incumbent always has the advantage. ARM first has to kill Intel's OEM muscle and marketing muscle before it can start dominating. Even if it did the former two, there is something it can never do, which is matching Intel's manufacturing muscle. Intel by far is way better than even the largest contract manufacturer TSMC whose only task is to manufacture.

Shadowmaster625 - Monday, January 31, 2011 - link

Nonsense. ARM is more optimized than x86. x86 code is always sloppy, because it has always been designed without having to deal with RAM, ROM, and clock constraints. When you code for an ARM device, you are presented with limits that most software engineers never even faced when writing x86 code. When writing software for Windows, 99.9% of developers will tell you they never even think about the amount of RAM they are using. For ARM it was probably 80% 10 years ago. Today it is probably less than 20% of ARM software engineers who would tell you they run into RAM and ROM limitations. With all this smartphone development going on today, ARM devices are getting more sloppy, but still nowhere near as bad as x86.

Shadowmaster625 - Monday, January 31, 2011 - link

Best Buy is still littered with them. Literally. Littered.

e36Jeff - Thursday, January 27, 2011 - link

what review were you reading? The only bug that is actually mentioned is the issue with Flash, which AMD and Adobe are both aware of and should be fixed in the next iteration of Flash. Stop seeing anything from AMD as bad and Intel as good. For where AMD wants this product to compete, this is a fantastic product that Intel has very little to compete with now that they locked out Nvidia from another Ion platform.

codedivine - Thursday, January 27, 2011 - link

Ok one last question. Is it possible to run your VS2008 benchmark on it? Will be appreciated, thanks.

Anand Lal Shimpi - Thursday, January 27, 2011 - link

Running it now, will update with the results :)

Take care,

Anand

Malih - Sunday, January 30, 2011 - link

I'm with you on this.

I'm thinking about buying a netbook and maybe a couple of nettops with the E-350, which will mostly be used to code websites, maybe some other dev work that requires an IDE (Eclipse, Visual Studio and so on).

micksh - Thursday, January 27, 2011 - link

how can it be that "1080i60 works just fine" when it failed all deinterlacing tests?

Anand Lal Shimpi - Thursday, January 27, 2011 - link

It failed the quality tests but it can physically decode the video at full frame rate :)

Take care,

Anand