The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Intel’s Quick Sync Technology

In recent years video transcoding has become one of the most widespread consumers of CPU power. The popularity of YouTube alone has turned nearly everyone with a webcam into a producer, and every PC into a video editing station. The mobile revolution hasn’t slowed things down either. No smartphone can play full bitrate/resolution 1080p content from a Blu-ray disc, so if you want to carry your best quality movies and TV shows with you, you’ll have to transcode to a more compressed format. The same goes for the new wave of tablets.

At a high level, video transcoding involves taking a compressed video stream and compressing it further to better match the storage and decoding abilities of a target device. It is called transcoding rather than encoding because the source is almost always already encoded in some compressed format, these days most commonly H.264/AVC.

Transcoding is a particularly CPU-intensive task because of the three-dimensional nature of the compression: two spatial dimensions within each frame, plus time. Each individual frame can be compressed on its own; however, since sequential frames typically share many of the same elements, video compression algorithms look for data that is repeated temporally as well as spatially.
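The difference between spatial and temporal redundancy is easier to see with numbers. Below is a toy sketch, not how H.264 actually codes frames: two tiny hypothetical 8x8 grayscale frames in which a small bright square moves one pixel, plus a helper (`changed_pixels`, a name made up for this example) that counts how many pixels actually differ between them.

```python
# Toy illustration of temporal redundancy between consecutive frames.
# Frames are small grayscale images stored as lists of rows (hypothetical data).

def make_frame(width, height, square_x):
    """Return a flat frame with a 2x2 bright square whose left edge is at column square_x."""
    frame = [[16] * width for _ in range(height)]
    for y in range(2, 4):
        for x in range(square_x, square_x + 2):
            frame[y][x] = 235
    return frame

def changed_pixels(prev, curr):
    """Count pixels whose value differs between two frames of equal size."""
    return sum(
        1
        for row_prev, row_curr in zip(prev, curr)
        for a, b in zip(row_prev, row_curr)
        if a != b
    )

frame0 = make_frame(8, 8, square_x=1)
frame1 = make_frame(8, 8, square_x=2)  # the square moves one pixel to the right

print(f"{changed_pixels(frame0, frame1)} of {8 * 8} pixels changed")
```

Only 4 of the 64 pixels differ, which is why encoders predict each frame from its neighbours and code the difference instead of compressing every frame independently.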

I remember sitting in a hotel room in Times Square while Godfrey Cheng and Matthew Witheiler of ATI explained to me the challenges of decoding HD-DVD and Blu-ray content. ATI was about to unveil hardware acceleration for some of the stages of the H.264 decoding pipeline. Full hardware decode acceleration wouldn’t come for another year at that point.

The advent of fixed function video decode in modern GPUs is important because it helped enable GPU-accelerated transcoding. The first step of the transcode process is to decode the source video. Since transcoding takes a video that is already compressed and re-encodes it in a new format, hardware-accelerated video decode is key: the speed of the decode engine has a tremendous impact on how fast a hardware-accelerated encode can run. This is true for two reasons.

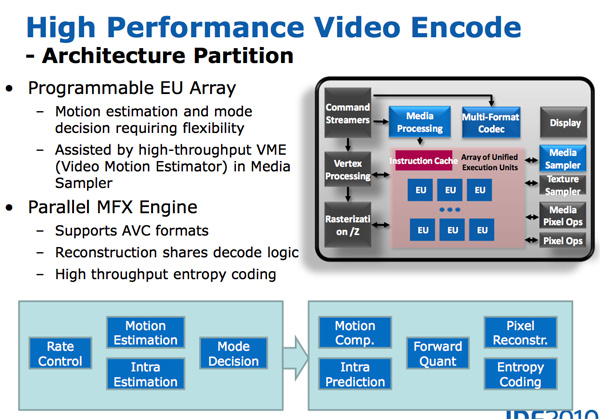

First, unlike playback, where the decoder only needs to keep up with the video's frame rate, transcoding lets the decode engine run as fast as possible. The faster frames can be decoded, the faster they can be fed to the encode engine. The second, less obvious point is that some of the hardware needed to accelerate video encoding is already present in a video decode engine (e.g. iDCT/DCT hardware).
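The first reason can be put in numbers with a deliberately simplified model. The Python sketch below treats a transcode as two stages, decode and encode, and compares running them concurrently (throughput set by the slower stage) with running them back to back. The stage rates and function names are hypothetical illustrations, not measurements of Sandy Bridge.

```python
# Simplified throughput model of a two-stage transcode pipeline (decode -> encode).
# All rates are hypothetical and in frames per second.

def pipelined_fps(decode_fps, encode_fps):
    """Both stages run concurrently, so the slower stage sets the pace."""
    return min(decode_fps, encode_fps)

def serial_fps(decode_fps, encode_fps):
    """Each frame is fully decoded, then encoded, with no overlap between stages."""
    return 1.0 / (1.0 / decode_fps + 1.0 / encode_fps)

ENCODE_FPS = 100.0          # hypothetical encoder speed
FRAMES = 2 * 60 * 60 * 24   # a two-hour film at 24 fps: 172,800 frames

for decode_fps in (24.0, 60.0, 120.0):
    fast = pipelined_fps(decode_fps, ENCODE_FPS)
    slow = serial_fps(decode_fps, ENCODE_FPS)
    print(f"decode {decode_fps:5.0f} fps: pipelined {FRAMES / fast / 60:5.1f} min, "
          f"serial {FRAMES / slow / 60:5.1f} min")
```

Holding the decoder to the 24 fps playback rate caps the pipelined transcode at real time (two hours for this film) no matter how fast the encoder is; in the serial case every increase in decode speed still shortens the job.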

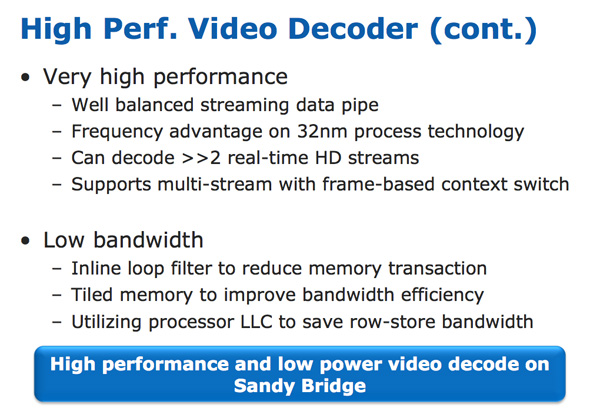

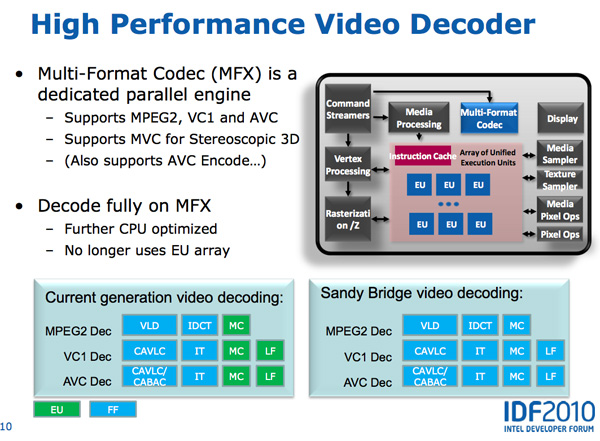

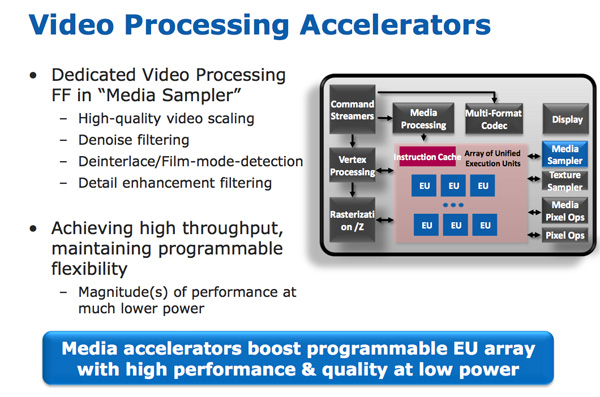

With video transcoding as a feature of Sandy Bridge’s GPU, Intel beefed up the video decode engine from what it had in Clarkdale. In the first generation Core series processors, video decode acceleration was split between fixed function decode hardware and the GPU’s EU array. With Sandy Bridge and the second generation Core CPUs, video decoding is done entirely in fixed function hardware. This is not ideal from a flexibility standpoint (e.g. newer video codecs can’t be fully hardware accelerated on existing hardware), but it is the most efficient way to build a video decoder from a power and performance standpoint. Both AMD and NVIDIA have fixed function video decode hardware in their GPUs now; neither relies on the shader cores to accelerate video decode.

The resulting hardware is both fast and power efficient. To test the performance of the decode engine I launched multiple instances of a 15 Mbps 1080p High Profile H.264 video running at 23.976 fps. I kept launching instances of the video until the system could no longer maintain full frame rate in all of the simultaneous streams. The table below shows the maximum number of streams I could run in parallel:

| | Intel Core i5-2500K | NVIDIA GeForce GTX 460 | AMD Radeon HD 6870 |
|---|---|---|---|
| Number of parallel 1080p HP streams | 5 streams | 3 streams | 1 stream |

AMD’s Radeon HD 6000 series GPUs can only manage a single High Profile 1080p H.264 stream, which is perfectly sufficient for video playback. NVIDIA’s GeForce GTX 460 does much better; it could handle three simultaneous streams. Sandy Bridge, however, takes the cake: a single Core i5-2500K can decode five streams simultaneously.
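The stream counts translate into aggregate decode throughput with simple arithmetic. The Python sketch below multiplies each device's maximum stream count from the table by the clip's 23.976 fps and 15 Mbps; it assumes every stream costs the decoder the same, which is a simplification.

```python
# Lower-bound aggregate decode rates implied by the stream counts in the table.
FPS_PER_STREAM = 23.976   # frame rate of the 1080p High Profile test clip
MBPS_PER_STREAM = 15      # bitrate of the test clip, in Mbps

max_streams = {
    "Intel Core i5-2500K": 5,
    "NVIDIA GeForce GTX 460": 3,
    "AMD Radeon HD 6870": 1,
}

def aggregate(streams):
    """Return (frames per second, Mbps) decoded across all simultaneous streams."""
    return streams * FPS_PER_STREAM, streams * MBPS_PER_STREAM

for device, streams in max_streams.items():
    fps, mbps = aggregate(streams)
    print(f"{device}: at least {fps:.1f} fps and {mbps} Mbps of 1080p H.264")
```

By this measure the i5-2500K decodes roughly 120 frames of 1080p video per second, about five times real time, versus roughly 72 fps for the GTX 460 and 24 fps for the Radeon HD 6870. These are lower bounds: the test only shows that each decoder could not sustain one more stream.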

The Sandy Bridge decoder is likely helped by the very large (and high bandwidth) L3 cache connected to it. This is the first advantage Intel has in what it calls its Quick Sync technology: a very fast decode engine.

The decode engine is also reused during the actual encode phase. Once frames of the source video are decoded, they are fed to the programmable EU array to be split apart and prepared for transcoding. The data in each block of a frame is transformed from the spatial domain (the value at each pixel location) to the frequency domain (how quickly those values change across the block) using a discrete cosine transform. You may remember that inverse discrete cosine transform hardware is necessary to decode video; that same hardware is useful for the domain transform needed when transcoding.
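For readers who want to see the domain transform itself, below is the textbook orthonormal 2-D DCT-II and its inverse, written in plain Python on an 8x8 block. This is an illustrative sketch, not Intel's hardware implementation and not the exact H.264 transform (H.264 uses an integer approximation of the DCT on 4x4 blocks, plus 8x8 blocks in High Profile). The sample block, a smooth left-to-right gradient, is hypothetical.

```python
import math

N = 8  # block size for this example

def _alpha(k):
    """Orthonormal scaling factor for DCT basis function k."""
    return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

def _basis(pos, freq):
    return math.cos((2 * pos + 1) * freq * math.pi / (2 * N))

def dct2(block):
    """Forward 2-D DCT-II: spatial samples -> frequency coefficients."""
    return [[_alpha(u) * _alpha(v) * sum(block[x][y] * _basis(x, u) * _basis(y, v)
                                         for x in range(N) for y in range(N))
             for v in range(N)]
            for u in range(N)]

def idct2(coeffs):
    """Inverse 2-D DCT (DCT-III): frequency coefficients -> spatial samples."""
    return [[sum(_alpha(u) * _alpha(v) * coeffs[u][v] * _basis(x, u) * _basis(y, v)
                 for u in range(N) for v in range(N))
             for y in range(N)]
            for x in range(N)]

# Rows are identical; values rise from 16 to 72 across each row.
block = [[16 + 8 * y for y in range(N)] for x in range(N)]
coeffs = dct2(block)
restored = idct2(coeffs)
print(round(coeffs[0][0], 6))
```

All of the gradient's energy ends up in the first row of coefficients (the DC term `coeffs[0][0]` is 352, eight times the block's mean of 44), with the odd horizontal frequencies carrying the rest, and `idct2` recovers the original samples up to floating-point rounding. Encoders exploit this concentration by quantizing away small high-frequency coefficients.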

Motion search, the most compute-intensive part of the transcode process, is done in the EU array. It's the combination of the fast decoder, the EU array, and fixed function hardware that makes up Intel's Quick Sync engine.
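Motion search is expensive because, for every block in the frame being encoded, the encoder compares the block against many candidate positions in a reference frame. Intel doesn't say which search strategy its EUs run; the sketch below is the brute-force textbook version, full search with a sum-of-absolute-differences (SAD) cost, using hypothetical frames, a 4x4 block and a search radius of 3. With radius r and block size b, each block costs up to (2r + 1)² × b² pixel comparisons, which is why real encoders use smarter search patterns.

```python
# Full-search block matching with a sum-of-absolute-differences (SAD) cost.
# Frames are lists of rows of 8-bit grayscale values (hypothetical test data).

def sad(ref, cur, rx, ry, cx, cy, bsize):
    """SAD between the bsize x bsize block of cur at (cx, cy) and of ref at (rx, ry)."""
    total = 0
    for dy in range(bsize):
        for dx in range(bsize):
            total += abs(cur[cy + dy][cx + dx] - ref[ry + dy][rx + dx])
    return total

def motion_search(ref, cur, cx, cy, bsize, radius):
    """Return ((mvx, mvy), cost): offset from (cx, cy) to the best match in ref."""
    height, width = len(ref), len(ref[0])
    best = None
    for mvy in range(-radius, radius + 1):
        for mvx in range(-radius, radius + 1):
            rx, ry = cx + mvx, cy + mvy
            if rx < 0 or ry < 0 or rx + bsize > width or ry + bsize > height:
                continue  # candidate block would fall outside the reference frame
            cost = sad(ref, cur, rx, ry, cx, cy, bsize)
            if best is None or cost < best[1]:
                best = ((mvx, mvy), cost)
    return best

def frame_with_block(width, height, bx, by):
    """Flat frame containing a textured 4x4 object with its top-left corner at (bx, by)."""
    frame = [[16] * width for _ in range(height)]
    for j in range(4):
        for i in range(4):
            frame[by + j][bx + i] = 100 + 10 * j + i
    return frame

ref = frame_with_block(16, 16, bx=4, by=4)
cur = frame_with_block(16, 16, bx=6, by=5)  # object moved 2 px right, 1 px down
vector, cost = motion_search(ref, cur, cx=6, cy=5, bsize=4, radius=3)
print(vector, cost)
```

The search reports the vector (-2, -1) with a SAD of 0: the block at (6, 5) in the current frame is found 2 pixels left and 1 pixel up in the reference frame, so the encoder can store that vector plus a (here empty) residual instead of the block's pixels.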

283 Comments


omelet - Monday, January 3, 2011

> The Sandy Bridge Review: Intel Core i5 2600K, i5 2500K and Core i3 2100 Tested

Doesn't look fixed over here.

Zoomer - Monday, January 3, 2011

Score one for Intel marketing! Oh wait...

Beenthere - Monday, January 3, 2011

I'll stick with my AMD 965 BE as it delivers a lot of performance for the price and I don't get fleeced on mobo and CPU prices like with Intel stuff.

geek4life!! - Monday, January 3, 2011

Exactly what I have been waiting on, time to build my RIG again. Been without a PC for 1 year now and itching to build a new one. Game on baby!!!!!!!!!!!!!!

Doormat - Monday, January 3, 2011

If QuickSync is only available to those using the integrated GPU, does that mean you can't use QS with a P67 board, since they don't support integrated graphics? If so, I'll end up having to buy a dedicated QS box (a micro-ATX board and an S or T series CPU seem to be up to that challenge). Also, what if the box is headless (e.g. Windows Home Server)?

Does the performance of QS have to do with the number of EUs? The QS testing was on a 12-EU CPU; does performance get cut in half on a 6-EU CPU (again, S or T series CPUs would be affected)?

No mention of Intel AVX functions. I suppose that's more of an architecture thing (which was covered separately), but there are no benchmarks (synthetic or otherwise) to demo the new feature.

MeSh1 - Monday, January 3, 2011

Yeah, I think this is the case, or according to the blurb below you can connect a monitor to the IGP in order to use QS. Is this a design flaw? Seems like a messy workaround :(

> you either have to use the integrated GPU alone or run a multimonitor setup with one monitor connected to Intel’s GPU in order to use Quick Sync.

SandmanWN - Monday, January 3, 2011

The sad part is for all the great encoding you get, the playback sucks. Jacked up.

Doormat - Monday, January 3, 2011

I'm not that interested in playback on that device; it's going to be streamed to my PS3, DLNA-enabled TVs, iPad/iPhone, etc. Considering this won't be supported as a hackintosh for a while, I might as well build a combo transcoding station and WHS box.

JarredWalton - Monday, January 3, 2011

How do you figure "playback sucks"? If you're using MPC-HC, it's currently broken, but that's an application issue not a problem with SNB in general.

Absolution75 - Monday, January 3, 2011

Thank you so much for the VS benchmarks!! Programmers rejoice!