NVIDIA's GeForce GTX 570: Filling In The Gaps

by Ryan Smith on December 7, 2010 9:00 AM EST

Compute & Normalized Numbers

Moving on from our look at gaming performance, we have our customary look at compute performance, bundled with a look at theoretical tessellation performance. Unlike our gaming benchmarks where NVIDIA’s architectural enhancements could have an impact, everything here should be dictated by the core clock and SMs, with the GTX 570’s slight core clock advantage over the GTX 480 defining most of these tests.

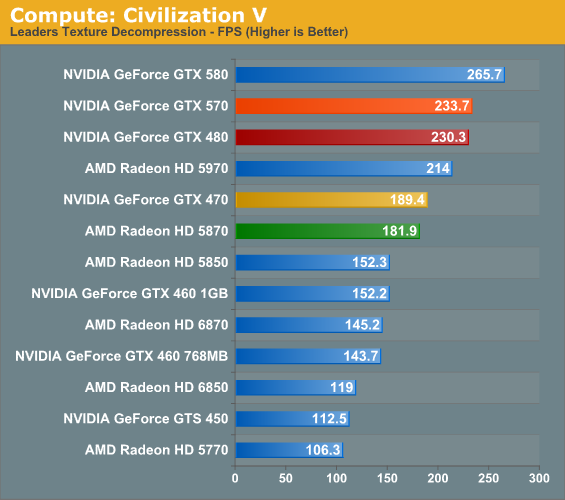

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes.

The GTX 570’s core clock advantage over the GTX 480 is 4.5%, but in practice it leads to a difference of less than 2% in Civilization V’s texture decompression test. Even the lead over the GTX 470 is a bit smaller than usual, at 23%. Nor should the lack of a competitive placement from an AMD product be a surprise, as NVIDIA’s cards consistently do well in this test, lending credence to the idea that it’s a compute application better suited to NVIDIA’s scalar processor design.

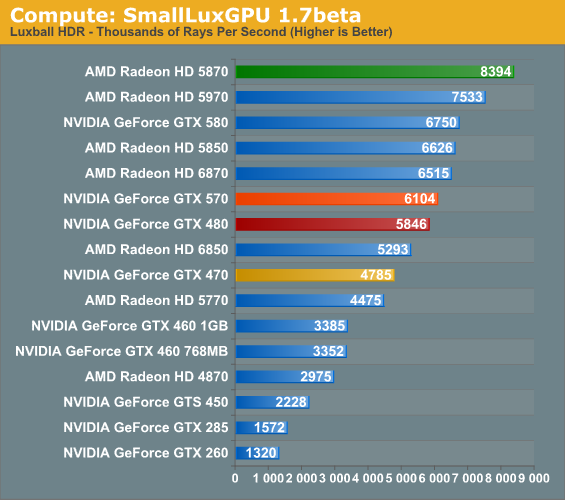

Our second GPU compute benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. While it’s still in beta, SmallLuxGPU recently hit a milestone by implementing a complete ray tracing engine in OpenCL, allowing them to fully offload the process to the GPU. It’s this ray tracing engine we’re testing.

SmallLuxGPU is rather straightforward in its requirements: compute and lots of it. The GTX 570’s core clock advantage over the GTX 480 drives a fairly straightforward 4% performance improvement, roughly in line with the theoretical maximum. The reduction in memory bandwidth and L2 cache does not seem to impact SmallLuxGPU. Meanwhile the advantage over the GTX 470 doesn’t quite reach its theoretical maximum, but the GTX 570 is still 27% faster.
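To make the "theoretical maximum" concrete, here is a rough sketch of the arithmetic, assuming compute throughput scales with core clock multiplied by the number of enabled SMs. The clock and SM figures are NVIDIA's reference specifications rather than numbers quoted in this article, and the `Card` class and `theoretical_advantage` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    core_mhz: int  # reference core clock in MHz (assumed, not from this article)
    sms: int       # enabled streaming multiprocessors (assumed, not from this article)

    @property
    def throughput(self) -> int:
        # Simplified model: compute throughput is proportional to clock * SM count.
        return self.core_mhz * self.sms


def theoretical_advantage(a: Card, b: Card) -> float:
    """Percentage by which card a should lead card b under the simplified model."""
    return (a.throughput / b.throughput - 1) * 100


GTX_570 = Card("GTX 570", core_mhz=732, sms=15)
GTX_480 = Card("GTX 480", core_mhz=700, sms=15)
GTX_470 = Card("GTX 470", core_mhz=607, sms=14)

for rival in (GTX_480, GTX_470):
    lead = theoretical_advantage(GTX_570, rival)
    print(f"{GTX_570.name} vs {rival.name}: {lead:.1f}% theoretical lead")
```

Under these assumptions the model gives a lead of a little over 4.5% against the GTX 480 and roughly 29% against the GTX 470, which is why a measured 4% and 27% count as close to, but not quite at, the ceiling. The model deliberately ignores memory bandwidth, ROPs, and L2 cache.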

However, as was the case with the GTX 580, all of the NVIDIA cards fall to AMD’s faster cards here; the GTX 570 only lands between the 6850 and 6870 in performance, thanks to AMD’s compute-heavy VLIW5 design, which SmallLuxGPU runs particularly well on. The situation is quite bad for the GTX 570 as a result: the top card is the Radeon 5870, which the GTX 570 trails by 27%.

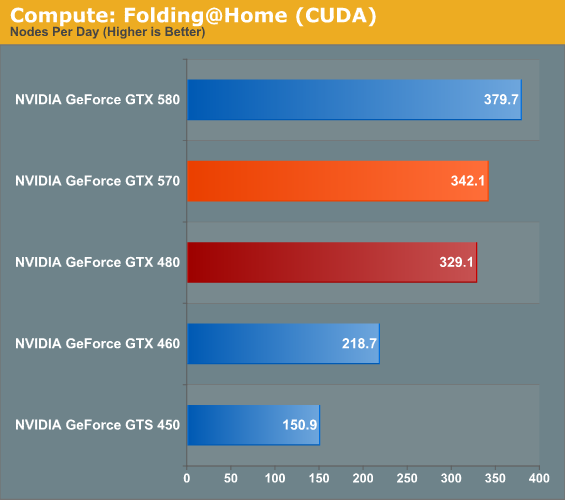

Our final compute benchmark is a Folding @ Home benchmark. Given NVIDIA’s focus on compute for Fermi and in particular GF110 and GF100, cards such as the GTX 580 can be particularly interesting for distributed computing enthusiasts, who are usually looking for the fastest card in the coolest package.

Once more the performance advantage for the GTX 570 matches its core clock advantage. If not for the fact that a DC project like F@H is trivial to scale to multi-GPU configurations, the GTX 570 would likely be the sweet spot for price, performance, and power/noise.

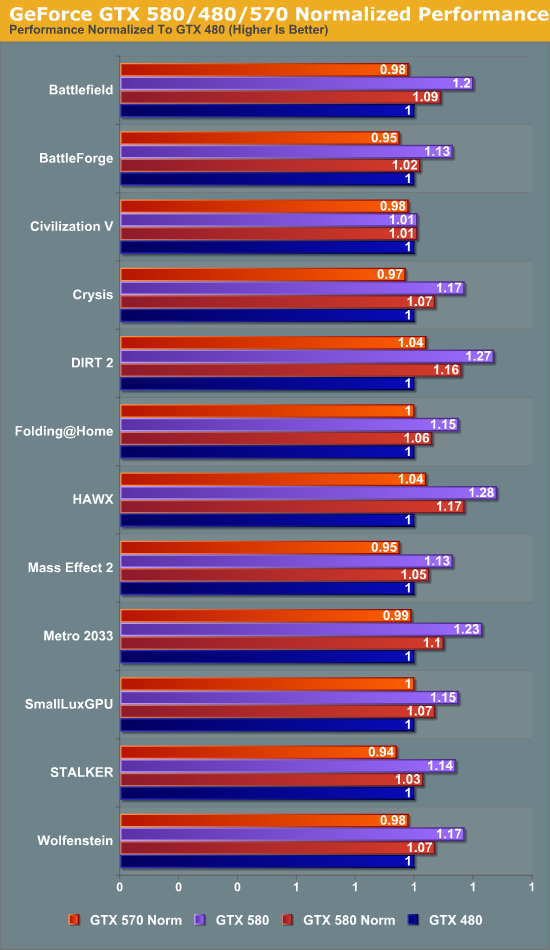

Finally, to take another look at the GTX 570’s performance, we have the return of the normalized data view that we first used in our look at the GTX 580. Unlike the GTX 580, which had memory/ROP abilities similar to the GTX 480 but more SMs, the GTX 570 contains the same number of SMs as the GTX 480 with fewer ROPs and a narrower memory bus. As such, while a normalized dataset for the GTX 580 shows the advantage of the GF110’s architectural enhancements and the higher SM count, the normalized dataset for the GTX 570 shows the architectural enhancements alongside the impact of lost memory bandwidth, ROPs, and L2 cache.

The results certainly paint an interesting picture. Just about everything is ultimately affected by the lack of memory bandwidth, L2 cache, and ROPs; if the GTX 570 didn’t normally have its core clock advantage, it would generally lose to the GTX 480 by small amounts. The standouts here include STALKER, Mass Effect 2, and BattleForge which are all clearly among the most memory-hobbled titles.

On the other hand we have DIRT 2 and HAWX, both of which show a 4% improvement even though our normalized GTX 570 is worse compared to a GTX 480 in every way except architectural advantages. Clearly these were some of the games NVIDIA had in mind when they were tweaking GF110.

54 Comments

Taft12 - Tuesday, December 7, 2010

Game, set and match. It will take a long time for Anandtech to redevelop its reputation.

7Enigma - Tuesday, December 7, 2010

Seriously? We're still going to preach on this topic? I was one of those in disagreement with the way they handled the launch of the AMD 68XX series cards, but let it die already. This is a LAUNCH article and it deals with the design of the card and the performance of the reference card. As such it should not contain comparisons to OC'd cards.....not AMD nor NVIDIA. In a follow-up article, however, it should be compared to non-reference designs from both camps.

If, when the AMD 69XX series cards come out and they include OC'd Nvidia cards, THEN you can rant and rave. But I can guarantee you there is no way they would do that after the fallout of the previous launch.

So I politely ask that you stop.

Kef71 - Tuesday, December 7, 2010

Yes, seriously. Was there ever any official statement if OC cards would be used in GPU launches? I didn't see any but on the other hand anandtech has not been in my bookmark list for a while...

strikeback03 - Tuesday, December 7, 2010

Yes, there was, they said because of d-bag comments like yours they would ignore a sector of the market and only provide some of the possible competitors for new products.

Kef71 - Tuesday, December 7, 2010

If you really need to be rude, at least spell out "douchebag".

slacr - Tuesday, December 7, 2010

I was just wondering why there are no starcraft2 performance figures in the review.

Understandably there is no "benchmark" feature implemented in the game and they are annoying and time consuming to run and of course the card can handle it. But it is the only game some of us play and the figures may help guide us to see if it's "worth it".

nitrousoxide - Tuesday, December 7, 2010

It's a more CPU-bound game so it cannot perfectly reflect the difference in GPU performance

Ryan Smith - Tuesday, December 7, 2010

I'm still in the process of fully fleshing out our SC2 benchmark. Once the latest rendition of Bench is ready, you'll find SC2 in there.

tbtbtb - Tuesday, December 7, 2010

The GTX 570 is now available for pre-order on Amazon for 400$ (http://amzn.to/e89Oo2)

Oxford Guy - Tuesday, December 7, 2010

Where is it, especially minimum frame rate testing?

While it's nice to see minimum frame rates for Crysis, it would be nice to see them for Metro as well.