NVIDIA's GeForce GTX 570: Filling In The Gaps

by Ryan Smith on December 7, 2010 9:00 AM EST

Power, Temperature, & Noise

Last but not least as always is our look at the power consumption, temperatures, and acoustics of the GTX 570. While NVIDIA’s optimizations to these attributes were largely focused on bringing the GTX 480’s immediate successor to more reasonable levels, the GTX 570 certainly stands to gain some benefits too.

Starting with VIDs, we once again have only one card, so there’s not much we can conclude from the data. Our GTX 570 sample has a lower VID than our GTX 580, but as we know NVIDIA is using a range of VIDs for each card. From what we’ve seen with the GTX 470 we’d expect the average GTX 570 VID to be higher than the average GTX 580 VID, as NVIDIA is likely using ASICs with damaged ROPs/SMs for the GTX 570, along with ASICs that wouldn’t make the cut at a suitable voltage with all functional units turned on. As a result the full power advantage of lower clockspeeds and fewer functional units would not always be realized here.

GeForce GTX 500 Series Voltages

| Ref 580 Load | Asus 580 Load | Ref 570 Load | Ref 570 Idle |
|---|---|---|---|
| 1.037v | 1.000v | 1.025v | 0.912v |
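As a rough illustration of why these small VID differences matter, the sketch below applies the textbook CMOS approximation that dynamic power scales with the square of voltage. This is an assumption brought in for illustration, not something the measurements here establish: it holds clockspeed and active unit count equal and ignores leakage entirely, so it only shows the voltage term in isolation. The function name is hypothetical.

```python
# Sketch only: relative dynamic power from core voltage alone.
# Assumption (not from the article): P_dyn is proportional to V^2 at a fixed
# clock and fixed switched capacitance. Leakage power is ignored.

LOAD_VOLTAGES = {  # volts, load VIDs from the voltage table
    "Ref 580": 1.037,
    "Asus 580": 1.000,
    "Ref 570": 1.025,
}


def relative_dynamic_power(v, v_ref):
    """Return dynamic power at voltage v relative to v_ref, assuming P ~ V^2."""
    return (v / v_ref) ** 2


if __name__ == "__main__":
    reference = LOAD_VOLTAGES["Ref 580"]
    for name, volts in LOAD_VOLTAGES.items():
        ratio = relative_dynamic_power(volts, reference)
        print(f"{name}: {ratio:.3f}x the Ref 580 voltage term")
```

Under that assumption, the 12mV gap between the reference GTX 580 and GTX 570 samples is worth only about 2% on its own, which suggests most of any measured difference between the two cards comes from clockspeeds and disabled units rather than from voltage.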

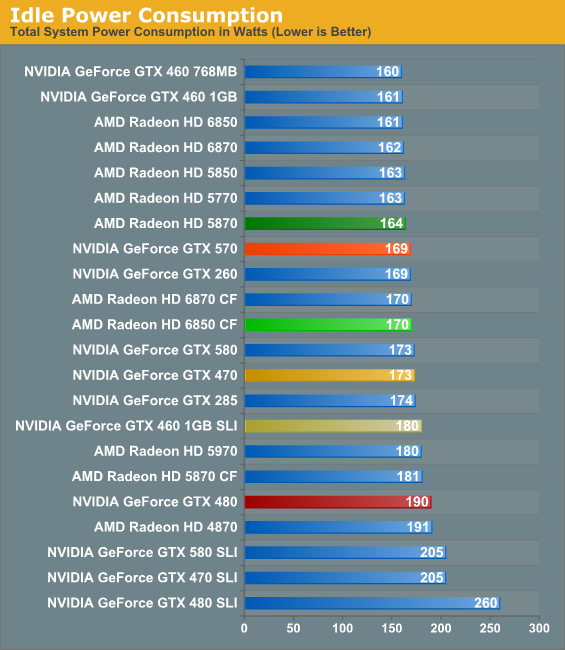

With fewer functional units than the GTX 580 and a less leaky manufacturing process than the GTX 400 series, the GTX 570 is the first GF1x0 card to bring our test rig under 170W at idle. NVIDIA’s larger chips still do worse than AMD’s smaller chips here though, which is driven home by the point that the 6850 CF is only drawing 1 more watt at idle. Nevertheless this is a 4W improvement over the GTX 470 and a 21W improvement over the GTX 480; the former in particular showcases the process improvements as there are more functional units and yet power consumption has dropped.

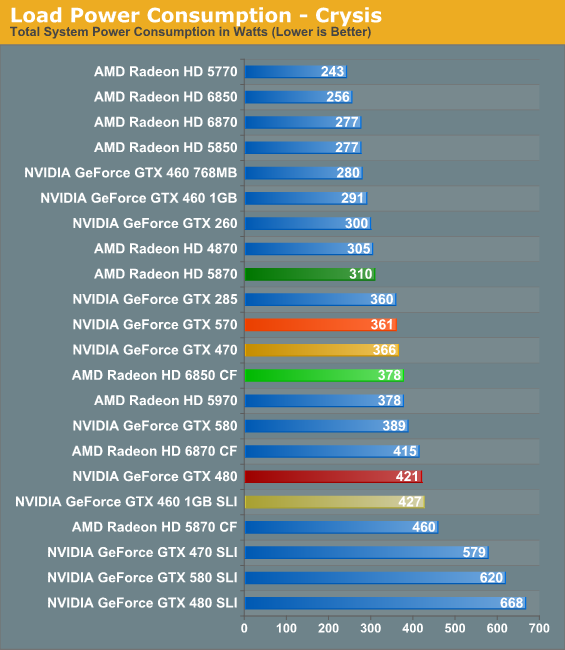

Under load we once again see NVIDIA’s design and TSMC’s process improvements in action. Even though the GTX 570 is over 20% faster than the GTX 470 on Crysis, power consumption has dropped by 5W. The comparison to the GTX 480 is even more remarkable at a 60W reduction in power consumption for the same level of performance, a number well in excess of the power savings from removing 2 GDDR5 memory chips. It’s quite remarkable how a bunch of minor changes can add up to so much.

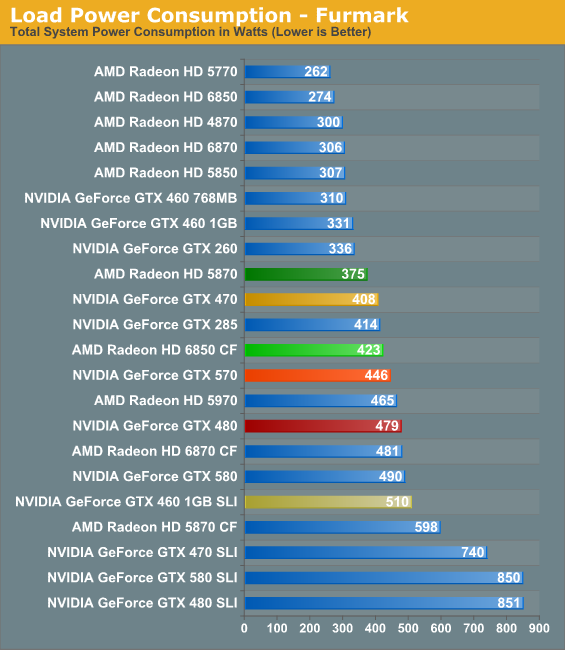

On the flipside however we have the newly reinstated FurMark, which we can once again use thanks to W1zzard of TechPowerUp’s successful disabling of NVIDIA’s power throttling features on the GTX 500 series. We’ve rebenched the GTX 580 and GTX 580 SLI along with the GTX 570, and now have fully comparable numbers once more.

It’s quite interesting that while the GTX 570 does so well under Crysis, it does worse here than the GTX 470. The official TDP difference is in the GTX 470’s favor, but not to this degree. I put more stock in the Crysis numbers than the FurMark numbers, but it’s worth noting that the GTX 570 can at times be worse than the GTX 470. However also take note of the fact that the GTX 570 is still beating the GTX 480 by 33W, and we’ve already established the two have similar performance.

Of course the stand-outs here are AMD’s cards, which benefit from AMD’s small, more power efficient chip designs. The Radeon HD 5870 draws 50W+ less than the GTX 570 in all situations, and even the 6850 CF wavers between being slightly worse and slightly better than the GTX 570. With the architecture and process improvements the GTX 570’s power consumption is in line with historically similar cards, but NVIDIA still cannot top AMD on power efficiency.

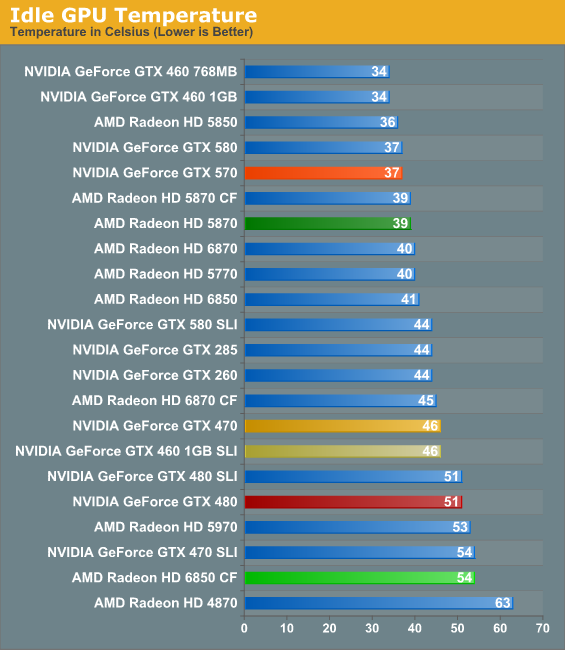

Up next are idle temperatures. With nearly identical GPUs on an identical platform it should come as little surprise that the GTX 570 performs just like a GTX 580 here: 37C. The GTX 570 joins the ranks of our coolest idling high-end cards, and manages to edge out the 5870 while clobbering the SLI and CF cards.

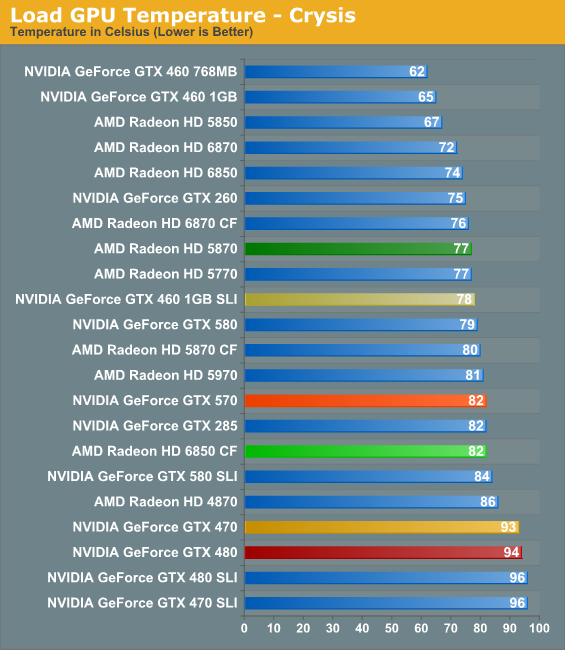

With the same cooling apparatus as the GTX 580 and lower power consumption, we originally expected the GTX 570 to edge out the GTX 580 in all cases, but as it turns out it’s not so simple. When it comes to Crysis the 82C GTX 570 is 3C warmer than the GTX 580, bringing it in line with other cards like the GTX 285. More importantly however it’s 11-12C cooler than the GTX 480/470, driving home the importance of the card’s lower power consumption along with the vapor chamber cooler. It does not end up being quite as competitive with the 5870 and SLI/CF cards however, as those other setups are between 0C and 5C cooler.

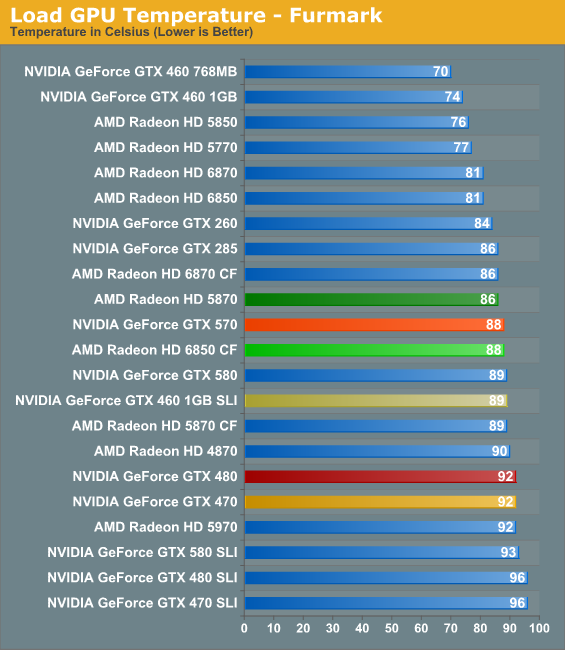

As for FurMark, temperatures approach the mid-to-upper 80s, putting the GTX 570 in the company of most other high-end cards. The 5870 edges out the GTX 570 by 2C, while everything else is as warm or warmer. The GTX 480/470 in particular end up being 4C warmer, and as we’ll see a good bit louder.

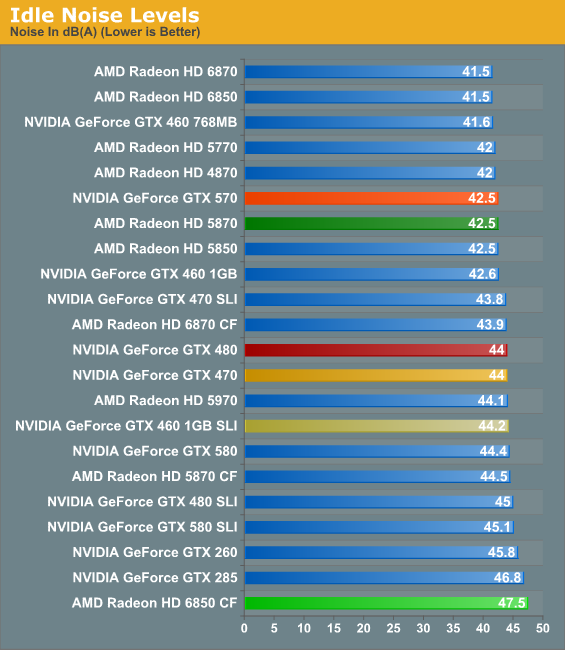

It’s once we look at our noise data that it’s clear NVIDIA has been tinkering with the fan parameters of their design for the GTX 570, as the GTX 570 doesn’t quite match the GTX 580 here. When it comes to idle noise for example the GTX 570 manages to just about hit the noise floor of our rig, emitting 1.5dB less noise than the GTX 580/480/470. For the purposes of our testing, it’s effectively a silent card at idle in our test rig.

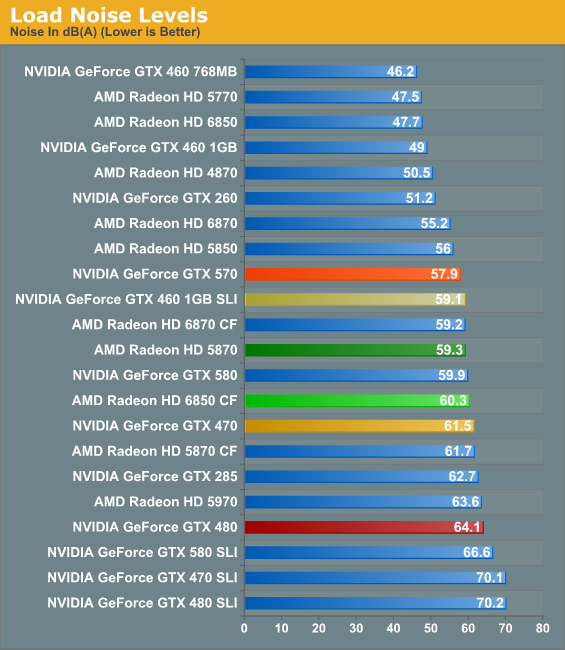

Under load, it’s finally apparent that NVIDIA has tweaked the GTX 570 to be quieter as opposed to cooler. For the slightly higher temperatures we saw earlier the GTX 570 is 2dB quieter than the GTX 580, 3.6dB quieter than the GTX 470, and 6.2dB quieter than the similarly performing GTX 480. For its performance level the GTX 570 effectively tops the charts here – the next quietest cards are the 5850 and 6870, a good pedigree to be compared to. On the other side of the chart we have the 5870 at 1.4dB louder, and the SLI/CF cards at anywhere between 1.2dB and 2.4dB louder.
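To put those decibel gaps in perspective, the sketch below converts a dB difference into a ratio of sound power using the standard definition of the decibel. It assumes the figures above are ordinary sound level readings, and it says nothing about perceived loudness, which tracks dB far less steeply (a common rule of thumb is that roughly 10dB sounds twice as loud). The function name is hypothetical.

```python
# Sketch only: convert the load-noise gaps quoted above into sound power ratios.
# A difference of X dB corresponds to a power ratio of 10 ** (X / 10).

LOAD_NOISE_GAPS_DB = {  # how much quieter the GTX 570 is, per the text above
    "GTX 580": 2.0,
    "GTX 470": 3.6,
    "GTX 480": 6.2,
}


def power_ratio(db_delta):
    """Return the sound power ratio corresponding to a dB difference."""
    return 10 ** (db_delta / 10)


if __name__ == "__main__":
    for card, gap in LOAD_NOISE_GAPS_DB.items():
        ratio = power_ratio(gap)
        print(f"{card}: about {ratio:.1f}x the sound power of the GTX 570")
```

By this measure the 6.2dB gap to the GTX 480 works out to roughly four times the emitted sound power, while the 2dB gap to the GTX 580 is closer to 1.6 times.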

54 Comments

ilkhan - Tuesday, December 7, 2010

I love my HDMI connection. It falls out of my monitor about once a month and I have to flip the screen around to plug it back in. Thanks TV industry!

Mr Perfect - Tuesday, December 7, 2010

It is somewhat disappointing. People with existing screens probably don't care, and the cheap TN screens still pimp the DVI interface, but all of the high end IPS panel displays include either HDMI, DP or both. Why wouldn't a high end video card have the matching outputs?

EnzoFX - Tuesday, December 7, 2010

High-End gaming card is probably for serious gamers, which should probably go with TN as they are the best against input lag =P.

Mr Perfect - Tuesday, December 7, 2010

Input lag depends on the screen's controller, you're thinking pixel response time. Yes, TN is certainly faster than IPS for that. I still wouldn't get a TN though, the IPS isn't far enough behind in response time to negate the picture quality improvement.

MrSpadge - Tuesday, December 7, 2010

Agreed. The pixel response time of my eIPS is certainly good enough to be of absolutely no factor. The image quality, on the other hand, is worth every cent.

MrS

DanNeely - Tuesday, December 7, 2010

Due to the rarity of HDMI 1.4 devices (needed to go above 1920x1200) replacing a DVI port with an HDMI port would result in a loss of capability. This is aggravated by the fact that due to their sticker price 30" monitors have a much longer lifetime than 1080p displays, and owners would get even more outraged at being told they had to replace their screens to use a new GPU. MiniDVI isn't an option either because it's single-link and has the same 1920x1200 cap as HDMI 1.3.

Unfortunately there isn't room for anything except a single miniHDMI/miniDP port to the side of 2 DVI's, installing it on the top half of a double height card like ATI has done cuts into the cards exhaust airflow and hurts cooling. With the 5xx series still limited to 2 outputs that's not a good tradeoff, and HDMI is much more ubiquitous.

The fiasco with DP-DVI adapters and the 5xxx series cards doesn't exactly make them an appealing option either to consumers.

Mr Perfect - Wednesday, December 8, 2010

That makes good sense too, you certainly wouldn't want to drop an existing port to add DP. I guess it really comes down to that cooling vs port selection problem.

I wonder why ATI stacked the DVI ports? Those are the largest ports out of the three and so block the most ventilation. If you could stack a mini-DP over the mini HDMI, it would be a pretty small penalty. It might even be possible to mount the mini ports on edge instead of horizontally to keep them all on one slot.

BathroomFeeling - Tuesday, December 7, 2010

"...Whereas the GTX 580 took a two-tiered approach on raising the bar on GPU performance while simultaneously reducing power consumption, the GeForce GTX 470 takes a much more single-tracked approach. It is for all intents and purposes the new GTX 480, offering gaming performance..."

Lonyo - Tuesday, December 7, 2010

Any comments on how many will be available? In the UK sites are expecting cards on the 9th~11th December, so not a hard launch there.

Newegg seems to only have limited stock.

Not to mention an almost complete lack of UK availability of GTX580s, and minimal models and quantities on offer from US sites (Newegg).

Kef71 - Tuesday, December 7, 2010

Or maybe they are a nvidia "feature" only?