NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

Compute and Tessellation

Moving on from our look at gaming performance, we have our customary look at compute performance, bundled with a look at theoretical tessellation performance. Unlike our gaming benchmarks where NVIDIA’s architectural enhancements could have an impact, everything here should be dictated by the core clock and SMs, with shader and polymorph engine counts defining most of these tests.

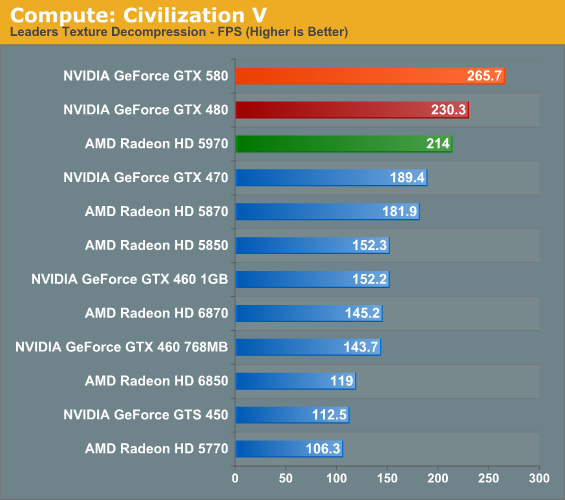

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes.

We previously discovered that NVIDIA did rather well in this test, so it shouldn’t come as a surprise that the GTX 580 does even better. Even without the benefits of architectural improvements, the GTX 580 still ends up pulling ahead of the GTX 480 by 15%. The GTX 580 also does well against the 5970 here, which does see a boost from CrossFire but ultimately falls short, showcasing why multi-GPU cards can be inconsistent at times.

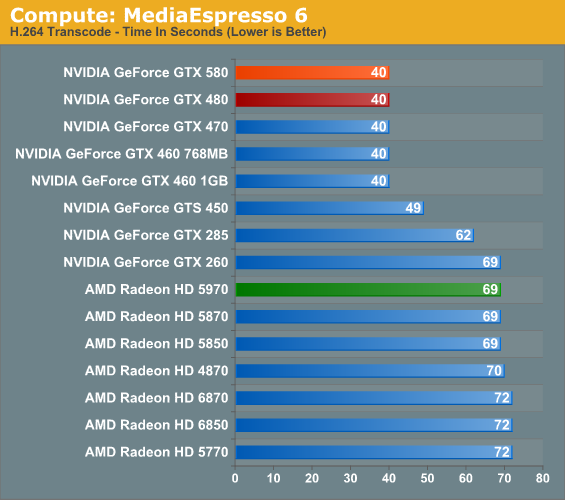

Our second compute benchmark is Cyberlink’s MediaEspresso 6, the latest version of their GPU-accelerated video encoding suite. MediaEspresso 6 doesn’t currently utilize a common API, and instead has codepaths for both AMD’s APP (née Stream) and NVIDIA’s CUDA APIs, which gives us a chance to test each API with a common program bridging them. As we’ll see this doesn’t necessarily mean that MediaEspresso behaves similarly on both AMD and NVIDIA GPUs, but for MediaEspresso users it is what it is.

We include MediaEspresso 6 largely to show that not everything that’s GPU-accelerated is GPU-bound, and it illustrates the point nicely. Once we move away from sub-$150 GPUs, APIs and architecture become much more important than raw speed. As a result, the 580 is unable to differentiate itself from the 480.

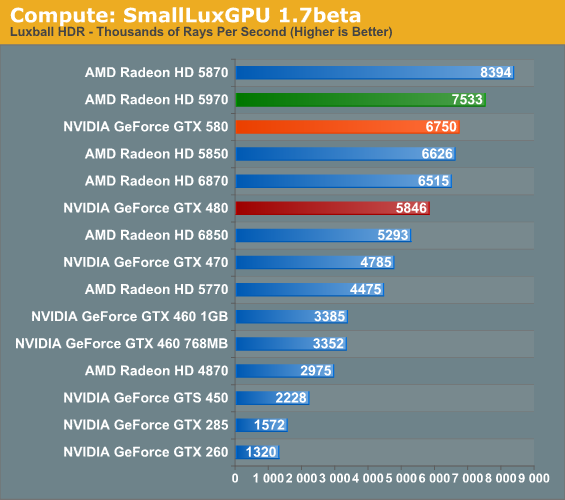

Our third GPU compute benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. While it’s still in beta, SmallLuxGPU recently hit a milestone by implementing a complete ray tracing engine in OpenCL, allowing them to fully offload the process to the GPU. It’s this ray tracing engine we’re testing.

SmallLuxGPU is rather straightforward in its requirements: compute and lots of it. The GTX 580 attains most of its theoretical performance improvement here, coming in a bit more than 15% faster than the GTX 480. It does get bested by a couple of AMD’s GPUs however, a showcase of where AMD’s theoretical performance advantage in compute isn’t so theoretical.
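As a rough sanity check on that theoretical figure, the expected gain can be worked out from shader count and core clock alone. The sketch below assumes compute throughput scales linearly with both, and it plugs in the reference specifications (480 shaders at 700MHz for the GTX 480, 512 shaders at 772MHz for the GTX 580) rather than anything measured in this review. The function name is purely illustrative.

```python
def theoretical_speedup(old_units, old_clock_mhz, new_units, new_clock_mhz):
    """Ideal speedup, assuming throughput scales linearly with units * clock."""
    return (new_units * new_clock_mhz) / (old_units * old_clock_mhz)


# Reference specs (an assumption of this sketch): (shader count, core clock in MHz)
gtx480 = (480, 700)
gtx580 = (512, 772)

speedup = theoretical_speedup(*gtx480, *gtx580)
print(f"Theoretical gain: {(speedup - 1) * 100:.1f}%")  # prints "Theoretical gain: 17.6%"
```

On paper that works out to a bit under 18%, so a measured gain of roughly 15% captures most of it, though not all.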

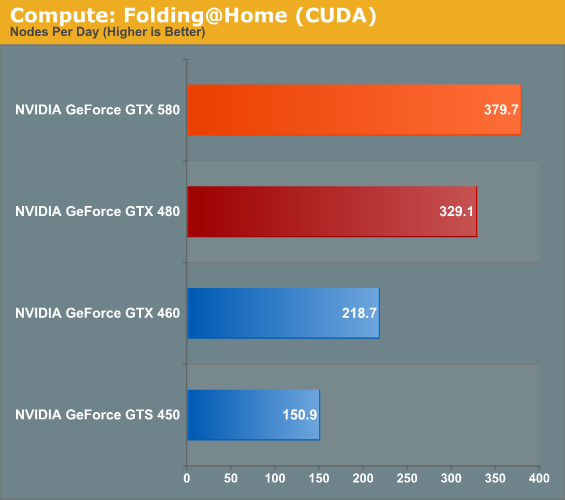

Our final compute benchmark is a Folding @ Home benchmark. Given NVIDIA’s focus on compute for Fermi and in particular GF110 and GF100, cards such as the GTX 580 can be particularly interesting for distributed computing enthusiasts, who are usually looking for the fastest card in the coolest package. This benchmark is from the original GTX 480 launch, so this is likely the last time we’ll use it.

If I said the GTX 580 was 15% faster, would anyone be shocked? So long as we’re not CPU bound it seems, the GTX 580 is 15% faster through all of our compute benchmarks. This coupled with the GTX 580’s cooler/quieter design should make the card a very big deal for distributed computing enthusiasts.

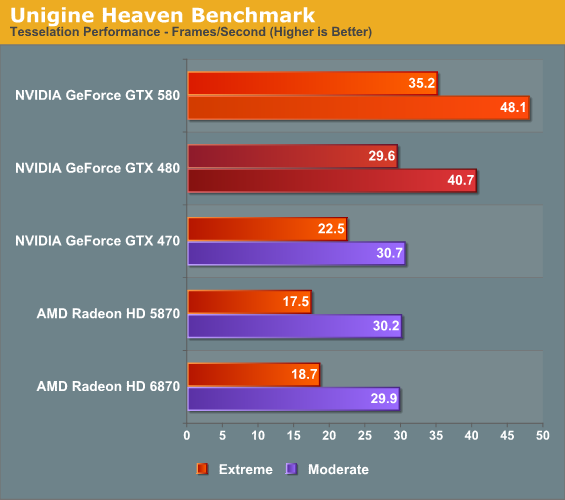

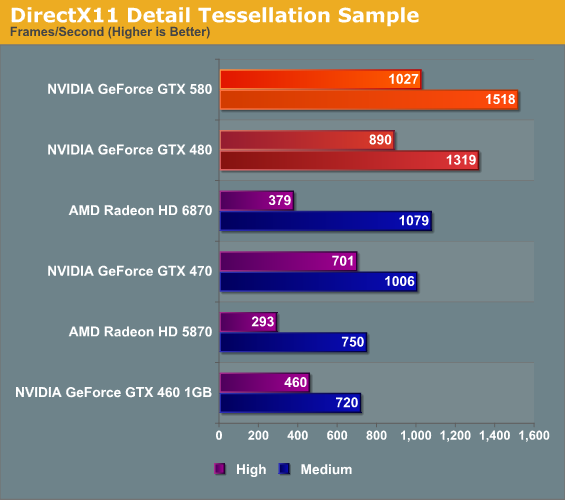

At the other end of the spectrum from GPU computing performance is GPU tessellation performance, used exclusively for graphical purposes. Here we’re interested in things from a theoretical architectural perspective, using the Unigine Heaven benchmark and Microsoft’s DirectX 11 Detail Tessellation sample program to measure the tessellation performance of a few of our cards.

NVIDIA likes to heavily promote their tessellation performance advantage over AMD’s Cypress and Barts architectures, as it’s by far the single biggest difference between them and AMD. Not surprisingly the GTX 400/500 series does well here, and between those cards the GTX 580 enjoys a 15% advantage in the DX11 tessellation sample, while Heaven is a bit higher at 18% since Heaven is a full engine that can take advantage of the architectural improvements in GF110.

Seeing as how NVIDIA and AMD are still fighting about the importance of tessellation in both the company of developers and the public, these numbers shouldn’t be used as long range guidance. NVIDIA clearly has an advantage – getting developers to use additional tessellation in a meaningful manner is another matter entirely.

160 Comments


chizow - Tuesday, November 9, 2010

There's 2 ways they can go with the GTX 570, either more disabled SM than just 1 (2-3) and similar clockspeed to the GTX 580, or fewer disabled SM but much lower clockspeed. Both would help to reduce TDP but with more disabled SM that would also help Nvidia unload the rest of their chip yield. I have a feeling they'll disable 2-3 SM with higher clocks similar to the 580 so that the 470 is still slightly slower than the 480.

I'm thinking along the same lines as you though for the GTX 560, it'll most likely be the full-fledged GF104 we've been waiting for with all 384SP enabled, probably slightly higher clockspeeds and not much more than that, but definitely a faster card than the original GF104.

vectorm12 - Tuesday, November 9, 2010

I'd really love to see the raw crunching power of the 480/580 vs. 5870/6870.

I've found ighashgpu to be a great tool to determine that and it can be found at http://www.golubev.com/

Please consider it for future tests as it's very well optimized for both CUDA and Stream

spigzone - Tuesday, November 9, 2010

The performance advantage of a single GPU vs CF or SLI is steadily diminishing and approaching a point of near irrelevancy.

6870 CF beats out the 580 in nearly every parameter, often substantially on performance benchmarks, and per current newegg prices, comes in at $80 cheaper.

But I think the real sweet spot would be a 6850 CF setup with AMD Overdrive applied 850MHz clocks, which any 6850 can achieve at stock voltages with minimal thermal/power/noise costs (and minimal 'tinkering'), and from the few 6850 CF benchmarks that showed up would match or even beat the GTX580 on most game benchmarks and come in at $200 CHEAPER.

That's an elbow from the sky in my book.

smookyolo - Tuesday, November 9, 2010

You seem to be forgetting the minimum framerates... those are so much more important than average/maximum.

Sihastru - Tuesday, November 9, 2010

Agreed, CF scales very badly when it comes to minimum framerates. It is even below the minimum framerates of one of the cards in the CF setup. It is very annoying when you're doing 120FPS in a game and from time to time your framerates drop to an unplayable and very noticeable 20FPS.

chizow - Tuesday, November 9, 2010

Nice job on the review as usual Ryan,

Would've liked to have seen some expanded results however, but somewhat understandable given your limited access to hardware atm. It sounds like you plan on having some SLI results soon.

I would've really liked to have seen clock-for-clock comparisons to the original GTX 480 though, to isolate the impact of the refinements between GF100 and GF110. To be honest, taking away the ~10% difference in clockspeeds, what we're left with seems to be ~6-10% from those missing 6% functional units (32 SPs and 4 TMUs).

I would've also liked to have seen some preliminary overclocking results with the GF110 to see how much the chip revision and cooling refinements increased clockspeed overhead, if at all. Contrary to somewhat popular belief, the GTX 480 did overclock quite well, and while that also increased heat and noise it'll be hard for someone with an overclocked 480 to trade it in for a 580 if it doesn't clock much better than the 480.

I know you typically have follow-up articles once the board partners send you more samples, so hopefully you consider these aspects in your next review, thanks!

PS: On page 4, I believe this should be a supposed GTX 570 mentioned in this excerpt and not GTX 470: "At 244W TDP the card draws too much for 6+6, but you can count on an eventual GTX 470 to fill that niche."

mapesdhs - Tuesday, November 9, 2010

"I would've also liked to have seen some preliminary overclocking results ..."

Though obviously not a true oc'ing revelation, I note with interest there's already a factory oc'd 580 listed on seller sites (Palit Sonic), with an 835 core and 1670 shader. The pricing is peculiar though, with one site pricing it the same as most reference cards, another site pricing it 30 UKP higher. Of course though, none of them show it as being in stock yet. :D

Anyway, thanks for the writeup! At least the competition for the consumer finally looks to be entering a more sensible phase, though it's a shame the naming schemes are probably going to fool some buyers.

Ian.

Ryan Smith - Wednesday, November 10, 2010

You're going to have to wait until I have some more cards for some meaningful overclocking results. However clock-for-clock comparisons I can do.

http://www.anandtech.com/show/4012/nvidias-geforce...

JimmiG - Tuesday, November 9, 2010

Well technically, this is not a 512-SP card at 772 MHz. This is because if you ever find a way to put all 512 processors at 100% load, the throttling mechanism will kick in.

That's like saying you managed to overclock your CPU to 4.7 GHz.. sure, it might POST, but as soon as you try to *do* anything, it instantly crashes.

Ryan Smith - Tuesday, November 9, 2010

Based on the performance of a number of games and compute applications, I am confident that power throttling is not kicking in for anything besides FurMark and OCCT.