NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

Mass Effect 2

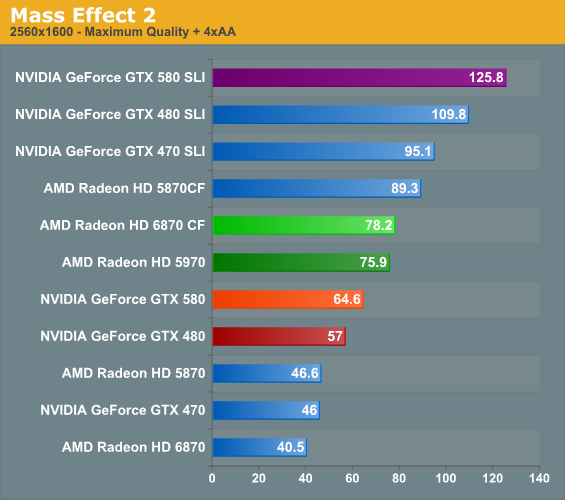

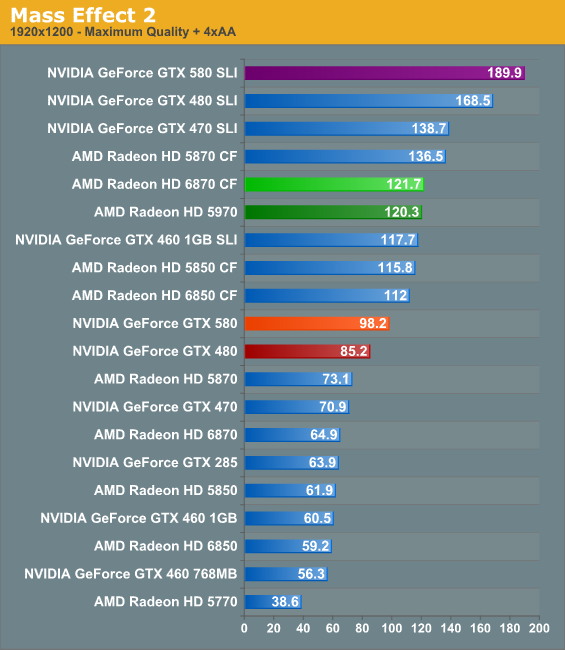

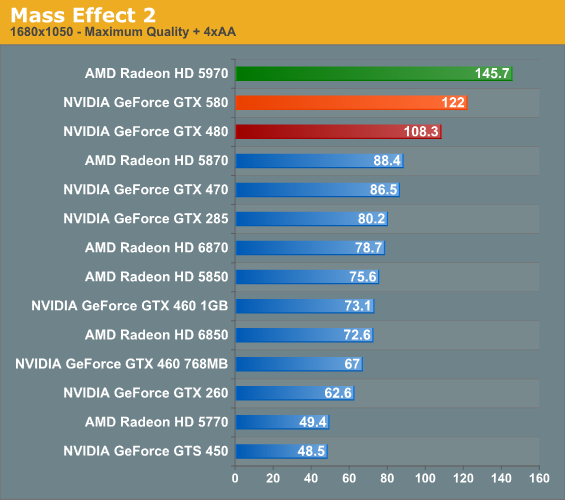

Electronic Arts’ space-faring RPG is our Unreal Engine 3 game. While it doesn’t have a built-in benchmark, it does let us force anti-aliasing through driver control panels, giving us a better idea of UE3’s performance at higher quality settings. Since we can’t use a recording/benchmark in ME2, we use FRAPS to record a short run.

Mass Effect 2 has always surprised us by just how strenuous it is, even though UE3 is intended to be flexible to reach a wide range of systems. Furthermore it doesn’t seem to benefit nearly as much from the GTX 580 as other games, with the performance advantage over the GTX 480 shrinking to around 13%. The ultimate victor ends up being the AMD multi-GPU setups, and farther above that still is the GTX 470 SLI.
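As a rough illustration of how a FRAPS-style run turns into the figures discussed here, the sketch below computes an average frame rate from cumulative frame timestamps and the relative advantage of one card over another. It assumes the timestamps are in milliseconds; the function names and sample values are hypothetical and are not taken from our test data.

```python
def average_fps(timestamps_ms):
    """Average frames per second over a run.

    timestamps_ms: cumulative time of each recorded frame, in milliseconds
    (assumed input format for this sketch).
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # N timestamps bound N - 1 frame intervals.
    return (len(timestamps_ms) - 1) / elapsed_s


def percent_advantage(new_fps, old_fps):
    """Relative advantage of new_fps over old_fps, in percent."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return (new_fps / old_fps - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical runs: same duration, different frame counts.
    run_a = [i * 10.0 for i in range(101)]   # 100 frames in 1 second
    run_b = [i * 12.5 for i in range(81)]    # 80 frames in 1 second
    fps_a, fps_b = average_fps(run_a), average_fps(run_b)
    print(f"{fps_a:.1f} fps vs {fps_b:.1f} fps: "
          f"{percent_advantage(fps_a, fps_b):.1f}% advantage")
```

Averaging over the whole run rather than per frame keeps a handful of very fast or very slow frames from skewing the result.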

160 Comments


RussianSensation - Wednesday, November 10, 2010

Very good point, techcurious. Which is why the comment in the review about the GTX580 not being a quiet card at load is somewhat misleading. I have lowered my GTX470 from 40% idle fan speed to 32% fan speed and my idle temperatures only went up from 38*C to 41*C. At 32% fan speed I cannot hear the card at all over other case fans and Scythe S-Flex F cpu fan. You could do the same with almost any videocard.

Also, as far as FurMark goes, the test does push all GPUs beyond their TDPs. TDP is typically not the most power the chip could ever draw, such as under a power virus like FurMark, but rather the maximum power that it would draw when running real applications. Since the HD58/68xx series already have software and hardware PowerPlay enabled, which throttles their cards under power viruses like FurMark, it was already meaningless to use FurMark for "maximum" power consumption figures. Besides, FurMark is just a theoretical application. AMD and NV implement throttling to prevent VRM/MOSFET failures. This protects their customers.

While FurMark can be great for stability/overclock testing, the power consumption tests from it are completely meaningless since it is not something you can achieve in any videogame (can a videogame utilize all GPU resources to 100%? Of course not, since there are always bottlenecks in GPU architectures).

techcurious - Wednesday, November 10, 2010

How cool would it be if nVidia added to its control panel a tab for dynamic fan speed control based on 3 user selectable settings.

1) Quiet... which would spin the fan at the lowest speed while staying just enough below the GPU temperature threshold at load and somewhere in the area of low 50 C temp in idle.

2) Balanced.. which would be a balance between moderate fan speed (and noise levels) resulting in slightly lower load temperatures and perhaps 45 C temp in idle.

3) Cool.. which would spin the fan the fastest, be the loudest setting but also the coolest. Keeping load temperatures well below the maximum threshold and idle temps below 40 C. This setting would please those who want to extend the life of their graphics card as much as possible and do not care about noise levels, and may have other fans in their PC that are louder anyway!

Maybe Ryan or someone else from Anandtech (who would obviously have much more pull and credibility than me) could suggest such a feature to nVidia and AMD too :o)

BlazeEVGA - Wednesday, November 10, 2010

Here's what I dig about you guys at AnandTech, not only are your reviews very nicely presented but you keep it relevant for us GTX 285 owners and other more legacy bound interested parties - most other sites fail to provide this level of complete comparison. Much appreciated. Your charts are fantastic, your analysis and commentary is nicely balanced and attention to detail is most excellent - this all makes for a more simplified evaluation by the potential end user of this card.

Keep up the great work...don't know what we'd do without you...

Robaczek - Thursday, November 11, 2010

I really liked the article but would like to see some comparison with nVidia GTX295..

massey - Wednesday, November 24, 2010

Do what I did. Look up their article on the 295, and compare the benchmarks there to the ones here.

Here's the link:

http://www.anandtech.com/show/2708

Seems like Crysis runs 20% faster at max res and AA. Is a 20% speed up worth $500? Maybe. Depends on how anal you are about performance.

lakedude - Friday, November 12, 2010

Someone needs to edit this review! The acronym "AMD" is used in several places when it is clear "ATI" was intended.

For example:

"At the same time, at least the GTX 580 is faster than the GTX 480 versus AMD’s 6800/5800 series"

lakedude - Friday, November 12, 2010

Never mind, looks like I'm behind the times...

Nate007 - Saturday, November 13, 2010

In the end we (the gamers) who purchase these cards NEED to be supporting BOTH sides so that AMD and Nvidia can both manage to stay profitable.

It's not a question of who Pawns who but more importantly that we have CHOICE !!

Maybe some of the people here (or MOST) are not old enough to remember the days when mighty "INTEL" ruled the landscape. I can tell you for 100% fact that CPUs were expensive and there was no choice in the matter.

We can agree to disagree but in the END, we need AMD and we need NVIDIA to keep pushing the limits and offering buyers a CHOICE.

God help us if we ever lose one or the other, then we won't be here reading reviews and/or jousting back and forth on who has the biggest stick. We will all be crying and complaining how expensive it will be to buy a decent video card.

Here's to both companies ..............Long live NVIDIA & AMD !

Philip46 - Wednesday, November 17, 2010

Finally, at the high end Nvidia delivers a much cooler and quieter single-GPU card that is much more like the GTX 460, and less like the 480, in terms of performance/heat balance.

I'm one who needs Physx in my games, and until now, I had to go with a SLI 460 setup for one PC and, for a lower rig, a 2GB 460 GTX (for maxing GTA:IV out).

Also, I just prefer the crisp Nvidia desktop quality, and its drivers are more stable. (and ATI's CCC is a nightmare)

For those who want everything, and who use Physx, the 580 and its upcoming 570/560 will be the only way to go.

For those who live by framerate only, then you may want to see what the next ATI lineup will deliver for its single GPU setup.

But whatever you choose, this is a GREAT thing for the industry..and the gamer, as Nvidia delivered this time with not just performance, but also lower temps/noise levels, as well.

This is what the 480 should have been, but thankfully they fixed it.

swing848 - Wednesday, November 24, 2010

Again, Anand is all over the place with different video cards, making judgements difficult.

He even threw in a GTS 450 and an HD 4870 here and there. Sometimes he would include the HD 5970 and often not.

Come on Anand, be consistent with the charts.