NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

Keeping It Cool: Transistors, Throttles, and Coolers

Beyond the specific architectural improvements for GF110 we previously discussed, NVIDIA has also been tinkering with their designs at a lower level to see what they could do to improve their performance in conjunction with TSMC’s 40nm manufacturing process. GF100/GTX480 quickly gathered a reputation as a hot product, and this wasn’t an unearned reputation. Even with an SM fused off, GTX 480 already had a TDP of 250W, and the actual power draw could surpass that in extreme load situations such as FurMark.

NVIDIA can (and did) tackle the problem on the cooling side by better dissipating that heat, but keeping their GPUs from generating it in the first place was equally important. This mattered most if they wanted to push high-clocked, fully-enabled designs into the consumer GeForce and HPC Tesla markets, with the latter in particular not being a market where you can simply throw more cooling at the problem. As a result NVIDIA had to look at GF110 at a transistor level and determine what they could do to cut power consumption.

Semiconductors are a near-perfect power-to-heat conversion device, so a lot of work goes into getting as much done with as little power as possible. This is compounded by the fact that dynamic power (which does useful work) only represents some of the power used – the rest of the power is wasted as leakage power. In the case of a high-end GPU NVIDIA doesn’t necessarily want to reduce dynamic power usage and have it impact performance; instead they want to go after leakage power. This in turn is complicated by the fact that leakage and high clocks go hand in hand, making it difficult to separate the two: leaky transistors are high-clocking transistors, and vice versa.
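To make the split between dynamic and leakage power concrete, here is a minimal sketch using the standard first-order CMOS model, where switching power scales as activity × capacitance × voltage² × frequency and leakage power is voltage × leakage current. Every number below is a hypothetical placeholder chosen to keep the arithmetic simple; none of them are measurements of GF100 or GF110.

```python
def dynamic_power(activity, capacitance_f, voltage_v, freq_hz):
    """First-order CMOS switching power in watts: alpha * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


def leakage_power(voltage_v, leak_current_a):
    """Static power lost to leakage current in watts: V * I_leak."""
    return voltage_v * leak_current_a


def total_power(activity, capacitance_f, voltage_v, freq_hz, leak_current_a):
    """Total chip power is the sum of the switching and leakage terms."""
    return (dynamic_power(activity, capacitance_f, voltage_v, freq_hz)
            + leakage_power(voltage_v, leak_current_a))


# Hypothetical chip: same clocks and voltage, only the leakage current differs.
baseline = total_power(0.2, 1.0e-6, 1.0, 700e6, leak_current_a=80.0)
less_leaky = total_power(0.2, 1.0e-6, 1.0, 700e6, leak_current_a=60.0)
savings = baseline - less_leaky
```

In this toy model the dynamic term is identical in both cases, so the entire saving comes from the leakage term. That is the kind of reduction the article describes NVIDIA chasing: lower power without touching clocks.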

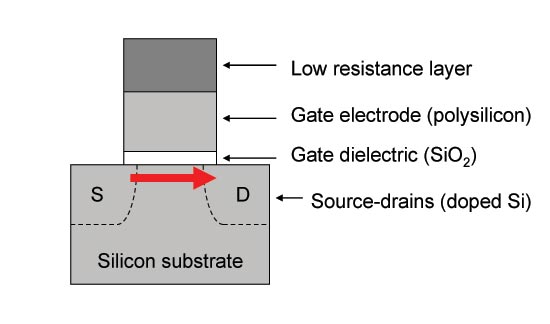

A typical CMOS transistor: Thin gate dielectrics lead to leakage

Thus the trick to making a good GPU is to use leaky transistors where you must, and use slower transistors elsewhere. This is exactly what NVIDIA did for GF100, where they primarily used 2 types of transistors differentiated in this manner. At a functional unit level we’re not sure which units used what, but it’s a good bet that most devices operating on the shader clock used the leakier transistors, while devices attached to the base clock could use the slower transistors. Of course GF100 ended up being power hungry – and by extension we assume leaky anyhow – so that design didn’t necessarily work out well for NVIDIA.

For GF110, NVIDIA included a 3rd type of transistor, which they describe as having “properties between the two previous ones”. Or in other words, NVIDIA began using a transistor that was leakier than a slow transistor, but not as leaky as the leakiest transistors in GF100. Again we don’t know which types of transistors were used where, but in using all 3 types NVIDIA ultimately was able to lower power consumption without needing to slow any parts of the chip down. In fact this is where virtually all of NVIDIA’s power savings come from, as NVIDIA removed few if any transistors outright, given that GF110 retains all of GF100’s functionality.

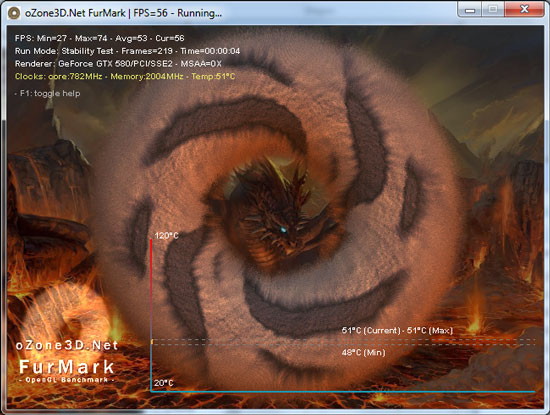

Of course reducing leakage is one way to reduce power consumption, but it doesn’t solve NVIDIA’s other problems in hitting their desired TDP. Both NVIDIA and AMD base their GPU TDP specifications around “real world” applications and games, with NVIDIA widely viewed as the more aggressive of the two on this front. In either case load-generating programs like FurMark and OCCT do not exist in AMD or NVIDIA’s worlds, leading both companies to despise these programs and label them “power viruses”, among other terms.

After a particularly rocky relationship with FurMark blowing up VRMs on the Radeon 4000 series, AMD instituted safeties in their cards with the 5000 series to protect against FurMark – AMD monitored the temperature of the VRMs, and would immediately downclock the GPU if the VRM temperatures exceeded specifications. Ultimately as this was temperature based AMD’s cards were allowed to run to the best of their capabilities, so long as they weren’t going to damage themselves. In practice we rarely encountered AMD’s VRM protection even with FurMark except in overclocking scenarios, where overvolting cards such as the 5970 quickly drove up the temperature of the VRMs.

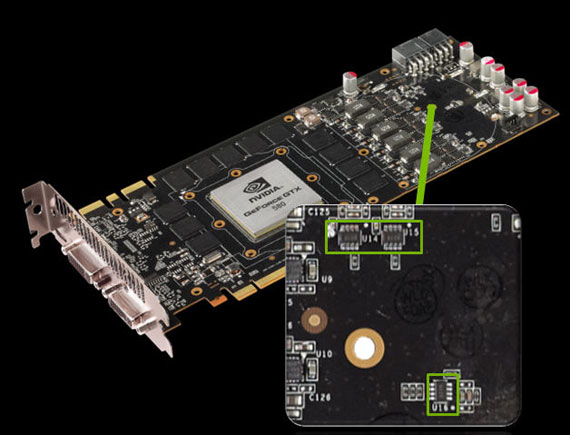

For GTX 580 NVIDIA is taking an even more stringent approach than AMD, as they’ll be going after power consumption itself rather than just focusing on protecting the card. Attached to GTX 580 are a series of power monitoring chips, which monitor the amount of power the card is drawing from the PCIe slot and PCIe power plugs. By collecting this information NVIDIA’s drivers can determine if the card is drawing too much power, and slow the card down to keep it within spec. This kind of power throttling is new for GPUs, though it’s been common with CPUs for a long time.
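As a rough illustration of the monitoring side, the sketch below sums per-rail power (volts × amps) across the slot and the two power plugs and compares the total against a board limit. The rail names, sample readings, and the 250W limit are all hypothetical; the article does not describe how the monitoring chips actually report their data to the driver.

```python
from dataclasses import dataclass


@dataclass
class RailSample:
    """One reading from a (hypothetical) power monitoring chip."""
    name: str
    volts: float
    amps: float

    @property
    def watts(self) -> float:
        return self.volts * self.amps


def board_power(samples):
    """Total board power is the sum of the power drawn on each rail."""
    return sum(s.watts for s in samples)


def over_limit(samples, limit_watts):
    """True when the summed draw exceeds the board's power limit."""
    return board_power(samples) > limit_watts


# Hypothetical readings for the PCIe slot and the two PCIe power plugs.
samples = [
    RailSample("pcie_slot", 12.0, 5.0),
    RailSample("pcie_plug_1", 12.0, 6.0),
    RailSample("pcie_plug_2", 12.0, 11.0),
]
exceeded = over_limit(samples, limit_watts=250.0)  # placeholder limit
```

Because the check works on measured power rather than temperature, it would trip as soon as the draw crosses the limit, which is the distinction the article draws against AMD's VRM-temperature approach.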

NVIDIA’s reasoning for this change doesn’t pull any punches: it’s to combat OCCT and FurMark. At an end-user level FurMark and OCCT really can be dangerous – even if they can’t break the card any longer, they can still cause other side-effects by drawing too much power from the PSU. As a result having this protection in place more or less makes it impossible to toast a video card or any other parts of a computer with these programs. Meanwhile at a PR level, we believe that NVIDIA is tired of seeing hardware review sites publish numbers showcasing GeForce products drawing exorbitant amounts of power even though these numbers represent non-real-world scenarios. By throttling FurMark and OCCT like this, we shouldn’t be able to get their cards to pull so much power. We still believe that tools like FurMark and OCCT are excellent load-testing tools for finding a worst-case scenario and helping our readers plan system builds with those scenarios in mind, but at the end of the day we can’t argue that this isn’t a logical position for NVIDIA.

Power Monitoring Chips Identified

While this is a hardware measure, the real trigger is in software. FurMark and OCCT are indeed throttled, but we’ve been able to throw other programs at the GTX 580 that cause a similar power draw. If NVIDIA were actually doing this all in hardware everything would be caught, but clearly it’s not. For the time being this simplifies everything – you need not worry about throttling in anything else whatsoever – but there will be ramifications if NVIDIA actually uses the hardware to its full potential.
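The software gating can be sketched as a two-condition check: throttle only when power exceeds the limit and the running application is on a driver-side list. This follows the NVIDIA support note a reader quotes in the comments, which also gives the 50% clock reduction. How the driver actually identifies an application is not documented here, so the string identifiers, function name, and clock values below are assumptions for illustration only.

```python
# Hypothetical identifiers; the real driver's detection method is not public.
STRESS_APPS = {"furmark", "occt"}

# Per the quoted NVIDIA note, clocks are reduced by 50% when throttling.
THROTTLE_FACTOR = 0.5


def effective_clock(app_id, board_watts, limit_watts, clock_mhz):
    """Return the clock the card would run at under this toy policy.

    Throttling requires BOTH conditions: power over the limit AND the
    application being one of the listed stress apps.
    """
    is_listed = app_id.lower() in STRESS_APPS
    if board_watts > limit_watts and is_listed:
        return clock_mhz * THROTTLE_FACTOR
    return clock_mhz


# Placeholder numbers: an 800MHz clock and a 250W limit.
throttled = effective_clock("FurMark", 300.0, 250.0, 800.0)
unlisted = effective_clock("other_load_test", 300.0, 250.0, 800.0)
```

The second call shows the gap the article points out: an unlisted program drawing the same power runs at full clocks, because the list check, not the power reading, is the deciding factor.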

Much like GDDR5 EDC complicated memory overclocking, power throttling would complicate overall video card overclocking, particularly since there’s currently no way to tell when throttling kicks in. On AMD cards the clock drop is immediate, but on NVIDIA’s cards the drivers continue to report the card operating at full voltage and clocks. We suspect NVIDIA is using a NOP or HLT-like instruction here to keep the card from doing real work, but the result is that it’s completely invisible even to enthusiasts. At the moment it’s only possible to tell if it’s kicking in if an application’s performance is too low. It goes without saying that we’d like to have some way to tell if throttling is kicking in if NVIDIA fully utilizes this hardware.

Finally, with average and maximum power consumption dealt with, NVIDIA turned to improving cooling on the GTX 580 to bring temperatures down and to more quietly dissipate heat. GTX 480 not only was loud, but it had an unusual cooling design that, while fine by us, ended up raising eyebrows elsewhere. Specifically NVIDIA had heatpipes sticking out of the GTX 480, an exposed metal grill over the heatsink, and holes in the PCB on the back side of the blower to allow it to breathe from both sides. Considering we were dissipating over 300W at times it was effective, but apparently not a design NVIDIA liked.

So for GTX 580 NVIDIA has done a lot of work under the hood to produce a card that looks less like the GTX 480 and more like the all-enclosed coolers we saw with the GTX 200 series; the grill, external heatpipes, and PCB ventilation holes are all gone from the GTX 580, and no one would hold it against you to mistake it for a GTX 285. The biggest change in making this possible is NVIDIA’s choice of heatsink: NVIDIA has ditched traditional heatpipes and gone to the new workhorse of vapor chamber cooling.

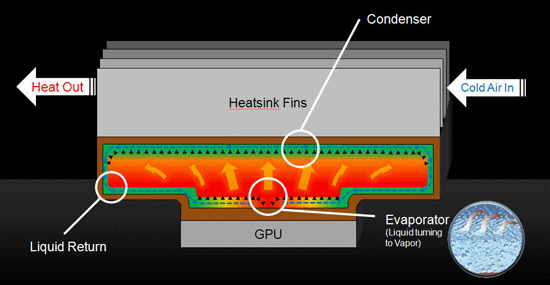

A Vapor Chamber Cooler In Action (Courtesy NVIDIA)

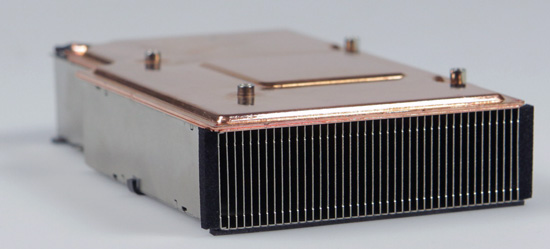

The GTX 580's Vapor Chamber + Heatsink

Vapor chamber coolers have been around for quite some time as aftermarket/custom coolers, and are often the signature design element for Sapphire; it was only more recently with the Radeon HD 5970 that we saw one become part of a reference GPU design. NVIDIA has gone down the same road and is now using a vapor chamber for the reference GTX 580 cooler. Visually this means the heatpipes are gone, while internally this should provide equal if not better heat conduction between the GPU’s heatspreader and the aluminum heatsink proper. The ultimate benefit is that with better heat transfer, the blower doesn’t need to run as hard to keep the heatsink cool enough to maintain the temperature difference between the heatsink and GPU.
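The link between better conduction and a slower blower can be sketched with a simple lumped thermal-resistance model: GPU temperature equals ambient temperature plus power times the sum of the resistances in the heat path. This is a generic textbook approximation, not NVIDIA's model, and the resistance, power, and temperature values are hypothetical.

```python
def gpu_temp_c(ambient_c, power_w, r_spreader_to_fins, r_fins_to_air):
    """Steady-state GPU temperature for two thermal resistances in series (K/W)."""
    return ambient_c + power_w * (r_spreader_to_fins + r_fins_to_air)


def max_fins_to_air_resistance(target_c, ambient_c, power_w, r_spreader_to_fins):
    """Largest fin-to-air resistance that still holds the GPU at target_c.

    A larger allowed value means the blower can spin slower.
    """
    return (target_c - ambient_c) / power_w - r_spreader_to_fins


# Hypothetical: 250W load, 30C intake air, 90C target GPU temperature.
heatpipe_budget = max_fins_to_air_resistance(90.0, 30.0, 250.0, 0.10)
vapor_chamber_budget = max_fins_to_air_resistance(90.0, 30.0, 250.0, 0.06)
```

With the lower spreader-to-fin resistance assumed for the vapor chamber, the fin-to-air budget grows, so the same GPU temperature can be held with less airflow.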

NVIDIA’s second change was to the blower itself, which is the source of all noise. NVIDIA found that the blower on the GTX 480 was vibrating against itself, producing additional noise and in particular the kind of high-pitch whining that makes a cooler come off as noisy. As a result NVIDIA has switched out the blower for a slightly different design that keeps a ring of plastic around the top, providing more stability. This isn’t a new design – it’s on all of our Radeon HD 5800 series cards – but much like the vapor chamber this is the first time we’ve seen it on an NVIDIA reference card.

Top: GTX 480 Blower. Bottom: GTX 580 Blower

Finally, NVIDIA has also tinkered with the shape of the shroud encasing the card for better airflow. NVIDIA already uses a slightly recessed shroud near the blower in order to allow some extra space between it and the next card, but they haven’t done anything with the overall shape until now. Starting with the GTX 580, the shroud is now slightly wedge-shaped between the blower and the back of the card; this according to NVIDIA improves airflow in SLI setups where there’s a case fan immediately behind the card by funneling more fresh air in to the gap between cards.

160 Comments


wtfbbqlol - Thursday, November 11, 2010 - link

Most likely an anomaly. Just compare the GTX480 to the GTX470 minimum framerate. There's no way the GTX480 is twice as fast as the GTX470.

Oxford Guy - Friday, November 12, 2010 - link

It does not look like an anomaly since at least one of the few minimum frame rate tests posted by Anandtech also showed the 480 beating the 580.

We need to see Unigine Heaven minimum frame rates, at the bare minimum, from Anandtech, too.

Oxford Guy - Saturday, November 13, 2010 - link

To put it more clearly... Anandtech only posted minimum frame rates for one test: Crysis.

In those, we see the 480 SLI beating the 580 SLI at 1920x1200. Why is that?

It seems to fit with the pattern of the 480 being stronger in minimum frame rates in some situations -- especially Unigine -- provided that the resolution is below 2K.

I do hope someone will clear up this issue.

wtfbbqlol - Wednesday, November 10, 2010 - link

It's really disturbing how the throttling happens without any real indication. I was really excited reading about all the improvements nVidia made to the GTX580 then I read this annoying "feature".

When any piece of hardware in my PC throttles, I want to know about it. Otherwise it just adds another variable when troubleshooting performance problem.

Is it a valid test to rename, say, crysis.exe to furmark.exe and see if throttling kicks in mid-game?

wtfbbqlol - Wednesday, November 10, 2010 - link

Well it looks like there is *some* official information about the current implementation of the throttling.

http://nvidia.custhelp.com/cgi-bin/nvidia.cfg/php/...

Copy and paste of the message:

"NVIDIA has implemented a new power monitoring feature on GeForce GTX 580 graphics cards. Similar to our thermal protection mechanisms that protect the GPU and system from overheating, the new power monitoring feature helps protect the graphics card and system from issues caused by excessive power draw.

The feature works as follows:

• Dedicated hardware circuitry on the GTX 580 graphics card performs real-time monitoring of current and voltage on each 12V rail (6-pin, 8-pin, and PCI-Express).

• The graphics driver monitors the power levels and will dynamically adjust performance in certain stress applications such as Furmark 1.8 and OCCT if power levels exceed the card’s spec.

• Power monitoring adjusts performance only if power specs are exceeded AND if the application is one of the stress apps we have defined in our driver to monitor such as Furmark 1.8 and OCCT.

- Real world games will not throttle due to power monitoring.

- When power monitoring adjusts performance, clocks inside the chip are reduced by 50%.

Note that future drivers may update the power monitoring implementation, including the list of applications affected."

Sihastru - Wednesday, November 10, 2010 - link

I never heard anyone from the AMD camp complaining about that "feature" with their cards and all current AMD cards have it. And what would be the purpose of renaming your Crysis exe? Do you have problems with the "Crysis" name? You think the game should be called "Furmark"?

So this is a non issue.

flyck - Wednesday, November 10, 2010 - link

the use of renaming is that nvidia uses name tags to identify whether it should throttle or not.... suppose person x creates a program and you use an older driver that does not include this name tag, you can break things.....

Gonemad - Wednesday, November 10, 2010 - link

Big fat YES. Please do rename the executable from crysis.exe to furmark.exe, and tell us.

Get furmark and go all the way around, rename it to Crysis.exe, but be sure to have a fire extinguisher in the premises. Caveat Emptor.

Perhaps just renaming is not enough, some checksumming is involved. It is pretty easy to change checksum without altering the running code, though. When compiling source code, you can insert comments in the code. When compiling, the comments are not dropped, they are compiled together with the running code. Change the comment, change the checksum. But furmark alone can do that.

Open the furmark on a hex editor and change some bytes, but try to do that in a long sequence of zeros at the end of the file. Usually compilers finish executables in round kilobytes, filling with zeros. It shouldn't harm the running code, but it changes the checksum, without changing byte size.

If it works, rename it Program X.

Ooops.

iwodo - Wednesday, November 10, 2010 - link

The good thing about GPU is that it scales VERY well ( if not linearly ) with transistors. 1 Node Die Shrink, Double the transistor count, double the performance.

Combined there are not bottleneck with Memory, which GDDR5 still have lots of headroom, we are very limited by process and not the design.

techcurious - Wednesday, November 10, 2010 - link

I didnt read through ALL the comments, so maybe this was already suggested. But, can't the idle sound level be reduced simply by lowering the fan speed and compromising idle temperatures a bit? I bet you could sink below 40db if you are willing to put up with an acceptable 45 C temp instead of 37 C temp. 45 C is still an acceptable idle temp.