ZFS - Building, Testing, and Benchmarking

by Matt Breitbach on October 5, 2010 4:33 PM EST

Posted in: IT Computing, Linux, NAS, Nexenta, ZFS

ZFS Features

ZFS includes two exciting features that dramatically improve the performance of read operations. I’m talking about ARC and L2ARC. ARC stands for adaptive replacement cache. ARC is a very fast block level cache located in the server’s memory (RAM). The amount of ARC available in a server is usually all of the memory except for 1GB.

For example, our ZFS server with 12GB of RAM has 11GB dedicated to ARC, which means our ZFS server will be able to cache 11GB of the most accessed data. Any read requests for data in the cache can be served directly from the ARC memory cache instead of hitting the much slower hard drives. This creates a noticeable performance boost for data that is accessed frequently.

As a general rule, you want to install as much RAM into the server as you can to make the ARC as big as possible. At some point adding more memory becomes cost prohibitive, which is where the L2ARC becomes important. The L2ARC is the second level adaptive replacement cache. In ZFS systems, the devices that make up the L2ARC are often called “cache drives.”

These cache drives are physically MLC style SSD drives. These SSD drives are slower than system memory, but still much faster than hard drives. More importantly, the SSD drives are much cheaper than system memory. Most people compare the price of SSD drives with the price of hard drives, and this makes SSD drives seem expensive. Compared to system memory, MLC SSD drives are actually very inexpensive.

When cache drives are present in the ZFS pool, the cache drives will cache frequently accessed data that did not fit in ARC. When read requests come into the system, ZFS will attempt to serve those requests from the ARC. If the data is not in the ARC, ZFS will attempt to serve the requests from the L2ARC. Hard drives are only accessed when data does not exist in either the ARC or L2ARC. This means the hard drives receive far fewer requests, which is awesome given the fact that the hard drives are easily the slowest devices in the overall storage solution.
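The lookup order described above (ARC first, then L2ARC, then the hard drives) can be sketched as a small two-tier cache. This is only an illustration of the read path, not how ZFS is implemented: the class names `LRUTier` and `TieredReader` are hypothetical, capacities are counted in blocks rather than bytes, and plain LRU eviction stands in for the adaptive replacement policy that the real ARC uses.

```python
from collections import OrderedDict


class LRUTier:
    """Fixed-capacity cache tier with LRU eviction (a stand-in, not ZFS's ARC policy)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.blocks = OrderedDict()

    def get(self, key):
        if key not in self.blocks:
            return None
        self.blocks.move_to_end(key)  # mark as most recently used
        return self.blocks[key]

    def put(self, key, value):
        """Insert a block and return the evicted (key, value) pair, if any."""
        self.blocks[key] = value
        self.blocks.move_to_end(key)
        if len(self.blocks) > self.capacity:
            return self.blocks.popitem(last=False)  # drop least recently used
        return None


class TieredReader:
    """Serve reads from the RAM tier, then the SSD tier, then the disk."""

    def __init__(self, disk, arc_blocks, l2arc_blocks):
        self.disk = disk  # any mapping of block id -> data
        self.arc = LRUTier(arc_blocks)
        self.l2arc = LRUTier(l2arc_blocks)
        self.hits = {"arc": 0, "l2arc": 0, "disk": 0}

    def read(self, key):
        value = self.arc.get(key)
        if value is not None:
            self.hits["arc"] += 1
            return value
        value = self.l2arc.get(key)
        if value is not None:
            self.hits["l2arc"] += 1
        else:
            value = self.disk[key]  # slowest path: go to the hard drives
            self.hits["disk"] += 1
        evicted = self.arc.put(key, value)
        if evicted is not None:
            # Blocks pushed out of the RAM tier spill into the SSD tier.
            self.l2arc.put(*evicted)
        return value
```

Reading the same few blocks repeatedly through a `TieredReader` and then inspecting `hits` shows the effect the article describes: after the first pass, the disk counter stops growing as long as the working set fits in the two cache tiers.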

In our ZFS project, we added a pair of 160GB Intel X25-M MLC SSD drives for a total of 320GB of L2ARC. Between our ARC of 11GB and our L2ARC of 320GB, our ZFS solution can cache over 300GB of the most frequently accessed data! This hybrid solution offers considerably better performance for read requests because it reduces the number of accesses to the large, slow hard drives.

Things to Keep in Mind

There are a few things to remember. Cache drives cannot be mirrored. When you add cache drives, you cannot set them up as a mirror, but there is no need to, since the content is already mirrored on the hard drives. The cache drives are just a cheap alternative to RAM for caching frequently accessed content.

Another thing to remember is you still need to use SLC SSD drives for the ZIL drives. ZIL stands for "ZFS Intent Log", and acts as an intermediary for write caching. Not having ZIL drives severely slows down write access. By adding the ZIL drives you significantly increase write speeds. This is still not as fast as a RAM based write cache on a RAID card, but it is much better than not having anything (see Solaris ZFS Best Practices For Log Devices). In short, the SLC SSD drives used as ZIL drives dramatically improve the performance of write actions, while the MLC SSD drives used as cache drives improve read performance.

It is also important to remember that the L2ARC will require some memory to operate. A portion of the ARC will be used to index and manage the content located in the L2ARC. A general rule of thumb is that 1-2GB of ARC will be used for every 100GB of L2ARC. With a 300GB L2ARC, we will give up 3-6GB of ARC. This will leave us with 5-8GB of ARC memory to use to cache the most frequently accessed files.
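The arithmetic behind that estimate is easy to reproduce. The sketch below simply encodes the two rules of thumb from this article (all RAM except 1GB goes to ARC, and every 100GB of L2ARC costs 1-2GB of ARC); the function name and parameter names are ours, and the result is a rough planning figure rather than a measured value.

```python
def arc_left_after_l2arc(ram_gb, l2arc_gb, reserved_gb=1.0,
                         overhead_gb_per_100gb=(1.0, 2.0)):
    """Return (worst_case_gb, best_case_gb) of ARC left for caching data.

    reserved_gb and overhead_gb_per_100gb are the article's rules of thumb,
    not values read from a running system.
    """
    arc_gb = ram_gb - reserved_gb
    low, high = overhead_gb_per_100gb
    overhead_low = l2arc_gb / 100 * low    # optimistic index overhead
    overhead_high = l2arc_gb / 100 * high  # pessimistic index overhead
    return arc_gb - overhead_high, arc_gb - overhead_low


# The server in this article: 12GB of RAM and roughly 300GB of L2ARC.
worst, best = arc_left_after_l2arc(ram_gb=12, l2arc_gb=300)
print(f"ARC left for data: {worst:.1f} to {best:.1f} GB")
```

Calling the function with the full 320GB of cache drives instead of the rounded 300GB shifts the range down slightly, which is worth keeping in mind when sizing RAM for a larger L2ARC.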

Effective Caching to Virtualized Environments

At this point, you are probably wondering how effectively the two levels of caching will be able to cache the most frequently used data, especially when we are talking about 9TB of formatted RAID10 capacity. Will 11GB of ARC and 320GB L2ARC make a significant difference for overall performance? It will depend on what type of data is located on the storage array and how it is being accessed. If it contained 9TB of files that were all accessed in a completely random way, the caching would likely not be effective. However, we are planning to use the storage for virtual machine file systems and this will cache very effectively for our intended purpose.

When you plan to deploy hundreds of virtual machines, the first step is to build a base template that all of the virtual machines will start from. If you were planning to host a lot of Linux virtual machines, you would build the base template by installing Linux. When you get to the step where you would normally configure the server, you would shut off the virtual machine. At that point, you would have the base template ready. Each additional virtual machine would simply be chained off the base template. The virtualization technology will keep the changes specific to each virtual machine in its own child or differencing file.

When the virtualization solution is configured this way, the base template will be cached quite effectively in the ARC (main system memory). This means the main operating system files and cPanel files should deliver near RAM-disk performance levels. The L2ARC will be able to effectively cache the most frequently used content that is not shared by all of the virtual machines, such as the content of the files and folders in the most popular websites or MySQL databases. The least frequently accessed content will be pulled from the hard drives, but even that should show solid performance since it will be RAID10 across 18 drives. The frequently accessed reads will not burden the RAID10 volume at all, because they are already served from ARC or L2ARC.

Testing the L2ARC

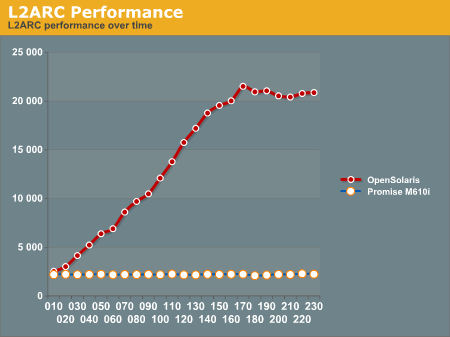

We thought it would be fun to actually test the L2ARC and build a chart of the performance as a function of time. To test and graph the usefulness of the L2ARC, we set up an iSCSI share on the ZFS server and then ran Iometer from our test blade in our blade center. We ran these tests over gigabit Ethernet.

Iometer Test Details:

- 25GB working set

- 4k blocks

- 100% random

- 100% read

- load 32 (constant)

- four hour test

Every ten minutes during the test, we grabbed the “Last performance” values (IOPS, MB/sec) from Iometer and wrote them down to build a performance chart. Our goal was to be able to graph the performance as a function of time so we could illustrate the usefulness of the L2ARC.

We ran the same test using the Promise M610i (16 1TB WD RE3 drives in RAID10) box to get a comparison graph. The Promise box is not a ZFS style solution and does not have any L2ARC style caching feature. We expected the ZFS box to outperform the Promise box, and we expected the ZFS box to increase performance as a function of time because the L2ARC would become more populated the longer the test ran.

The Promise box consistently delivered 2200 to 2300 IOPS every time we checked performance during the entire 4 hour test. The ZFS box started by delivering 2532 IOPS at 10 minutes into the test and delivered 20873 IOPS by the end of the test.

Here is the chart of the ZFS box performance results:

Initially, the two SAN boxes deliver similar performance, with the Promise box at 2200 IOPS and the ZFS box at 2500 IOPS. Once the L2ARC is completely populated, the ZFS box delivers more than eight times its own starting performance and roughly nine times what the Promise box delivers!
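As a quick check on the figures reported above, the speedup and the resulting throughput can be worked out directly. The IOPS values are the ones from this test; the only assumptions we add are that "4k blocks" means 4096 bytes and that gigabit Ethernet tops out at a raw 125 MB/s before protocol overhead.

```python
ZFS_START_IOPS = 2532      # 10 minutes into the test
ZFS_END_IOPS = 20873       # end of the four hour test
PROMISE_IOPS = (2200, 2300)
BLOCK_BYTES = 4096         # assumption: "4k" means 4096 bytes
GIGABIT_BYTES_PER_S = 1_000_000_000 / 8  # raw line rate, ignoring overhead


def speedup(after_iops, before_iops):
    """Ratio of two IOPS figures."""
    return after_iops / before_iops


def throughput_mb_per_s(iops, block_bytes=BLOCK_BYTES):
    """Convert IOPS at a fixed block size to decimal megabytes per second."""
    return iops * block_bytes / 1_000_000


vs_start = speedup(ZFS_END_IOPS, ZFS_START_IOPS)
vs_promise = [speedup(ZFS_END_IOPS, p) for p in PROMISE_IOPS]
end_mb_s = throughput_mb_per_s(ZFS_END_IOPS)
link_share = end_mb_s * 1_000_000 / GIGABIT_BYTES_PER_S

print(f"ZFS end vs. ZFS start: {vs_start:.2f}x")
print(f"ZFS end vs. Promise:   {min(vs_promise):.2f}x to {max(vs_promise):.2f}x")
print(f"End throughput:        {end_mb_s:.1f} MB/s ({link_share:.0%} of raw gigabit)")
```

The last line is the interesting one: at the end of the test the ZFS box is already using a large share of the single gigabit link, so the network is starting to matter as much as the storage.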

Notice that ZFS limits how quickly the L2ARC is populated to reduce wear on the cache drives. It takes a few hours to populate the L2ARC and achieve maximum performance. That seems like a long time when running benchmarks, but it is actually a very short period of time in the life cycle of a typical SAN box.
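To get a feel for why warm-up takes this long, here is a back-of-the-envelope lower bound on fill time. The 8 MB/s feed rate is an assumed, illustrative throttle value, not something we measured on this box, and the function name is ours. Real warm-up takes longer than this bound because the cache drives are only fed with blocks that have already passed through the ARC.

```python
def min_fill_time_hours(working_set_gb, feed_rate_mb_per_s):
    """Lower bound on the time to copy a working set onto the cache drives.

    feed_rate_mb_per_s is the assumed rate at which the L2ARC is allowed
    to be written; 1GB is treated as 1024MB.
    """
    seconds = working_set_gb * 1024 / feed_rate_mb_per_s
    return seconds / 3600


# The 25GB Iometer working set at an assumed 8 MB/s feed rate.
hours = min_fill_time_hours(working_set_gb=25, feed_rate_mb_per_s=8)
print(f"At least {hours:.2f} hours to populate the cache")
```

Even under this optimistic assumption the cache needs the better part of an hour just to absorb the test's working set, which fits the gradual ramp we saw over the four hour run.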

102 Comments


mbreitba - Tuesday, October 5, 2010 - link

Thanks for the comment on the ZIL.

As far as using the X25-E's as ZIL devices - when we built the box initially, the X25-E's were the best choice at the time. Future builds will probably include a capacitor-backed SSD.

James5mith - Tuesday, October 5, 2010 - link

For what it's worth, we are currently using roughly 16 of the Supermicro 846-E1 chassis in our storage solutions.

Drive numbering is from bottom to top, left to right. Don't know if this helps or not.

5 11 17 23

4 10 16 22

3 9 15 21

2 8 14 20

1 7 13 19

0 6 12 18

badhack - Tuesday, October 5, 2010 - link

I would be curious to know how the performance compares to traditional fs caching on Linux w/ ext3 or ext4 with same amount of memory and a few SSD drives.

Maveric007 - Tuesday, October 5, 2010 - link

There are a few options within Linux that would be pretty interesting to see. FS caching and the different schedulers that are available within Linux. Also I would throw out ext3 and replace that with ext4 and xfs. Redhat is now supporting xfs and there are just tons of tunables for xfs compared to the other file systems.

badnews - Tuesday, October 5, 2010 - link

Thanks Matt, I've been following the build over at your blog and this is an excellent article to tie it all together. I hope you follow up with your "things we'd do differently" in future articles. I would also love to see some more benchmarking against more alternatives, e.g. Open-E, or even an off-the-shelf EqualLogic.

Keep up the good work :)

Fallen Kell - Tuesday, October 5, 2010 - link

Well, I know at least for Solaris 10.... I would suspect that OpenSolaris has it as well by now, since it has been out for at least 4 years that I know of...

https://<host>:6789

mbreitba - Tuesday, October 5, 2010 - link

You can install the ZFS Web GUI from the Solaris toolkit, but it isn't bundled into OpenSolaris. It is binary compatible, but it doesn't give any good options for iSCSI setup, as it only supported the old iSCSI target rather than the new COMSTAR target.

sfc - Tuesday, October 5, 2010 - link

How can you spend a page talking about how you aren't really worried about the future of Opensolaris, and then have half a paragraph mentioning "oh, btw, it's cancelled"? The project is clearly dead. They stopped releasing source almost a month ago. Oracle has made absolutely no guarantees about when or how source would be released in the future. For all we know, they could release only portions of Solaris Express, and do it months to years after the binaries drop.

http://opensolaris.org/jive/thread.jspa?messageID=...

I love ZFS/Opensolaris, I use it at home, but Opensolaris is dead.

Mattbreitbach - Tuesday, October 5, 2010 - link

OpenSolaris is indeed dead as far as development goes, but it's still viable if you want to use the last build released, which is what all of our performance figures are based on. I will be writing some companion articles to this one talking about not only the death of OpenSolaris, but its alternative, OpenIndiana, and the Promise M610i used as a comparison in this article.

andersenep - Tuesday, October 5, 2010 - link

The OpenSolaris project may be dead but ZFS and all the CDDL licensed code is still out there. Illumos, OpenIndiana and a few other distros are still out there and available. Oracle has stated they will continue to release source code after Solaris releases and will also provide binary preview releases in the form of Solaris Express. To say Solaris and ZFS are dead is pretty premature.

Whatever happens, the existing code is out there. To call it dead is a bit premature. Sure the project that had the name 'OpenSolaris' has been canceled, but everything that made it up (minus a small few closed bits that have already been replaced) lives on.