Server Clash: DELL's Quad Opteron DELL R815 vs HP's DL380 G7 and SGI's Altix UV10

by Johan De Gelas on September 9, 2010 7:30 AM EST

Posted in: IT Computing, AMD, Intel, Xeon, Opteron

Server number 3: the Quanta QSSC-S4R or SGI Altix UV 10

| Component | Details |
|---|---|
| CPU | Four Xeon X7560 at 2.26GHz |
| RAM | 16 x 4GB Samsung 1333MHz CH9 |
| Motherboard | QCI QSSC-S4R 31S4RMB0000 |
| Chipset | Intel 7500 |
| BIOS version | QSSC-S4R.QCI.01.00.0026.04052010655 |
| PSU | 4 x Delta DPS-850FB A S3F E62433-004 850W |

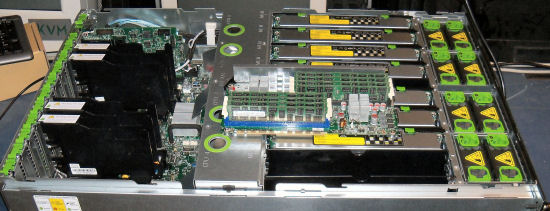

Quanta's 50kg 4U beast, which we reviewed a month ago, represents the quad Xeon 7500 platform. The interesting thing about this server is that its hardware is identical to the SGI Altix UV10: SGI confirms that the motherboard inside was designed by QSSC and Intel, and SGI's own product pictures indeed show an identical server.

Maximum expandability and scalability are the focus of this server: 10 PCIe slots, quad gigabit Ethernet onboard, and 64 DIMM slots. The disadvantage of this enormous number of DIMM slots is that they are spread over eight separate memory boards.

Each easily accessible memory board has two memory buffers. All these buffers require power, as shown by the heatsinks on top of them. The PSUs use a 2+2 configuration, but that is not necessarily a disadvantage: the PSU management logic is smart enough to make sure that the redundant PSUs do not waste any power at all, a feature called "Cold Redundancy".

The QSSC-S4R server uses 130W TDP processors, which are probably the best, albeit most expensive, choice for this server. The lower-power 105W TDP Xeon E7540 has only six cores, less L3 cache (18MB), and runs at 2GHz. So it is questionable whether the performance/watt of the E7540 is better than that of the Xeon X7560, which has 33% more cores and runs at a 13% higher clock.
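The comparison above can be checked with a rough back-of-the-envelope calculation. The sketch below is only a naive model, not a measurement: it assumes throughput scales linearly with cores x clock, treats TDP as a stand-in for actual power draw, and ignores the difference in L3 cache. The `Cpu` class and its method names are hypothetical helpers introduced for illustration; the core counts, clocks, and TDPs are the ones quoted in the text (eight cores for the X7560 follows from "33% more cores" than six).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    name: str
    cores: int
    clock_ghz: float
    tdp_watts: float

    def naive_throughput(self) -> float:
        # Assumption: perfect scaling with core count and clock speed.
        return self.cores * self.clock_ghz

    def naive_perf_per_watt(self) -> float:
        # Assumption: TDP approximates real power consumption under load.
        return self.naive_throughput() / self.tdp_watts


x7560 = Cpu("Xeon X7560", cores=8, clock_ghz=2.26, tdp_watts=130)
e7540 = Cpu("Xeon E7540", cores=6, clock_ghz=2.0, tdp_watts=105)

clock_ratio = x7560.clock_ghz / e7540.clock_ghz
throughput_ratio = x7560.naive_throughput() / e7540.naive_throughput()
tdp_ratio = x7560.tdp_watts / e7540.tdp_watts
perf_per_watt_ratio = x7560.naive_perf_per_watt() / e7540.naive_perf_per_watt()

print(f"clock ratio:         {clock_ratio:.2f}")
print(f"throughput ratio:    {throughput_ratio:.2f}")
print(f"TDP ratio:           {tdp_ratio:.2f}")
print(f"perf/watt ratio:     {perf_per_watt_ratio:.2f}")
```

Under these simplifying assumptions the X7560 offers roughly 1.5x the naive throughput for about 1.24x the TDP, so it comes out around 20% ahead in performance/watt. Real workloads rarely scale perfectly, so this is only an indication of why the lower-TDP part is not automatically the more efficient choice.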

51 Comments

pablo906 - Saturday, September 11, 2010

High performance Oracle environments are exactly what's being virtualized in the Server world yet it's one of your premier benchmarks.

/edit should read

High performance Oracle environments are exactly what's not being virtualized in the Server world yet it's one of your premier benchmarks.

JohanAnandtech - Monday, September 13, 2010

"You run highly loaded Hypervisors. NOONE does this in the Enterprise space."

I agree. Isn't that what I am saying on page 12:

"In the real world you do not run your virtualized servers at their maximum just to measure the potential performance. Neither do they run idle."

The only reason why we run with highly loaded hypervisors is to measure the peak throughput of the platform, like VMmark does. We know that is not real-world, and does not give you a complete picture. That is exactly the reason why there is a page 12 and 13 in this article. Did you miss those?

Per Hansson - Sunday, September 12, 2010

Hi, please use a better camera for pictures of servers that cost thousands of dollars

In full size the pictures look terrible, way too much grain

The camera you use is a prime example of how far marketing have managed to take these things

10MP on a sensor that is 1/2.3 " (6.16 x 4.62 mm, 0.28 cm²)

A used DSLR with a decent 50mm prime lens plus a tripod really does not cost that much for a site like this

I love server pron pictures :D

dodge776 - Friday, September 17, 2010

I may be one of the many "silent" readers of your reviews Johan, but putting aside all the nasty or not-so-bright comments, I would like to commend you and the AT team for putting up such excellent reviews, and also for using industry-standard benchmarks like SAPS to measure throughput of the x86 servers.

Great work and looking forward to more of these types of reviews!

lonnys - Monday, September 20, 2010

Johan -

You note for the R815:

Make sure you populate at least 32 DIMMs, as bandwidth takes a dive at lower DIMM counts.

Could you elaborate on this? We have an R815 with 16x2GB and are not seeing the expected performance for our very CPU-intensive app. Perhaps adding another 16x2GB might help?

JohanAnandtech - Tuesday, September 21, 2010

This comment you quoted was written in the summary of the quad Xeon box.

16 DIMMs is enough for the R815 on the condition that you have one DIMM in each channel. Maybe you are placing the DIMMs wrongly? (Two DIMMs in one channel, zero DIMMs in the other?)

anon1234 - Sunday, October 24, 2010

I've been looking around for some results comparing maxed-out servers but I am not finding any.

The Xeon 5600 platform clocks the memory down to 800MHz whenever 3 dimms per channel are used, and I believe in some/all cases the full 1066/1333MHz speed (depends on model) is only available when 1 dimm per channel is used. This could be huge compared with an AMD 6100 solution at 1333MHz all the time, or a Xeon 7560 system at 1066 all the time (although some vendors clock down to 978MHz with some systems - IBM HX5 for example). I don't know if this makes a real-world difference on typical virtualization workloads, but it's hard to say because the reviewers rarely try it.

It does make me wonder about your 15-dimm 5600 system, 3 dimms per channel @800MHz on one processor with 2 DPC @ full speed on the other. Would it have done even better with a balanced memory config?

I realize you're trying to compare like to like, but if you're going to present price/performance and power/performance ratios you might want to consider how these numbers are affected if I have to use slower 16GB dimms to get the memory density I want, or if I have to buy 2x as many VMware licenses or Windows Datacenter processor licenses because I've purchased 2x as many 5600-series machines.

nightowl - Tuesday, March 29, 2011

The previous post is correct in that the Xeon 5600 memory configuration is flawed. You are running the processor in a degraded state 1 due to the unbalanced memory configuration as well as the differing memory speeds.

The Xeon 5600 processors can run at 1333MHz (with the correct DIMMs) with up to 4 ranks per channel. Going above this results in the memory speed clocking down to 800MHz which does result in a performance drop to the applications being run.

markabs - Friday, June 8, 2012

Hi there,

I know this is an old post but I'm looking at putting 4 SSDs in a Dell poweredge and had a question for you.

What raid card did you use with the above setup?

Currently a new Dell poweredge R510 comes with a PERC H700 raid card with 1GB cache and this is connected to a hot swap chassis. Dell want £1500 per SSD (crazy!) so I'm looking to buy 4 intel 520s and set them up in raid 10.

I just wanted to know what raid card you used, whether you had any trouble with it, and what raid setup you used?

many thanks.

Mark

ian182 - Thursday, June 28, 2012

I recently bought a G7 from www.itinstock.com and, if I am honest, it is perfect for my needs. I don't see the point in the higher end ones when it just works out a lot cheaper to buy the parts you need and add them to the G7.