Quad Xeon 7500, the Best Virtualized Datacenter Building Block?

by Johan De Gelas on August 10, 2010 5:10 PM EST- Posted in

- IT Computing

Stress Testing the High End

Our previous vApus Mark I gave an idea of how well systems perform when running several virtualized “heavy duty applications”: complex, network bandwidth gobbling web servers, large OLAP databases, and write intensive OLTP databases. Our benchmark was mostly based on vApus, a software client that fires off requests as if real users were stressing the server. Several client machines each run a vApus “slave” instance, and a “master” vApus instance manages them (for example, starting tests in sync) and collects the end results.

The first version of vApus had several limitations: it could simulate a maximum of about 1500 users per client (a limit of 32-bit Windows based software) and the number of clients that could be kept in sync was also limited. In the meantime, the core count of the servers that we test has been increasing at an almost ridiculous pace. When the first lines of vApus were written (at the end of 2006), octal core servers were considered the high-end. Only four years later we are now looking at 64-thread and 48-core monsters. Our ambitious way of benchmarking (simulating real-world users, not scripting benchmarks) resulted in scalability problems.

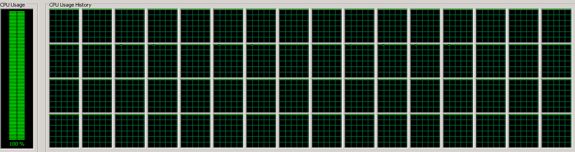

The lead developer of vApus, Dieter Vandroemme, decided to take all the lessons learned from 2.5 years of vApus development and apply them to a new vApus, built from scratch. Based on a new .NET 4.0 and 64-bit Windows foundation, and after spending a lot of time on software tuning, Dieter came up with a new vApus Client that was capable of producing 10,000 threads in about 3.5 seconds; up to 15,000 threads can be active on one client. If you know that every simulated user needs one thread, you’ll understand why this is very cool: we can now test extremely strong servers with only one humble client. A Core i7-750 (2.66GHz) needs only 20% CPU load to sustain 15,000 “users” sending off SQL statements to the server. Our mighty 64-thread, 32-core quad Xeon X7560 at 2.26GHz was brought to its knees, as you can see below.

We were excited to see this happen: finally we tamed the beast with 64 threads. Yes, you can easily stress out a server with HPC benchmarks such as Linpack or SpecFP, but measuring the potential of a server using popular business software is no easy feat. We had to deal with severe thread contention at the client side for example. With several vApus instances, we are now ready to test the strongest servers including those coming out in the next few years. We are even able to stress test complete clusters of modern servers with just a few clients.
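To make the "one thread per simulated user" model a bit more concrete, here is a minimal sketch in Python. It is not vApus code (vApus is a .NET application and its internals are not shown in this article); the names `run_load_test` and `fake_request` are made up for illustration, and the placeholder request simply sleeps instead of sending SQL. The barrier stands in for the idea of starting all users in sync, and each thread records its own response time.

```python
import threading
import time
from statistics import mean


def run_load_test(num_users, request_fn):
    """Run request_fn once per simulated user, one thread per user.

    Returns a list of response times in seconds, one entry per user.
    """
    # Every user thread plus the coordinating thread waits on this barrier,
    # so all requests are released together (a stand-in for "start in sync").
    start_barrier = threading.Barrier(num_users + 1)
    response_times = [None] * num_users  # each thread writes only its own slot

    def simulated_user(user_id):
        start_barrier.wait()
        started = time.perf_counter()
        request_fn(user_id)
        response_times[user_id] = time.perf_counter() - started

    threads = [
        threading.Thread(target=simulated_user, args=(i,))
        for i in range(num_users)
    ]
    for t in threads:
        t.start()
    start_barrier.wait()  # release every simulated user at once
    for t in threads:
        t.join()
    return response_times


def fake_request(user_id):
    # Hypothetical placeholder for sending a SQL statement or HTTP request.
    time.sleep(0.01)


if __name__ == "__main__":
    times = run_load_test(200, fake_request)
    print(f"{len(times)} users, mean response time {mean(times) * 1000:.1f} ms")
```

With thousands of such threads, most of the client's work is scheduling and synchronization rather than the requests themselves, which is where contention on the client side tends to show up. Note that CPython threads share a global interpreter lock, so this sketch only illustrates the structure, not the throughput a tuned client could reach.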

vApus' ultimate goal is not to stress servers to their maximum; we use it mostly for measuring response time at a given workload and to test stability of applications. But of course, we could not resist the chance to use it as a benchmark too. It was time to build a new benchmark, and vApus Mark II was born.

51 Comments


haplo602 - Wednesday, August 11, 2010 - link

This is one of the bottlenecks of your virtualised environment. A storage solution is only the limit if you do not use it as it was designed to be used. The more IO demanding applications you have, the less virtualisation is going to offer any benefits. Usually CPU power is the last issue after network, disk and memory.

I had a good laugh at the opening page. High end servers are High end not because of the increased performance but because of the better management and disaster tolerance/recovery they offer. After all, they use the same CPUs and memory as the low end servers, just everything else is different (OLRAD, hot swap/plug of almost anything except memory and CPU).

webdev511 - Thursday, August 12, 2010 - link

Well, if you're willing to spend some more money on Solid State (if you go with two twelve core cpus you'll save on licences) you could stuff four of the new Fusion IO 1.28 TB Duo Drives into the box and map them as System Drives and then use attached storage for big files.

SomeITguy - Wednesday, August 11, 2010 - link

No offense intended, and I know this will put you on the defensive, but it sounds to me like the "development environment" was ill conceived in the design phase. You obviously overbought on processor power. The first step in designing an environment is knowing what your apps need. You can't just buy servers, then whine about how poorly the performance matches the overall system capability...

Last job I had Citrix Xen on HP blades with 53xx and 54xx CPUs, running about 150 production VMs. On the order of >300 total, with R&D and QA. The company had no money, and because of that we only ran local storage for the OS and most functions. The shared data we did have were on Netapps, and that alone constantly spiked up to +25k IOPS. I can't remember where each blade sat on IOPS, but it was high. I was able to balance resources utilized most of the day to about the ~60% level, with spikes hitting the high 80's. No resources being overly wasted. To do this effectively takes time and patience. You need to economize. 12 VMs on a blade with 16GB of memory was not unheard of...

Then there is the whole ESX thing, eh, won't get into that. Again, you need to know what is going to run on the servers before you spend (waste) money.

In my experience, it's typical that managers just override the lowly sysadmin's advice, take a vendor's word over the sysadmin who manages the app, or a business unit buys you the equipment without consulting, then says "here, make it work".

Overall, I thought the article good. It is just a guide, not a bible.

davegraham - Tuesday, August 10, 2010 - link

So, i'm sitting here with a spanking new Dell R815 which is a quad socket G34 system and is shipping today w/ AMD Opteron 6176SE parts...so, this article is outdated even before it begins. (oh, did i mention it's only 2RU?)

I'm also very curious as to what the underlying storage is for all these tests as it definitely can have an impact on the serviceability of the testing.

I'm curious as to the details per VM as well...IOMMU choices, HT sharing, NUMA settings, as well as the version of ESX being used?

dave

JohanAnandtech - Wednesday, August 11, 2010 - link

"So, i'm sitting here with a spanking new Dell R815 which is a quad socket G34 system and is shipping today w/ AMD Opteron 6176SE parts...so, this article is outdated even before it begins. (oh, did i mention it's only 2RU?)"

Testing servers is not like testing videocards. I can not plug the R815 in a ready installed windows pc and push the button of "Servermark". It does not work that way as you indicate yourself. A complete storage system must be set up, and in many cases ESX fails to install the first time on a brand new server. We perform a whole battery of monitoring tests, for example, that confirm that the DQL (disk queue length) is low enough.

The storage system we use for the 4 tile test is an 8 disk SSD system for the OLTP tests (described in this article). The VMs themselves sit on a separate RAID controller connected to a Promise JBOD. The JBOD has 8 15000 rpm SAS disks. The only really disk intensive app is Swingbench in this test, and by making sure both data and logs get their separate SSD, we achieve DQLs under 0.1. There is a lot more to the Oracle config, but if you are interested, we can share the parameter file.

Anyway, the low DQL and the fact that we scale well from 2 to 4 tiles shows that we are not limited by the disks.

davegraham - Wednesday, August 11, 2010 - link

johan,

I work with VMware for a living doing platform testing for the product i support. ;) consequently, I'm very well aware of the requirements for testing VMware and the various and sundry components within the server. Hence, my slightly critical view of what you're doing here.

appreciate the response on the storage....again, all well and good with that explanation.

I'll put my quad socket 6176SE system against your 7500 system anyday and i'll enjoy lower rack footprint, lower power consumption, and a positively brilliant VMware experience. ;)

keep up the good work.

dave

blue_falcon - Wednesday, August 11, 2010 - link

If you want to do a similar 2U config, try the R810, only has 32 DIMM sockets but nearly identical to the R910.

mapesdhs - Tuesday, August 10, 2010 - link

Johan, how would this system compare to a low-end quad-socket Altix UV 10? (max RAM = 512GB).

Ian.

JohanAnandtech - Wednesday, August 11, 2010 - link

I never tested an SGI server, so I can not say for sure. But the hardware looks (and probably is) identical to what we have tested here.

Casper42 - Wednesday, August 11, 2010 - link

Due to the way Dell implemented the memory on their latest Quad socket machines, if you run 2 CPUs with the FlexMem bridge, you get full memory bandwidth but half of the memory sockets are further away from the CPU due to the extra trace length of going to the empty CPU socket and through the FlexMem bridge.

When you put in 4 CPUs you only get half the memory bandwidth of an Intel reference design. This is because the traces that would normally go to the empty CPU socket and through the FlexMem now go essentially nowhere because the CPU in that socket needs the access instead.

I would say try IBM or HP. Just beware that IBM does some weird stuff when it comes to their Max5 memory expansion module that can also cause additional memory latency for some of the DIMM sockets and not the others.