High-End x86: The Nehalem EX Xeon 7500 and Dell R810

by Johan De Gelas on April 12, 2010 6:00 PM EST. Posted in IT Computing, Intel, Nehalem EX

Understanding the Performance Numbers

A good analysis of the memory subsystem helps to understand the strengths and weaknesses of these server systems. We still use our older Stream binary, which was compiled by Alf Birger Rustad using v2.4 of Pathscale's C compiler. It is a multi-threaded, 64-bit Linux Stream binary. The following compiler switches were used:

-Ofast -lm -static -mp

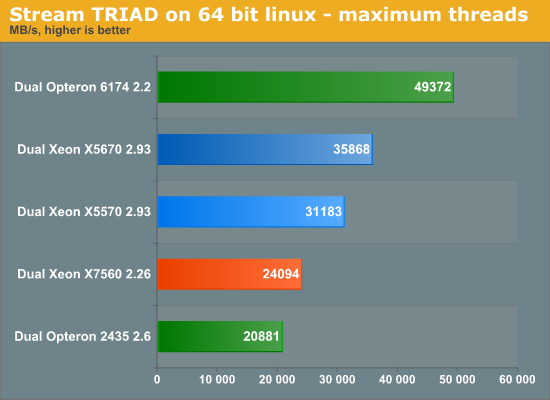

We ran the stream benchmark on SUSE SLES 11. The stream benchmark produces four numbers: copy, scale, add, triad. Triad is the most relevant in our opinion; it is a mix of the other three.
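To make the four numbers concrete, here is a minimal sketch of the four Stream kernels and of how a bandwidth figure follows from a run time. It is not the Pathscale-compiled binary used above: the function names are ours, the arrays are plain Python lists, and the timing input is hypothetical. It assumes 8-byte doubles and the usual Stream accounting, where copy and scale touch two arrays per iteration and add and triad touch three.

```python
# Illustrative sketch of the four Stream kernels (names are hypothetical).
# Pure Python is far too slow to measure real memory bandwidth; this only
# shows what each kernel computes and how bytes are counted.

BYTES_PER_DOUBLE = 8

def stream_copy(a):
    return [x for x in a]                      # c[i] = a[i]

def stream_scale(c, q):
    return [q * x for x in c]                  # b[i] = q * c[i]

def stream_add(a, b):
    return [x + y for x, y in zip(a, b)]       # c[i] = a[i] + b[i]

def stream_triad(b, c, q):
    return [x + q * y for x, y in zip(b, c)]   # a[i] = b[i] + q * c[i]

# Arrays read or written per loop iteration, per kernel.
ARRAYS_TOUCHED = {"copy": 2, "scale": 2, "add": 3, "triad": 3}

def bandwidth_gbs(kernel, n, seconds):
    """Bandwidth in GB/s for n elements processed in `seconds`."""
    bytes_moved = ARRAYS_TOUCHED[kernel] * BYTES_PER_DOUBLE * n
    return bytes_moved / seconds / 1e9

if __name__ == "__main__":
    print(stream_triad([1.0, 2.0], [3.0, 4.0], 2.0))
    # Hypothetical timing: one billion elements in one second.
    print(bandwidth_gbs("triad", 10**9, 1.0), "GB/s")
```

Triad combines a multiply, an add, and three memory streams in one loop, which is why it is commonly treated as the headline number.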

The Xeon X7560 fails to impress. Intel's engineers expected 36GB/s with the best optimizations. Their own gcc-compiled binary (-O3 -fopenmp -static) achieves 25 to 29GB/s, in the same range as our Pathscale-compiled binary.

It is interesting to note that single-threaded bandwidth is mediocre at best: we got only 5GB/s with DDR3-1066. Even the six-core Opteron with DDR2-800 can reach over 8GB/s, while the newest Opteron DDR3 memory controller achieves 9.5GB/s with DDR3-1333, almost twice as much as the Xeon 7500 series. The best single-threaded performance comes out of the Xeon 5600 memory controller: 12GB/s with DDR3-1333. Intel clearly had to sacrifice some bandwidth as well to achieve the enormous memory capacity (64 slots and 1TB without "extensions"). Let's look at latency.
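Before turning to latency, the single-threaded figures above can be put in perspective by comparing each one with the nominal peak of a single DRAM channel. The sketch below assumes a standard 64-bit (8-byte) DDR2/DDR3 channel and uses the nominal transfer rate encoded in the module name. It deliberately says nothing about how many channels each platform has, and the measured values are the rounded numbers quoted in the text. The helper names are ours.

```python
# Nominal per-channel peak vs. the single-threaded results quoted above.
# Assumption: one 64-bit DDR channel moves 8 bytes per transfer.

BUS_BYTES = 8

def channel_peak_gbs(mega_transfers_per_s, bus_bytes=BUS_BYTES):
    """Nominal peak of one DRAM channel in GB/s."""
    return mega_transfers_per_s * bus_bytes / 1000.0

# (measured single-threaded GB/s from the text, nominal MT/s of the memory)
SINGLE_THREAD = {
    "Xeon 7500 series (DDR3-1066)": (5.0, 1066),
    "Six-core Opteron (DDR2-800)": (8.0, 800),     # "over 8GB/s" in the text
    "Newest Opteron (DDR3-1333)": (9.5, 1333),
    "Xeon 5600 (DDR3-1333)": (12.0, 1333),
}

def fraction_of_one_channel(measured_gbs, mega_transfers_per_s):
    """Measured bandwidth as a fraction of one channel's nominal peak."""
    return measured_gbs / channel_peak_gbs(mega_transfers_per_s)

if __name__ == "__main__":
    for name, (gbs, mts) in SINGLE_THREAD.items():
        ratio = fraction_of_one_channel(gbs, mts)
        print(f"{name}: {gbs} GB/s, {ratio:.2f}x one channel's nominal peak")
```

A ratio above 1.0 simply means a single thread is drawing on more than one channel. A ratio well below 1.0, as for the Xeon 7500 result, suggests a single thread cannot keep even one channel busy.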

| CPU | Speed (GHz) | L1 (clocks) | L2 (clocks) | L3 (clocks) | Memory (ns) |
|---|---|---|---|---|---|
| Xeon X5670 | 2.93 | 4 | 10 | 56 | 87 |
| Xeon X5570 | 2.80 | 4 | 9 | 47 | 81 |
| Opteron 6174 | 2.2 | 3 | 16 | 57 | 98 |
| Opteron 2435 | 2.6 | 3 | 16 | 56 | 113 |
| Xeon X7560 | 2.26 | 4 | 9 | 63 | 160 |
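Because the cache latencies are given in clocks and the CPUs run at different speeds, it can help to convert them to nanoseconds before comparing. The sketch below does this for the L3 column. It assumes the clock counts refer to core clocks at the nominal speed listed in the table, which ignores Turbo and any separate uncore clock, so treat the results as rough. The function names are ours.

```python
# Convert the L3 latencies from the table above into nanoseconds.
# Assumption: "clocks" means core clocks at the listed nominal frequency.

# CPU: (speed in GHz, L3 latency in clocks, memory latency in ns)
LATENCY_TABLE = {
    "Xeon X5670": (2.93, 56, 87),
    "Xeon X5570": (2.80, 47, 81),
    "Opteron 6174": (2.2, 57, 98),
    "Opteron 2435": (2.6, 56, 113),
    "Xeon X7560": (2.26, 63, 160),
}

def clocks_to_ns(clocks, ghz):
    """One clock at f GHz lasts 1/f ns, so latency = clocks / f."""
    return clocks / ghz

def ns_to_clocks(ns, ghz):
    """Inverse conversion: how many core clocks fit in a latency in ns."""
    return ns * ghz

if __name__ == "__main__":
    for cpu, (ghz, l3_clocks, mem_ns) in LATENCY_TABLE.items():
        l3_ns = clocks_to_ns(l3_clocks, ghz)
        mem_clocks = ns_to_clocks(mem_ns, ghz)
        print(f"{cpu}: L3 {l3_ns:.1f} ns, memory {mem_clocks:.0f} clocks")
```

Under these assumptions, the X7560's 63 clocks work out to roughly 27.9 ns, against about 19.1 ns for the X5670. The clock count therefore flatters the lower-clocked part somewhat, although the X7560's L3 is also much larger.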

The L3 cache latency of our Xeon X7560 is very impressive, considering that we are talking about a 24MB L3. Memory latency clearly suffers from the extra hops between the CPU and the DRAM: from serial link to memory buffer to parallel DRAM interface. We also did a cache bandwidth test with SiSoft Sandra 2010.

| CPU | Speed (GHz) | L1 CPU (GB/s) | L2 CPU (GB/s) | L3 (GB/s) |
|---|---|---|---|---|
| Xeon X5670 | 2.93 | 717 | 539 | 150 |
| Xeon X5570 | 2.80 | 437 | 312 | 114 |
| Opteron 6174 | 2.2 | 768 | 378 | 194 |
| Opteron 2435 | 2.6 | 472 | 281 | 228 |
| Xeon X7560 | 2.26 | 667 | 502 | 275 |

The most interesting number here is the L3 cache bandwidth, since all cores must access the L3 and it matters for almost all applications. The throughput of the L1 and L2 caches is mostly important for the few embarrassingly parallel applications. And here we see that the extra engineering on the Nehalem EX pays off: it clearly has the fastest L3 cache. The Opterons are the second fastest, but the exclusive nature of their L3 caches may mean they need quite a bit more bandwidth. In a nutshell: the Xeon 7500 comes with probably the best L3 cache on the market, but the memory subsystem is quite a bit slower than on other server CPU systems.

23 Comments


dastruch - Monday, April 12, 2010 - link

Thanks AnandTech! I've been waiting for a year for this very moment, and if only those 25nm Lyndonville SSDs were here too.. :)

thunng8 - Monday, April 12, 2010 - link

For reference, IBM just released their octal-chip Power7 3.8GHz result for the SAP 2-tier benchmark. The result is 202180 SAPS, approx. 2.32x faster than the octal-chip Nehalem-EX.

Jammrock - Monday, April 12, 2010 - link

The article cover on the front page mentions 1 TB maximum on the R810 and then 512 GB on page one. The R910 is the 1TB version, the R810 is "only" 512GB. You can also do a single processor in the R810. Though why you would drop the cash on an R810 and a single proc I don't know.

vol7ron - Tuesday, April 13, 2010 - link

I wish I could afford something like this!

I'm also curious how good it would be at gaming :) I know in many cases these server setups under-perform high end gaming machines, but I'd settle :) Still, something like this would be nice for my side business.

whatever1951 - Tuesday, April 13, 2010 - link

None of the Nehalem-EX numbers are accurate, because Nehalem-EX kernel optimization isn't in Windows 2008 Enterprise. There are only 3 commercial OSes right now that have Nehalem-EX optimization: Windows Server R2 with SQL Server 2008 R2, RHEL 5.5, SLES 11, and soon to be released CentOS 5.5 based on RHEL 5.5. Windows 2008 R1 has trouble scaling to 64 threads, and SQL Server 2008 R1 absolutely hates Nehalem-EX. You are cutting Nehalem-EX benchmarks short by 20% or so by using Windows 2008 R1.

The problem isn't as severe for Magny-Cours, because the OS sees 4 or 8 sockets of 6 cores each via the enumerator, thus treats it with the same optimization as an 8 socket 8400 series CPU.

So, please rerun all the benchmarks.

JohanAnandtech - Tuesday, April 13, 2010 - link

It is a small mistake in our table. We have been using R2 for months now. We do use Windows 2008 R2 Enterprise.

whatever1951 - Tuesday, April 13, 2010 - link

Ok. Change the table to reflect Windows Server 2008 R2 and SQL Server 2008 R2 information please.

Any explanation for such poor memory bandwidth? Damn, those SMBs must really slow things down or there must be a software error.

whatever1951 - Tuesday, April 13, 2010 - link

It is hard to imagine 4 channels of DDR3-1066 being 1/3 slower than even the Westmere-EPs. Can you remove half of the memory DIMMs to make sure that it isn't Dell's flex memory technology that's slowing things down intentionally to push sales toward the R910?

whatever1951 - Tuesday, April 13, 2010 - link

As far as I know, when you only populate two sockets on the R810, the Dell R810 flex memory technology routes the 16 DIMMs that used to be connected to the 2 empty sockets over to the 2 center CPUs. There could be significant memory bandwidth penalties induced by that.

whatever1951 - Tuesday, April 13, 2010 - link

"This should add a little bit of latency, but more importantly it means that in a four-CPU configuration, the R810 uses only one memory controller per CPU. The same is true for the M910, the blade server version. The result is that the quad-CPU configuration has only half the bandwidth of a server like the Dell R910 which gives each CPU two memory controllers."

Sorry, should have read a little slower. Damn, Dell cut half the memory channels from the R810!!!! That's a retarded design, no wonder the memory bandwidth is so low!!!!!