AMD's Radeon HD 5870 Eyefinity 6 Edition Reviewed

by Anand Lal Shimpi on March 31, 2010 12:01 AM EST, posted in GPUs

The Crosshair Problem

With my wall of panels constructed, it was time to plug them all in and begin the easy part. During POST and while Windows boots, only two of the panels actually display anything. Installing the driver and going through the Eyefinity setup process is the same as with fewer displays, although driver interactions involving creating and manipulating the six displays are sluggish. You just get the feeling that there's a lot going on under the hood. I've included a video below that shows a complete driver setup of a six-display Eyefinity system so you can see for yourself; pay attention to how long it takes for each display to activate at some points during the install.

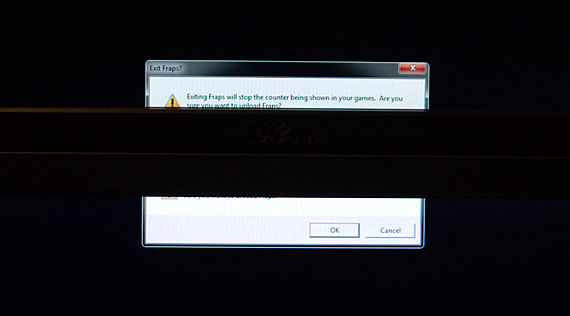

With the software configured, I had a single large display that appeared to Windows as a 5760 x 2160 monitor. This is where I ran into my first problem with an Eyefinity 6 setup. Dialog boxes normally appear in the middle of your display, which in my case was where two monitor bezels meet. Here's an example of a dialog box appearing on an Eyefinity 6 setup without bezel correction:

Note how the dialog box is actually stretched across both panels. It's even worse in games, but luckily as of Catalyst 10.3 AMD has enabled bezel correction in the driver to avoid the stretching problem. While bezel correction makes games look more correct, it creates a new problem for anything that appears behind a bezel:

Half the time I didn't even notice when a little window had popped up asking me to do something because it was hidden by my bezels. This happens a lot during software installs where the installer is asking you a question while you're off paying attention to something else on one of your 6 displays.
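To see why a window centered on the desktop ends up hidden, here's a rough sketch of the geometry. It assumes bezel correction works by inserting a band of virtual pixels at each seam, sized to the physical bezel gap. The function names, the 20 mm combined bezel, and the 0.265 mm pixel pitch are hypothetical illustrations, not AMD's actual algorithm or measurements from this review.

```python
def gap_pixels(bezel_mm: float, pixel_pitch_mm: float) -> int:
    """Convert the combined width of two adjacent bezels into whole pixels."""
    return round(bezel_mm / pixel_pitch_mm)


def virtual_size(panel_px: int, count: int, gap_px: int) -> int:
    """Length of one desktop axis when every seam adds gap_px hidden pixels."""
    return panel_px * count + gap_px * (count - 1)


def hidden_bands(panel_px: int, count: int, gap_px: int) -> list[range]:
    """Pixel ranges along one axis that are rendered but sit behind bezels."""
    bands = []
    for seam in range(1, count):
        # Every earlier panel contributes panel_px pixels, every earlier seam gap_px.
        start = seam * panel_px + (seam - 1) * gap_px
        bands.append(range(start, start + gap_px))
    return bands


# Hypothetical numbers: 20 mm of combined bezel on a 0.265 mm pitch panel.
gap = gap_pixels(20.0, 0.265)
height = virtual_size(1080, 2, gap)   # two rows of 1920 x 1080 panels
center_y = height // 2                # where a centered dialog lands vertically
rows_hidden = hidden_bands(1080, 2, gap)
print(f"gap={gap}px height={height}px center_y={center_y}")
print("center hidden:", any(center_y in band for band in rows_hidden))
```

Under these assumed numbers the vertical center of the desktop falls inside the 75-pixel band between the top and bottom rows, which is exactly where centered dialog boxes end up.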

In my previous coverage of Eyefinity I mentioned that the thickness of the bezels wasn't an issue for gaming. In a three-display setup I still stand by that. However, with six monitors, particularly because of this occluded center point problem, bezel thickness is a major issue.

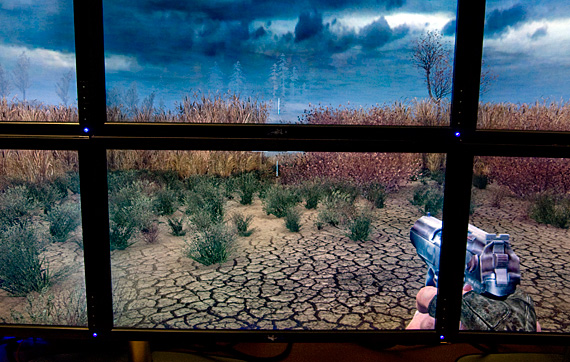

It's an even bigger issue in certain games, particularly first-person shooters, because there's usually a crosshair in the middle of your screen. Take a look at what aiming in Battlefield: Bad Company 2 looks like with an Eyefinity 6 setup:

Somewhere behind that bezel is an enemy on a vehicle. You simply can't play an FPS seriously on an E6 setup; you have to guess where you're aiming if you're shooting at anything directly ahead of you. Here's another example in S.T.A.L.K.E.R.: Call of Pripyat:

Eyefinity 6 was just not made for FPSes. The ideal setup for an FPS would actually be a 5x1 portrait mode, which is currently not supported in AMD's drivers. AMD is well aware of the limitation and is working on enabling 5x1 at some point in the future.
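The reason a 3x2 grid is so hostile to crosshairs, while 3x1 and 5x1 aren't, comes down to parity: the exact center of a grid of identical panels lands inside a panel only when both the column count and the row count are odd. A minimal sketch, assuming identical panels and ignoring bezel width; `panel_at_center` is a hypothetical helper name.

```python
from typing import Optional, Tuple


def panel_at_center(cols: int, rows: int) -> Optional[Tuple[int, int]]:
    """Return the (column, row) of the panel holding the screen center,
    or None when the center falls on a seam between panels."""
    if cols % 2 == 0 or rows % 2 == 0:
        # An even count along either axis puts the midpoint on a bezel.
        return None
    return cols // 2, rows // 2


for cols, rows in [(3, 1), (3, 2), (5, 1)]:
    print(f"{cols}x{rows}:", panel_at_center(cols, rows))
```

Landscape 3x1 and portrait 5x1 both put the crosshair in the middle of a panel, while 3x2 puts it on the horizontal seam between the two rows.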

Racing games, flight sims, and third-person titles are much better suited to this six-display configuration. I recorded a video of our DiRT 2 benchmark as an example:

The experience in these sorts of games is much more immersive, although it is frustrating to only really be able to use a subset of titles on such an expensive display setup. You may find that it's more affordable/immersive/useful to just take the resolution hit and get a good 720p or 1080p projector to hook up to your gaming PC instead.

The aspect ratio issues present with a 3x1 landscape setup aren't as prevalent in a 3x2 configuration. At 5760 x 2160 you're much closer to 16:9 than you would be at 5760 x 1080. Far more games stretch well to this resolution, although most games out there still lack good compatibility with it.
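The aspect ratio arithmetic is easy to check, assuming 1920 x 1080 panels (which is what the 5760 x 2160 desktop implies) and ignoring bezel compensation. `grid_aspect` is just an illustrative helper.

```python
from fractions import Fraction

SIXTEEN_NINE = Fraction(16, 9)


def grid_aspect(panel_w: int, panel_h: int, cols: int, rows: int) -> Fraction:
    """Aspect ratio of the combined desktop, with no bezel compensation."""
    return Fraction(panel_w * cols, panel_h * rows)


for cols, rows in [(3, 1), (3, 2)]:
    ratio = grid_aspect(1920, 1080, cols, rows)
    print(f"{cols}x{rows}: {1920 * cols} x {1080 * rows} = {ratio} "
          f"({float(ratio):.2f}:1), {float(ratio / SIXTEEN_NINE):.1f}x as wide as 16:9")
```

A 3x1 desktop works out to 16:3, three times as wide as a 16:9 image, while 3x2 is 8:3, only 1.5 times as wide, which is consistent with more games stretching acceptably to 5760 x 2160.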

AMD claims it is working with developers on enabling proper FOV and aspect ratio adjustments for Eyefinity setups, but it's not always easy. AMD is also working on ways to deal with the crosshair problem, for example by making games aware of bezel correction and shifting the position of the crosshair for Eyefinity users. The AMD Display Library (ADL) SDK update includes resources for developers targeting Eyefinity platforms.
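As a rough idea of what shifting the crosshair might involve, here's a sketch that moves a point out of a hidden bezel band to the nearest visible pixel. This is purely illustrative: `nearest_visible` is a hypothetical function, the 75-pixel band between two rows of 1080-pixel panels is an assumed figure, and it does not use or represent the ADL SDK. A real game would presumably also have to offset its aim point or projection so shots still go where the crosshair is drawn.

```python
def nearest_visible(coord: int, bands: list[range]) -> int:
    """Move coord out of any hidden bezel band to the closest visible pixel.
    Ties go to the pixel just before the bezel."""
    for band in bands:
        if coord in band:
            before = band.start - 1  # last visible pixel before the bezel
            after = band.stop        # first visible pixel after the bezel
            return before if coord - before <= after - coord else after
    return coord  # already visible, leave it alone


# Hypothetical layout: two rows of 1080-pixel panels with a 75-pixel bezel band.
hidden_rows = [range(1080, 1155)]
center_y = (1080 * 2 + 75) // 2
print(center_y, "->", nearest_visible(center_y, hidden_rows))
```

In this assumed layout the crosshair moves from row 1117 up to row 1079, the bottom edge of the top row of panels; the trade-off is that it no longer sits at the true center of the view.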

Given the secrecy surrounding Eyefinity's creation it's not surprising that most developers haven't had the opportunity to include support for it in current titles. Going forward we should see more native support however. Some developers are viewing technologies like Eyefinity as a way of differentiating and promoting PC versions of console titles to help boost sales.

78 Comments

frenchfrog - Wednesday, March 31, 2010

It would be so nice:

- 3 monitors for left-center-right views
- 1 monitor for rear view
- 2 monitors for gauges/GPS/map/flight controls

vol7ron - Wednesday, March 31, 2010

I'm not sure why a "wall" was created. Your problem with FOV is that you have too much of a 2D setup, rather than an easier-to-view 3D one.

Suggestion: 3 stands.

Center the middle pair to your seat.

Adjust the right and left pairs so they're at a 15-25 degree slant, as if you were forming a hexadecagon (16-sided polygon @ 22.5 degrees).

vol7ron

cubeli - Wednesday, March 31, 2010

I cannot print your reviews anymore. Any help would be greatly appreciated!

WarlordSmoke - Wednesday, March 31, 2010

I still don't understand the point of this card by itself, as a single card (no CF).

It's too expensive and too gaming-oriented to be used in the workplace where, as someone else already mentioned, there have been cheaper and more effective solutions for multi-display setups for years.

It's too weak to drive the 6 displays it's designed for when gaming. Crysis (I know it's not a great example of an optimized engine, but give me a break here), a 3-year-old game, runs at under 25 fps, which isn't playable, and I can't imagine the next generation of games, which is just around the corner, being more forgiving.

My point is, why build a card to drive 6 displays when you could have 2 cards that can drive 3 displays each and be more effective for gaming. I know this isn't currently possible, but that's my point, it should be, it's the next logical step.

Instead of having 2 cards in crossfire, where only one card has display output and the other just tags along as extra horsepower, why not use the cards in parallel: split the scene in two and use two framebuffers (one card with the upper 3 screens and the other card with the lower 3 screens), and practically make crossfire redundant (or just use it for synchronizing the rendering)?

This should be more efficient on so many levels. First, the obvious, half the screens => half the area to render => better performance. Second, if the scene is split in two each card could load different textures so less memory should be wasted than in crossfire mode where all cards need to load the same textures.

I'm probably not taking the synchronization issues that could appear between them seriously enough, but they should be less obvious when they are between distinct rows of displays, especially if they have bezels.

Anyway, this idea of 2 cards with 3 screens each would have been beneficial to both ATI (sales of more cards) and gamers: buy a card and three screens now, and maybe later, if you can afford it, buy another card and another three screens. Not to mention that ATI has several distinct models of cards that support 3 displays, so they could have made 6-display setups possible even for lower budgets.

To keep a long story short(er), I believe ATI should have worked to make this possible in their driver and just scrap this niche 6 display card idea from the start.

Bigginz - Wednesday, March 31, 2010

I have an idea for the monitor manufacturers (Samsung). Just bolt a magnifying glass to the front of the monitor that is the same width and height (bezel included). I vaguely remember some products similar to this for the Nintendo Game Boy & DS.

Dell came out with their Crystal LCD monitor at CES 2008. Just replace the tempered glass with a magnifying glass and your bezel problem is fixed.

http://hothardware.com/News/Dell_Crystal_LCD_Monit...

Calin - Thursday, April 1, 2010

Magnifying glass for such a large surface would be thick and heavy (and probably prone to cracking), and "thin" variations have image artefacts (I've seen a magnifying "glass" usable as a bookmark, and the image was good, but it definitely had issues).

imaheadcase - Wednesday, March 31, 2010

With as much R&D as they invested in this, it seems better to use it toward making their own monitors that don't have bezels. The extra black line is a major downside to this card.

ATI monitors + video setup would be ideal. After all, when you are going to drop $1500 + video card setup, what is a little more in price for streamlined monitors?

yacoub - Wednesday, March 31, 2010

"the combined thickness of two bezels was annoying when actually using the system"

Absolutely!

CarrellK - Thursday, April 1, 2010

There are a fair number of comments to the effect of "Why did ATI/AMD build the Six? They could have spent their money better elsewhere..." To those who made those posts, I respectfully suggest that your thoughts are too near-term, that you look a bit further into the future.

The answers are:

(1) To showcase the technology. We wanted to make the point that the world is changing. Three displays wasn't enough to make that point, four was obvious but still not enough. Six was non-obvious and definitely made the point that the world is changing.

(2) To stimulate thinking about the future of gaming, all applications, how interfaces *will* change, how operating systems *will* change, and how computing itself is about to change and change dramatically. Think Holodeck, folks. Seriously.

(3) We wanted a learning vehicle for ourselves as well as everyone else.

(4) And probably the biggest reason of all: BECAUSE WE THOUGHT IT WOULD BE FUN. Not just for ourselves, but for those souls who want to play around and experiment at the edges of the possible. You never know what you don't know, and finding that out is a lot of fun.

Almost every day I tell myself and anyone who'll listen: If you didn't have fun at work today, maybe it is time to do something else. Go have some fun, folks.

Anand Lal Shimpi - Thursday, April 1, 2010

Thanks for posting, Carrell :) I agree with the having fun part; if that's a motivation then by all means go for it!

Take care,

Anand