Lab Update: SSDs, Nehalem Mac Pro, Nehalem EX, Bench and More

by Anand Lal Shimpi on May 27, 2009 12:00 AM EST

I had an epiphany the other day. Longtime AnandTech readers will know that I used to do a far better job of keeping you guys apprised of what I was working on. Somewhere along the way that got lost so today I’m going to try something...old, I guess. Here’s an update of what’s been going on.

The WePC Update

I've been working on a side project with ASUS called WePC.com. The idea is pretty cool: ASUS is tapping the community for ideas on what its users would like to see in future notebook designs. I wrote about this at the end of last year but I've done a lot of work on it since then so I thought it'd be worthy of an update.

Last week I wrote about how simple it was to build an HTPC with a nice interface thanks to maturing integrated graphics platforms and good media center plugins. I also talked about the future arrival of Westmere and when the best time would be to buy your next laptop. Around the Athlon X2 7850 launch I talked about replacing aging PCs on a budget and seeing some pretty good performance results.

The netbook market is a very fast growing one so I broke out the crystal ball and tried to figure out where it was all going. I touched on NVIDIA v. Intel, the purported death of the desktop, SSD capacities and styling from a company other than Apple. It turns out I've written quite a bit over there, so check it out and join the discussion.

I’m working on SSDs Again

A couple of major things have happened since my last in-depth look at SSDs. For starters, some drives now support the almighty Trim command. Both OCZ’s Vertex (and other Indilinx based drives) and the new Samsung drives should support Trim. Obviously you need the right firmware and you need an OS (or a utility) that supports Trim to take advantage of it, but it’s there and admittedly it’s there much earlier than expected.

That’s a good thing for drives like the new Samsung ones (Corsair’s new SSD is based on this drive) because their worst case scenario used performance is just bad. I’ve briefly touched on this in previous articles, but Samsung’s controllers don’t appear to do a good job of managing the used scenario I’ve been testing with. Thankfully, the latest controller’s support for Trim should help alleviate this issue. I’m still working on figuring out how to identify if a drive properly supports Trim or not.
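One partial starting point, offered as a sketch rather than a definitive test: the ATA command set defines a capability bit for Trim (bit 0 of word 169 in the 512-byte IDENTIFY DEVICE response). The Python below only decodes that bit from a buffer that has already been read from the drive; how the buffer is obtained is platform specific and not shown, and `make_fake_identify` is a hypothetical helper that builds synthetic data for the example. Note that this shows what a drive advertises, not whether its firmware handles Trim properly, which is the harder question raised above.

```python
import struct

# ATA IDENTIFY DEVICE layout: 256 little-endian 16-bit words (512 bytes).
TRIM_WORD = 169    # DATA SET MANAGEMENT capability word
TRIM_BIT = 0x0001  # bit 0 set means the Trim bit is advertised


def advertises_trim(identify_data: bytes) -> bool:
    """Return True if the IDENTIFY DEVICE data advertises Trim support."""
    if len(identify_data) != 512:
        raise ValueError("IDENTIFY DEVICE data must be exactly 512 bytes")
    (word,) = struct.unpack_from("<H", identify_data, TRIM_WORD * 2)
    return bool(word & TRIM_BIT)


def make_fake_identify(trim: bool) -> bytes:
    """Hypothetical helper: build a synthetic, otherwise empty IDENTIFY buffer."""
    buf = bytearray(512)
    if trim:
        struct.pack_into("<H", buf, TRIM_WORD * 2, TRIM_BIT)
    return bytes(buf)


if __name__ == "__main__":
    for flag in (True, False):
        print(flag, advertises_trim(make_fake_identify(flag)))
```

Confirming that Trim actually restores used-state performance would still require measuring write speed before and after the command is issued.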

I’ve also received the new OCZ Vertex EX (SLC) drives as well as the 30GB and 60GB variants of the MLC Vertex drives. The former is too expensive for most consumers but I’ll be putting it up against the X25-M to see how it fares in a high end desktop, all while trying to get enough together for Johan to do a proper enterprise level test. The 30/60GB Vertex drives are spec’d for lower write speeds so I’m going to be testing those (finally) to see what the real world impact is.

I can’t help but mention Windows 7 at this point, because I *really* want to switch to it for all of my SSD testing. Windows 7 is far more reliable from a performance standpoint. Although our recent article showed that it’s not really any faster than Vista, my SSD testing has shown that it’s at least more consistent with its performance results. Part of this I attribute to Windows 7 doing more intelligent grouping of its background tasks than Vista ever did, although it is surprising to me that we’re not seeing noticeably better battery life as a result.

How much would you guys hate me if I switched to Windows 7 sooner rather than later?

Nehalem Mac Pro: Upgrading CPUs

I actually finished testing the new Nehalem based Mac Pro several weeks ago, but in keeping with tradition I had to see if it was possible to upgrade the CPUs on the new Mac Pro.

Indeed it is, but it’s a bit more complicated than you’d think.

Apple makes two models of the Mac Pro, one with two sockets and one with only a single LGA-1366 socket. The two socket model, often referred to as the 8-core Mac Pro, actually uses Nehalem CPUs without any heatspreaders. I suspect this is to enable them to run at their more aggressive turbo modes more frequently (high end Xeons can turbo up to higher frequencies than regular Xeons or the Core i7). The single socket model uses standard Xeons with heatspreaders, so there’s nothing special there.

I actually managed to kill a processor card doing the CPU swap but I’ve taken the hit so you all don’t have to :) It’ll all be included in the article; I’m simply waiting on a replacement heatsink since an integrated thermal sensor got damaged during the initial swap. For now just know that it is possible to upgrade the CPUs in these things and it’s not too difficult; you just need to know what to expect and to be patient.

If you’re on the fence about buying today, opt for the slower CPUs and upgrade later if you’d like. And if you already have a good Mac Pro and aren’t terribly CPU bound, save the money and buy SSDs instead - in many cases the performance improvement is far greater. Once again, I’ll address all of this in the article itself.

Nehalem-EX: 2.3 billion transistors, eight cores, one die

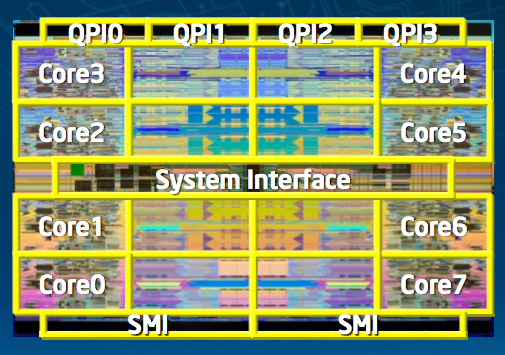

I spent about 45 minutes on a conference call with Intel yesterday talking about the new Nehalem-EX processor for multi-socket servers. Here’s a crude picture of the die:

That's 2.3 billion transistors thanks to 8 Nehalem cores and a 24MB L3 cache

Nehalem-EX, which I’ve spoken about before, is an 8-core version of Nehalem. It is not socket-compatible with existing Nehalem platforms as it has four QPI links (up from two in the LGA-1366 Xeon versions). The four QPI links enable it to be used in up to 8-socket systems for massive 64-core / 128-thread servers.

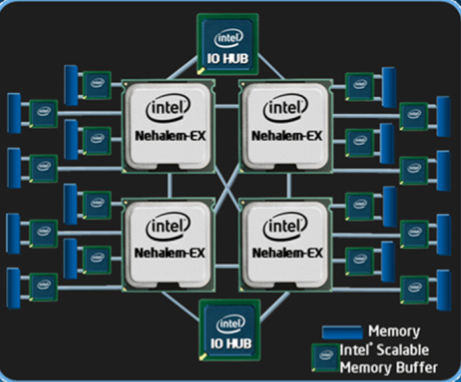

Four socket Nehalem-EX platform

Remember that the Nehalem architecture was optimized for four cores, but it was designed to scale up to 8 cores and down to 2. As such, the Nehalem-EX is actually a monolithic 8-core processor with a gigantic 24MB L3 cache shared between all 8 cores. Also remember that Intel wanted a minimum of 2MB of L3 cache per core, so Nehalem-EX actually goes above and beyond that with 3MB per core if all cores are sharing the cache equally.

Hyper Threading is supported, so that’s 16 threads per 8-core chip. I’d also expect some pretty interesting Turbo modes on an 8-core Nehalem.

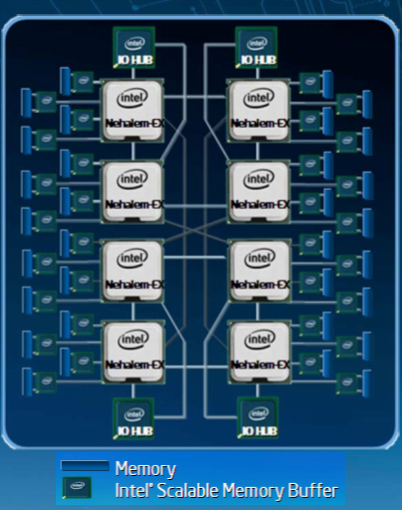

Eight socket Nehalem-EX platform, note that the CPUs connect through SMBs before getting to main memory. Say goodbye to FB-DIMMs, but hello to on-motherboard buffers.

The memory controllers are a bit different with the Nehalem-EX. Each socket supports up to 16 DIMMs, but instead of supporting FB-DIMMs Intel moved the memory buffer onto the motherboard. The Nehalem-EX memory controllers communicate directly with memory buffers (Intel calls them Scalable Memory Buffers), which in turn communicate directly with standard DDR3 memory. This is a much preferred approach as it keeps expensive, slow and power hungry FB-DIMMs out of the majority of the server market, where they don’t make sense, and enables large memory installations on these gigantic servers with 64 DIMM slots.
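The platform totals quoted above follow from simple multiplication, and a small Python sketch makes the arithmetic explicit. The per-socket figures (8 cores, 2 threads per core, 24MB of L3, 16 DIMMs) come from the text; the class name `NehalemEXSystem` and its fields are made up for this illustration and are not any Intel API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NehalemEXSystem:
    """Hypothetical model of a multi-socket Nehalem-EX platform."""
    sockets: int
    cores_per_socket: int = 8    # monolithic 8-core die
    threads_per_core: int = 2    # Hyper Threading
    l3_mb_per_socket: int = 24   # shared L3 per die
    dimms_per_socket: int = 16   # DDR3 behind the on-board memory buffers

    @property
    def cores(self) -> int:
        return self.sockets * self.cores_per_socket

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core

    @property
    def l3_mb_per_core(self) -> float:
        # Assumes all cores share the cache equally.
        return self.l3_mb_per_socket / self.cores_per_socket

    @property
    def dimm_slots(self) -> int:
        return self.sockets * self.dimms_per_socket


if __name__ == "__main__":
    for sockets in (4, 8):
        s = NehalemEXSystem(sockets)
        print(f"{sockets} sockets: {s.cores} cores, {s.threads} threads, "
              f"{s.l3_mb_per_core}MB L3 per core, {s.dimm_slots} DIMM slots")
```

With four sockets this gives the 64 DIMM slots mentioned above, and with eight sockets it gives the 64-core / 128-thread configuration.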

Intel expects to be shipping Nehalem-EX by the end of this year, with the first systems shipping at the beginning of 2010.

AnandTech Bench: Thanks for the Feedback

I made a post last week about adding the Atom 230 and 330 to AnandTech Bench and shortly thereafter received a tremendous amount of very useful feedback.

I agree completely that we need to get rid of the “shorter bars mean better performance” metrics, as well as tidy up the interface a bit. I need to hammer out a list of specs but it does help to have your feedback and you can expect to see much of what you’ve asked for in future versions of the app.

I also asked what sorts of older CPUs you’d like to see included, and to my surprise there was a lot of demand for very old CPUs like the Pentium III or Athlon XP. I tallied up all of the responses and by far the single most requested CPU was the Athlon XP (granted if you added up all the different Pentium 4 variants that would easily take the cake).

As a result I’m going to be dusting off an old Athlon XP system, in addition to a single-core Hyper Threaded Pentium 4 as well as VIA’s Nano and will be running benchmarks on all three over the coming weeks.

Many of you also want a mobile CPU version of bench; rest assured, I do too. Let me see about getting these older desktop CPUs in first and then I’ll work on mobile.

That’s all for now. Hopefully I’ve provided a good taste of what’s to come in the not too distant future.

69 Comments


Live - Thursday, May 28, 2009 - link

I forgot to add that I really appreciate articles like this one and that an edit function is needed.

thebeastie - Wednesday, May 27, 2009 - link

Yeah looking forward to it, just like the latest Terminator movie :)

JonnyDough - Wednesday, May 27, 2009 - link

Or any Christian Bale movie...

Souka - Wednesday, May 27, 2009 - link

Check out "The Machinist" if ya haven't seen it...

FlameDeer - Wednesday, May 27, 2009 - link

After reading this update, I'm really amazed at the wide scope of coverage that you are currently working hard on. Much anticipating what will be coming next from AnandTech.

Thank you Anand & keep up the good work. :)

iwodo - Wednesday, May 27, 2009 - link

On the Bench, the main reason is that people want to know how much performance we are getting for our money. I think the recent economy has changed some of our thinking on spending.

The past few years we have had the experience of upgrading parts with not much performance difference. It is interesting to see how previous CPUs fare against the current generation. And finally realize the days we had improvements like 386 to 486 and Pentium are over.

SSD - The mystery of multiple channel SSDs has yet to be solved. Is it possible to have more than 10 channels in a 2.5" SSD? If not, what is the maximum possible number of channels, i.e. what performance should we expect from future SSDs?

EX seems to me a destructive product. Previous benchmarks show how much performance / watt the Nehalem was able to deliver. Maybe WoW servers can finally fit more players inside a single Realm?

TA152H - Thursday, May 28, 2009 - link

If you think about it, it does make sense.

The biggest jump, of course, was the 286, arguably the best processor Intel ever made for its time. It was extremely fast, so much so that I couldn't believe it the first time I used it. Going from a PC to a PC/AT was a jump that was mind-boggling. On top of that, it added virtual memory, and the ability to multitask. It was a huge improvement.

The 386 sucked, although it did move to 32-bit and added the now worthless Virtual 86 mode. The performance was poor though, as it ran like a 286 at the same clock speed, clock normalized. But, it moved the platform forward.

The 486 was a nice processor, for sure. It didn't add anything new, but ran like a raped ape. I remember how we fought over the first 486 PC made, an upgraded version of the PS/2 Model 70-A21, which was called the B21. It cut compiles down from 30 minutes to about 18 compared to a 386/25, and it was only running at 25 MHz, and did not have any L2 cache, unlike the 386 which had a 64K external cache.

The Pentium wasn't so great. It ran at poor clock speeds at first, and when I used it, I found the performance quite poor. I used to say Intel's odd numbered processors were all bad, and their even ones good and this was further proof. I went from a 486 at 100 MHz, which were available, to a Pentium at 66 MHz, and was entirely unimpressed. It ran hotter than Hell too. But, in time, they got it right, with shrinks and the Pentium MMX (although MMX wasn't so important, there were other enhancements). The FDIV bug was kind of funny too, and how idiots overreacted to it. Still, I have one of the processors and I hope it will go up in value.

The Pentium Pro was another initial disappointment. It ran 16-bit code very poorly, although the fault lies more with Microsoft than Intel since they gave Intel guidance that 32-bit code would dominate by 1995 when the Pentium Pro was released. It was really expensive too, because of the on-processor L2 cache (which was really on the same packaging, but not part of the processor), which could not be tested before being joined with the processor, so if one were bad, they were both thrown out. The Pentium II addressed the segment register issue of the Pentium Pro, so improved 16-bit code somewhat, and obviously what we use today is still based on the Pentium Pro design. It was, of course, an even number (P6) design, so was good.

The Pentium 4, an odd number design, of course, sucked far worse than the Pentium and 386. It was much hotter, like the Pentium compared to the 486, but the performance sucked too, which made it a double threat.

Now we are back to the Pentium Pro design, so it's only reasonable we don't see dramatic changes since it's really not a new processor at all, but just improvements to an existing line, like the Pentium MMX, or Athlon XP (I'd use Prescott as an example too, but was it an improvement?????).

It makes sense though. Really, virtually nothing in PCs is new, and never has been. Virtually every concept has been taken from mainframes, or supercomputers, and microcomputers were just low cost implementations of those concepts. When you had a scarcity of transistors, there were many very good things you could not implement, but you wanted to. That low lying fruit is gone, and adding transistors gives greatly diminished returns. It's like the improvement with any technology. If you look at cars, jets, etc... once the technology matures, the improvements slow down and you get gradual improvements.

I'd like to see x86 die, but I guess it will not happen. Processors cost more, perform worse, and use more power because of this miserable instruction set. It's not a lot per processor, but when you multiply it by the millions upon millions of processors that are afflicted with the x86 instruction set, the costs are staggering. That's not even counting the migraines it has caused people who have to code in assembly for it. It's gruesome.

yacoub - Thursday, June 4, 2009 - link

Great post, but you forgot the first Celeron, the 300a that overclocked to 450MHz without breaking a sweat. In my book, the best bang-for-the-buck Intel CPU right up through the Core2Duo.

This was in the early days of overclocking, just over a decade ago, and it was a dream to be able to run a chip at 150% its rated speed with zero changes to things like voltages (heck most motherboards didn't even support voltage changes back then, iirc).

Ah... those were the days. :)

JonnyDough - Wednesday, May 27, 2009 - link

I agree with what you said about the economy changing things. It isn't just that though. Computer technology isn't gaining nearly as quickly, and the need for new systems - at least in the consumer space - is less pressing. The dollar is far more important now than it was. Purchases even at the business level are determined even more by cost than in the 90s, when changes in performance were a more heavily weighed factor in IT decisions.

This is why businesses that want to survive have to be broadly invested. For example, if a company that manufactures hard disk drives exclusively fails to get going on SSD early enough, it will be facing extinction.

If you're GM, it pays to invest in something other than just big gas guzzling automobiles. :-D Let's hope the Chevy Volt has some real value. I have my doubts that it will be as popular as they'd hoped, but I do think the car merits an award for ingenuity. They took conventional thinking and flipped it on its head, I just wish they'd done it 20 years ago. With their marketing dept they might've been able to put Ford out of business.

dragunover - Wednesday, May 27, 2009 - link

Removing the necessity for expensive server RAM? Someone's gonna hate that, and someone's gonna love that.

Personally I'm the latter of the two.