AMD's 12-core "Magny-Cours" Opteron 6174 vs. Intel's 6-core Xeon

by Johan De Gelas on March 29, 2010 12:00 AM EST. Posted in IT Computing

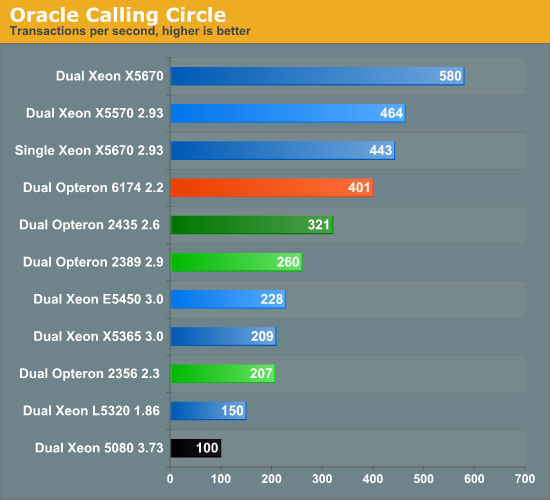

OLTP benchmark Oracle Charbench “Calling Circle”

| Oracle Charbench Calling Circle | |
| --- | --- |
| Operating System | Windows 2008 Enterprise Edition (64-bit) |
| Software | Oracle 10g Release 2 (10.2) for 64-bit Windows |
| Benchmark software | Swingbench/Charbench 2.2 |
| Database Size | 9 GB |

Calling Circle is an Oracle OLTP benchmark. We test with a database size of 9 GB. To reduce the pressure on our storage system, we increased the SGA size (Oracle buffer in RAM) to 10 GB and set the PGA size to 1.6 GB. A Calling Circle test consists of 83% selects, 7% inserts and 10% updates. Each test runs for 10 minutes and is repeated 6 times; the results of the first run are discarded. The reason is that the disk queue length (DQL) is sometimes close to 1 during the first run, while the subsequent runs have a DQL of 0.2 or lower. In this case it was rather easy to run the CPUs at 99% load. Since DQLs were very similar, we could keep our results from the Nehalem article.
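The run protocol above (six runs, first one discarded) can be sketched in a few lines of Python. This is only an illustration: the article does not say how the five remaining runs are combined, so the simple mean used here is an assumption, and the function name `summarize_runs` and the throughput figures are hypothetical, not measured results.

```python
from statistics import mean


def summarize_runs(throughput_per_run, warmup_runs=1):
    """Average throughput over the measured runs, skipping warm-up runs.

    Assumption: the remaining runs are combined with a plain mean;
    the article does not state the aggregation method.
    """
    if len(throughput_per_run) <= warmup_runs:
        raise ValueError("need at least one run after the warm-up")
    measured = throughput_per_run[warmup_runs:]
    return mean(measured)


# Hypothetical throughput figures for six 10-minute runs (not real data).
# The first run is lower because the disk queue is still busy.
example_runs = [900.0, 1000.0, 1010.0, 990.0, 1005.0, 995.0]
result = summarize_runs(example_runs)
print(result)
```

Dropping the first run keeps the storage-bound warm-up phase out of a result that is meant to reflect CPU performance.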

As we noted in our previous article, we work with a relatively small database. The result is that the benchmark doesn't scale well beyond 16 cores. The Opteron 6174 has a 10MB L3 cache for 12 cores, while the Opteron 2435 has 6MB of L3 for 6 cores. The amount of cache might explain why the Intel Xeons scale a lot better in this benchmark. For this kind of OLTP workload, the Opteron 6174 is not the right choice. To go back to the earlier car analogy: the muscle car is burning rubber and spinning its wheels, but is not making much progress.

58 Comments


Accord99 - Monday, March 29, 2010 - link

The X5670 is 6-core.

JackPack - Tuesday, March 30, 2010 - link

LOL. Based on price?

Sorry, but you do realize that the majority of these 6-core SKUs will be sold to customers where the CPU represents a small fraction of the system cost?

We're talking $40,000 to $60,000 for a chassis and four fully loaded blades. A couple hundred dollars difference for the processor means nothing. What's important is the performance and the RAS features.

JohanAnandtech - Tuesday, March 30, 2010 - link

Good post. Indeed, many enthusiasts don't fully understand how it works in the IT world. Some parts of the market (like HPC, rendering, webhosting) are very price sensitive and will notice a few hundred dollars more, as the price per server is low. A large part of the market won't care at all. If you are paying $30K for a software license, you are not going to notice a few hundred dollars on the CPUs.

Sahrin - Tuesday, March 30, 2010 - link

If that's true, then why did you benchmark the slower parts at all? If it only matters in HPC, then why test it in database? Why would the IDMs spend time and money binning CPUs?

Responding with "Product differentiation and IDM/OEM price spreads" simply means that it *does* matter from a price perspective.

rbbot - Saturday, July 10, 2010 - link

Because those of us with applications running on older machines need comparisons against older systems in order to determine whether it is worth migrating existing applications to a new platform. Personally, I'd like to see more comparisons to even older kit in the 2-3 year range that more people will be upgrading from.

Calin - Monday, March 29, 2010 - link

Some programs were licensed by physical processor chips, others were licensed by logical cores. Is this still correct, and if so, could you explain it based on the software used for benchmarking?

AmdInside - Monday, March 29, 2010 - link

Can we get any Photoshop benchmarks?

JohanAnandtech - Monday, March 29, 2010 - link

I have to check, but I doubt that anything besides a very exotic operation is going to scale beyond 4-8 cores. These CPUs are not made for Photoshop IMHO.

AssBall - Tuesday, March 30, 2010 - link

Not sure why you would be running Photoshop on a high end server.

Nockeln - Tuesday, March 30, 2010 - link

I would recommend trying to apply some advanced filters on a 200+ GB file.

Especially with the new higher megapixel cameras I could easily see how some professionals would fork up the cash if this reduces the time they have to spend in front of the screen waiting on things to process.