NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST - Posted in GPUs

Image Quality & AA

When it comes to image quality, the big news from NVIDIA for Fermi is what NVIDIA has done in terms of anti-aliasing of fake geometry such as billboards. For dealing with such fake geometry, Fermi has several new tricks.

The first is the ability to use the coverage samples from CSAA to take additional samples of billboards, which lets Alpha To Coverage fake anti-aliasing on the fake geometry. With the additional samples afforded by CSAA in this mode, Fermi can generate additional transparency levels that allow the billboards to blend in better, as properly anti-aliased geometry would.
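To make the link between sample count and transparency levels concrete, here is a minimal Python sketch of plain, undithered alpha to coverage. It is an illustration only: the function names are hypothetical, the rounding rule is an assumption, and real hardware (Fermi included) uses its own implementation-defined and typically dithered mapping, so this does not reproduce NVIDIA's exact level counts.

```python
def alpha_to_coverage_mask(alpha: float, num_samples: int) -> list[bool]:
    """Turn a fragment's alpha into a per-sample coverage mask.

    Assumption: simple round-to-nearest with no dithering.
    """
    alpha = min(max(alpha, 0.0), 1.0)
    covered = int(alpha * num_samples + 0.5)  # number of samples to keep
    return [i < covered for i in range(num_samples)]


def resolved_opacity(alpha: float, num_samples: int) -> float:
    """Opacity seen after the samples are averaged at resolve time."""
    mask = alpha_to_coverage_mask(alpha, num_samples)
    return sum(mask) / num_samples


def distinct_levels(num_samples: int, steps: int = 1001) -> int:
    """Count how many different opacities the scheme can express."""
    return len({resolved_opacity(i / (steps - 1), num_samples)
                for i in range(steps)})


if __name__ == "__main__":
    for n in (4, 8, 16, 32):
        print(n, "samples ->", distinct_levels(n), "levels")
```

In this simplified model the number of expressible opacities grows with the number of samples available to the mask, which is why extra coverage samples help billboards blend more smoothly.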

The second change is a new CSAA mode: 32x. 32x is designed to go hand-in-hand with the CSAA Alpha To Coverage changes by generating an additional 8 coverage samples over 16xQ mode for a total of 32 samples and giving a total of 63 possible levels of transparency on fake geometry using Alpha To Coverage.

In practice these first two changes haven’t had the effect we were hoping for. Coming from CES we thought this would greatly improve NVIDIA’s ability to anti-alias fake geometry using cheap multisampling techniques, but apparently Age of Conan is really the only game that greatly benefits from this. The ultimate solution is for more developers of DX10+ applications to enable Alpha To Coverage so that anyone’s MSAA hardware can anti-alias their fake geometry, but we’re not there yet.

So it’s the third and final change that’s the most interesting. NVIDIA has added a new Transparency Supersampling (TrSS) mode for Fermi (ed: and GT240) that picks up where the old one left off. Their previous TrSS mode only worked on DX9 titles, which meant that users had few choices for anti-aliasing fake geometry under DX10 games. This new TrSS mode works under DX10; it’s as simple as that.

So why is this a big deal? Because a lot of DX10 games have bad aliasing of fake geometry, including some very popular ones. Under Crysis in DX10 mode for example you can’t currently anti-alias the foliage, and even brand-new games such as Battlefield: Bad Company 2 suffer from aliasing. NVIDIA’s new TrSS mode fixes all of this.
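As a rough illustration of why supersampling helps alpha-tested foliage, the Python sketch below compares an alpha test run once per pixel with one run at every sample position. Everything here is a simplified assumption: a one-dimensional pixel, a hypothetical cutout texture with a hard edge, and evenly spaced samples. It is not NVIDIA's implementation.

```python
def texture_alpha(x: float, edge: float = 0.3) -> float:
    """Hypothetical cutout texture: opaque left of `edge`, transparent right."""
    return 1.0 if x < edge else 0.0


def alpha_test_per_pixel(pixel_left: float, threshold: float = 0.5) -> float:
    """Evaluate the alpha test once, at the pixel center (no TrSS)."""
    center = pixel_left + 0.5
    return 1.0 if texture_alpha(center) >= threshold else 0.0


def alpha_test_per_sample(pixel_left: float, num_samples: int = 4,
                          threshold: float = 0.5) -> float:
    """Evaluate the alpha test at every sample position and average."""
    passed = 0
    for i in range(num_samples):
        x = pixel_left + (i + 0.5) / num_samples  # evenly spaced samples
        if texture_alpha(x) >= threshold:
            passed += 1
    return passed / num_samples


if __name__ == "__main__":
    print("per pixel: ", alpha_test_per_pixel(0.0))
    print("per sample:", alpha_test_per_sample(0.0, 4))
```

The per-pixel test can only return fully covered or fully empty, which is what produces the hard, aliased edges on foliage. The per-sample version returns a fractional coverage, at the price of evaluating the texture once per sample rather than once per pixel.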

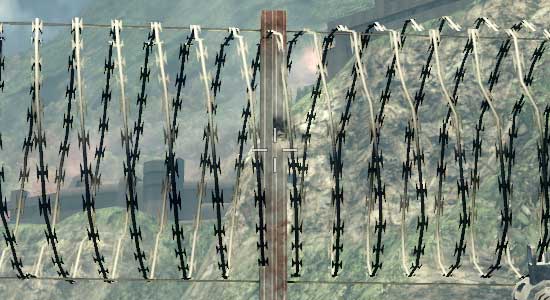

Bad Company 2 DX11 Without Transparency Supersampling

Bad Company 2 DX11 With Transparency Supersampling

The bad news is that it’s not quite complete. Oh, as you’ll see in our screenshots, it works, but the performance hit is severe. It’s currently super-sampling too much, resulting in massive performance drops. NVIDIA is telling us that this should be fixed next month, at which time the performance hit should be similar to that of the old TrSS mode under DX9. We’ve gone ahead and taken screenshots and benchmarks of the current implementation, but keep in mind that performance should be greatly improving next month.

So with that said, let’s look at the screenshots.

| NVIDIA GeForce GTX 480 | NVIDIA GeForce GTX 285 | ATI Radeon HD 5870 | ATI Radeon HD 4890 |
| --- | --- | --- | --- |
| 0x | 0x | 0x | 0x |
| 2x | 2x | 2x | 2x |
| 4x | 4x | 4x | 4x |
| 8xQ | 8xQ | 8x | 8x |
| 16xQ | 16xQ | DX9: 4x | DX9: 4x |
| 32x | DX9: 4x | DX9: 4x + AAA | DX9: 4x + AAA |
| 4x + TrSS 4x | DX9: 4x + TrSS | DX9: 4x + SSAA | |
| DX9: 4x | | | |
| DX9: 4x + TrSS | | | |

With the exception of NVIDIA’s new TrSS mode, very little has changed. Under DX10 all of the cards produce a very similar image. Furthermore, once you reach 4x MSAA, each card is producing a near-perfect image. NVIDIA’s new TrSS mode is the only standout for DX10.

We’ve also included a few DX9 shots, although we are in the process of moving away from DX9. This allows us to showcase NVIDIA’s old TrSS mode, along with AMD’s Adaptive AA and Super-Sample AA modes. Note how both TrSS and AAA do a solid job of anti-aliasing the foliage, which makes it all the more a shame that they haven’t been available under DX10.

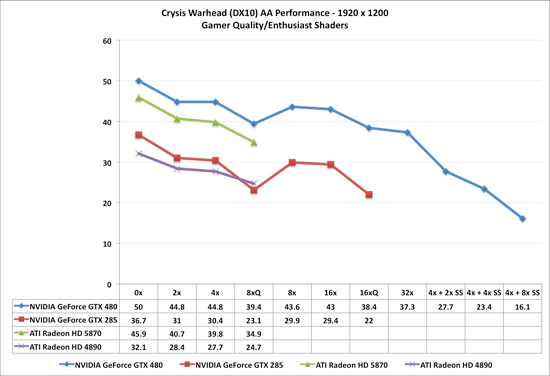

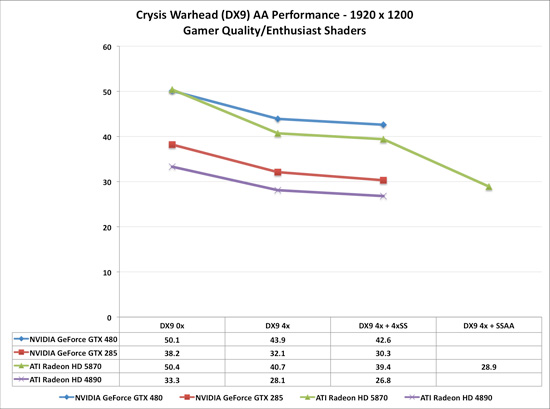

When it comes to performance, keep in mind that both AMD and NVIDIA have been trying to improve their 8x MSAA performance. When we reviewed the Radeon 5870 back in September we found that AMD’s 8x MSAA performance was virtually unchanged, and 6 months later that still holds true. The performance hit moving from 4x MSAA to 8x MSAA on both Radeon cards is roughly 13%. NVIDIA on the other hand took a stiffer penalty under DX10 for the GTX 285, where it fell by 25%. But now with NVIDIA’s 8x MSAA performance improvements for Fermi, that gap has been closed. The performance penalty for moving to 8x MSAA over 4x MSAA is only 12%, putting it right up there with the Radeon cards in this respect. With the GTX 480, NVIDIA can now do 8x MSAA as cheaply as AMD has been able to.
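To put those percentages in frame-rate terms, here is a small Python sketch of the arithmetic. The 100 fps baseline at 4x MSAA is a hypothetical number chosen for readability, not a benchmark result; only the 13%, 25% and 12% penalties come from the text above.

```python
def penalty_from_fps(fps_4x: float, fps_8x: float) -> float:
    """Fractional performance lost when moving from 4x to 8x MSAA."""
    return (fps_4x - fps_8x) / fps_4x


def fps_after_penalty(fps_4x: float, penalty: float) -> float:
    """Frame rate at 8x MSAA given the 4x frame rate and a penalty."""
    return fps_4x * (1.0 - penalty)


if __name__ == "__main__":
    baseline = 100.0  # hypothetical 4x MSAA frame rate, for illustration only
    penalties = {
        "Radeon cards": 0.13,
        "GTX 285": 0.25,
        "GTX 480": 0.12,
    }
    for card, penalty in penalties.items():
        fps_8x = fps_after_penalty(baseline, penalty)
        print(f"{card}: {baseline:.0f} fps -> {fps_8x:.0f} fps at 8x MSAA")
```

On the same hypothetical baseline, the GTX 285 would give up roughly twice as many frames as the GTX 480 or the Radeon cards when stepping up to 8x MSAA.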

Meanwhile we can see the significant performance hit on the GTX 480 for enabling the new TrSS mode under DX10. If NVIDIA really can improve the performance of this mode to near-DX9 levels, then they are going to have a very interesting AA option on their hands.

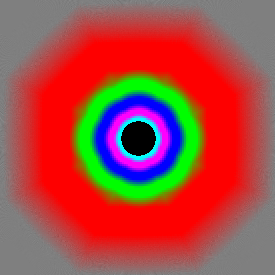

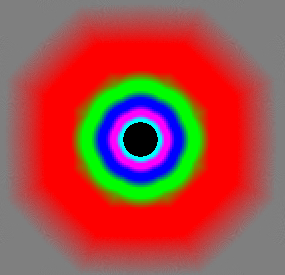

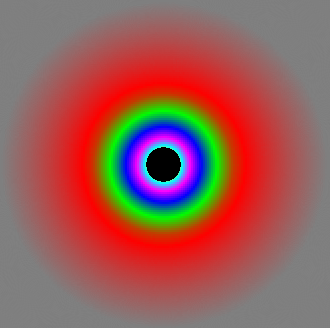

Last but not least, there’s anisotropic filtering quality. With the Radeon 5870 we saw AMD implement true angle-independent AF and we’ve been wondering whether we would see this from NVIDIA. The answer is no: NVIDIA’s AF quality remains unchanged from the GTX200 series. In this case that’s not necessarily a bad thing; NVIDIA already had great AF even if it was angle-dependent. More to the point, we have yet to find a game where the difference between AMD and NVIDIA’s AF modes has been noticeable; so technically AMD’s AF modes are better, but it’s not enough that it makes a practical difference.

GeForce GTX 480

GeForce GTX 285

Radeon 5870

196 Comments

Headfoot - Monday, March 29, 2010 - link

Unless you are an insider all of this "profitability" speculation is just that, useless speculation.

The reason they make both companies' chips is more likely due to diversification, if one company does poorly one round then they are not going to go down with them. I'd hate to make ATI chips during the 2900XT era and I'd hate to make nVidia chips during the 5800 FX era

blindbox - Saturday, March 27, 2010 - link

I know this is going to take quite a bit of work, but can't you colour up the main cards and its competition in this review? By main cards, I mean GTX 470, 480 and 5850 and 5870. It's giving me a hard time to make comparison. I'm sure you guys did this before.. I think.

It's funny how you guys only coloured the 480.

iwodo - Saturday, March 27, 2010 - link

If I remember correctly Nvidia makes nearly 30-40% of their profits from Tesla and Quadro. However Tesla and Quadro only occupy 10% of their total GPU volume shipment. Or 20% if we only count desktop GPU.

Which means Nvidia is selling those perfect grade Fermi 512 Shader chips to the most profitable market. And they are just binning these chips to lower grade GTX 480 and GTX 470. While Fermi did not provide the explosion of HPC sales as we initially expected due to heat and power issues, judging by pre-order numbers Nvidia still has quite a lot of orders to fulfill.

The best thing is we get another die shrink in late 2010 / early 2011 to 28nm. (It is actually ready for volume production in 3Q 2010.) This should bring lower power and heat. Hopefully the next update will get us a much better memory controller; a 256-bit controller and maybe 6GHz+ GDDR5 should offer enough bandwidth while getting better yield than a 384-bit controller.

Fermi may not be exciting now, but it will be in the future.

swing848 - Saturday, March 27, 2010 - link

We are not living in the future yet.

When the future does arrive I expect there will also be newer, better hardware.

Sunburn74 - Saturday, March 27, 2010 - link

So how do you guys test temps? It's not specifically stated. Are you using a case? An open bench? Using readings from a temp meter? Or system readings from catalyst or nvidia control panel? Please enlighten. It's important because people will eventually have to extrapolate your results to their personal scenarios which involve cases of various designs. 94 degrees measured inside a case is completely different from 94 degrees measured on an open bench.

Also, why are people saying all this stuff about switching sides and families? Just buy the best card available in your opinion. I mean it's not like ATI and Nvidia are feeding you guys and clothing your kids and paying your bills. They make gpus, something you plug into a case and forget about if it's working properly. I just don't get it :(

Ryan Smith - Saturday, March 27, 2010 - link

We're using a fully assembled and closed Thermaltake Spedo with a 120mm fan directly behind the video cards feeding them air. Temperatures are usually measured with GPU-Z unless for some reason it can't grab the temps from the driver.

hybrid2d4x4 - Saturday, March 27, 2010 - link

Thanks for elaborating on the temps as I was wondering about that myself. One other thing I'd like to know is how the VRM and RAM temps are on these cards. I'm assuming that the reported values are for just the core.

The reason I ask is that on my 4870 with aftermarket cooling and the fan set pretty low, my core always stayed well below 65, while the RAM went all the way up to 115 and VRMs up to ~100 (I have obviously increased fan speeds as the RAM temps were way too hot for my liking; they now peak at ~90)

Ryan Smith - Saturday, March 27, 2010 - link

Correct, it's just the core. We don't have VRM temp data for Fermi. I would have to see if the Everest guys know how to read it, once they add support.

shiggz - Friday, March 26, 2010 - link

I just am not interested in a card with a TDP over 175W. When I upgraded from 8800gt to GTX 260 it was a big jump in heat and noise and definitely at my tolerance limit during the summer months. I found myself under-clocking a card I had just bought.

175W max, though 150W is preferred, @ $250 and I am ready to buy. If NVIDIA won't make it then I will switch back to ATI.