6Gbps SATA Performance: AMD 890GX vs. Intel X58/P55

by Anand Lal Shimpi on March 25, 2010 12:00 AM EST - Posted in Storage

Earlier this month my Crucial RealSSD C300 died in the middle of testing for AMD’s 890GX launch. This was a problem for two reasons:

1) Crucial’s RealSSD C300 is currently shipping and selling to paying customers. The 256GB drive costs $799.

2) AMD’s 890GX is the first chipset to natively support 6Gbps SATA. The C300 is the first SSD to natively support the standard as well. Butter, meet toast.

Since then, Crucial dispatched a new drive and discovered what happened to my first drive (more on this in a separate update). While waiting for the autopsy report, I decided to look at 890GX 6Gbps performance since it was absent from my original review.

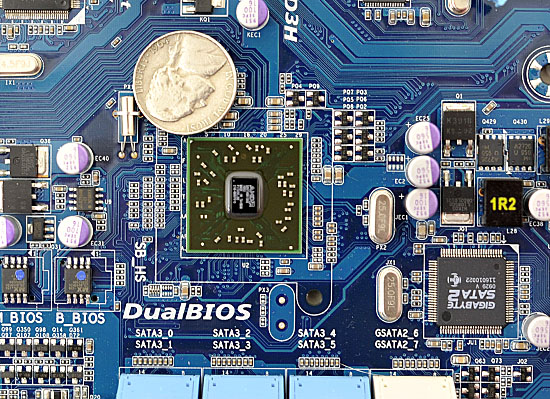

AMD's SB850 with Native 6Gbps SATA

In the 890GX review I found that AMD’s new South Bridge, the SB850, wasn’t quite as fast as Intel’s ICH/PCH when dealing with the latest crop of high performance SSDs. My concerns were particularly about high bandwidth or high IOPS situations, admittedly things that you only bump into if you’re spending a good amount of money on an SSD. Case in point, here is OCZ’s Vertex LE running on an AMD 890GX compared to an Intel H55:

| Iometer 6-22-2008 Performance | 2MB Sequential Read | 2MB Sequential Write | 4KB Random Read | 4KB Random Write (4K Aligned) |
|---|---|---|---|---|
| AMD 890GX | 248 MB/s | 217.5 MB/s | 38.4 MB/s | 130.1 MB/s |
| Intel H55 | 264.9 MB/s | 247.7 MB/s | 48.6 MB/s | 180 MB/s |

My concern was that if 3Gbps SSDs were underperforming on the SB850, then 6Gbps SSDs definitely would.
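To put the 3Gbps versus 6Gbps concern in numbers, here is a rough sketch of the usable bandwidth each SATA link rate leaves after 8b/10b encoding (10 line bits per data byte). It is a back-of-envelope estimate that ignores command and framing overhead, so the real ceilings sit somewhat lower; the helper name `sata_payload_mb_per_s` is illustrative, not from any tool.

```python
# Usable SATA payload bandwidth, assuming 8b/10b line encoding
# (10 bits on the wire per data byte) and ignoring protocol overhead.
def sata_payload_mb_per_s(line_rate_gbps):
    # Gbit/s -> Mbit/s, then divide by 10 line bits per byte
    return line_rate_gbps * 1000 / 10

ceiling_3g = sata_payload_mb_per_s(3.0)
ceiling_6g = sata_payload_mb_per_s(6.0)

# 2MB sequential read results for the Vertex LE from the table above (MB/s)
measured = {"AMD 890GX": 248.0, "Intel H55": 264.9}

for chipset, mbps in measured.items():
    print(f"{chipset}: {mbps:.1f} MB/s = {mbps / ceiling_3g:.1%} "
          f"of the {ceiling_3g:.0f} MB/s 3Gbps ceiling")
print(f"6Gbps ceiling: {ceiling_6g:.0f} MB/s")
```

Both chipsets already use more than 80% of the 3Gbps link in sequential reads, while a 6Gbps link doubles the theoretical ceiling, which is exactly the extra headroom a slower controller would fail to deliver.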

Other reviewers had mixed results with the SB850: some boards did well, others worse. I also discovered that AMD’s own internal testing is done on an internal reference board with both Cool’n’Quiet and SB power management disabled, which is why disabling CnQ improved performance in my results. As for why AMD does its own internal testing that way, your guess is as good as mine.

I received an ASUS 890GX board for this follow-up and updated it to the latest BIOS. That didn’t fix my performance problems. Using AMD’s latest SB850 AHCI drivers (1.2.0.164), however, did... sort of:

| Iometer 6-22-2008 Performance | 2MB Sequential Read | 2MB Sequential Write | 4KB Random Read | 4KB Random Write (4K Aligned) |
|---|---|---|---|---|
| AMD 890GX (3/2/10) | 248 MB/s | 217.5 MB/s | 38.4 MB/s | 130.1 MB/s |
| AMD 890GX (3/25/10) | 253.5 MB/s | 223.8 MB/s | 51.2 MB/s | 152.1 MB/s |
| Intel H55 | 264.9 MB/s | 247.7 MB/s | 48.6 MB/s | 180 MB/s |

Performance improved across the board, but the 890GX still trails Intel’s 3Gbps SATA controller in everything except random reads. Random read speed is now faster on the 890GX than on the H55 (though still slower than on X58).
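A quick way to check those claims is to compute percentage changes straight from the two tables. The short Python sketch below does that; the variable and function names are illustrative only.

```python
# Iometer results from the tables above, in MB/s (illustrative names).
tests = ["2MB seq read", "2MB seq write", "4KB random read", "4KB random write"]
amd_old = [248.0, 217.5, 38.4, 130.1]    # AMD 890GX (3/2/10)
amd_new = [253.5, 223.8, 51.2, 152.1]    # AMD 890GX (3/25/10), AHCI 1.2.0.164
intel_h55 = [264.9, 247.7, 48.6, 180.0]  # Intel H55

def pct_change(new, old):
    """Percentage change from old to new (positive means new is faster)."""
    return (new / old - 1) * 100

def faster_than(a, b):
    """Names of the tests where result set a beats result set b."""
    return [name for name, x, y in zip(tests, a, b) if x > y]

for name, old, new, h55 in zip(tests, amd_old, amd_new, intel_h55):
    print(f"{name:16s} driver gain {pct_change(new, old):+5.1f}%   "
          f"vs H55 {pct_change(new, h55):+5.1f}%")

print("890GX ahead of H55 in:", faster_than(amd_new, intel_h55))
```

Every test improves with the newer driver, and only the 4KB random read result comes out ahead of the H55.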

The best part of it all is that I no longer had to disable CnQ or C1E to get this performance. I will note that my performance is still lower than what AMD gets on its internal reference board, and that performance on third-party boards varies significantly from one board to the next depending on board and BIOS revisions. But at least we’re getting somewhere.

In testing the 890GX, I decided to look into how Intel’s chipsets perform with this new wave of high performance SSDs. It’s not as straightforward as you’d think.

57 Comments


vol7ron - Thursday, March 25, 2010

It would be extremely nice to see any RAID tests; I've been asking Anand for months. I think he said a full review is coming, though of course he could have just been toying with my emotions.

nubie - Thursday, March 25, 2010

Is there any logical reason you couldn't run a video card with x15 or x14 links and send the other 1 or 2 lanes off to the 6Gbps and USB 3.0 controllers?

As far as I am concerned it should work (and I have a GeForce 6200 modified to x1 with a Dremel that has been in use for the last couple of years).

Maybe the drivers or video bios wouldn't like that kind of lane splitting on some cards.

You can test this yourself quickly by applying some Scotch tape over a few of the signal pairs on the end of the video card; you should then be able to see whether modern cards have any trouble linking at x9-x15 link widths.

nubie - Thursday, March 25, 2010

Not to mention, where are the x4 6Gbps cards?

wiak - Friday, March 26, 2010

The Marvell chip is a PCIe 2.0 x1 chip anyway, so it's limited to that speed regardless of its interface to the motherboard. At least this says so:

https://docs.google.com/viewer?url=http://www.marv...

The same goes for NEC's USB 3.0 controller; it's also a PCIe 2.0 x1 chip.
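The x1 point can be checked with rough arithmetic. Assuming 8b/10b encoding on PCIe 1.x/2.0 and on SATA 6Gbps, and ignoring packet and protocol overhead, one PCIe lane carries about 250 MB/s (1.0) or 500 MB/s (2.0) per direction, both below the roughly 600 MB/s a single 6Gbps SATA link can carry. The names below are hypothetical helpers for illustration.

```python
# Rough one-direction payload rates, assuming 8b/10b encoding
# (10 line bits per data byte) and ignoring packet/protocol overhead.
PCIE_GT_PER_S = {"1.0": 2.5, "2.0": 5.0}  # transfer rate per lane

def pcie_mb_per_s(gen, lanes):
    """Approximate payload bandwidth of a PCIe link in MB/s, one direction."""
    per_lane = PCIE_GT_PER_S[gen] * 1000 / 10
    return per_lane * lanes

SATA_6G_MB_PER_S = 6.0 * 1000 / 10  # about 600 MB/s after 8b/10b

for gen in ("1.0", "2.0"):
    link = pcie_mb_per_s(gen, 1)
    status = "bottleneck" if link < SATA_6G_MB_PER_S else "enough"
    print(f"PCIe {gen} x1: {link:.0f} MB/s vs SATA 6Gbps "
          f"{SATA_6G_MB_PER_S:.0f} MB/s -> {status}")
```

Under these assumptions even a PCIe 2.0 x1 controller caps a 6Gbps port below its line rate, and a PCIe 1.0 x1 slot roughly halves what is left.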

JarredWalton - Thursday, March 25, 2010

Like many computer interfaces, PCIe is designed to work in powers of two. You could run x1, x2, x4, x8, or x16, but x3 or x5 aren't allowable configurations.

nubie - Thursday, March 25, 2010

OK, x12 is accounted for according to this: http://www.interfacebus.com/Design_Connector_PCI_E...

> PCI Express supports 1x [2.5Gbps], 2x, 4x, 8x, 12x, 16x, and 32x bus widths

I wonder about x14, as it should offer much greater bandwidth than x8.

I suppose I could do some informal testing here and see what really works, or maybe do some internet research first because I don't exactly have a test bench.
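On the x14 question: as far as the widths quoted above go, a link can only train to one of the listed widths, so in this simplified model a card with 14 usable lanes would come up at x12 at best, and at x8 if either end lacks x12 support. The sketch below is deliberately simplified (real link training also deals with lane ordering, lane reversal, and per-lane failures); `negotiated_width` and `NO_X12` are hypothetical names, not part of any driver or tool.

```python
# Link widths listed in the interfacebus.com excerpt quoted above.
SPEC_WIDTHS = (1, 2, 4, 8, 12, 16, 32)

def negotiated_width(working_lanes, device_widths=SPEC_WIDTHS,
                     slot_widths=SPEC_WIDTHS):
    """Simplified model: pick the widest width that both ends support
    and that fits within the lanes actually connected (0 if none)."""
    common = set(device_widths) & set(slot_widths)
    usable = [w for w in common if w <= working_lanes]
    return max(usable) if usable else 0

# Hypothetical card that only implements x1/x4/x8/x16 (no x12 support).
NO_X12 = (1, 4, 8, 16)

for lanes in (16, 14, 9):
    print(f"{lanes} working lanes -> x{negotiated_width(lanes)} "
          f"(x{negotiated_width(lanes, device_widths=NO_X12)} without x12)")
```

Under this model an x14 link is never reached: the spare lanes are simply left unused rather than adding bandwidth over x12 or x8.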

mathew7 - Thursday, March 25, 2010

While 12x is good for 1 card, I wonder how feasible 6x would be for 2 gfx cards.

nubie - Thursday, March 25, 2010

Even AMD agrees on the x12 link width: http://www.amd.com/us-en/Processors/ComputingSolut...

Seems like it could be an acceptable compromise on some platforms.

JarredWalton - Thursday, March 25, 2010

x12 is the exception to the powers of two, you're correct. I'm not sure it would really matter much; Anand's results show that even with plenty of extra bandwidth (e.g. in a PCIe 2.0 x16 slot), the SATA 6G connection doesn't always perform the same. It looks like BIOS tuning is at present more important than other aspects, provided of course that you're not on a PCIe 1.0 x1 link.

iwodo - Thursday, March 25, 2010

Well, we are speaking in terms of graphics: a graphics card could work in x12 instead of x16, or even x10, thereby saving I/O space. Just wondering, what is the status of PCIe 3.0?