The Intel Xeon 5670: Six Improved Cores

by Johan De Gelas on March 16, 2010 3:39 PM EST. Posted in IT Computing

The new Xeon “Westmere” 5600 series has arrived. It is basically an improved 32nm version of the impressive Xeon 5500 series “Nehalem” CPU. The new Xeon won’t make a big splash like the Xeon 5500 series did back in March 2009. But who cares? Each core in the Xeon 5600 is a bit faster than its already excellent older brother, and you get an extra bonus. You choose: in the same power envelope you get two extra cores or a 5-10% higher clock speed. Or, if you keep the number of cores and the clock speed constant, you get lower power consumption. The most thrifty quad-core Xeon is now specced at a 40W TDP instead of 60W.

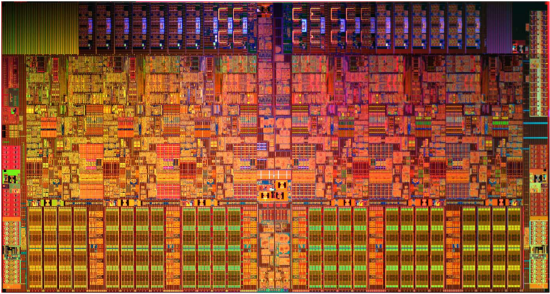

The Westmere Die: an enlarged Nehalem. Trivia: Notice the unused space on the top left

Intel promises up to 40% better performance or up to 30% lower power. The Xeon 5600 can use the same servers and motherboards as the Xeon 5500 after a BIOS update, making the latter almost redundant. Promising, but nothing beats some robust independent benchmarking to check the claims.

So we plugged the Westmere EP CPUs into our ASUS server and started work on a new server CPU comparison. Only one real problem: our two Xeon X5670s together are good for 12 cores and 24 simultaneous threads. Few applications can cope with that, so we shifted our focus even more towards virtualization. We added Hyper-V to our benchmark suite, hopefully an answer to the suggestion that we should look at virtualization platforms other than VMware. For those of you looking for open source benchmarks, we will follow up with those in April.

Platform Improvements

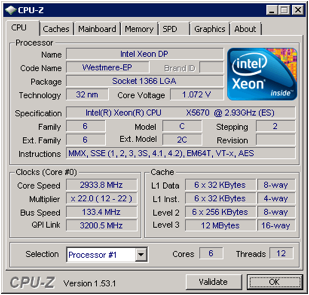

Westmere is more than just a die-shrunk Nehalem. In this review we're taking a look at the 2.93GHz Xeon X5670, the successor to the 2.93GHz Xeon X5570.

The most obvious improvement is that the X5670 comes with six instead of four cores, and a 12MB L3 cache instead of an 8MB cache. But there are quite a few more subtle tweaks under the hood:

- Virtualization: VM exit latency reductions

- Power management: An “uncore” power gate and support for low power DDR3

- TLB improvements: Address Space IDs (ASID) and 1 GB pages

- Yet another addition to the already incredibly crowded x86 ISA (AES-NI).
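On a Linux host, one hedged way to check whether a CPU exposes two of the features listed above is to look at the `flags` line of `/proc/cpuinfo`. The sketch below assumes the usual Linux flag names `aes` (AES-NI) and `pdpe1gb` (1 GB pages). The helper names `parse_cpu_flags` and `supported_features` are made up for illustration and are not part of any tool used in this review.

```python
from pathlib import Path

# Assumed Linux /proc/cpuinfo flag names for two of the features above.
FEATURE_FLAGS = {
    "AES-NI": "aes",
    "1 GB pages": "pdpe1gb",
}


def parse_cpu_flags(cpuinfo_text):
    """Return the set of flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Match only the plain 'flags' key, not e.g. 'vmx flags'.
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def supported_features(cpuinfo_text):
    """Map each feature name to True/False depending on its flag."""
    flags = parse_cpu_flags(cpuinfo_text)
    return {name: flag in flags for name, flag in FEATURE_FLAGS.items()}


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        for name, present in supported_features(cpuinfo.read_text()).items():
            print(f"{name}: {'yes' if present else 'no'}")
    else:
        print("/proc/cpuinfo not found (not a Linux host?)")
```

Whether an operating system or hypervisor actually uses these features is a separate question; the flags only show that the hardware advertises them.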

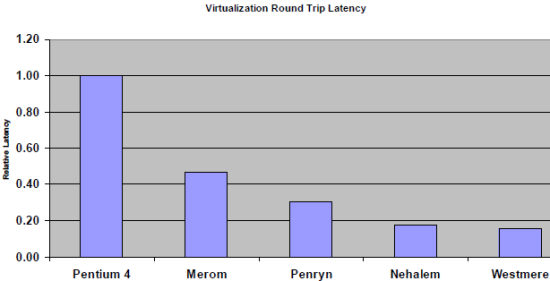

Just a few years ago, many ESX based servers used binary translation to virtualize their VMs. Binary translation used clever techniques to avoid transitions to the hypervisor. In the case of the Pentium 4 Xeons, using software instead of hardware virtualization was even a best practice. As we explained earlier in “Hardware virtualization: the nuts and bolts”, hardware virtualization can be faster than software virtualization so long as VM to hypervisor transitions happen quickly. The new Xeon 5600 Westmere does this about 12% faster than Nehalem.

Pretty impressive, if you consider that this makes Westmere switch between hypervisor and VM twice as fast as the “Xeon 5400” series (based on the Penryn architecture), which itself was fast. As the share of VM-hypervisor-VM transitions in total hypervisor overhead gets lower, we don’t expect to see huge gains though. Hypervisor overhead is probably already dominated by other factors such as emulating I/O operations.
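To make the "no huge gains" argument concrete, here is a rough Amdahl-style sketch. It assumes that "12% faster" means the transition time is divided by 1.12, and the transition shares used are purely hypothetical values chosen for illustration, not measurements from this review.

```python
def overhead_after_speedup(transition_share, speedup):
    """Remaining hypervisor overhead as a fraction of the original overhead.

    transition_share: fraction of hypervisor overhead spent in
                      VM-hypervisor-VM transitions (hypothetical input).
    speedup:          how much faster the transitions become (1.12 = 12%).
    """
    if not 0.0 <= transition_share <= 1.0:
        raise ValueError("transition_share must be between 0 and 1")
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    untouched = 1.0 - transition_share
    return untouched + transition_share / speedup


if __name__ == "__main__":
    # Hypothetical shares: the smaller the share, the smaller the gain.
    for share in (0.5, 0.2, 0.05):
        remaining = overhead_after_speedup(share, 1.12)
        print(f"share {share:.0%}: overhead drops by {1 - remaining:.1%}")
```

Under these assumptions, if transitions made up 20% of hypervisor overhead, a 12% faster transition would trim the total overhead by only about 2%.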

The Xeon 3400 “Lynnfield” was the first to get an uncore power gate (the uncore is primarily the L3 cache). An uncore power gate reduces leakage power to a minimum when the whole CPU is in a deep sleep state. In typical server conditions, we don’t think this will happen often. Shutting down the uncore means, after all, that all your cores (even those on the other CPU) must be sleeping too. If even one core is the slightest bit active, the L3 cache and memory controller must keep working. We discussed server power management, including power gating, in detail in an earlier article.

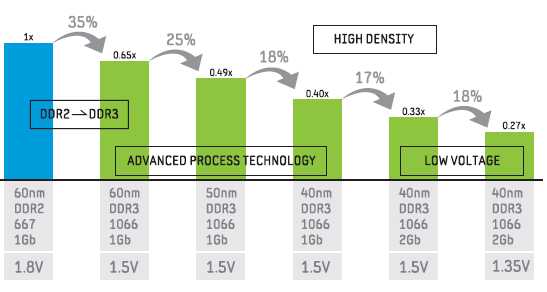

The fact that Westmere's memory controller supports low power DDR3 might have a much larger impact on your server’s power consumption. In a server with 32GB or more memory, it is not uncommon for the RAM to account for about a quarter of the total server power consumption. Moving to 40nm low power DDR3 drops DRAM voltage from 1.5V to 1.35V, which can make a big impact on that quarter of server power.

According to Samsung, 48 GB of 40nm low power DDR3-1066 should use about 28W on average (an average of 16 hours idle and 8 hours of load). This compares favorably with the 66W for early 60nm DDR3 and with the currently popular 50nm based DRAM, which should consume about 50W. So in a typical server configuration, you could save, roughly estimated, 22W or about 10% of the total server power consumption.
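The arithmetic behind that estimate can be sketched as follows. The wattages are the Samsung figures quoted above. The voltage helper uses the first-order rule that dynamic CMOS power scales with the square of the supply voltage, which is an assumption added here for illustration and not a claim from Samsung or Intel. All function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def watts_saved(old_w, new_w):
    """Average power saved when moving from the old DRAM to the new DRAM."""
    return old_w - new_w


def implied_server_power(saved_w, share_of_total):
    """Total server power implied by 'saved_w is share_of_total of the server'."""
    return saved_w / share_of_total


def voltage_scaling_factor(v_old, v_new):
    """First-order dynamic power ratio, assuming power scales with V squared."""
    return (v_new / v_old) ** 2


def annual_kwh(avg_watts):
    """Energy used (or saved) over one year at a constant average power."""
    return avg_watts * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    saved = watts_saved(50.0, 28.0)  # 50nm DDR3 vs 40nm low power DDR3
    print(f"saved: {saved:.0f} W")
    print(f"implied server power: {implied_server_power(saved, 0.10):.0f} W")
    print(f"V^2 scaling 1.5V -> 1.35V: {voltage_scaling_factor(1.5, 1.35):.2f}")
    print(f"saved per year: {annual_kwh(saved):.1f} kWh")
```

The 22W saving equals 10% of the total only for a server drawing roughly 220W. Under the V-squared assumption, the voltage drop alone would account for about a 19% reduction in dynamic power, so the rest of the quoted saving would have to come from elsewhere, presumably the smaller process.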

AMD has more than once confirmed that they would not use DDR3 before low power DDR3 was available. So we expect this low power DDR3 to be quite popular.

There is more. The Xeon 5600 also supports more memory and higher clock speeds. You can now use up to two DIMMs at 1333MHz, while the Xeon 5500 would throttle back to 1066MHz if you did this. The Xeon 5500 was also limited to 12 x 16 GB or 192 GB. If you have very deep pockets, you can now cram 18 of those ultra expensive DIMMs in there, good for 288 GB of DDR3-1066!

Deeper buffers allow Westmere's memory controller to be more efficient: a dual Xeon X5670 reaches 43 GB/s, while the older X5570 was stuck at 35 GB/s with DDR3-1333. That will make the X5670 quite a bit faster than its older brother in bandwidth intensive HPC software.
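To put those two numbers in perspective, the sketch below compares them with the theoretical peak. It assumes three DDR3 channels per socket, a 64-bit (8 byte) data path per channel, and that both measurements were taken with DDR3-1333; none of these assumptions is stated explicitly in the text above, and the function name is hypothetical.

```python
def peak_bandwidth_gbs(mega_transfers_per_s, channels_per_socket=3,
                       sockets=2, bytes_per_transfer=8):
    """Theoretical peak memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_s = (mega_transfers_per_s * 1e6 * bytes_per_transfer
                   * channels_per_socket * sockets)
    return bytes_per_s / 1e9


if __name__ == "__main__":
    peak = peak_bandwidth_gbs(1333)  # dual socket, DDR3-1333
    measured = {"X5670": 43.0, "X5570": 35.0}  # GB/s, as quoted above
    print(f"theoretical peak: {peak:.1f} GB/s")
    for cpu, gbs in measured.items():
        print(f"{cpu}: {gbs:.0f} GB/s = {gbs / peak:.0%} of peak")
    print(f"X5670 vs X5570: {measured['X5670'] / measured['X5570'] - 1:.0%} more")
```

Under these assumptions the peak is about 64 GB/s, so the X5670 result corresponds to roughly 67% of peak versus roughly 55% for the X5570, an improvement of about 23% in measured bandwidth.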

40 Comments


DigitalFreak - Tuesday, March 16, 2010

Are you seriously going to buy a dual socket server (or workstation at a minimum) to play games? I'd rather see them take the time to do more enterprise benchmarking than waste it on what 0.00001% of the market wants.

Starglider - Wednesday, March 17, 2010

No but some HPC / CAD / scientific computing benchmarks would be good. Presumably we'll get the full suite when Nehalem EX and Magny Cours turn up.

vitchilo - Tuesday, March 16, 2010

I want to encode video, I mean a s***load of video + play games from time to time.

rajod1 - Monday, February 1, 2016

You see you are writing server cpu reviews to punk kids that somehow only think of playing a game on a server. They just do not get it. Babies with computers, maybe this could play mario. These are good for boring server work, database, HyperV, etc. ECC ram. And they are still the best bang for the buck in a used server in 2016.

Starglider - Tuesday, March 16, 2010

> You can now use up to two DIMMs at 1333MHz, while the Xeon 5500 would throttle back to 1066MHz if you did this.

Presumably you mean 'up to two DIMMs per channel'?

DigitalFreak - Tuesday, March 16, 2010

Not sure about the 2 DIMMs per channel forcing 1066Mhz. We've been ordering Dell R710s with the X5570 and 12x4GB of memory, which runs at 1333Mhz.

TurboMax3 - Wednesday, March 17, 2010

You are right. I work for Dell; since a couple of months after the launch of the 5500 Xeons we could do 2 DIMMs per channel (DPC) at 1333 MHz. It is a property of the chipset, rather than the CPU. Also, going to 3 DPC will clock the memory down to 800 MHz, and this has been available in R710 (and similar products from others) for some time now.

The 8GB DIMM is getting cheap enough to be quoted without shame. 16 GB DIMMS still cost as much as my car.

Navier - Tuesday, March 16, 2010

Do you have information on Nehalem-EX and how that is going to fit in the updated road map with the latest 6 core systems?

DigitalFreak - Tuesday, March 16, 2010

The Nehalem-EX (probably called the Xeon 7500 series) are for quad socket boxes. From what I've been hearing, they should be released on 3/30. Not sure when the Poweredge R910 and Proliant DL580 G7 will show up though.

duploxxx - Wednesday, March 17, 2010

it is launched on 30/3 but actually only available mid june, call it a paper launch or whatever you want.