The Core i7 980X Review: Intel's First 6-Core Desktop CPU

by Anand Lal Shimpi on March 11, 2010 12:00 AM EST - Posted in CPUs

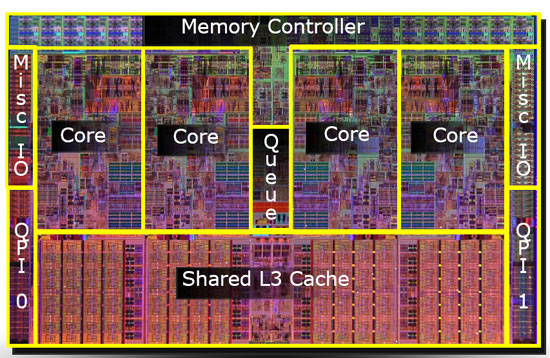

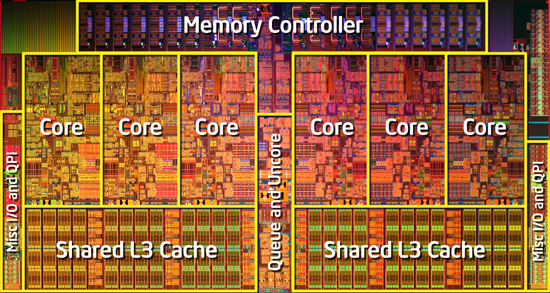

A 12MB L3 Cache: 50% Larger, 14% Higher Latency

Gulftown sticks to Ronak’s 2MB of L3 per core rule and has a full 12MB shared L3 cache that’s accessible by any or all of the six cores. The large unified L3 is also why applications that don’t use all six cores can still perform better than they do on quad-core Core i7s.

Nehalem

Gulftown

The added cache does come at the expense of higher access latency. Nehalem and Lynnfield had a 42-cycle 8MB L3; Gulftown has a 48-cycle 12MB L3. That's 14% higher latency for a 50% increase in size. Not an insignificant penalty, but a tradeoff that makes sense.
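The percentages in the section title follow directly from the cycle counts and cache sizes quoted above. A minimal sketch of that arithmetic (the helper name `percent_increase` is only illustrative):

```python
# L3 figures quoted in the text: size in MB, access latency in cycles.
nehalem_l3_mb, nehalem_l3_cycles = 8, 42
gulftown_l3_mb, gulftown_l3_cycles = 12, 48


def percent_increase(old, new):
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


size_gain = percent_increase(nehalem_l3_mb, gulftown_l3_mb)
latency_gain = percent_increase(nehalem_l3_cycles, gulftown_l3_cycles)

print(f"L3 size:    +{size_gain:.0f}%")     # +50%
print(f"L3 latency: +{latency_gain:.1f}%")  # +14.3%
```

The latency figure rounds down to the 14% used in the heading.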

Identical Power Consumption to the Core i7 975

Raja has been busy with his DC clamp meter measuring actual power consumption of CPUs themselves rather than measuring power consumption at the wall. The results below compare the actual power draw of the 32nm Core i7 980X to the 45nm Core i7 975. These values are just the CPU itself, the rest of the system is completely removed from the equation:

| CPU | Intel Core i7 980X | Intel Core i7 975 |
| --- | --- | --- |
| Idle | 6.3W | 6.3W |
| Load | 136.8W | 133.2W |

Thanks to power gating, both of these chips idle at 6.3W. That's ridiculous for a 1.17B transistor chip. Under full load, the two are virtually identical: 136.8W for the 980X versus 133.2W for the 975.
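To put "virtually identical" into numbers, here is a rough sketch using only the measurements from the table. The per-core figure is a naive division of package power by core count (six for the 980X, four for the quad-core 975); it ignores the share drawn by the L3 and the rest of the uncore, so treat it as a ballpark rather than a real per-core measurement.

```python
# CPU-only power draw from the table above, in watts.
power_w = {
    "Core i7 980X": {"idle": 6.3, "load": 136.8, "cores": 6},
    "Core i7 975": {"idle": 6.3, "load": 133.2, "cores": 4},
}

new, old = power_w["Core i7 980X"], power_w["Core i7 975"]

# Absolute and relative difference in load power.
load_delta_w = new["load"] - old["load"]
load_delta_pct = load_delta_w / old["load"] * 100

# Naive load power per core (uncore power is lumped in).
per_core_w = {name: d["load"] / d["cores"] for name, d in power_w.items()}

print(f"Load delta: {load_delta_w:.1f}W ({load_delta_pct:.1f}%)")
for name, watts in per_core_w.items():
    print(f"{name}: {watts:.1f}W per core under load")
```

On these numbers the 980X draws under 3% more power at load while carrying 50% more cores.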

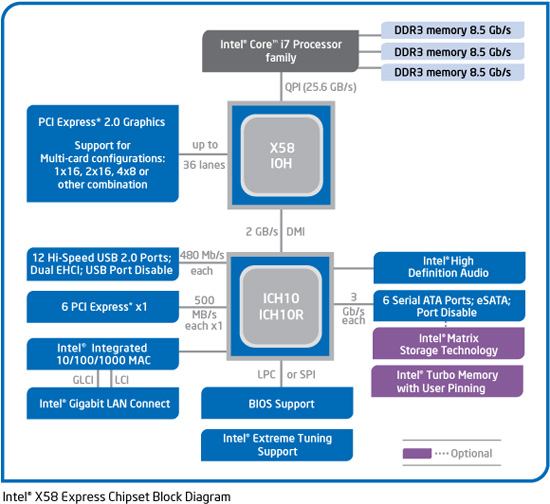

Westmere Goodness

The Core i7 980X is the first LGA-1366 processor based on Intel’s Westmere architecture, but unlike Clarkdale it does not have an on-die PCIe controller or on-package graphics. If anything, Gulftown really looks like a 6-core, 32nm version of Bloomfield rather than a scaled-up Clarkdale. The similarities to Bloomfield extend all the way to the memory controller. Gulftown only officially supports three channels of DDR3-1066 memory, not DDR3-1333 like Lynnfield or Clarkdale. Of course, running DDR3-1333 memory is possible, but the memory controller is technically operating out of spec at that frequency.

The processor does retain the Westmere-specific features, mainly improved power efficiency and AES-NI instructions. The first Core i7 did not power gate its L3 cache; Lynnfield added it and Gulftown has it as well. I looked at the performance improvement offered by AES-NI acceleration in our Clarkdale review. If you use BitLocker or do a lot of archive encryption/decryption, you'll appreciate Gulftown's AES-NI.
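If you want to check whether a given chip exposes AES-NI, one option on x86 Linux is to look for the `aes` entry in the `flags` line of `/proc/cpuinfo`. The sketch below assumes that platform only (it does not cover Windows, where BitLocker lives), and the function name `has_aes_ni` is just illustrative.

```python
from pathlib import Path


def has_aes_ni(cpuinfo_text: str) -> bool:
    """Return True if the first x86 'flags' line lists the 'aes' flag."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            # Flags are whitespace-separated tokens; match the whole token.
            return "aes" in value.split()
    return False


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        print("AES-NI reported:", has_aes_ni(cpuinfo.read_text()))
    else:
        print("No /proc/cpuinfo here; this sketch only covers Linux.")
```

Matching whole tokens rather than substrings avoids false positives from unrelated flag names that happen to contain "aes".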

102 Comments

palominoforever - Thursday, March 11, 2010 - link

Please test 7-zip compression with 7z 9.x, which supports the LZMA2 algorithm that can support 16 cores. It runs much faster than 7z 4.x on my i7 920.

just4U - Thursday, March 11, 2010 - link

I've had the 920 for 13 months now and seeing this review makes me want to do a little dance. (I also have a PII 920 I like very much.) The 920 holds up well I think overall. Some will be horrified to know that I run it at stock. It can go quite high and I've got it set up with aftermarket cooling, but I haven't really found a need to OC it as is. Someday I am sure I'll run it into the ground but not yet! A good purchase over a year ago, and still a worthy buy today.. or the 930 I guess, since that's its replacement.

Looks like it will be a while before I move to 6 cores. I wonder what AMD's offering will be like.

Ben90 - Thursday, March 11, 2010 - link

I'll be your dancing partner. It seems Intel is having a problem cranking up gaming performance after the Core2 series compared to other categories. Not having a fat cache limited Bloomfield performance, and it seems a slower L3 cache is dragging down Gulftown.

I'm not expecting the 47% gains like in ray-tracing, and in general Bloomfield/Gulftown has increased gaming performance; however, there are situations where a previous generation has a more suitable architecture. It would be nice to have a "BAM! CHECK ME OUT!" product such as Conroe where it absolutely swept everything, and for current gamers, Gulftown is not that. I'm sure, however, that in the future the extra cores will lend themselves to more improvements.

B3an - Thursday, March 11, 2010 - link

Come on people... You can't judge this CPU with games. It should be pretty obvious it wasn't going to do much in that area anyway.

There are still loads of games that are poor at making use of quad-core, let alone 6-core. In fact every single game I have uses less than 30% CPU on my 4.1GHz i7 920. A lot are under 15%. That's just pathetic.

And only recently has quad started to make a decent difference over dual-core with some games.

I'm sure this CPU will have a longer life span for gaming performance when games actually start using PC CPUs better in the future, but that's probably years away, as most games are console ports these days which are made with vastly slower console CPUs in mind.

just4U - Thursday, March 11, 2010 - link

I disagree. I don't think this CPU will have a longer life span. My thinking is that when the current generation of CPUs finally start showing their age and can no longer cut it, then you'd be upgrading anyway. Don't really matter if you have a 920, Q9X, a PIIX4, or even the 980X..... They are just that fast. Sure, some are faster than others but we're not talking night and day differences here.

As an enthusiast and as someone who builds a great deal of computers I will likely have a new CPU long before I really need it. But that's more of a question of "WANT" rather than "NEED", you know?

Those sitting on a dual core and thinking of pulling the trigger on this puppy will be the ones who benefit from a purchase like this. The rest of us ... mmm not so much.

HotFoot - Thursday, March 11, 2010 - link

They can very well judge the CPU based on games, if gaming is what they do and the reason they'd consider upgrading. My most taxing application is gaming, and so I see little reason to move beyond my overclocked E8500.

Otherwise, it's just trying to find a need for the solution, rather than the other way around. If I spent time doing tasks this CPU shined at, I'd be very excited about it.

Further to my point, I disagree with the article stating this is the best CPU for playing WoW. I would argue that a CPU costing 1/10 as much that still feeds your GPU fast enough to hit the 60 fps cap is a better CPU for playing WoW.

Dadofamunky - Thursday, March 11, 2010 - link

When a program like SysMark shows a crappy P4 getting 40% on average against the latest and greatest, it's definitely time for a new benchmark program. There's no way that P4EE ever comes that close in the real world. It's time to drop SysMark from the benching suite. It's like using 3DMark03 for video card benchmarking.

JonnyDough - Thursday, March 11, 2010 - link

That would be true, except that it isn't a Pentium 4, and this synthetic benchmark isn't supposed to be accurate, just give you an overall idea of how a CPU fares in relation to others. The Pentium 955 in question is a 65nm Presler core, not an old socket 478 chip...

Dadofamunky - Thursday, March 11, 2010 - link

It's helpful to know what you're talking about before you correct me. Presler IS P4, and I noted it as a P4EE. And of course ignoring my point is not a good way to refute it.

piroroadkill - Thursday, March 11, 2010 - link

Presler IS a Pentium 4