At this year’s Consumer Electronics Show, NVIDIA had several things going on. In a public press conference they announced 3D Vision Surround and Tegra 2, while on the show floor they had products o’plenty, including a GF100 setup showcasing 3D Vision Surround.

But if you’re here, then what you’re most interested in is what wasn’t talked about in public, and that was GF100. With the Fermi-based GF100 GPU finally in full production, NVIDIA was ready to talk to the press about the rest of GF100, and at the tail-end of CES we got our first look at GF100’s gaming abilities, along with a hands-on look at some unknown GF100 products in action. The message NVIDIA was trying to send: GF100 is going to be here soon, and it’s going to be fast.

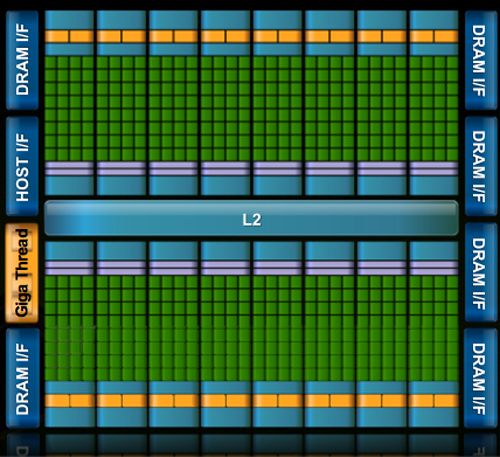

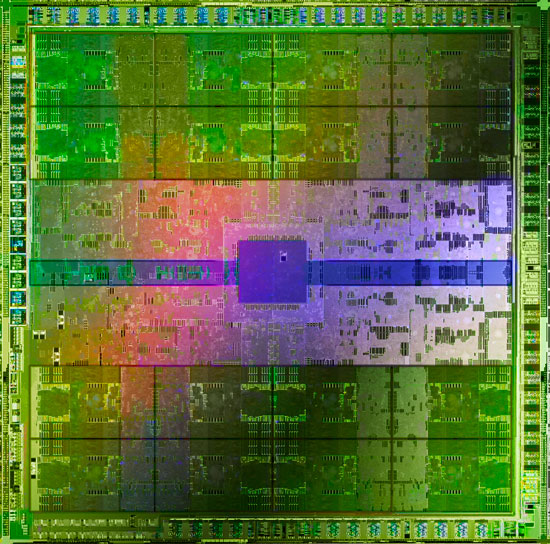

Fermi/GF100 as announced in September of 2009

Before we get too far ahead of ourselves though, let’s talk about what we know and what we don’t know.

During CES, NVIDIA held deep dive sessions for the hardware press. At these deep dives, NVIDIA focused on three things: discussing GF100’s architecture as it is relevant to a gaming/consumer GPU, discussing their developer relations program (including the infamous Batman: Arkham Asylum anti-aliasing situation), and finally demonstrating GF100 in action on some games and some productivity applications.

Many of you have likely already seen the demos, as videos of what we saw have been on YouTube for a few days now. What you haven’t seen, and what we’ll be focusing on today, is what we’ve learned about GF100 as a gaming GPU. We now know everything about what makes GF100 tick, and we’re going to share it all with you.

With that said, while NVIDIA is showing off GF100, they aren’t showing off the final products. As such we can talk about the GPU, but we don’t know anything about the final cards. All of that will be announced at a later time – and no, we don’t know when that will be either. In short, here’s what we still don’t know and will not be able to cover today:

- Die size

- What cards will be made from the GF100

- Clock speeds

- Power usage (we only know that it’s more than GT200)

- Pricing

- Performance

At this point the final products and pricing are going to heavily depend on what the final GF100 chips are like. The clock speeds NVIDIA can get away with will determine power usage and performance, and by extension, pricing. Make no mistake though, NVIDIA is clearly aiming to be faster than AMD’s Radeon HD 5870, so form your expectations accordingly.

For performance in particular, we have seen one benchmark: Far Cry 2, running the Ranch Small demo, with NVIDIA running it on both their unnamed GF100 card and a GTX 285. The GF100 card was faster (84fps vs. 50fps), but as Ranch Small is a semi-randomized benchmark (certain objects are in some runs and not others) and we’ve seen Far Cry 2 be CPU-limited in other situations, we don’t put much faith in this specific benchmark. When it comes to performance, we’re content to wait until we can test GF100 cards ourselves.

With that out of the way, let’s get started on GF100.

115 Comments

chizow - Monday, January 18, 2010 - link

Looks like Nvidia G80'd the graphics market again by completely redesigning major parts of their rendering pipeline. Clearly not just a doubling of GT200, some of the changes are really geared toward the next-gen of DX11 and PhysX driven games.

One thing I didn't see mentioned anywhere was HD sound capabilities similar to AMD's 5 series offerings. I'm guessing they didn't mention it, which makes me think its not going to be addressed.

mm2587 - Monday, January 18, 2010 - link

for nvidia to "g80" the market again they would need parts far faster then anything amd had to offer and to maintain that lead for several months. The story is in fact reversed. AMD has the significantly faster cards and has had them for months now. gf100 still isn't here and the fact that nvidia isn't signing the praises of its performance up and down the streets is a sign that they're acceptable at best. (acceptable meaning faster then a 5870, a chip that's significantly smaller and cheaper to make)

chizow - Monday, January 18, 2010 - link

Nah, they just have to win the generation, which they will when Fermi launches. And when I mean "generation", I mean the 12-16 month cycles dictated by process node and microarchitecture. It was similar with G80, R580 had the crown for a few months until G80 obliterated it. Even more recently with the 4870X2 and GTX 295. AMD was first to market by a good 4 months but Nvidia still won the generation with GTX 295.

FaaR - Monday, January 18, 2010 - link

Win schmin.

The 295 ran extremely hot, was much MUCH more expensive to manufacture, and the performance advantage in games was negligible for the most part. No game is so demanding the 4870 X2 can't run it well.

The geforce 285 is at least twice as expensive as a radeon 4890, its closest competitor, so how you can say Nvidia "won" this round is beyond me.

But I suppose with fanboy glasses on you can see whatever you want to see. ;)

beck2448 - Monday, January 18, 2010 - link

Its amazing to watch ATI fanboys revise history.

The 295 smoked the competition and ran cooler and quieter. Fermi will inflict another beatdown soon enough.

chizow - Monday, January 18, 2010 - link

Funny the 295 ran no hotter (and often cooler) with a lower TDP than the 4870X2 from virtually every review that tested temps and was faster as well. Also the GTX 285 didn't compete with the 4890, the 275 did in both price and performance.

Its obvious Nvidia won the round as these points are historical facts based on mounds of evidence, I suppose with fanboy glasses on you can see whatever you want to see. ;)

Paladin1211 - Monday, January 18, 2010 - link

Hey kid, sometimes less is more. You dont need to post that much just to say "nVidia wins, and will win again". This round AMD has won with 2mil cards drying up the graphics market. You cant change this, neither could nVidia.

Just come out and buy a Fermi, which is 15-20% faster than a HD 5870, for $500-$600. You only have to wait 3 months, and save some bucks until then. I have a HD 5850 here and I'm waiting for Tegra 2 based smartphone, not Fermi.

Calin - Tuesday, January 19, 2010 - link

Both Tegra 2 and Fermi are extraordinary products - if what NVidia says about them is true. Unfortunately, it doesn't seem like any of them is a perfect fit for the gaming desktop.

Calin - Monday, January 18, 2010 - link

You don't win a generation with a very-high-end card - you win a generation with a mainstream card (as this is where most of the profits are). Also, low-end cards are very high-volume, but the profit from each unit is very small.

You might win the bragging rights with the $600, top-of-the-line, two-in-one cards, but they don't really have a market share.

chizow - Monday, January 18, 2010 - link

But that's not how Nvidia's business model works for the very reasons you stated. They know their low-end cards are very high-volume and low margin/profit and will sell regardless.

They also know people buying in these price brackets don't know about or don't care about features like DX11 and as the 5670 review showed, such features are most likely a waste on such low-end parts to begin with (a 9800GT beats it pretty much across the board).

The GPU market is broken up into 3 parts, High-end, performance and mainstream. GF100 will cover High-end and the top tier in performance with GT200 filling in the rest to compete with the lower-end 5850. Eventually the technology introduced in GF100 will diffuse down to lower-end parts in that mainstream segment, but until then, Nvidia will deliver the cutting edge tech to those who are most interested in it and willing to pay the premium for it. High-end and performance minded individuals.