3D Vision Surround: NVIDIA’s Eyefinity

During our meeting with NVIDIA, they were also showing off 3D Vision Surround, which was announced at their press conference at the start of CES. 3D Vision Surround is not inherently a GF100 technology, but since it’s being timed for release alongside GF100 cards, we’re going to take a moment to discuss it.

If you’ve seen Matrox’s TripleHead2Go or AMD’s Eyefinity in action, then you know what 3D Vision Surround is. It’s NVIDIA’s implementation of the single large surface concept so that games (and anything else for that matter) can span multiple monitors. With it, gamers can get a more immersive view by being able to surround themselves with monitors so that the game world is projected from more than just a single point in front of them.

NVIDIA tells us that they’ve been sitting on this technology for quite some time but never saw a market for it. With the release of TripleHead2Go and Eyefinity it became apparent to them that this was no longer the case, and they unboxed the technology. Whether this is true or a sudden reaction to Eyefinity is immaterial at the moment, as it’s coming regardless.

This triple-display technology will have two names. When it’s used on its own, NVIDIA is calling it NVIDIA Surround. When it’s used in conjunction with 3D Vision, it’s called 3D Vision Surround. Obviously NVIDIA would like you to use it with 3D Vision to get the full effect (and to require a more powerful GPU), but 3D Vision is by no means required to use it. It is, however, the key differentiator from AMD, at least until AMD’s own 3D efforts get off the ground.

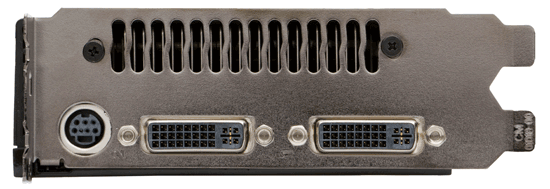

Regardless of the degree to which this is a sudden reaction to Eyefinity, ultimately this is something that was added late in the design process. Unlike AMD, which designed the Evergreen family around multi-display support from the start, NVIDIA did not, and as a result they did not give GF100 the ability to drive more than 2 displays at once. The shipping GF100 cards will have the traditional 2-monitor limit, meaning that gamers will need 2 GF100 cards in SLI to drive 3+ monitors, with the second card providing the 3rd and 4th display outputs. We expect that the next NVIDIA design will include the ability to drive 3+ monitors from a single GPU, as for the moment this limitation precludes any ability to do Surround on the cheap.

GTX 280 with 2 display outputs: GF100 won't be any different
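The output arithmetic described above can be sketched in a few lines. This is only an illustration under stated assumptions: the 2-outputs-per-card limit comes from the article, while the function names and the 1920x1080 panel size are hypothetical and do not reflect any NVIDIA API or driver behavior.

```python
import math

# From the article: each GF100 (and GT200) card can drive at most 2 displays.
OUTPUTS_PER_CARD = 2


def cards_needed(displays: int, outputs_per_card: int = OUTPUTS_PER_CARD) -> int:
    """Return how many cards are required to drive `displays` monitors."""
    if displays < 1:
        raise ValueError("at least one display is required")
    return math.ceil(displays / outputs_per_card)


def surface_resolution(displays: int, width: int, height: int) -> tuple[int, int]:
    """Return the size of a single large surface spanning identical
    monitors arranged side by side (hypothetical helper)."""
    return (displays * width, height)


if __name__ == "__main__":
    # Three monitors exceed a single card's 2 outputs, so a second card is needed.
    print(cards_needed(3))
    # Hypothetical 1920x1080 panels, three across.
    print(surface_resolution(3, 1920, 1080))
```

With 3 or 4 monitors the sketch asks for 2 cards, which matches the SLI requirement described in the article.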

As for some good news, as we stated earlier this is not a technology inherent to the GF100. NVIDIA can do it entirely in software and as a result will be backporting this technology to the GT200 (GTX 200 series). The drivers that get released for the GF100 will allow GTX 200 cards to do Surround in the same manner: with 2 cards, you can run a single large surface across 3+ displays. We’ve seen this in action and it works, as NVIDIA was demoing a pair of GTX 285s running in NVIDIA Surround mode in their CES booth.

The big question of course is going to be what this does for performance on both the GF100 and GT200, along with compatibility. That’s something that we’re going to have to wait on the actual hardware for.

115 Comments

marc1000 - Tuesday, January 19, 2010 - link

hey, Banshee was fine! I had one because by that time the 3dfx api was better than DirectX. But suddenly everything became DX compatible and that was one thing 3dfx GPUs could not do... then I replaced that Banshee with a Radeon 9200, later a Radeon X300 (or something), then Radeon 3850, and now Radeon 5770. I'm always in for the mainstream, not the top of the line, and Nvidia is not paying enough attention to mainstream since Geforce FX series...

Zool - Monday, January 18, 2010 - link

The question is when they will come with mid range variants. The GF100 seems to be 448SP variant and the 512SP card will be only after A4 revision or who knows.

http://www.semiconductor.net/article/438968-Nvidia...

The interesting part on the article is the graph which shows the exponential increase in leakage power after 40nm and less. (which of course hurts more if u have a big chip and different clocks to maintain)

They will have even more problems now that dx11 cards will be only gt300 architecture so no rebrand choices for mid range and lower.

For consumer gf100 will be great if they can buy it somewhere in the future, but nvidia will bleed more on it than the GT200.

QChronoD - Monday, January 18, 2010 - link

Maybe I'm missing something, but it seems like PC gaming has lost most of its value in the last few years. I know that you can run games at higher resolutions and probably faster framerates than you can on consoles, but it will end up costing more than all 3 consoles combined to do so. It just seems to have gotten too expensive for the marginal performance advantage.

That being said, I bet that one of these would really crank through Collatz or GPUGRID.

GourdFreeMan - Monday, January 18, 2010 - link

I certainly share that sentiment. The last major graphical showcase we had was Crysis in 2007. There have been nice looking PC exclusive titles (Crysis Warhead, Arma 2, the Stalker franchise) since then, but no significant new IP with new rendering engines to take advantage of new technology.

If software publishers want our money, they are going to have to do better. Without significant GPGPU applications for the mainstream consumer, GPU manufacturers will eventually suffer as well.

dukeariochofchaos - Monday, January 18, 2010 - link

no, i think you're totally correct, from a certain point of view.

i had the thought that the DX9 support is probably more than enough for console games, and why would developers pump money into DX11 support for a product that generates most of its profits on consoles?

obviously, there is some money to be made in the pc game sphere, but is it really enough to drive game developers to sink money into extra quality just for us?

At least NV has made a product that can be marketed now, and into the future, for design/enterprise solutions. That should help them extract more of the value out of their r&d if there are very few DX11 games for the lifespan of fermi.

Calin - Monday, January 18, 2010 - link

If Fermi is working well, NVidia is in a great place for the development of their next GPU - they'll only need to update some things here and there, based mostly on where the card's performance is lacking (improve this, improve that, reduce this, reduce that). Also, they are in a very good place for making lower-end cards based on Fermi (cut everything in two or four, no need to redesign the previously fixed function blocks).

As for AMD... their current design is in the works and probably too advanced for big changes, so their real Fermi-killer won't come faster than a year or so (that is, if Fermi proves to be so great a success as NVidia wants it to be).

toyota - Monday, January 18, 2010 - link

what I have saved on games this year has more than paid for the difference between the price of a console and my pc.

Stas - Tuesday, January 19, 2010 - link

that ^^^^^^^

besides, with Steam/D2D/Impulse there is new breath in PC gaming. constant sales on great games, automatic updates, active support, forums full of people, all integrated with virtual community (profiles, chats, etc.). a place to release demos, trailers, etc. I was worried about PC gaming 2-3 years ago, but I'm absolutely confident that it's coming back better than ever.

deeceefar2 - Monday, January 18, 2010 - link

Are the screen shots from left 4 dead 2 missing at the end of page 5?

[quote]

As a consequence of this change, TMAA’s tendency to have fake geometry on billboards pop in and out of existence is also solved. Here we have a set of screenshots from Left 4 Dead 2 showcasing this in action. The GF100 with TMAA generates softer edges on the vertical bars in this picture, which is what stops the popping from the GT200.

[/quote]

Ryan Smith - Monday, January 18, 2010 - link

Whoops. Fixed.