Why NVIDIA Is Focused On Geometry

Up until now we haven’t talked a great deal about the performance of GF100, and to some extent we still can’t. We don’t know the final clock speeds of the shipping cards, so we don’t know exactly what the card will be like. But what we can talk about is why NVIDIA made the decisions they did: why they went for the parallel PolyMorph and Raster Engines.

The DX11 specification doesn’t leave NVIDIA with a ton of room to add new features. Without capability bits (the “caps bits” of earlier DirectX versions), NVIDIA can’t put new features on their hardware and easily expose them, nor would they want to, at the risk of having those features (and hence die space) go unused. DX11 rigidly says what features a compliant video card should offer, and leaves you very little room to deviate.

So NVIDIA has taken a bit of a gamble. There’s no single wonder-feature in the hardware that immediately makes it stand out from AMD’s hardware – NVIDIA has post-rendering features such as 3D Vision or compute features such as PhysX, but when it comes to rendering they can only do what AMD does.

But the DX11 specification doesn’t say how quickly you have to do it.

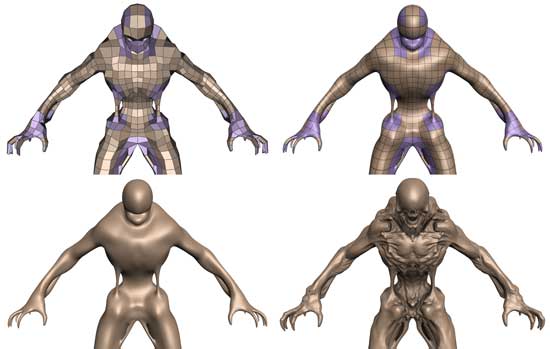

Tessellation in action

To differentiate themselves from AMD, NVIDIA is taking the tessellator and driving it for all it’s worth. While AMD merely has a tessellator, NVIDIA is counting on the tessellator in their PolyMorph Engine to give them a noticeable graphical advantage over AMD.

To put things in perspective, between NV30 (GeForce FX 5800) and GT200 (GeForce GTX 280), the geometry performance of NVIDIA’s hardware only increased roughly 3x. Meanwhile the shader performance of their cards increased by over 150x. GF100 in turn has 8x the geometry performance of GT200, and NVIDIA tells us this is something they have measured in their labs.
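To make the imbalance concrete, here is a quick back-of-the-envelope calculation using only the figures quoted above. It assumes the "roughly 3x" and "over 150x" figures can be treated as exact multipliers and that the generational gains compound multiplicatively; both are our simplifications, not numbers NVIDIA has given us.

```python
# Figures quoted in the article, treated as exact multipliers (an assumption).
geometry_gain_nv30_to_gt200 = 3.0    # "roughly 3x"
shader_gain_nv30_to_gt200 = 150.0    # "over 150x", so this is a lower bound
geometry_gain_gt200_to_gf100 = 8.0   # NVIDIA's lab-measured claim

# How far shader throughput pulled ahead of geometry throughput by GT200.
shader_vs_geometry_gap = shader_gain_nv30_to_gt200 / geometry_gain_nv30_to_gt200

# Cumulative geometry gain from NV30 to GF100, assuming the gains compound.
cumulative_geometry_gain = geometry_gain_nv30_to_gt200 * geometry_gain_gt200_to_gf100

print(f"Shader growth outpaced geometry growth by about {shader_vs_geometry_gap:.0f}x")
print(f"NV30 to GF100 geometry gain: about {cumulative_geometry_gain:.0f}x")
```

In other words, by these numbers GF100 makes a larger geometry jump in one generation than NVIDIA's hardware managed across all of the generations from NV30 to GT200 combined.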

So why does NVIDIA want so much geometry performance? Because tessellation allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance. And this is why NVIDIA has 16 PolyMorph Engines and 4 Raster Engines: they need a lot of hardware to generate and process that much geometry.
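For readers who haven't seen tessellation and displacement mapping spelled out, the following is a minimal CPU-side sketch of the idea in Python. It is not how GF100 or the DX11 pipeline implements it: the function names, the uniform subdivision scheme, and the `bumpy` height function (a stand-in for a displacement-map texture lookup) are all hypothetical and chosen purely for illustration.

```python
import math

def tessellate_triangle(v0, v1, v2, level):
    """Uniformly split one triangle into level**2 smaller triangles."""
    verts, index = [], {}
    for i in range(level + 1):
        for j in range(level + 1 - i):
            k = level - i - j
            # Barycentric weights for the three original corners.
            a, b, c = i / level, j / level, k / level
            index[(i, j)] = len(verts)
            verts.append(tuple(a * p + b * q + c * r
                               for p, q, r in zip(v0, v1, v2)))
    tris = []
    for i in range(level):
        for j in range(level - i):
            # "Upright" sub-triangle.
            tris.append((index[(i, j)], index[(i + 1, j)], index[(i, j + 1)]))
            # "Inverted" sub-triangle; none exists at the end of each row.
            if j < level - i - 1:
                tris.append((index[(i + 1, j)], index[(i + 1, j + 1)],
                             index[(i, j + 1)]))
    return verts, tris

def displace(verts, normal, height_fn):
    """Push each vertex along the surface normal by a sampled height."""
    return [tuple(p + height_fn(v) * n for p, n in zip(v, normal))
            for v in verts]

def bumpy(v):
    # Hypothetical stand-in for a displacement-map texture lookup.
    return 0.1 * math.sin(4.0 * v[0]) * math.cos(4.0 * v[1])

# One coarse triangle, as it might ship in the game's base mesh.
coarse = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
verts, tris = tessellate_triangle(*coarse, level=4)
detailed = displace(verts, (0.0, 0.0, 1.0), bumpy)
print(len(verts), "vertices,", len(tris), "triangles")
```

The point of the sketch is the scaling: the triangle count grows with the square of the tessellation level, so the same coarse asset can be pushed to whatever density the hardware's geometry throughput can sustain.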

NVIDIA believes their strategy will work, and if geometry performance is as good as they say it is, then we can see why they feel this way. Game art is usually created at far higher levels of detail than what eventually ends up being shipped, and with tessellation there’s no reason why a tessellated and displacement mapped representation of that high quality art can’t come with the game. Developers can use tessellation to scale down to whatever the hardware can do, and in NVIDIA’s world they won’t have to scale it down very far to meet up with the GF100.

At this point tessellation is a message that’s much more for developers than it is for consumers. As DX11 is required to take advantage of tessellation, only a few games use it today, though plenty more are on the way. NVIDIA needs to convince developers to ship their art with detailed enough displacement maps to match GF100’s capabilities, and while that isn’t too hard, it’s also not a walk in the park. To that end they’re also extolling the other virtues of tessellation, such as the ability to do higher quality animations by only needing to animate the control points of a model, and letting tessellation take care of the rest. A lot of the success of the GF100 architecture is going to ride on how developers respond to this, so it’s going to be something worth keeping an eye on.

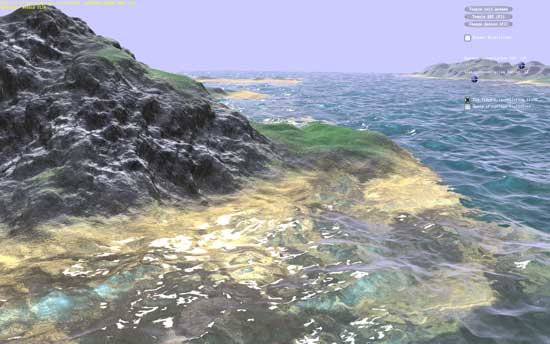

NVIDIA's water tessellation demo

NVIDIA's hair tessellation demo

115 Comments


marc1000 - Tuesday, January 19, 2010 - link

hey, Banshee was fine! I had one because by that time the 3dfx api was better than DirectX. But suddenly everything became DX compatible and that was one thing 3dfx GPUs could not do... then I replaced that Banshee with a Radeon 9200, later a Radeon X300 (or something), then Radeon 3850, and now Radeon 5770. I'm always in for the mainstream, not the top of the line, and Nvidia is not paying enough attention to mainstream since Geforce FX series...

Zool - Monday, January 18, 2010 - link

The question is when they will come with mid range variants. The GF100 seems to be 448SP variant and the 512SP card will be only after A4 revision or who knows.

http://www.semiconductor.net/article/438968-Nvidia...

The interesting part of the article is the graph which shows the exponential increase in leakage power after 40nm and less. (which of course hurts more if u have a big chip and different clocks to maintain)

They will have even more problems now that dx11 cards will be only gt300 architecture so no rebrand choices for mid range and lower.

For consumer gf100 will be great if they can buy it somewhere in the future, but nvidia will bleed more on it than the GT200.

QChronoD - Monday, January 18, 2010 - link

Maybe I'm missing something, but it seems like PC gaming has lost most of its value in the last few years. I know that you can run games at higher resolutions and probably faster framerates than you can on consoles, but it will end up costing more than all 3 consoles combined to do so. It just seems to have gotten too expensive for the marginal performance advantage.

That being said, I bet that one of these would really crank through Collatz or GPUGRID.

GourdFreeMan - Monday, January 18, 2010 - link

I certainly share that sentiment. The last major graphical showcase we had was Crysis in 2007. There have been nice looking PC exclusive titles (Crysis Warhead, Arma 2, the Stalker franchise) since then, but no significant new IP with new rendering engines to take advantage of new technology.

If software publishers want our money, they are going to have to do better. Without significant GPGPU applications for the mainstream consumer, GPU manufacturers will eventually suffer as well.

dukeariochofchaos - Monday, January 18, 2010 - link

no, i think you're totally correct, from a certain point of view.

i had the thought that the DX9 support is probably more than enough for console games, and why would developers pump money into DX11 support for a product that generates most of its profits on consoles?

obviously, there is some money to be made in the pc game sphere, but is it really enough to drive game developers to sink money into extra quality just for us?

At least NV has made a product that can be marketed now, and into the future, for design/enterprise solutions. That should help them extract more of the value out of their r&d if there are very few DX11 games for the lifespan of fermi.

Calin - Monday, January 18, 2010 - link

If Fermi is working good, NVidia is in a great place for the development of their next GPU - they'll only need to update some things here and there, based mostly on where the card's performance lack (improve this, improve that, reduce this, reduce that). Also, they are in a very good place for making lower-end cards based on Fermi (cut everything in two or four, no need to redesign the previously fixed function blocks).

As for AMD... their current design is in the works and probably too advanced for big changes, so their real Fermi-killer won't come faster than a year or so (that is, if Fermi proves to be so great a success as NVidia wants it to be).

toyota - Monday, January 18, 2010 - link

what I have saved on games this year has more than paid for the difference between the price of a console and my pc.

Stas - Tuesday, January 19, 2010 - link

that ^^^^^^^

besides, with Steam/D2D/Impulse there is new breath in PC gaming. constant sales on great games, automatic updates, active support, forums full of people, all integrated with virtual community (profiles, chats, etc.). a place to release demos, trailers, etc. I was worried about PC gaming 2-3 years ago, but I'm absolutely confident that it's coming back better than ever.

deeceefar2 - Monday, January 18, 2010 - link

Are the screen shots from left 4 dead 2 missing at the end of page 5?

[quote]

As a consequence of this change, TMAA’s tendency to have fake geometry on billboards pop in and out of existence is also solved. Here we have a set of screenshots from Left 4 Dead 2 showcasing this in action. The GF100 with TMAA generates softer edges on the vertical bars in this picture, which is what stops the popping from the GT200.

[/quote]

Ryan Smith - Monday, January 18, 2010 - link

Whoops. Fixed.