Why NVIDIA Is Focused On Geometry

Up until now we haven’t talked a great deal about the performance of GF100, and to some extent we still can’t. We don’t know the final clock speeds of the shipping cards, so we don’t know exactly how they will perform. But what we can talk about is why NVIDIA made the decisions they did: why they went for the parallel PolyMorph and Raster Engines.

The DX11 specification doesn’t leave NVIDIA with a ton of room to add new features. Without caps bits, NVIDIA can’t put new features on their hardware and easily expose them, nor would they want to at the risk of having those features (and hence die space) go unused. DX11 rigidly says what features a compliant video card should offer, and leaves you very little room to deviate.

So NVIDIA has taken a bit of a gamble. There’s no single wonder-feature in the hardware that immediately makes it stand out from AMD’s hardware – NVIDIA has post-rendering features such as 3D Vision or compute features such as PhysX, but when it comes to rendering they can only do what AMD does.

But the DX11 specification doesn’t say how quickly you have to do it.

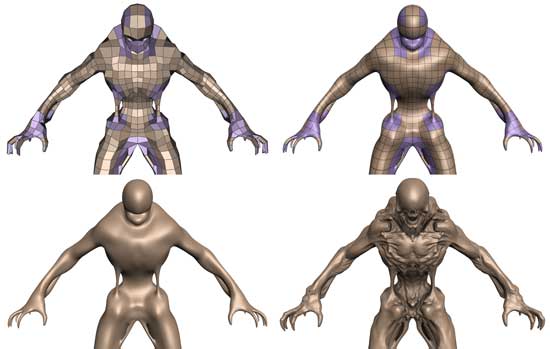

Tessellation in action

To differentiate themselves from AMD, NVIDIA is taking the tessellator and driving it for all it’s worth. While AMD merely has a tessellator, NVIDIA is counting on the tessellator in their PolyMorph Engine to give them a noticeable graphical advantage over AMD.

To put things in perspective, between NV30 (GeForce FX 5800) and GT200 (GeForce GTX 280), the geometry performance of NVIDIA’s hardware only increased roughly 3x. Meanwhile the shader performance of their cards increased by over 150x. GF100 in turn has 8x the geometry performance of GT200, and NVIDIA tells us this is something they have measured in their labs.
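To make the gap concrete, here is a quick back-of-the-envelope sketch using only the figures quoted above. The numbers are rough (the shader figure is “over 150x”, so it is treated as a lower bound), and the function names are ours, purely for illustration.

```python
# Approximate generational gains quoted in the article.
NV30_TO_GT200_GEOMETRY = 3.0    # "roughly 3x"
NV30_TO_GT200_SHADER = 150.0    # "over 150x", so treat this as a lower bound
GT200_TO_GF100_GEOMETRY = 8.0   # NVIDIA's own lab measurement, per NVIDIA


def growth_gap(shader_gain, geometry_gain):
    """How many times faster shader throughput grew than geometry throughput."""
    return shader_gain / geometry_gain


def cumulative_gain(*gains):
    """Multiply successive generational gains into one overall factor."""
    total = 1.0
    for gain in gains:
        total *= gain
    return total


# Shader performance outgrew geometry performance by at least this factor
# between NV30 and GT200.
gap = growth_gap(NV30_TO_GT200_SHADER, NV30_TO_GT200_GEOMETRY)

# Geometry gain from NV30 all the way to GF100, if the 8x claim holds.
nv30_to_gf100_geometry = cumulative_gain(
    NV30_TO_GT200_GEOMETRY, GT200_TO_GF100_GEOMETRY
)

print(f"Shader vs. geometry growth gap (NV30 to GT200): at least {gap:.0f}x")
print(f"Geometry gain, NV30 to GF100: roughly {nv30_to_gf100_geometry:.0f}x")
```

In other words, even if the 8x claim holds, geometry throughput since NV30 would have grown by roughly 24x, still well short of what shader throughput had already gained by GT200.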

So why does NVIDIA want so much geometry performance? Because tessellation allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance. And this is why NVIDIA has 16 PolyMorph Engines and 4 Raster Engines: they need a lot of hardware to generate and process that much geometry.

NVIDIA believes their strategy will work, and if geometry performance is as good as they say it is, then we can see why they feel this way. Game art is usually created at far higher levels of detail than what eventually ends up being shipped, and with tessellation there’s no reason why a tessellated and displacement mapped representation of that high quality art can’t come with the game. Developers can use tessellation to scale down to whatever the hardware can do, and in NVIDIA’s world they won’t have to scale it down very far to meet up with the GF100.
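As a rough illustration of what “scaling down to whatever the hardware can do” might look like, the sketch below picks the largest tessellation factor that fits a per-frame triangle budget. It rests on two assumptions that are ours, not NVIDIA’s: a simplified model in which a triangle patch subdivided into `factor` segments per edge yields `factor * factor` triangles (real DX11 output depends on separate edge and inside factors and on the partitioning mode), and hypothetical patch counts and budgets. The cap of 64 is the maximum tessellation factor DX11 allows.

```python
import math

MAX_TESS_FACTOR = 64  # upper limit on tessellation factors in DX11


def triangles_per_patch(factor):
    """Simplified model: uniformly subdividing a triangle into `factor`
    segments per edge produces factor**2 smaller triangles."""
    return factor * factor


def pick_tess_factor(patch_count, triangle_budget):
    """Largest integer factor whose output fits the triangle budget.

    `patch_count` and `triangle_budget` are hypothetical inputs; a real
    engine would also vary the factor per patch by distance and screen size.
    """
    if patch_count <= 0:
        raise ValueError("patch_count must be positive")
    per_patch = triangle_budget // patch_count
    factor = math.isqrt(max(per_patch, 1))
    return max(1, min(MAX_TESS_FACTOR, factor))


# Same assets (10,000 patches), two hypothetical hardware budgets.
modest_gpu = pick_tess_factor(10_000, 1_000_000)
strong_gpu = pick_tess_factor(10_000, 8_000_000)
print(modest_gpu, triangles_per_patch(modest_gpu))
print(strong_gpu, triangles_per_patch(strong_gpu))
```

The point of the sketch is that the art is identical in both cases; only the factor changes, which is why more geometry throughput translates directly into more visible detail.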

At this point tessellation is a message that’s much more for developers than it is for consumers. As DX11 is required to take advantage of tessellation, only a few games exist, though plenty more are on the way. NVIDIA needs to convince developers to ship their art with detailed enough displacement maps to match GF100’s capabilities, and while that isn’t too hard, it’s also not a walk in the park. To that end they’re also extolling the other virtues of tessellation, such as the ability to do higher quality animations by only needing to animate the control points of a model, and letting tessellation take care of the rest. A lot of the success of the GF100 architecture is going to ride on how developers respond to this, so it’s going to be something worth keeping an eye on.
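The control point idea can be sketched in miniature with a curve instead of a surface. The example below is a 2D analogue under our own assumptions, not how any particular game or the DX11 pipeline implements it: four control points define a cubic Bézier curve, the animation moves only those four points, and a `tessellate` helper (a hypothetical name) regenerates all the in-between vertices.

```python
def de_casteljau(control_points, t):
    """Evaluate a Bezier curve at parameter t by repeated linear interpolation."""
    points = list(control_points)
    while len(points) > 1:
        points = [
            ((1 - t) * x0 + t * x1, (1 - t) * y0 + t * y1)
            for (x0, y0), (x1, y1) in zip(points, points[1:])
        ]
    return points[0]


def tessellate(control_points, segments):
    """Generate segments + 1 vertices along the curve from a few control points."""
    return [de_casteljau(control_points, i / segments) for i in range(segments + 1)]


# Four control points are all the animator touches.
rest_pose = [(0.0, 0.0), (1.0, 2.0), (3.0, 2.0), (4.0, 0.0)]

# "Animate" by lifting only the two interior control points by one unit.
animated_pose = [
    (x, y + 1.0) if i in (1, 2) else (x, y)
    for i, (x, y) in enumerate(rest_pose)
]

# Tessellation fills in the other vertices for each pose.
rest_curve = tessellate(rest_pose, 16)
animated_curve = tessellate(animated_pose, 16)

print(len(rest_curve), "vertices generated from", len(rest_pose), "control points")
print("midpoint moved from", rest_curve[8], "to", animated_curve[8])
```

Seventeen vertices follow from four animated points here; on a real model the ratio is far larger, which is the appeal NVIDIA is pitching to developers.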

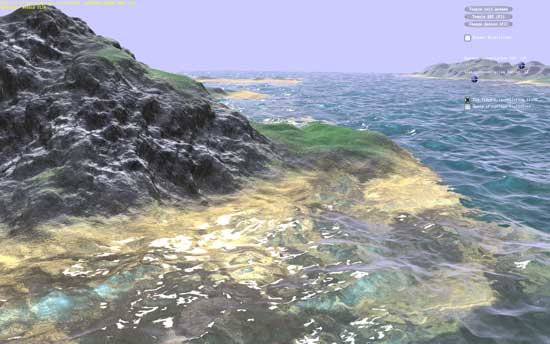

NVIDIA's water tessellation demo

NVIDIA's hair tessellation demo

Zool - Tuesday, January 19, 2010 - link

There are still plenty of questions, like how tessellation affects MSAA with increased geometry per pixel. Also, the flat stairs in Unigine (very plastic and realistic after tessellation and displacement mapping): would they work with collision detection as they are after tessellation, or as they are before, completely flat and somewhere else in the 3D space? The same goes for some PhysX effects. Unigine Heaven is more of a showcase of tessellation and what can be done than a real game engine.

marraco - Monday, January 18, 2010 - link

Far Cry Ranch Small, like all the integrated benchmarks, constantly reads the hard disk, so it is dependent on HD speed. It's not unfair, since FC2 updates textures from the hard disk all the time, making the game freeze constantly, even on the better computers.

I wish to see that benchmark run with and without SSD.

Zool - Monday, January 18, 2010 - link

I also want to note that with the stream of FPS/3rd person shooter/RTS/racing games that all look the same, sometimes upgrading the graphics card doesn't make much sense these days. Can anyone make a game that will use PC hardware and won't end in running and shooting at each other from first or third person? Dragon Age was a quite weak, overhyped RPG.

Suntan - Monday, January 18, 2010 - link

Agreed. That is one of the main reasons I've lost interest in PC gaming. Ironically though, my favorite console games on the PS3 have been the two Uncharted games...

-Suntan

mark0409mr01 - Monday, January 18, 2010 - link

Does anybody know if Fermi, GF100 or whatever it's going to be called has support for bitstreaming of HD audio codecs? Also, do we know anything else about the video capabilities of the new card? There doesn't really seem to have been much mentioned about this.

Thanks

Slaimus - Monday, January 18, 2010 - link

Seeing how the GF100 chip has no display components at all on-chip (RAMDAC, TMDS, Displayport, PureVideo), they will probably be using a NVIO chip like the GT200. Would it not be possible to just put multiple NVIO chips to scale with the number of display outputs?

Ryan Smith - Wednesday, January 20, 2010 - link

If it's possible, NVIDIA is not doing it. I asked them about the limit on display outputs, and their response (which is what brought about the comments in the article) was that GF100 cards were already too late in the design process after they greenlit Surround to add more display outputs. I don't have more details than that, but the implication is that they need to bake support for more displays into the GPU itself.

Headfoot - Monday, January 18, 2010 - link

Best comment for the entire page, I am wondering the same thing.

Suntan - Monday, January 18, 2010 - link

Looking at the image of the chip on the first page, it looks like a miniature of a vast city complex. Man, when are they going to remake “TRON”……although, at the speeds that chips are running now-a-days, the whole movie would be over in a ¼ of a second…

-Suntan

arnavvdesai - Monday, January 18, 2010 - link

In your conclusion you mentioned that the only thing which would matter would be price/performance. However, from the article I wasn't really able to make out a couple of things. When NVIDIA says they can make something look better than the competition, how would you quantify that? I am a gamer & I love beautiful graphics. It's one of the reasons I still sometimes buy games for PCs instead of consoles. I have a 5870 & a 1080p 24" monitor. I would however consider buying this card if it made my games look better. After a certain number (60fps) I really only care about beautiful graphics. I want no grass to look like paper or jaggies to show on distant objects. Also, will game makers take advantage of this? Unlike previous generations, game manufacturers are very deeply tied to the current console market. They have to make sure the game performs admirably on current day consoles, which are at least 3-5 years behind their PC counterparts, so what incentive do they have to try and advance graphics on the PC when there aren't enough people buying them? I am looking at current games and frankly, just playing them, other than an obvious improvement in framerate, I cannot notice any visual improvements.

Coming back to my question on architecture: will this tech being built by NVIDIA help improve the visual quality of games with no additional work, or at least less additional work, from the game studios?