OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST - Posted in Storage

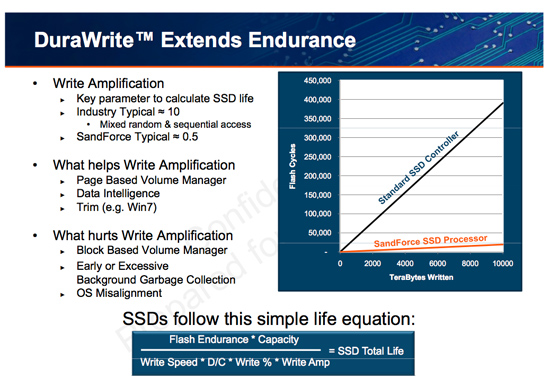

The Secret Sauce: 0.5x Write Amplification

The downfall of all NAND-flash-based SSDs is the dreaded read-modify-write scenario. I’ve explained this a few times before. Basically, your controller goes to write some amount of data, but because of all the reorganization that needs to be done, it ends up writing a lot more data. The ratio of how much data actually gets written to the flash to how much you wanted to write is the write amplification. Ideally this should be 1: you want to write 1GB and you actually write 1GB. In practice it can be as high as 10 or 20x on a really bad SSD. Intel claims that the X25-M’s dynamic nature keeps write amplification down to a manageable 1.1x. SandForce says its controllers write a little less than half of what Intel’s do.
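To make the ratio concrete, here is a toy model in Python. The page size, erase-block size and the fraction of writes that hit the worst-case read-modify-write path are all hypothetical values picked for illustration, not figures from Intel or SandForce, and real controllers merge data far more cleverly than rewriting a whole block. The point is only that a small share of expensive writes is enough to drag the average into the 10x+ range mentioned above.

```python
# Toy model of write amplification; all sizes here are hypothetical.
PAGE_KB = 4            # assumed NAND page size (smallest writable unit)
PAGES_PER_BLOCK = 128  # assumed erase block: 128 x 4KB = 512KB


def write_amplification(nand_kb: float, host_kb: float) -> float:
    """Data physically written to NAND divided by data the host asked to write."""
    return nand_kb / host_kb


def nand_kb_for_page_write(needs_rmw: bool) -> int:
    """NAND traffic caused by a single 4KB host write.

    With a free, pre-erased page available, only that page is programmed.
    In the worst case the target block is full of valid data, so the
    controller reads it, merges in the new page, erases the block and
    programs every page again (read-modify-write).
    """
    return PAGES_PER_BLOCK * PAGE_KB if needs_rmw else PAGE_KB


def average_wa(rmw_fraction: float) -> float:
    """Expected write amplification when `rmw_fraction` of 4KB host writes
    take the worst-case read-modify-write path."""
    nand_kb = ((1 - rmw_fraction) * nand_kb_for_page_write(False)
               + rmw_fraction * nand_kb_for_page_write(True))
    return write_amplification(nand_kb, PAGE_KB)


print(average_wa(0.0))            # 1.0: every write finds a free page
print(round(average_wa(0.1), 1))  # 13.7: one write in ten triggers a full block rewrite
```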

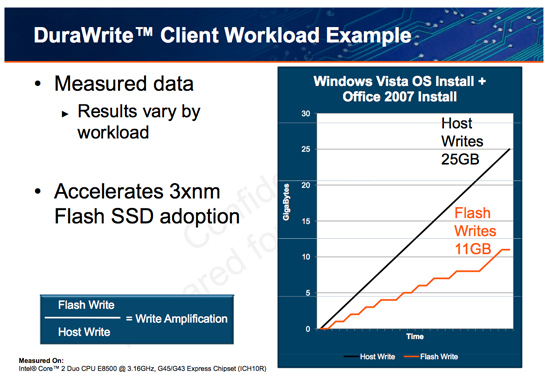

SandForce states that a full install of Windows 7 + Office 2007 results in 25GB of writes from the host, yet only 11GB of writes are actually passed on to the flash. In other words, 25GB of files are written and available on the SSD, but only 11GB of flash is actually occupied. Clearly it’s not bit-for-bit data storage.
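Plugging SandForce’s install numbers into the write amplification ratio gives the effective figure directly. Both inputs are SandForce’s own claims, and setting them next to Intel’s 1.1x assumes the two companies measured the same way, which neither has stated; treat this as back-of-the-envelope arithmetic only.

```python
HOST_GB = 25.0  # Windows 7 + Office 2007 install as written by the host (SandForce's figure)
NAND_GB = 11.0  # what SandForce says actually lands in flash

sandforce_wa = NAND_GB / HOST_GB
intel_nand_gb = HOST_GB * 1.1  # the same install at Intel's claimed 1.1x for the X25-M

print(f"SandForce effective write amplification: {sandforce_wa:.2f}x")  # 0.44x
print(f"X25-M at 1.1x would write about {intel_nand_gb:.1f}GB")         # 27.5GB
print(f"SandForce writes vs. Intel writes: {NAND_GB / intel_nand_gb:.2f}")  # 0.40
```

The 0.44x result is where the "roughly 0.5x" headline comes from, and 0.40 is the "a little less than half of what Intel writes" comparison.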

What SF appears to be doing is some form of real-time compression on data sent to the drive. SandForce told me that it’s not strictly compression but a combination of several techniques that are chosen on the fly depending on the workload.

SandForce referenced data deduplication as one type of data reduction algorithm that could be used. The principle behind data deduplication is simple: instead of storing every single bit of data that comes through, store only the unique data, and replace any additional duplicates with references to the copy already stored. Now, presumably your hard drive isn’t full of copies of the same file, so deduplication isn’t exactly what SandForce is doing - but it gives us a hint.
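A minimal sketch of block-level deduplication, assuming fixed 4KB chunks fingerprinted with SHA-256. The `DedupStore` class and its fields are hypothetical and only illustrate the principle; SandForce has not said it works this way. Overwritten chunks are never freed here, which a real implementation would have to handle with reference counting or garbage collection.

```python
import hashlib


class DedupStore:
    """Toy content-addressed block store: each unique chunk is stored once,
    and logical block addresses (LBAs) just point at that chunk's hash."""

    def __init__(self) -> None:
        self.chunks: dict[str, bytes] = {}  # hash -> unique chunk (the "flash")
        self.lba_map: dict[int, str] = {}   # LBA -> hash (the reference)
        self.physical_bytes_written = 0

    def write(self, lba: int, data: bytes) -> None:
        digest = hashlib.sha256(data).hexdigest()
        if digest not in self.chunks:       # only unique data costs a flash write
            self.chunks[digest] = data
            self.physical_bytes_written += len(data)
        self.lba_map[lba] = digest          # duplicates become mere references

    def read(self, lba: int) -> bytes:
        return self.chunks[self.lba_map[lba]]


store = DedupStore()
block = b"A" * 4096
for lba in range(10):          # ten logical copies of the same 4KB block
    store.write(lba, block)
store.write(10, b"B" * 4096)   # one unique block

print(store.physical_bytes_written)  # 8192: 11 logical blocks, 2 physical
```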

Straight-up data compression is another possibility. The idea behind lossless compression is to use fewer bits to represent a larger set of bits. Additional processing is required to recover the original data, but with a fast enough processor (or dedicated logic) that overhead can be negligible.
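As an illustration of lossless compression, the sketch below uses Python’s standard `zlib` module (DEFLATE) as a stand-in; SandForce’s actual algorithm is unknown, so this shows only the general principle: the compressed form is smaller, and decompression returns every original bit.

```python
import zlib

# Highly repetitive data, the easy case for any lossless compressor.
original = b"The quick brown fox jumps over the lazy dog. " * 1000

compressed = zlib.compress(original, 6)  # DEFLATE at a middle-of-the-road level
restored = zlib.decompress(compressed)

assert restored == original              # lossless: every bit comes back
print(len(original), len(compressed))    # 45000 bytes in, a small fraction of that out
print(f"ratio: {len(compressed) / len(original):.3f}")
```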

Assuming this is how SandForce works, it means there’s a ton of complexity in the controller and firmware, much more than even a good SSD controller normally has to deal with. Not only does SandForce have to manage bad blocks, block cleaning/recycling, LBA mapping and wear leveling, but it also needs to manage this tricky write optimization algorithm. It’s not a trivial matter: SandForce must ensure that the data remains intact while tossing away nearly half of it. After all, the primary goal of storage is to store data.

The whole write-less philosophy has tremendous implications for SSD performance. The less you write, the less you have to worry about garbage collection/cleaning and the less you have to worry about write amplification. This is how the SF controllers get by without any external DRAM: there’s just no need. There are fairly large buffers on chip, though, most likely on the order of a couple of MBs (more on this later).

Manufacturers are rarely honest enough to tell you the downsides of their technologies. Representing a collection of bits with fewer bits works well if you have highly compressible data or a ton of duplicates. Data that is already well compressed, however, shouldn’t work so nicely with the DuraWrite engine. That means compressed images, videos or file archives will most likely exhibit higher write amplification than SandForce’s claimed 0.5x. Presumably that’s not the majority of writes your SSD will see on a day-to-day basis, but it’s going to be some portion of it.
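The difference between compressible and already-compressed data is easy to see with `zlib` standing in for DuraWrite (an assumption; the real engine is not public). Random bytes are used as a proxy for JPEGs, MP3s, videos and ZIP archives, since well-compressed files are statistically close to random, though not identical to it.

```python
import os
import zlib


def reduction_ratio(data: bytes) -> float:
    """Compressed size / original size, with zlib as a stand-in for DuraWrite.
    Below 1.0 means fewer bytes would hit the flash; above 1.0 means more."""
    return len(zlib.compress(data, 9)) / len(data)


# Repetitive, text-like data: the kind of writes an OS install produces a lot of.
text_like = b"2009-12-31 00:00:00 INFO service started ok\n" * 2000
# Random bytes as a proxy for media files and archives that are already compressed.
media_like = os.urandom(1 << 20)

print(f"text-like:  {reduction_ratio(text_like):.3f}")   # far below 1.0
print(f"media-like: {reduction_ratio(media_like):.3f}")  # about 1.0, typically a hair above
```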

100 Comments


Holly - Friday, January 1, 2010

Hmm, I thought MLC/SLC was more a matter of the SSD controller than of the memory chip itself? Could anybody shed a bit of light on this, please?

bji - Friday, January 1, 2010

MLC and SLC are two different types of flash chips. You can find out more at:

http://en.wikipedia.org/wiki/MLC_flash

http://en.wikipedia.org/wiki/Single-level_cell

Holly - Friday, January 1, 2010

Well, according to

http://www.anandtech.com/storage/showdoc.aspx?i=34...

they are not necessarily the same chips... but the transistors are very much the same, and it's more or less a matter of how you interpret the voltages.

quote:

Intel actually uses the same transistors for its SLC and MLC flash, the difference is how you read/write the two.

shawkie - Friday, January 1, 2010

Call me cynical, but I'd be very suspicious of benchmark results from this controller. How can you be sure that the write amplification during the benchmark resembles that during real-world use? If you write completely random data to the disk, then surely it's impossible to achieve a write amplification of less than 1.0? I would have thought that home users would mostly be storing compressed images, audio and video, which must be pretty close to random. I'd also be interested to know whether the deduplication/compression is helping them increase the effective reserved space. That would go a long way toward masking read-modify-write latency issues, but again, what happens if the data on the disk can't be deduplicated/compressed?

Swivelguy2 - Friday, January 1, 2010

On the contrary - if you write random data, some (probably lots) of that data will be duplicated on successive writes simply by random chance. When you write already-compressed data, an algorithm has already looked at that data and processed it in a way that makes sure there's very little duplication of data.

Holly - Friday, January 1, 2010

It's always a matter of the compression algorithm used. There are algorithms that are able to squeeze a whole AVI movie (= already compressed) into a few megabytes. The problem with these algorithms is that they are so demanding it takes days, even for a neural network, to compress and decompress. We had one "very simple" compression algorithm in graph theory classes... honestly, I got completely lost after the first paragraph (out of about 30 pages).

So depending on the algorithms used, you can compress already compressed data. You can take your bitmap, run it through Run Length Encoding, then through Huffman encoding, and finish with some dictionary-based encoding... In most cases you'll compress your data a bit more every time.

There is no way to tell how this new technology handles its task in the end, not until it has been run with petabytes of data.

bji - Friday, January 1, 2010

Please don't use an authoritative tone when you actually don't know much about the subject. You are likely to confuse readers who believe that what you write is factual.

The compression of movies that you were talking about is a lossy compression and would never, ever be suitable in any way for compressing data internally within an SSD.

Run Length Encoding requires knowledge of the internal structure of the data being stored, and an SSD is an agnostic device that knows nothing about the data itself, so that's out.

Huffman encoding (or derivatives thereof) is universally used in pretty much every compression algorithm, so it's pretty much a given that this is a component of whatever compression SandForce is using. Also, dictionary-based encoding is once again only relevant when you are dealing with data of a generally restricted form, not for data which you know nothing about, so it's out; and even if it were used, it would be used before Huffman encoding, not after it as you suggested.

I think your basic point is that many different individual compression technologies can be combined (typically by being applied successively); but that's already very much de rigueur in compression, with every modern compression algorithm I am familiar with already combining several techniques to produce whatever combination of speed and effective compression ratio is desired. And every compression algorithm has certain types of data that it works better on, and certain types of data that it works worse on, than other algorithms.

I am skeptical about SandForce's technology; if it relies on compression then it is likely to perform quite poorly in certain circumstances (as others have pointed out); it reminds me of "web accelerator" snake oil technology that advertised ridiculous speeds out of 56K modems, and which only worked for uncompressed data, and even then, not very well.

Furthermore, this tradeoff of on-board DRAM for extra spare flash seems particularly retarded. Why would you design algorithms that do away with cheap DRAM in favor of expensive flash? You want to use as little flash as possible, because that's the expensive part of an SSD; the DRAM cache is a minuscule part of the total cost of the SSD, so who cares about optimizing that away?

Holly - Friday, January 1, 2010

Well, I know quite a bit about the subject, but if you feel offended in any way, I am sorry. Also, I think we got into a bit of a misunderstanding.

What I wrote was more or less a series of examples where you could go and compress some already compressed data.

It's quite common knowledge that you won't be able to losslessly compress a well-made AVI movie with commonly used lossless compression software like ZIP or RAR. But that is not even the slightest proof that there isn't some kind of algorithm that can compress this data to a much smaller volume.

To illustrate my point, I took the example of an (uncompressed) bitmap and then applied various lossless compression algorithms. In most cases, every time I applied an algorithm I would get more compressed data (well, maybe except RLE, which could end up with a longer result than the original file).
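The aside that RLE can make data longer is easy to demonstrate with a naive encoder. The (count, byte) format below is one simple illustrative variant, not the format of any particular tool or of the SF-1500: long runs of identical pixels shrink dramatically, while data with no repeated neighbours doubles in size.

```python
from itertools import groupby


def rle_encode(data: bytes) -> bytes:
    """Naive run-length encoding: each run becomes a (count, byte) pair,
    with runs longer than 255 split so the count fits in one byte."""
    out = bytearray()
    for value, group in groupby(data):
        run = len(list(group))
        while run > 0:
            chunk = min(run, 255)
            out += bytes((chunk, value))
            run -= chunk
    return bytes(out)


def rle_decode(encoded: bytes) -> bytes:
    """Expand (count, byte) pairs back into the original byte string."""
    out = bytearray()
    for i in range(0, len(encoded), 2):
        count, value = encoded[i], encoded[i + 1]
        out += bytes((value,)) * count
    return bytes(out)


flat_bitmap_row = bytes([255] * 1000)  # e.g. a row of white pixels
noisy_row = bytes(range(256)) * 4      # no two adjacent bytes are equal

print(len(rle_encode(flat_bitmap_row)))  # 8: four (count, byte) pairs
print(len(rle_encode(noisy_row)))        # 2048: twice the original 1024 bytes
```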

I was not attributing any specific "front end" algorithm to this controller, because honestly all talk about how (or if) it compresses the data is mere speculation. So I went back to the basics to keep the idea as simple as possible.

The whole point I was trying to make is that there is no way to tell whether it saves NAND write traffic when you save your file XY on this device, simply because we don't know what kind of algorithm is used. We can only guess by trying to compress the file with common algorithms (be they lossless or not) and then checking whether the controller saves the NAND some work. Of course, the algorithms used on the controller must be lossless and must be stable. But that's about all we can say at this point.

Sorry if I caused some kind of confusion.

What _seems_ to me is that there is basically this difference between the X25-M and Vertex 2 Pro logic (taking the 25 vs 11 GB example used in the article):

System -> 25GB -> X25-M controller -> writes/overwrites -> 25 GB -> NAND flash (but due to overwrites, deletes etc. there is 11GB of data)

compared to Vertex 2 Pro:

System -> 25GB -> SF-1500 controller -> controller logic -> 11 GB -> NAND flash (only 11GB actually written in NAND due to smart controller logic)

sebijisi - Wednesday, January 6, 2010

quote:

It's quite common knowledge that you won't be able to losslessly compress a well-made AVI movie with commonly used lossless compression software like ZIP or RAR. But that is not even the slightest proof that there isn't some kind of algorithm that can compress this data to a much smaller volume.

Well, actually there is. The entropy of the original file bounds the minimum possible size of the compressed file. It's the same reason you compress before encrypting something: as the goal of encryption is to produce output with maximum entropy, encrypted data cannot be compressed further, not even with some advanced but not yet known algorithm.
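A rough way to see the entropy argument in practice is to estimate order-0 Shannon entropy, H = Σ p·log2(1/p) over byte frequencies. This is only an approximation: it ignores correlations between bytes, so it bounds what a byte-by-byte coder could achieve, and context-aware compressors can do better on structured data. For random or encrypted data, though, it sits at essentially the full 8 bits per byte, leaving nothing to squeeze out.

```python
import math
import os
from collections import Counter


def byte_entropy_bits(data: bytes) -> float:
    """Order-0 Shannon entropy in bits per byte: H = sum(p * log2(1/p))."""
    counts = Counter(data)
    n = len(data)
    return sum((c / n) * math.log2(n / c) for c in counts.values())


print(f"{byte_entropy_bits(b'AAAAAAAA'):.2f}")          # 0.00: one symbol, no information
print(f"{byte_entropy_bits(b'ABABABAB'):.2f}")          # 1.00: two equally likely symbols
print(f"{byte_entropy_bits(os.urandom(1 << 20)):.2f}")  # very close to 8.00
```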

shawkie - Friday, January 1, 2010

As long as the data is truly random (i.e. there is no correlation between different bytes), it cannot be compressed. If you have N bits of data, then you have 2^N different values. It is impossible to map all of these different values to fewer than N bits. If you generate a value randomly, it's possible you might produce something that can be easily compressed (such as all zeros or all ones), but if you do it enough times you will generate every possible value an equal number of times, so on average it will take up at least N bits. See:

http://www.faqs.org/faqs/compression-faq/part1/sec...

http://en.wikipedia.org/wiki/Lossless_data_compres...
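The counting argument can be checked directly. Even when every shorter length is allowed, including the empty string (which is generous to the compressor), there are 2^0 + 2^1 + ... + 2^(N-1) = 2^N - 1 shorter bit strings, always exactly one fewer than the 2^N possible N-bit inputs. So no lossless scheme can shrink every input.

```python
def count_shorter_strings(n_bits: int) -> int:
    """Number of distinct bit strings of length 0 .. n_bits - 1."""
    return sum(2 ** k for k in range(n_bits))


for n in (1, 8, 16, 32):
    inputs = 2 ** n
    outputs = count_shorter_strings(n)
    # There is always one fewer shorter string than there are N-bit inputs,
    # so at least one input cannot get a unique shorter encoding.
    print(n, inputs, outputs, inputs - outputs)
# 1 2 1 1
# 8 256 255 1
# 16 65536 65535 1
# 32 4294967296 4294967295 1
```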

As you note, it is possible to identify already-compressed data and avoid trying to recompress it but this still means you get a write amplification of slightly more than 1.0 for such data.
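A minimal sketch of that fallback, assuming a hypothetical one-byte tag in front of each stored block and `zlib` as the compressor. A real controller would more likely keep such a flag in its mapping metadata, so the exact overhead here is illustrative; the point is that incompressible data costs its own size plus a little bookkeeping, i.e. a ratio slightly above 1.0.

```python
import os
import zlib

RAW, DEFLATED = 0, 1  # hypothetical one-byte header tags


def encode_block(data: bytes) -> bytes:
    """Compress if that actually saves space; otherwise store the data raw."""
    packed = zlib.compress(data)
    if len(packed) < len(data):
        return bytes([DEFLATED]) + packed
    return bytes([RAW]) + data


def decode_block(stored: bytes) -> bytes:
    """Read the tag and undo whichever encoding was chosen."""
    tag, payload = stored[0], stored[1:]
    return zlib.decompress(payload) if tag == DEFLATED else payload


block = os.urandom(4096)  # an incompressible 4KB write
stored = encode_block(block)
assert decode_block(stored) == block
print(len(stored) / len(block))  # 4097 / 4096, slightly above 1.0
```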