OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST - Posted in Storage

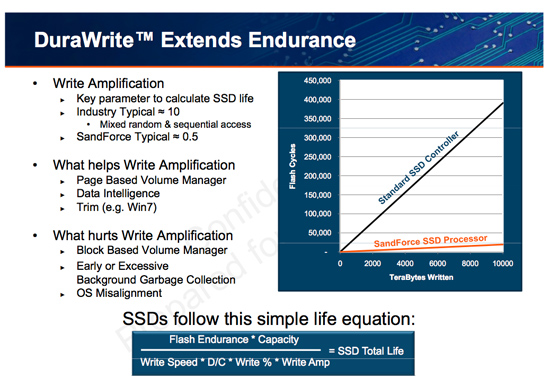

The Secret Sauce: 0.5x Write Amplification

The downfall of all NAND flash based SSDs is the dreaded read-modify-write scenario. I’ve explained this a few times before. Basically your controller goes to write some amount of data, but because of all the reorganization that needs to be done it ends up writing a lot more. The ratio of how much you actually write to how much you wanted to write is write amplification. Ideally this should be 1: you want to write 1GB and you actually write 1GB. In practice this can be as high as 10 or 20x on a really bad SSD. Intel claims that the X25-M’s dynamic nature keeps write amplification down to a manageable 1.1x. SandForce claims 0.5x, a little less than half of Intel’s figure.

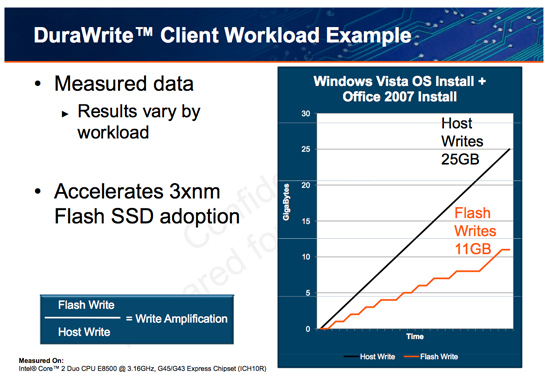

SandForce states that a full install of Windows 7 + Office 2007 results in 25GB of writes from the host, yet only 11GB of writes are passed on to the flash. In other words, 25GB of files are written and available on the SSD, but only 11GB of flash is actually occupied. Clearly it’s not bit-for-bit data storage.
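SandForce's own figures make the arithmetic easy to check. Here is a minimal sketch of the ratio defined earlier; the function name `write_amplification` is my own label for illustration, not anything SandForce or Intel uses.

```python
def write_amplification(flash_writes_gb: float, host_writes_gb: float) -> float:
    """Ratio of data physically written to flash to data the host asked to write."""
    if host_writes_gb <= 0:
        raise ValueError("host writes must be positive")
    return flash_writes_gb / host_writes_gb


# Figures SandForce quoted for the Windows 7 + Office 2007 install.
sandforce = write_amplification(flash_writes_gb=11, host_writes_gb=25)
print(f"{sandforce:.2f}x")  # 0.44x
```

That works out to slightly better than the 0.5x headline number, which is consistent with 0.5x being a rounded, typical-case claim.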

What SF appears to be doing is some form of real-time compression on data sent to the drive. SandForce told me that it’s not strictly compression but a combination of several techniques that are chosen on the fly depending on the workload.

SandForce referenced data deduplication as a type of data reduction algorithm that could be used. The principle behind data deduplication is simple. Instead of storing every single bit of data that comes through, simply store the bits that are unique and references to them instead of any additional duplicates. Now presumably your hard drive isn’t full of copies of the same file, so deduplication isn’t exactly what SandForce is doing - but it gives us a hint.
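To make the principle concrete, here is a toy content-addressed store. It is only a sketch of generic deduplication, not SandForce's implementation, which hasn't been disclosed; the `DedupStore` class, the 4KB chunk size and the use of SHA-256 are all assumptions chosen for illustration.

```python
import hashlib


class DedupStore:
    """Toy deduplicating store: each unique chunk is kept exactly once."""

    def __init__(self, chunk_size: int = 4096):
        self.chunk_size = chunk_size
        self.chunks = {}  # digest -> chunk bytes (the unique data)
        self.files = {}   # name -> list of digests (the references)

    def write(self, name: str, data: bytes) -> None:
        refs = []
        for i in range(0, len(data), self.chunk_size):
            chunk = data[i:i + self.chunk_size]
            digest = hashlib.sha256(chunk).digest()
            self.chunks.setdefault(digest, chunk)  # store only if unseen
            refs.append(digest)
        self.files[name] = refs

    def read(self, name: str) -> bytes:
        return b"".join(self.chunks[d] for d in self.files[name])

    def stored_bytes(self) -> int:
        return sum(len(c) for c in self.chunks.values())


store = DedupStore()
payload = b"A" * 4096 + b"B" * 4096
store.write("first", payload)
store.write("copy", payload)      # identical content adds no new chunks
assert store.read("copy") == payload
print(store.stored_bytes())       # 8192, not 16384
```

Two 8KB files are readable, but only 8KB of unique data is held. The second write costs nothing but a list of references.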

Straight up data compression is another possibility. The idea behind lossless compression is to use fewer bits to represent a larger set of bits. There’s additional processing required to recover the original data, but with a fast enough processor (or dedicated logic) that part can be negligible.
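A quick round trip shows what lossless means in practice. This sketch uses Python's standard `zlib` module purely as a stand-in; there's no indication of which algorithm, if any, the SandForce controller actually uses.

```python
import zlib

original = b"the quick brown fox jumps over the lazy dog\n" * 1000
compressed = zlib.compress(original)
restored = zlib.decompress(compressed)

assert restored == original            # lossless: every byte comes back
print(len(original), len(compressed))  # far fewer bytes stored than written
```

The decompression step is the additional processing mentioned above. In software it costs CPU time; a controller would presumably handle it in dedicated logic.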

Assuming this is how SandForce works, it means that there’s a ton of complexity in the controller and firmware. Much more than what even a good SSD controller needs to deal with. Not only does SandForce have to manage bad blocks, block cleaning/recycling, LBA mapping and wear leveling, but it also needs to manage this tricky write optimization algorithm. It’s not a trivial matter: SandForce must ensure that the data remains intact while tossing away nearly half of it. After all, the primary goal of storage is to store data.

The whole write-less philosophy has tremendous implications for SSD performance. The less you write, the less you have to worry about garbage collection/cleaning and the less you have to worry about write amplification. This is how the SF controllers get by without any external DRAM: there’s just no need. There are fairly large buffers on chip though, most likely on the order of a couple of MB (more on this later).

Manufacturers are rarely honest enough to tell you the downsides to their technologies. Representing a collection of bits with fewer bits works well if you have highly compressible data or a ton of duplicates. Data that is already well compressed, however, shouldn’t work so nicely with SandForce’s DuraWrite engine. That means compressed images, videos or file archives will most likely exhibit higher write amplification than SandForce’s claimed 0.5x. Presumably that’s not the majority of writes your SSD will see on a day to day basis, but it’s going to be some portion of it.
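The effect is easy to demonstrate with any general-purpose compressor. In the sketch below `zlib` again stands in for whatever DuraWrite really does, random bytes stand in for already-compressed files, and `flash_to_host_ratio` is a hypothetical helper that ignores every other source of write amplification.

```python
import os
import zlib


def flash_to_host_ratio(data: bytes) -> float:
    """Bytes that would reach flash per host byte if zlib were the only reduction step."""
    return len(zlib.compress(data)) / len(data)


text_like = b"log entry: status=OK\n" * 50_000  # highly repetitive data
random_like = os.urandom(1_000_000)              # stand-in for JPEGs, video, archives

print(f"repetitive: {flash_to_host_ratio(text_like):.3f}")
print(f"random:     {flash_to_host_ratio(random_like):.3f}")
```

The repetitive input shrinks to a tiny fraction of its size, while the random input comes out no smaller than it went in. On data like that, a compression-based scheme can't do better than 1x.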

100 Comments


Howard - Friday, January 1, 2010 - link

Did you REALLY mean 90 millifarads (huge) or 90 uF, which is much more reasonable?

korbendallas - Saturday, January 2, 2010 - link

Yep, it's a 0.09F 5.5V supercapacitor. http://www.cap-xx.com/images/HZ202HiRes.jpg

iwodo - Friday, January 1, 2010 - link

If, all things being equal, it just shows that current SSD performance isn't really limited by the Flash itself but by the controller. So maybe with a die shrink we could get even more random RW performance?

And i suspect these SSD aren't even using ONFI 2.1 chips either, so 600MB/s Seq Read is very feasible. Except SATA 3.0 is holding it all up.

How far are we from using PCI-Express based SSD? I am sure booting problem could be easily solved with UEFI,

ProDigit - Friday, January 1, 2010 - link

One of the factors would be if this drive has a processor that does real-time compression of files on the SSD, that would mean that it would use more power on notebooks. Sure, its performance is top, as well as the length of time that it works, but how much power does it use?

If it still is close to an HD it might be an interesting drive. But if it is more, it'd be interesting to see how much more!

I'm not interested in equipping a netbook or notebook/laptop with a SSD that uses more than 5W TDP.

chizow - Friday, January 1, 2010 - link

I've always noticed the many similarities between SSD controller technology and RAID technology with the multiple channel modules determining reads/write speeds along with write differences between MLC and SLC. The differences in SandForce's controller seems to take this analogy a step further with what is essentially RAID 5 compared to previous MLC SSDs. It seems like these drives use a lot of controller/processor power for redundancy/error checking code, which is very similar to a RAID 5 array. This allows them to do away with DRAM and gives them the flexibility to use cheaper NAND Flash, but at the expense of additional Flash capacity to store the parity/ECC data. I guess that begs the question, is 64MB of DRAM and the difference in NAND quality used more expensive than 30% more NAND Flash? Right now I'd say probably not until cheaper NAND becomes available, but if so it may make their technology more viable to widespread desktop adoption when that

Last thing I'll say is I think its a bit scary how much impact Anand's SSD articles have on this emerging market. He's like the Paul Muad'dib of SSDs and is able to kill a controller-maker with a single word lol. Seriously, after he exposed the stuttering and random read/write problems on Jmicron controllers back when OCZ first started using them, the mere mention of their name combined with SSDs has been taboo. OCZ has clearly recovered since then, as their Vertex drives have been highly regarded. I expect SandForce-based controllers to be all the buzz now going forward, largely because of this article.

pong - Friday, January 1, 2010 - link

It seems to me that Anand may be misunderstanding the reason for the impressive write amplification. The example with Windows Vista install + Office 2007 install states that 25GB is written to the disk, but only 11GB is written to flash. I don't believe this implies compression. It just means that a lot of the data written to disk is shortlived because it lives in temporary files which are deleted soon after or because the data is overwritten with more recent information. The 11GB is what ends up being on the disk after installation whether it is an SSD or a normal hard-drive. If the controller has significantly more RAM than other SSD controllers it doesn't have to commit short-lived changes to flash as often. The controller may also have logic that enables it to detect hotspots, ie areas of the logical disk that is written to often to improve the efficiency of its caching scheme. This sort of stuff could probably be implemented mostly in an OS except the OS can't guarantee that the stuff in the cache will make it to the disk if the power is suddenly cut. The SSD controller can make this guarantee if it can store enough energy - say in a large capacitor - to commit everything it has cached to flash when power is removed.

shawkie - Friday, January 1, 2010 - link

Unless I misread it the article seems to be claiming that the device actually has no cache at all.

bji - Friday, January 1, 2010 - link

I think the article said that the SSD has no RAM external to the controller chip, but that the controller chip itself likely has some number of megabytes of RAM, much of which is likely used for cache. It's not clear, but it's very, very hard to believe that the device could work without any kind of internal buffering; but that this device does it with less DRAM than other SSDs (i.e., the smaller amount of DRAM built into the controller chip versus a separate external tens-of-megabytes DRAM chip).

gixxer - Friday, January 1, 2010 - link

I thought the Vertex supported TRIM through Windows 7, yet in the article Anand says this: "With the original Vertex all you got was a command line wiper tool to manually TRIM the drive. While Vertex 2 Pro supports Windows 7 TRIM, you also get a nifty little toolbox crafted by SandForce and OCZ:"

Does the Vertex drive support windows 7 trim or do you still have to use the manual tool?

MrHorizontal - Friday, January 1, 2010 - link

Very interesting controller, though they seem to have missed a couple of tricks... First, why is an 'enterprise' controller like this not using SAS, which is at 6Gbps right now? We can see what effect a 3Gbps interface has on SSDs, and SATA 6Gbps is shipping on motherboards now, so why will these SandForce drives, when they are released in 2010, still be using 3Gbps SATA...

Second, the 'RAID' features of this drive seem to be like RAID5 distributing parity hashes across the spare area which is also distributed across the drive. However, all controllers have multiple channels and why they don't use RAID6 (the one where a dedicated drive holds parity data, not the 2-stripe RAID5) whereby they use 1 or 2 SLC NAND Flash chips to hold the more important data, and use really cheap MLC NAND to hold the actual data in a redundant manner?