OCZ's Vertex 2 Pro Preview: The Fastest MLC SSD We've Ever Tested

by Anand Lal Shimpi on December 31, 2009 12:00 AM EST - Posted in Storage

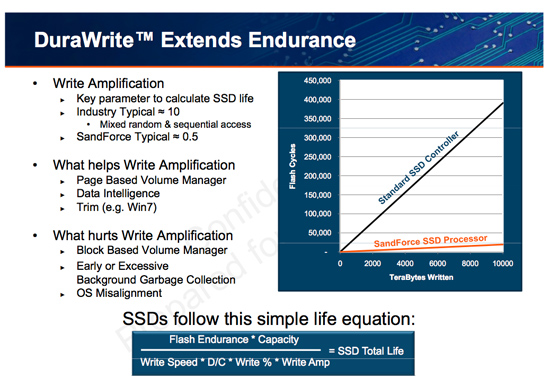

The Secret Sauce: 0.5x Write Amplification

The downfall of all NAND flash based SSDs is the dreaded read-modify-write scenario. I’ve explained this a few times before. Basically, your controller goes to write some amount of data, but because of all the reorganization that needs to be done, it ends up writing a lot more. The ratio of how much is actually written to flash to how much you wanted to write is write amplification. Ideally this should be 1: you want to write 1GB and you actually write 1GB. In practice this can be as high as 10 or 20x on a really bad SSD. Intel claims that the X25-M’s dynamic nature keeps write amplification down to a manageable 1.1x. SandForce says its controllers write a little less than half what Intel does.

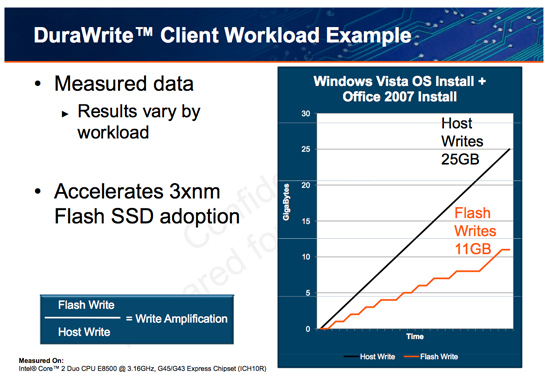

SandForce states that a full install of Windows 7 + Office 2007 results in 25GB of writes from the host, yet only 11GB of writes are passed on to the flash. In other words, 25GB of files are written and available on the SSD, but only 11GB of flash is actually occupied. Clearly it’s not bit-for-bit data storage.
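To make the arithmetic concrete, here is a minimal sketch that computes write amplification from the figures quoted above. The function name `write_amplification` and the use of decimal gigabytes are assumptions for illustration only; the 25GB, 11GB and 1.1x figures are the vendors' claims as reported here, not measurements.

```python
def write_amplification(flash_bytes_written: float, host_bytes_written: float) -> float:
    """Ratio of data actually written to flash to data the host asked to write."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return flash_bytes_written / host_bytes_written


GB = 1000 ** 3  # decimal gigabytes, assumed for illustration

# SandForce's quoted example: 25GB written by the host, 11GB written to flash.
sandforce = write_amplification(11 * GB, 25 * GB)
print(f"SandForce install example: {sandforce:.2f}x")  # 0.44x

# Intel's claimed figure for the X25-M, taken from the text above.
intel_claimed = 1.1
print(f"Relative to Intel's claim: {sandforce / intel_claimed:.2f}")  # 0.40
```

The install example works out to 0.44x, which is in line with SandForce's rounded 0.5x claim and a bit under half of Intel's 1.1x.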

What SF appears to be doing is some form of real-time compression on data sent to the drive. SandForce told me that it’s not strictly compression but a combination of several techniques that are chosen on the fly depending on the workload.

SandForce referenced data deduplication as a type of data reduction algorithm that could be used. The principle behind data deduplication is simple. Instead of storing every single bit of data that comes through, store only the data that is unique, and store references to it in place of any duplicates. Now presumably your hard drive isn’t full of copies of the same file, so deduplication isn’t exactly what SandForce is doing - but it gives us a hint.
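The deduplication principle can be sketched in a few lines. This is a toy illustration under stated assumptions, not SandForce's method: the `DedupStore` class, the fixed 4096-byte block size and the use of SHA-256 digests as references are all hypothetical choices made for the example.

```python
import hashlib


class DedupStore:
    """Toy block store: each unique block is kept once, duplicates become references."""

    def __init__(self, block_size: int = 4096):
        self.block_size = block_size
        self.blocks = {}        # digest -> block bytes, stored only once
        self.logical_bytes = 0  # total bytes the "host" asked to write

    def write(self, data: bytes) -> list:
        """Split data into blocks and return the list of references (digests)."""
        refs = []
        for i in range(0, len(data), self.block_size):
            block = data[i:i + self.block_size]
            digest = hashlib.sha256(block).hexdigest()
            self.blocks.setdefault(digest, block)  # store only if not seen before
            refs.append(digest)
        self.logical_bytes += len(data)
        return refs

    def read(self, refs: list) -> bytes:
        """Rebuild the original data by following the references."""
        return b"".join(self.blocks[d] for d in refs)

    def physical_bytes(self) -> int:
        """Bytes actually stored after deduplication."""
        return sum(len(b) for b in self.blocks.values())


store = DedupStore()
payload = b"A" * 4096 * 3 + b"B" * 4096  # three identical blocks plus one different
refs = store.write(payload)
assert store.read(refs) == payload       # nothing is lost
print(store.logical_bytes, store.physical_bytes())  # 16384 8192
```

Four blocks come in, but only two unique blocks are stored, so half the data never needs to be written. A real controller would also have to account for the space the references themselves take, which this sketch ignores.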

Straight up data compression is another possibility. The idea behind lossless compression is to use fewer bits to represent a larger set of bits. There’s additional processing required to recover the original data, but with a fast enough processor (or dedicated logic) that part can be negligible.
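As a rough illustration of lossless compression, the sketch below uses Python's standard `zlib` module as a stand-in. SandForce has not disclosed its algorithm, so the choice of zlib, the compression level and the sample data are assumptions made purely for the example.

```python
import zlib

# Highly repetitive sample data (an assumption chosen to compress well).
original = b"the quick brown fox jumps over the lazy dog\n" * 1000

compressed = zlib.compress(original, 6)  # fewer bits representing the same data
restored = zlib.decompress(compressed)   # extra processing to recover the original

assert restored == original              # lossless: every byte comes back intact
print(f"original: {len(original)} bytes, compressed: {len(compressed)} bytes")
```

The decompression step is the "additional processing" mentioned above; on a drive it would sit in the read path, which is why it needs a fast processor or dedicated logic to stay negligible.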

Assuming this is how SandForce works, it means that there’s a ton of complexity in the controller and firmware, much more than what even a good SSD controller needs to deal with. Not only does SandForce have to manage bad blocks, block cleaning/recycling, LBA mapping and wear leveling, but it also needs to manage this tricky write optimization algorithm. It’s not a trivial matter: SandForce must ensure that the data remains intact while tossing away nearly half of it. After all, the primary goal of storage is to store data.

The whole write-less philosophy has tremendous implications for SSD performance. The less you write, the less you have to worry about garbage collection/cleaning and the less you have to worry about write amplification. This is how the SF controllers get by without having any external DRAM; there’s just no need. There are fairly large buffers on chip though, most likely on the order of a couple of MBs (more on this later).

Manufacturers are rarely honest enough to tell you the downsides to their technologies. Representing a collection of bits with fewer bits works well if you have highly compressible data or a ton of duplicates. Data that is already well compressed, however, shouldn’t work so nicely with the DuraWrite engine. That means compressed images, videos or file archives will most likely exhibit higher write amplification than SandForce’s claimed 0.5x. Presumably that’s not the majority of writes your SSD will see on a day to day basis, but it’s going to be some portion of it.
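The dependence on data type is easy to demonstrate. In this hedged sketch, `zlib` again stands in for whatever DuraWrite actually does, random bytes from `os.urandom` stand in for already-compressed files (which look statistically similar to a compressor), and the helper name `reduction_ratio` is hypothetical.

```python
import os
import zlib


def reduction_ratio(data: bytes) -> float:
    """Compressed size divided by original size; lower means fewer bytes to write."""
    return len(zlib.compress(data)) / len(data)


size = 1 << 20  # about 1MB of each kind of data

repetitive = b"SandForce " * (size // 10)  # highly compressible
random_like = os.urandom(size)             # stand-in for JPEG/video/archive data

text_ratio = reduction_ratio(repetitive)
random_ratio = reduction_ratio(random_like)

print(f"repetitive data:  {text_ratio:.3f} of original size")
print(f"random-like data: {random_ratio:.3f} of original size")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random-like buffer does not shrink at all. If a controller relied on compression alone, writes of the second kind would see no reduction, so write amplification for them would be at least 1x rather than 0.5x.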

100 Comments

blowfish - Friday, January 1, 2010 - link

80GB? You really need that much? I'm not sure how much space current games take up, but you'd hope that if they shared the same engine, you could have several games installed in significantly less space than the sum of their separate installs. On my XP machines, my OS plus programs partitions are all less than 10GB, so I reckon 40GB is the sweet spot for me and it would be nice to see fast drives of that capacity at a reasonable price. At least some laptop makers recognise the need for two drive slots. Using a single large SSD for everything, including data, seems like extravagant overkill.

Gasaraki88 - Monday, January 4, 2010 - link

Just as an FYI, Conan takes 30GB. That's one game. Most new games are around 6GB. WoW takes like 13GB. 80GB runs out real fast.

DOOMHAMMADOOM - Friday, January 1, 2010 - link

I wouldn't go below 160 GB for a SSD. The games in just my Steam folder alone go to 170 GB total. Games are big these days. The thought of putting Windows and a few programs and games onto an 80GB hard drive is not something I would want to do.

Swivelguy2 - Thursday, December 31, 2009 - link

This is very interesting. Putting more processing power closer to the data is what has improved the performance of these SSDs over current offerings. That makes me wonder: what if we used the bigger, faster CPU on the other side of the SATA cable to similarly compress data before storing it on an X25-M? Could that possibly increase the effective capacity of the drive while addressing the X25-M's major shortcoming in sequential write speed? Also, compressing/decompressing on the CPU instead of in the drive sends less through SATA, relieving the effects of the 3 Gb/s ceiling.

Also, could doing processing on the data (on either end of SATA) add more latency to retrieving a single file? From the random r/w performance, apparently not, but would a simple HDTune show an increase in access time, or might it be apparent in the "seat of the pants" experience?

Happy new year, everyone!

jacobdrj - Friday, January 1, 2010 - link

The race to the true 'Isolinear Chip' from Star Trek is afoot...

Fox5 - Thursday, December 31, 2009 - link

This really does look like something that should have been solved with smarter file systems, and not smarter controllers imo. (though some would disagree)

Reiser4 does support gzip compression of the file system though, and it's a big win for performance. I don't know if NTFS's compression is too. I know in the past it had a negative impact, but I don't see why it wouldn't perform better if there was more cpu performance.

blagishnessosity - Thursday, December 31, 2009 - link

I've wondered this myself. It would be an interesting experiment. There are several filesystems that support transparent compression (NTFS, Btrfs, ZFS and Reiser4): http://en.wikipedia.org/wiki/Comparison...systems#... In windows, I suppose this could be tested by just right clicking all your files and checking "compress" and then running your benchmarks as usual. In linux, this would be interesting to test with btrfs's SSD mode paired with a low-overhead io scheduler like noop or deadline.

What interests me the most though is SSD performance on a log-based filesystem (http://en.wikipedia.org/wiki/Log-structured_file_s...) as they theoretically should never have random reads or writes. In the linux realm, there are several log-based filesystems (JFFS2, UBIFS, LogFS, NILFS2) though none seem to perform ideally in real world usage. Hopefully that'll change in the future :-)

blagishnessosity - Thursday, December 31, 2009 - link

correction:

There are several filesystems that support transparent compression (NTFS, Btrfs, ZFS and Reiser4): http://en.wikipedia.org/wiki/Comparison...systems#...

What interests me the most though is SSD performance on a Log-based filesystem (http://en.wikipedia.org/wiki/Log-structured_file_s...) as they theoretically should never have random reads or writes.

(note to web admin: the comment WYSIWYG does not appear to work for me)

themelon - Thursday, December 31, 2009 - link

Note that ZFS now also has native DeDupe support as of build 128: http://blogs.sun.com/bonwick/en_US/entry/zfs_dedup

grover3606 - Saturday, November 13, 2010 - link

Is the used performance with trim enabled?