Anand's Thoughts on Intel Canceling Larrabee Prime

by Anand Lal Shimpi on December 6, 2009 8:00 PM EST - Posted in GPUs

Larrabee is Dead, Long Live Larrabee

Intel just announced that the first incarnation of Larrabee won't be a consumer graphics card. In other words, next year you're not going to be able to purchase a Larrabee GPU and run games on it.

You're also not going to be able to buy a Larrabee card and run your HPC workloads on it either.

Instead, the first version of Larrabee will exclusively be for developers interested in playing around with the chip. And honestly, though disappointing, it doesn't really matter.

The Larrabee Update at Fall IDF 2009

Intel hasn't said much about why it was canceled other than that it was behind schedule. Intel recently announced that an overclocked Larrabee was able to deliver peak performance of 1 teraflop, something AMD was able to do in 2008 with the Radeon HD 4870. (Update: the two figures aren't exactly comparable; the point is that Larrabee is outgunned given today's GPU offerings.)

With the Radeon HD 5870 already at 2.7 TFLOPS peak, chances are that Larrabee wasn't going to be remotely competitive, even if it came out today. We all knew this; no one was expecting Intel to compete at the high end. Its agents have been quietly talking about the uselessness of > $200 GPUs for much of the past two years, indicating exactly where Intel views the market for Larrabee's first incarnation.
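
For a rough sense of where peak numbers like these come from, here is a back-of-the-envelope sketch in Python. Theoretical single-precision peak is simply ALU lanes times clock speed times operations per lane per cycle (2 when each lane can issue a multiply-add). The Radeon lane counts and clocks are the published specs. The Larrabee line is purely an assumption for illustration: 32 cores with 16-wide vector units at a hypothetical 1GHz, which is not an Intel-confirmed shipping configuration. The helper name `peak_gflops` is made up for this example.

```python
def peak_gflops(lanes, clock_mhz, flops_per_lane_per_cycle=2):
    """Theoretical single-precision peak in GFLOPS.

    lanes: number of scalar ALU lanes (stream processors or SIMD lanes)
    clock_mhz: shader/core clock in MHz
    flops_per_lane_per_cycle: 2 assumes one multiply-add per lane per cycle
    """
    return lanes * clock_mhz * flops_per_lane_per_cycle / 1000.0


# Published specs: 800 stream processors at 750MHz
radeon_hd_4870 = peak_gflops(800, 750)

# Published specs: 1600 stream processors at 850MHz
radeon_hd_5870 = peak_gflops(1600, 850)

# ASSUMPTION: 32 cores x 16 vector lanes, hypothetical 1000MHz clock
larrabee_guess = peak_gflops(32 * 16, 1000)

for name, gflops in [
    ("Radeon HD 4870", radeon_hd_4870),
    ("Radeon HD 5870", radeon_hd_5870),
    ("Larrabee (assumed config)", larrabee_guess),
]:
    print(f"{name}: {gflops / 1000.0:.2f} TFLOPS peak")
```

Under these assumptions the hypothetical Larrabee lands around 1 TFLOPS, in the same neighborhood as the Radeon HD 4870's 1.2 TFLOPS and well short of the 5870's 2.72 TFLOPS. Keep in mind these are theoretical ceilings; measured throughput on real code comes in lower.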

Thanks to AMD's aggressive rollout of the Radeon HD 5000 series, even at lower price points Larrabee wouldn't have been competitive - delayed or not.

I've got a general rule of thumb for Intel products. Around 4 - 6 months before an Intel CPU officially ships, Intel's partners will have it in hand and running at near-final speeds. Larrabee hasn't been let out of Intel's hands, so chances are that it's more than 6 months away at this point.

By then Intel wouldn't have been able to release Larrabee at any price point other than free. It'd be slower at games than sub-$100 GPUs from AMD and NVIDIA, and there's no way that the first drivers wouldn't have some compatibility issues. To make matters worse, Intel's 45nm process would stop looking so advanced by mid-2010. Thus the only option is to forgo making a profit on the first chips altogether rather than pull an NV30 or R600.

So where do we go from here? AMD and NVIDIA will continue to compete in the GPU space as they always have. If anything this announcement supports NVIDIA's claim that making these things is, ahem, difficult; even if you're the world's leading x86 CPU maker.

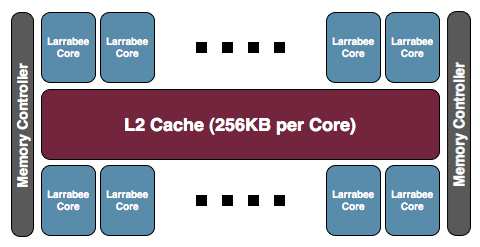

Do I believe the 48-core research announcement had anything to do with Larrabee's cancelation? Not really. The project came out of a different team within Intel. Intel Labs have worked on bits and pieces of technologies that will ultimately be used inside Larrabee, but the GPU team is quite different. Either way, the canceled Larrabee was a 32-core part.

A publicly available Larrabee graphics card at 32nm isn't guaranteed, either. Intel says they'll talk about the first Larrabee GPU sometime in 2010, which means we're looking at 2011 at the earliest. Given the timeframe I'd say that a 32nm Larrabee is likely but again, there are no guarantees.

It's not a huge financial loss to Intel. Intel still made tons of money all the while Larrabee's development was underway. Its 45nm fabs are old news and paid off. Intel wasn't going to make a lot of money off of Larrabee had it sold the chips on the market, definitely not enough to recoup the R&D investment, and as I just mentioned, using Larrabee sales to pay off the fabs isn't necessary either. Financially it's not a problem, yet. If Larrabee never makes it to market, or fails to eventually be competitive, then it's a bigger problem. If heterogeneous multicore is the future of desktop and mobile CPUs, Larrabee needs to succeed; otherwise Intel's future will be in jeopardy. It's far too early to tell if that's worth worrying about.

One reader asked how this will impact Haswell. I don't believe it will; from what I can tell, Haswell doesn't use Larrabee.

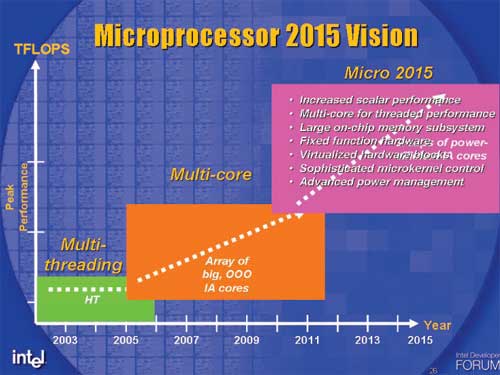

Intel has a different vision of the road to the CPU/GPU union. AMD's Fusion strategy combines CPU and GPU compute starting in 2011. Intel will have a single die with a CPU and GPU on it, but the GPU isn't expected to be used for much compute at that point. Intel's roadmap has the CPU and AVX units being used for the majority of vectorized floating point throughout 2011 and beyond.

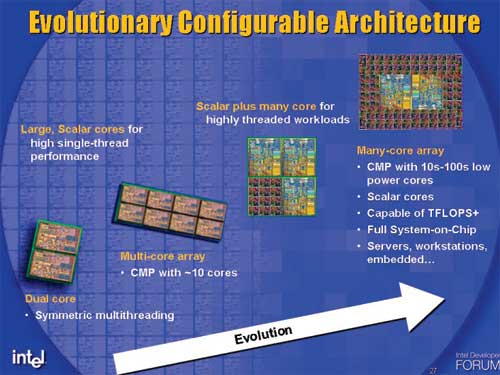

Intel's vision for the future of x86 CPUs, announced in 2005: surprisingly accurate

It's not until you get into the 2013 - 2015 range that Larrabee even comes into play. The Larrabee that makes it into those designs will look nothing like the retail chip that just got canceled.

Intel's announcement wasn't too surprising or devastating; it just makes things a bit less interesting.

75 Comments

br0wn - Monday, December 7, 2009

Actually Anand is correct. Here is a link to an actual performance of 1 Teraflop SGEMM using Radeon HD 4870: http://forum.beyond3d.com/showthread.php?t=54842

The quoted 300 GFlops for RV770 from BSN is based on unoptimized code.

Khato - Monday, December 7, 2009

Nice find, really goes to show how finicky all of these GPGPU applications tend to be at present. Going from 300 GFLOPS on the unoptimized code to a bit over 1 TFLOPS with properly optimized code a bit over a year later is indeed quite nice. Though it also raises the question of whether or not the LRB1 hardware can be coaxed into as much utilization of its hardware. If not, then hopefully the results gathered will at least be used to remove those bottlenecks from LRB2.

HighTech4US - Sunday, December 6, 2009

"Instead, the first version of Larrabee will exclusively be for developers interested in playing around with the chip. And honestly, though disappointing, it doesn't really matter."

Well I think it does matter as all Intel will have for the foreseeable future is the same crappy graphics they have had for years.

And it looks like Apple seems to think Intel's graphics are so bad they want nothing to do with them:

Apple ditches 32nm Arrandale, won't use Intel graphics

http://www.brightsideofnews.com/news/2009/12/5/app...

IntelUser2000 - Sunday, December 6, 2009

[quote]Apple ditches 32nm Arrandale, won't use Intel graphics

http://www.brightsideofnews.com/news/20...randale2... [/quote]

I don't believe this. The reason is because when you plug in an external GPU, the IGP will be disabled anyway.

Plus, BSN has been wrong on so many things, it's surprising people take most of its articles as more than just humor.

HighTech4US - Monday, December 7, 2009

BSN was very right about Larrabee:

10/12/2009 An Inconvenient Truth: Intel Larrabee story revealed

http://brightsideofnews.com/news/2009/10/12/an-inc...

What's really funny is seeing Anand and Charlie doing their revisionist writing that the Larrabee consumer part cancellation was always expected or doesn't really matter.

I haven't stopped laughing yet.

Jaybus - Monday, December 7, 2009

I don't think it will matter. Larrabee is not a waste. Forget graphics for a minute. There are many other uses for a Larrabee-like chip in the embedded space. Think of it as an MLC flash controller, a TCP/IP acceleration chip, an I/O processor, etc. The same silicon could be used for all of these with just a firmware change. Larrabee technology could make all of these application-specific controllers much cheaper and easier to produce.

IntelUser2000 - Monday, December 7, 2009

I'm not saying they are wrong with everything but... write enough crap and you are bound to get a few right.

HighTech4US - Monday, December 7, 2009

I somehow missed the topic change to Charlie and his Semi-Accurate site because "crap and bound to get a few right" is more like his drivel.

JarredWalton - Sunday, December 6, 2009

If Arrandale is really power hungry (i.e. more than the 25W TDP in current Pxxxx CPUs), the move makes a lot of sense. Apple is probably looking towards lower power parts. Even the lowest power i7-720QM uses a lot more power than most Core 2 parts; how much would half the core count and 32nm help? Enough to make Arrandale competitive with Core 2, probably, but I am skeptical that the initial parts will actually be significantly better for battery life. Again, waiting for something else on Apple's part would make sense.

There's also a question of whether Apple might not use Arrandale + discrete with the ability to switch between the two. They do that already on the MacBook Pro, only with 9400M and 9600M. If OS X will run on Intel's IGP, which it should, I'd guess it's more likely we'll see hybrid GPUs rather than an outright shunning of Arrandale.

/Speculation. :)

StormyParis - Sunday, December 6, 2009

It's funny how Intel bungled pretty much everything else they ever tried to dabble in:

- networking

- graphics (they've been trying for a long time either IGP or discrete, and never made anything that didn't suck... I seem to remember Dell's DGX in 1995 ?)

- non x86 CPUs

It's almost like they subconsciously don't WANT anything but x86 to succeed. Or they just can't succeed when they don't have a mile-long headstart. So, let's once and for all accept the fact that they got lucky x86 got chosen by IBM for PCs, managed to more or less (with gentle prodding from AMD at times) not mess up too badly their stewardship of the x86 architecture, but can't get anything else right.

Let's stop wasting time following Intel. We'll get faster procs that consume less every few months, at different power vs. consumption levels. Thanks guys.