AMD Core Counts and Bulldozer: Preparing for an APU World

by Anand Lal Shimpi on November 30, 2009 12:00 AM EST

Posted in CPUs

Last week Johan posted his thoughts from a server/HPC standpoint on AMD's roadmap. Much of my analysis was limited to desktop/mobile, so if you're making million-dollar server decisions then his article is better suited for your needs.

He also unveiled a couple of details about AMD's Bulldozer architecture that I thought I'd call out in greater detail. Johan has been working on a CMP vs. SMT article so I'll try to not step on his toes too much here.

It all started about two weeks ago when I got a request from AMD to have a quick conference call about Bulldozer. I get these sorts of calls for one of two reasons. Either:

1) I did something wrong, or

2) Intel did something wrong.

This time it was the former. I hate when it's the former.

It's called a Module

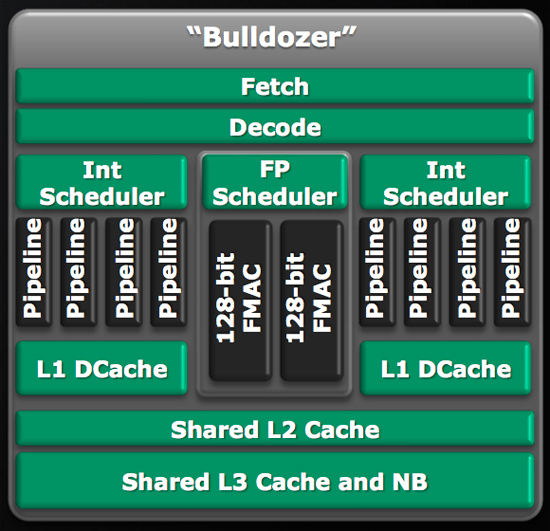

This is the Bulldozer building block, what AMD is calling a Bulldozer Module:

AMD refers to the module as being two tightly coupled cores, which starts the path of confusing terminology. A few of you wondered how AMD was going to be counting cores in the Bulldozer era; I took your question to AMD via email:

Also, just to confirm, when your roadmap refers to 4 bulldozer cores that is four of these cores:

http://images.anandtech.com/reviews/cpu/amd/FAD2009/2/bulldozer.jpg

Or does each one of those cores count as two? I think it's the former but I just wanted to confirm.

AMD responded:

Anand,

Think of each twin Integer core Bulldozer module as a single unit, so correct.

I took that to mean that my assumption was correct and 4 Bulldozer cores meant 4 Bulldozer modules. It turns out there was a miscommunication and I was wrong. Sorry about that :)
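To spell out the corrected counting: AMD's roadmap numbers count integer cores, and each module holds two of them. Here's a minimal Python sketch of that conversion. The function and constant names are mine, purely for illustration, and not anything AMD uses.

```python
# Assumption for illustration: roadmap "cores" are integer cores,
# and every Bulldozer module contains exactly two of them.
INTEGER_CORES_PER_MODULE = 2


def modules_for_cores(cores: int) -> int:
    """Return how many modules sit behind a roadmap core count."""
    if cores <= 0 or cores % INTEGER_CORES_PER_MODULE != 0:
        raise ValueError("core count must be a positive multiple of 2")
    return cores // INTEGER_CORES_PER_MODULE


def cores_for_modules(modules: int) -> int:
    """Return the roadmap core count for a given number of modules."""
    if modules <= 0:
        raise ValueError("module count must be positive")
    return modules * INTEGER_CORES_PER_MODULE


if __name__ == "__main__":
    # "4 Bulldozer cores" on the roadmap is two modules, not four.
    print(modules_for_cores(4))
    # A four module part is what the roadmap would call eight cores.
    print(cores_for_modules(4))
```

So the "4 Bulldozer cores" I asked about works out to two of the modules pictured above, not four.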

Inside the Bulldozer Module

There are two independent integer cores on a single Bulldozer module. Each one has its own L1 instruction and data cache (thanks Johan), as well as scheduling/reordering logic. AMD is also careful to mention that the integer throughput of one of these integer cores is greater than that of the Phenom II's integer units.

Intel's Core architecture uses a unified scheduler fielding all instructions, whether integer or floating point. AMD's architecture uses independent integer and floating point schedulers. While Bulldozer doubles up on the integer schedulers, there's only a single floating point scheduler in the design.

Behind the FP scheduler are two 128-bit wide FMACs. AMD says that each thread dispatched to the core can take one of the 128-bit FMACs or, if one thread is purely integer, the other can use all of the FP execution resources to itself.
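As a rough mental model of that sharing, here's a small Python sketch. It assumes arbitration happens cycle by cycle and that each thread with FP work is guaranteed one FMAC before any spare unit is handed out. AMD hasn't disclosed the actual policy, so treat both the policy and the `allocate_fmacs` name as my assumptions rather than a description of the hardware.

```python
# Two 128-bit FMACs sit behind the single shared FP scheduler.
FMACS_PER_MODULE = 2


def allocate_fmacs(fp_ops_a: int, fp_ops_b: int) -> tuple[int, int]:
    """Return (FMACs for thread A, FMACs for thread B) for one cycle.

    fp_ops_a / fp_ops_b are the number of FP ops each thread has
    ready this cycle (hypothetical inputs for the sketch).
    """
    if fp_ops_a < 0 or fp_ops_b < 0:
        raise ValueError("ready-op counts cannot be negative")

    # Step 1: a thread with any FP work gets one FMAC.
    grant_a = min(fp_ops_a, 1)
    grant_b = min(fp_ops_b, 1)

    # Step 2: any FMAC still idle goes to a thread with more FP work.
    spare = FMACS_PER_MODULE - grant_a - grant_b
    extra_a = min(spare, fp_ops_a - grant_a)
    grant_a += extra_a
    spare -= extra_a
    grant_b += min(spare, fp_ops_b - grant_b)

    return grant_a, grant_b


if __name__ == "__main__":
    print(allocate_fmacs(2, 2))  # both threads busy: one FMAC each
    print(allocate_fmacs(2, 0))  # thread B is pure integer: A gets both
    print(allocate_fmacs(0, 0))  # pure integer workload: FPU sits idle
```

Under these assumptions, two FP-heavy threads split the units evenly, while an FP-heavy thread paired with a purely integer one gets the whole FPU.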

AMD believes that 80%+ of all normal server workloads are purely integer operations. On top of that, the additional integer core on each Bulldozer module doesn't cost much die area. If you took a four module (eight core) Bulldozer CPU and stripped out the additional integer core from each module you would end up with a die that was 95% of the size of the original CPU. The combination of the two made AMD's design decision simple.

AMD has come back to us with a clarification: the 5% figure was incorrect. AMD is now stating that the additional core in Bulldozer requires approximately an additional 50% die area. That's less than a complete doubling of die size for two cores, but still much more than something like Hyper Threading.
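To put the two figures side by side, here's a quick back-of-the-envelope calculation in Python. AMD didn't say what the 50% is measured against, so the sketch assumes the baseline is a design with a single integer core, normalized to an area of 1.0. That baseline and the variable names are my assumptions.

```python
def area_with_second_core(base_area: float, extra_fraction: float) -> float:
    """Area after adding a second core that costs extra_fraction of the base."""
    return base_area * (1.0 + extra_fraction)


# Assumed baseline: a single-core design, normalized to 1.0.
single_core = 1.0

# Corrected figure: the second core adds roughly 50%.
module = area_with_second_core(single_core, 0.50)

# Naive alternative: simply duplicate the whole core.
two_full_cores = 2 * single_core

# Area saved by sharing versus duplicating everything.
saving = 1.0 - module / two_full_cores

# Retracted figure: stripping the extra cores left 95% of the die,
# which implies the extra cores added about 1/0.95 - 1 on top.
retracted_overhead = 1.0 / 0.95 - 1.0

if __name__ == "__main__":
    print(f"module area vs. one core: {module:.2f}x")
    print(f"saving vs. two full cores: {saving:.0%}")
    print(f"overhead implied by retracted figure: {retracted_overhead:.1%}")
```

Under that assumption a module comes out at 1.5x the area of a single core, a quarter smaller than two full cores, but roughly ten times the overhead the retracted figure implied.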

94 Comments


Calin - Monday, November 30, 2009 - link

Only on instructions per clock - the K6-3 was available at 400 and 450 MHz, while the Pentium !!! was available at (much later) up to 1300 MHz. However, the K6-3 was in the competition against Pentium !!! at up to 550-600 MHz, as the original K7 appeared around those times.

mino - Sunday, January 17, 2010 - link

K6-2+ and K6-3 were PII competitors that were able to outperform PII and even early PIII purely thanks to ON-DIE L2 and 3DNow. L3 was on the motherboard back then, was slow, and had little to do with K6-2+/3 performance gains.

Also K6-2+/3 was the top AMD CPU for a very short time as K7 came right afterward.

K6-2+/3 was the notebook & low cost business desktop solution of the times while K6-2 (without cache) was the budget solution.

medi01 - Monday, November 30, 2009 - link

Was it shared?

Zool - Monday, November 30, 2009 - link

Any info on the number of transistors for each module? At least they can make a decent notebook CPU from the modules. Mobile Nehalems and Phenoms with 4 cores are many things, just not low power notebook CPUs.

Zool - Monday, November 30, 2009 - link

Something like 1 module with no L3 cache for netbooks and notebooks. 2 modules with L3 cache for average notebooks and 4 or more modules for desktop replacement notebooks. They could play with the cache sizes too.

The 1 module with no L3 cache, for example, would kill Atom in performance. And the power usage could still be quite good. There is no point in a slow 2-4 W CPU in a netbook when the mainboard, HDD and display together eat several times more power than the CPU itself.

JVLebbink - Monday, November 30, 2009 - link

I am wondering about the shared FPU. Does one thread really have to be purely integer for the other thread to use the 2 FMACs at the same time, or can one thread use the 2 FMACs if the other one is currently not sending FPU instructions? "if one thread is purely integer, the other can use all of the FP execution resources to itself." sounds like the first, but that would (1) waste FPU resources, (2) give problems with threads switching cores. (How does the thread that was not switched know it can now use all of the FPU resources?)

My presumption is that AMD chose the latter and that if 2 (FMAC) instructions of different 'cores' reach the FPU (at that point in time) they will be executed using an FMAC each and if 2 instructions of one core reach the FPU without the other core sending any (at that point in time) they will be executed in parallel using both FMACs.

If my presumption is correct AMD decided not to HyperThread their ALUs but did HyperThread their FPU.

Kiijibari - Monday, November 30, 2009 - link

You do not have to switch anything. The shared FPU has ONE common scheduler. Both threads can issue Ops into the scheduler queue. If there are no FPU Ops from the first thread then - of course - the second thread has the power of the whole FPU.

Very simple ... it's like a chat room. Several people type in messages and you can see it serialized in the chat window (that would be equivalent to the queue).

You will read the messages one after another, according to their posted time / when they were issued. It is the same for the Bulldozer FPU.

You do not need to switch from one chat member to another to read their messages. Neither does the FPU have to switch ;-)

kobblestown - Monday, November 30, 2009 - link

I hope AMD regain their common sense and use the term "core" in a more conventional sense. According to their definition a quad core will only have 2 FP pipelines. What I see in a quad core Bulldozer is a dual core with 2 Int and 1 FP pipeline each. I wish them the very best in their effort to regain the performance crown but abusing existing terminology will not help in that.

Zool - Monday, November 30, 2009 - link

OS, drivers, API layers trashing the CPU constantly are usually integer loads. For average math in code the current FPUs are fast enough. Things that really need parallel FP performance (multimedia things, graphics) are using SIMD SSE units and those things should run much faster on GPUs anyway. For 5% extra die area the extra integer pipeline rocks.

fitten - Tuesday, December 1, 2009 - link

Except it isn't 5% additional die area.