The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST. Posted in: GPUs

The Card They Beg You to Overclock

As AMD equipped the 5970 with a fully functional Cypress core, one particularly binned for its excellent performance, it’s a shame the 5970 is only clocked at 725MHz core, right? AMD agrees, and has equipped and will be promoting the 5970 in a manner unlike any previous AMD video card.

Officially, AMD and its vendors can only sell a card that consumes up to 300W of power. That’s all the ATX spec allows for; anything else would mean they would be selling a non-compliant card. AMD would love to sell a more powerful card, but between breaking the spec and the prospect of running off users who don’t have an appropriate power supply (more on this later), they can’t.

But there’s nothing in the rulebook about building a more powerful card, and simply selling it at a low enough speed that it’s not breaking the spec. This is what AMD has done.

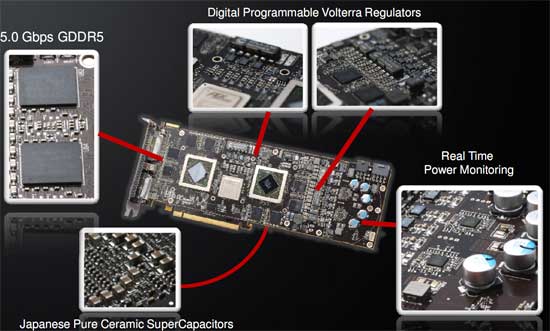

As a 300W TDP card, the 5970 is entirely overbuilt. The vapor chamber cooling system is built to dissipate 400W, and the card is equipped entirely with high-end electronics components, including solid caps and high-end VRMs.

Make no mistake: this card was designed to be a single-card 5870CF solution; AMD just can’t sell it like that. In our discussions with them they nearly (as much as Legal would let them) promised that every card will be able to hit 850MHz core (after all, these chips are binned to be better than a 5870), and memory speeds were nearly as optimistic, although we were given the impression that AMD is a little more concerned about GDDR5 memory bus issues at 5870 speeds.

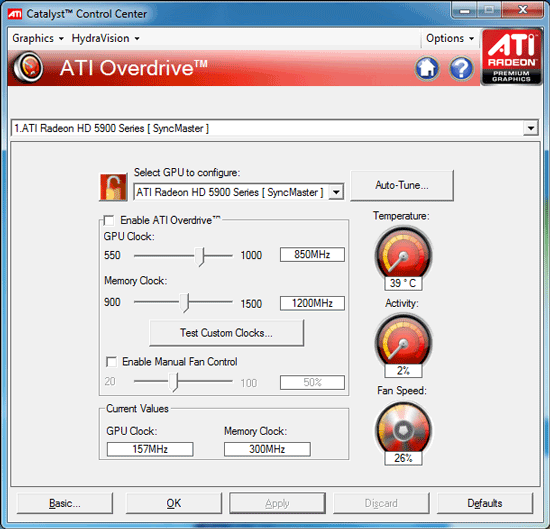

So with a card that is a pair of 5870s in everything except the shipping specifications, AMD has gone ahead and left it up to the user to put 2 + 2 together, and to bring the card to its full potential. The card ships with a much higher Overdrive cap than AMD’s other cards; instead of 10-20%, here the caps are 1GHz for the core and 1.5GHz for the memory, roughly a 38% and 50% cap respectively (in comparison, on the 5850, the caps were set below the 5870’s stock speeds). The card effectively has unlimited overclocking headroom within Overdrive; we doubt that any 5970 is going to hit those speeds with air cooling.
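To make the cap percentages concrete, here is a minimal Python sketch of the arithmetic. The 725MHz stock core clock and both caps come from the text above; the 1000MHz stock memory clock is an assumption (it is what a 50% cap at 1.5GHz implies), and the function name is purely illustrative.

```python
def overdrive_headroom(stock_mhz: float, cap_mhz: float) -> float:
    """Return how far a clock cap sits above the stock clock, in percent."""
    return (cap_mhz - stock_mhz) / stock_mhz * 100.0


# Core: 725MHz stock and 1GHz cap, both from the article.
core_headroom = overdrive_headroom(725, 1000)

# Memory: 1.5GHz cap from the article; the 1000MHz stock clock is assumed.
memory_headroom = overdrive_headroom(1000, 1500)

# For comparison, the jump needed to reach the 5870's 850MHz core clock.
target_5870_core = overdrive_headroom(725, 850)

print(f"core cap:         +{core_headroom:.1f}%")
print(f"memory cap:       +{memory_headroom:.1f}%")
print(f"5870 core target: +{target_5870_core:.1f}%")
```

The 5870 core target lands at a bit over 17%, well inside the cap, which is why the cap itself is not the limiting factor here.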

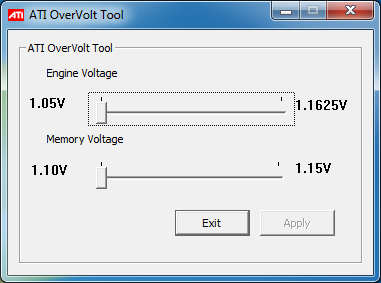

One weakness of Overdrive is that it doesn’t let you tweak voltages, which is a problem since AMD has to ship this card at lower voltages in order to meet the 294W TDP. In order to rectify that, AMD will be supplying vendors with a voltage tweaking tool specifically for the 5970, which will then be customized and distributed by vendors to their 5970 users.

Normally any kind of voltage tweaking on a video card makes us nervous due to the lack of guidance; a single GPU doesn’t ship at a wide range of voltages, after all. For overvolting the 5970, AMD has made matters quite simple: you only get one choice. The utility we’re using offers two voltages for the core and two for the memory, which are the shipping voltages and the voltages the 5870 runs at. So you can run your 5970 at 1.05V core or 1.165V core, but nothing higher and nothing in between. It makes matters simple, and locks out the ability to supply the core with more voltage than it can handle. We haven’t seen any of the vendor-customized versions of the Overvolt utility, but we’d expect all of them to have the same cap, if not the same two-setting limit.

All of this comes at a cost, however: power. Cranking up the voltage in particular will drive the power draw of the card way up, and this is the point where the card ceases to meet the PCIe specification. If you want to overclock this card, you’re going to need not just a strong power supply that can deliver its rated wattage, but one that can overdeliver on the rails attached to the PCIe power plugs.
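As a rough illustration of why voltage matters so much, the sketch below applies the common first-order approximation that dynamic power scales with voltage squared times frequency. This model is an assumption on our part, not something AMD supplied; it ignores leakage, memory and VRM losses, so treat the result as a ballpark for the GPU core only. The voltages and clocks are the ones quoted in this article.

```python
def dynamic_power_ratio(v_old: float, v_new: float,
                        f_old: float, f_new: float) -> float:
    """Estimate the relative change in dynamic power, assuming P ~ V^2 * f."""
    return (v_new / v_old) ** 2 * (f_new / f_old)


# 5970 shipping settings vs. 5870 settings (core voltage in V, core clock in MHz).
ratio = dynamic_power_ratio(v_old=1.05, v_new=1.165, f_old=725, f_new=850)

print(f"estimated core dynamic power: {ratio:.2f}x stock")
```

Under that assumption the core's dynamic power rises by something in the neighborhood of 40%, with the voltage bump contributing more than the clock bump.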

For overclocked operation, AMD is recommending a 750W power supply capable of delivering at least 20A on the rail the 8-pin plug is fed from, and another 15A on the rail the 6-pin plug is fed from. There are a number of power supplies that can do this, but you need to pay very close attention to what your power supply can do. Frankly we’re just waiting for a sob story where this card cooks a power supply when overvolted. Overclocking the 5970 will bring the power draw out of spec, so it’s imperative you make sure you have a power supply that can handle it.
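To put those amperage figures in perspective, here is a small Python sketch that converts them to watts on a 12V rail and sets them next to the standard in-spec limits for each power source (75W from the slot, 75W from a 6-pin plug, 150W from an 8-pin plug). The variable names are illustrative only, and the recommended rail capacity is headroom AMD asks for, not a measurement of what the card actually draws.

```python
RAIL_VOLTS = 12.0

# In-spec power limits per source, in watts.
SPEC_WATTS = {"slot": 75, "6-pin": 75, "8-pin": 150}

# AMD's recommended rail capacity for overclocked operation, in amps.
RECOMMENDED_AMPS = {"8-pin": 20, "6-pin": 15}


def rail_watts(amps: float, volts: float = RAIL_VOLTS) -> float:
    """Convert a rail's current rating to watts."""
    return amps * volts


spec_total = sum(SPEC_WATTS.values())
recommended_watts = {plug: rail_watts(amps)
                     for plug, amps in RECOMMENDED_AMPS.items()}

print(f"in-spec total: {spec_total}W")
for plug, watts in recommended_watts.items():
    print(f"{plug}: spec {SPEC_WATTS[plug]}W, "
          f"recommended rail capacity {watts:.0f}W")
```

The recommended capacity on each plug is well above that plug's in-spec limit, which is the sense in which the power supply has to "overdeliver".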

Overall the whole issue leaves us with an odd taste in our mouths. Clearly AMD would have rewritten the ATX spec to allow for more power if it were that simple, and we don’t believe anyone really wants to be selling a card that runs out of spec like this. Both AMD and NVIDIA are going to have to cope with the fact that power draw has been increasing on their cards over time, so this isn’t going to be the last over-300W card we see. I would not be surprised if we saw a newer revision of the ATX spec that allowed for more power for video cards – if you can cool 400W, then that’s where the new maximum is going to be for luxury video cards like the 5970.

Last, but certainly not least, there’s the matter of real-world testing. Although AMD told us that the 5970 should be able to hit 5870 clockspeeds, we didn’t have the kind of luck we were expecting. We have two 5970s: one for myself, and one for Anand for Eyefinity and power/noise/heat testing. My 5970 hit 850MHz/1200MHz once overvolted (it had very little headroom without it), but the performance was sporadic. The VRM overcurrent protection mechanism started kicking in and momentarily throttling the card down to 550MHz/1000MHz, and not just in FurMark/OCCT. Running a real application (the Distributed.net RC5-72 Stream client) ultimately resulted in the same thing. With the core overvolted, our card kept throttling on FurMark all the way down to 730MHz. While the card is stable in terms of not crashing, our verdict is that our card is not capable of performing at 5870 clockspeeds.

We’ve attempted to isolate the cause of this, and we feel we can rule out temperature, since feeding the card cold morning air had no effect. This leaves us with power. The power supply we use is a Corsair 850TX, which has a single 12V rail rated for 70A. We do not believe that the issue is the power supply, but we don’t have another unit on hand to test with, so we cannot eliminate it. Our best guess is that, in spite of their high quality, the VRMs on this card simply aren’t up to the task of powering it at 5870 speeds and voltages.

We’ve gone ahead and done our testing at these speeds anyhow (since overcurrent protection doesn’t cause any quality issues). However, it’s likely that these results are held back somewhat by throttling, and that a card that can avoid throttling would perform slightly better. We're going to be retesting this card in the morning with some late suggestions from AMD (mainly forcing the fan to 100%) to see if this changes things, but we are fairly confident right now that it's not heat related.

As for Anand's card, his fared even worse. His card locked up his rig when trying to run OCCT at 5870 speeds. VRM throttling is one thing, but crashing is another; even if it's OCCT, it shouldn't be happening. We've written his card off as being unstable at 5870 speeds, which makes us 0-for-2 in chasing the 5870CF. Reality is currently in conflict with AMD's promises.

Note: We have since published an addendum blog covering VRM temperatures, the culprit for our throttling issues.

114 Comments

SJD - Wednesday, November 18, 2009

Thanks Anand, that kind of explains it, but I'm still confused about the whole thing. If your third monitor supported mini-DP then you wouldn't need an active adapter, right? Why is this, when mini-DP and regular DP are the 'same' apart from the actual plug size? I thought the whole timing issue was only relevant when wanting a third 'DVI' (/HDMI) output from the card.

Simon

CrystalBay - Wednesday, November 18, 2009

WTH is really up at TWSC?

Jacerie - Wednesday, November 18, 2009

All the single game tests are great and all, but once I would love to see AT run a series of video card tests where multiple instances of games like EVE Online are running. While single instance tests are great for the FPS crowd, all us crazy high-end MMO players need some love too.

Makaveli - Wednesday, November 18, 2009

Jacerie, the problem with benching MMOs, and why you don't see more of them, is all the other factors that come into play. You now have to deal with server latency, and you also have no control over how many players are on the server at any given time when running benchmarks. There are just too many variables that would keep the benchmarks from being repeatable and valid for comparison purposes!

mesiah - Thursday, November 19, 2009

I think more what he is interested in is how well the card can render multiple instances of the game running at once. This could easily be done with a private server or even a demo written with the game engine. It would not be real world data, but it would give an idea of performance scaling when multiple instances of a game are running. Myself being an occasional "Dual boxer" I wouldn't mind seeing the data myself.

Jacerie - Thursday, November 19, 2009

That's exactly what I was trying to get at. It's not uncommon for me to be running at least two instances of EVE with an entire assortment of other apps in the background. My current 3870X2 does the job just fine, but with 7 out and DX11 around the corner I'd like to know how much money I'm going to need to stash away to keep the same level of usability I have now with the newer cards.

Zool - Wednesday, November 18, 2009

It's so fast only because 95% of the games are DX9 Xbox ports. Crysis has still been the most demanding game out there for quite a while (it needs to be added that it has a very lazy engine). In Age of Conan the difference between DX9 and DX10 is more than half the fps (with plenty of those effects on screen, even down to 1/3). Those advanced shader effects that they are showing in demos are actually much more demanding on the GPU than the DX9 shaders. It's just the thing they don't mention. It will be the same with DX11. A full DX11 game with all those fancy shaders will be on the level of Crysis.

crazzyeddie - Wednesday, November 18, 2009

... after their first 40nm test chips came back as being less impressive than **there** 55nm and 65nm test chips were.

silverblue - Wednesday, November 18, 2009

Hehe, I saw that one too.

frozentundra123456 - Wednesday, November 18, 2009

Unfortunately, since playing MW2, my question is: are there enough games that are sufficiently superior on the PC to justify the initial expense and power usage of this card? Maybe that's where Eyefinity for AMD and PhysX for nVidia come in: they at least differentiate the PC experience from the console. I hate to say it, but to me there just do not seem to be enough games optimized for the PC to justify the price and power usage of this card, that is unless one has money to burn.