NVIDIA's Bumpy Ride: A Q4 2009 Update

by Anand Lal Shimpi on October 14, 2009 12:00 AM EST. Posted in: GPUs

Blhaflhvfa.

There’s a lot to talk about with regards to NVIDIA and no time for a long intro, so let’s get right to it.

At the end of our Radeon HD 5850 Review we included this update:

“Update: We went window shopping again this afternoon to see if there were any GTX 285 price changes. There weren't. In fact GTX 285 supply seems pretty low; MWave, ZipZoomFly, and Newegg only have a few models in stock. We asked NVIDIA about this, but all they had to say was "demand remains strong". Given the timing, we're still suspicious that something may be afoot.”

Less than a week later and there were stories everywhere about NVIDIA’s GT200b shortages. Fudo said that NVIDIA was unwilling to drop prices low enough to make the cards competitive. Charlie said that NVIDIA was going to abandon the high end and upper mid range graphics card markets completely.

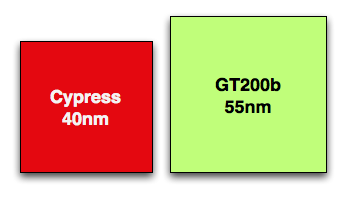

Let’s look at what we do know. GT200b has around 1.4 billion transistors and is made at TSMC on a 55nm process. Wikipedia lists the die at 470mm^2, which is roughly 80% the size of the original 65nm GT200 die. In either case it’s a lot bigger and still more expensive than Cypress’ 334mm^2 40nm die.

Cypress vs. GT200b die sizes to scale

NVIDIA could get into a price war with AMD, but given that both companies make their chips at the same place, and NVIDIA’s costs are higher - it’s not a war that makes sense to fight.
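To put rough numbers on that cost gap, here is a minimal back-of-the-envelope sketch using only the two die areas quoted above. The 300mm wafer diameter and the dies-per-wafer approximation are assumptions of this sketch, not figures from NVIDIA, AMD or TSMC, and the estimate ignores yield, scribe lines and die shape.

```python
import math

# Die areas quoted in the article (mm^2).
GT200B_AREA_MM2 = 470.0
CYPRESS_AREA_MM2 = 334.0

# Assumption (not from the article): a standard 300 mm wafer.
WAFER_DIAMETER_MM = 300.0


def gross_dies_per_wafer(die_area_mm2: float,
                         wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """Common first-order estimate of candidate dies per wafer.

    Wafer area divided by die area, minus an edge-loss term for the
    partial dies around the circumference. Yield, scribe lines and
    die aspect ratio are all ignored.
    """
    radius = wafer_diameter_mm / 2.0
    whole_area_term = math.pi * radius ** 2 / die_area_mm2
    edge_loss_term = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area_mm2)
    return int(whole_area_term - edge_loss_term)


area_ratio = GT200B_AREA_MM2 / CYPRESS_AREA_MM2
gt200b_dies = gross_dies_per_wafer(GT200B_AREA_MM2)
cypress_dies = gross_dies_per_wafer(CYPRESS_AREA_MM2)

print(f"GT200b is {area_ratio:.2f}x the area of Cypress")
print(f"Gross dies per wafer: GT200b ~{gt200b_dies}, Cypress ~{cypress_dies}")
```

Keep in mind that a 55nm wafer and a 40nm wafer don't cost the same, and 40nm yields were a known problem at the time, so dies per wafer is only one input into cost per chip. The sketch just illustrates how much more silicon each GT200b consumes.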

NVIDIA told me two things. One, that they have shared with some OEMs that they will no longer be making GT200b based products. That’s the GTX 260 all the way up to the GTX 285. The EOL (end of life) notices went out recently and they request that the OEMs submit their allocation requests ASAP; otherwise they risk not getting any cards.

The second was that despite the EOL notices, end users should be able to purchase GeForce GTX 260, 275 and 285 cards all the way up through February of next year.

If you look carefully, neither of these statements directly supports or refutes the two articles above. NVIDIA is very clever.

NVIDIA’s explanation to me was that current GPU supplies were decided on months ago, and in light of the economy, the number of chips NVIDIA ordered from TSMC was low. Demand ended up being stronger than expected and thus you can expect supplies to be tight in the remaining months of the year and into 2010.

Board vendors have been telling us that they can’t get allocations from NVIDIA. Some are even wondering whether it makes sense to build more GTX cards for the end of this year.

If you want my opinion, it goes something like this. While RV770 caught NVIDIA off guard, Cypress did not. AMD used the extra area (and then some) allowed by the move to 40nm to double RV770, not an unpredictable move. NVIDIA knew they were going to be late with Fermi, knew how competitive Cypress would be, and made a conscious decision to cut back supply months ago rather than enter a price war with AMD.

While NVIDIA won’t publicly admit defeat, AMD clearly won this round. Obviously it makes sense to ramp down the old product in expectation of Fermi, but I don’t see Fermi with any real availability this year. We may see a launch with performance data in 2009, but I’d expect availability in 2010.

While NVIDIA just launched its first 40nm DX10.1 parts, AMD just launched $120 DX11 cards

Regardless of how you want to phrase it, there will be lower than normal supplies of GT200 cards in the market this quarter. With higher costs than AMD per card and better performance from AMD’s DX11 parts, would you expect things to be any different?

Things Get Better Next Year

NVIDIA launched GT200 on too old of a process (65nm) and they were thus too late to move to 55nm. Bumpgate happened. Then we had the issues with 40nm at TSMC and Fermi’s delays. In short, it hasn’t been the best 12 months for NVIDIA. Next year, there’s reason to be optimistic though.

When Fermi does launch, everything from that point should theoretically be smooth sailing. There aren’t any process transitions in 2010; it’s all about execution at that point and how quickly NVIDIA can get Fermi derivatives out the door. AMD will have virtually its entire product stack out by the time NVIDIA ships Fermi in quantity, but NVIDIA should have competitive product out in 2010. AMD wins the first half of the DX11 race; the second half will be a bit more challenging.

If anything, NVIDIA has proved to be a resilient company. Other than Intel, I don’t know of any company that could’ve recovered from NV30. The real question is how strong will Fermi 2 be? Stumble twice and you’re shaken, do it a third time and you’re likely to fall.

106 Comments

dan101rayzor - Wednesday, October 14, 2009

anyone know when the fermi is coming out?

Zingam - Wednesday, October 14, 2009

Sometime in the future. It appears to me that it will be a great general purpose processor but if it will be great graphics processor is not quite clear.

If you want a great GPU for graphics you won't be wrong with ATI in the near future. If you want to do scientific computing probably Fermi will be the king next year.

And then comes Larrabee and thing would get quite interesting.

I wonder who will release a great, next generation, mobile GPU first!

dragunover - Wednesday, October 14, 2009

I believe the 5770 could easily be transplanted into laptops with a different cooling solution and lower clocks and called the 5870M.

dan101rayzor - Wednesday, October 14, 2009

so for gaming an ATI 5800 is the best bet? Fermi will it be very expensive? Fermi not for gaming?

Scali - Wednesday, October 14, 2009

We can't say yet.

We don't know the prices of Fermi parts, their gaming performance, or when they'll be released.

Which also means we don't know how 'good' the 5800 series is. All we know is that it's the best part on the market today.

swaaye - Monday, October 19, 2009

It's always been rather hard to pronounce a part as "best". There's always an unknown future part in the works. And, even good parts sometimes have downsides. For ex, I can see a reason or two for going NV30 back in the day instead of R300 (namely OpenGL apps/games).

I think it's safe to say that you can't go wrong with 58x0 right now. It doesn't have any major issues, AFAIK, the price is decent, and the competition is nowhere in sight.

MojaMonkey - Wednesday, October 14, 2009

I have a simple brand preference for nVidia and I use Badaboom so I'd buy their products even if price performance doesn't quite stack up. However there are limits in performance difference I'm willing to accept.

I really hope the gt300 is a great competitive product as it sounds interesting.

One thought, given that PC gaming is now on the back burner compared to consoles maybe nVidia is being smart branching out into mobiles and GPU computing? Both of these could be real growth areas as PC gaming starts to fade.

neomatrix724 - Wednesday, October 14, 2009

People have been saying that PC gaming has been dying for years. Most of the GPU developments and technologies have been created for the PC first and then downstream improvements are what the console gets.

I don't foresee the PC disappearing as a gaming medium for a while.

MonkeyPaw - Wednesday, October 14, 2009

I don't really see games disappearing from PCs either. However, consoles are very popular, and are a much more guaranteed market, since developers know console sales numbers, and that essentially every console owner is a gamer. Consequently, developers will target a lucrative console market (at $40-60 per game). This means their games have to run on the consoles well enough (speed and graphics quality) to be appealing. That doesn't necessarily kill PC gaming, but it does slow the progression (and the need for expensive hardware). This, along with the fact that many gamers don't likely play on 30" LCDs, means high-end graphics card sales are directed at a very limited audience. When I gamed on my PC, I had a 4670, since this would play most games just fine on a 19" LCD. I would never need a 4870, much less a 5870.

So no, PC gaming isn't dead, but it's not the target market anymore.

atlmann10 - Thursday, October 15, 2009

The point made earlier that PC Gaming has been dying for decades, as an incorrect statement is true. I think we will see more personally, but largely in this nvidia has room to gain, that is in things other than gigantic PC graphics cards though.

The online gaming thing is much better than even the console market. Why you may ask? That is because of revenue stream, and delivery. With an online pc game you can update it weekly thereby changing anything you want to.

Yes, console is concentrated to a point because they know everyone will go out and buy a 45 dollar game. But the subscription thing is the killer. The gamer who plays an online game subscribes. Therefore they buy the 45 dollar game and give you another 15 at the end of the month, and then when you have an upgrade you have a direct path to them as a distributor.

So then that customer that bought your 45 dollar game, and pays you 15 a month for access, logs onto their game, and there is a new update for 35 dollars, do you want it? That user clicks yes and proceeds to download it. What have you done as a company? You have eliminated advertising completely for existing customers, you have also eliminated packaging, and distribution.

So then what did you have to do to produce that upgrade? We didn't do anything but development. So we cut our production and cost of operations in half. Plus they bought our new game or upgrade for the same price, even though it cost us 60 percent less to make, and pay us an automatic 15 a month as long as they play it.