NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the effort required to build any multi-billion transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different than a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one op per clock per core philosophy comes from a basic desire to execute single threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

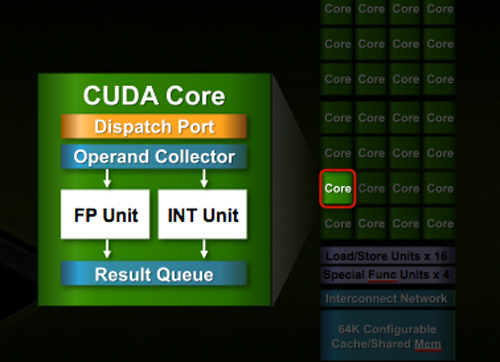

Despite the similarities, large parts of the architecture have evolved. The redesign happened as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect.
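To make the cost of that emulation concrete, here is a minimal sketch of how a 32-bit multiply can be built out of multiplies whose operands are limited to 24 bits. The `mul24` helper is a hypothetical model of such a multiplier, and the 16-bit split is one textbook decomposition, not necessarily the instruction sequence NVIDIA's compiler actually emitted.

```python
MASK24 = 0xFFFFFF
MASK32 = 0xFFFFFFFF

def mul24(x, y):
    """Hypothetical model of a 24-bit multiplier: only the low 24 bits of
    each operand take part, and the low 32 bits of the product are kept."""
    return ((x & MASK24) * (y & MASK24)) & MASK32

def mul32_emulated(a, b):
    """Low 32 bits of a * b, using only 24-bit multiplies, shifts and adds."""
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    low = mul24(a_lo, b_lo)                     # 16 x 16 bits fits easily
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & MASK32
    # The a_hi * b_hi term is shifted left by 32 bits, so it never reaches
    # the low 32 bits of the result and can be dropped.
    return (low + (cross << 16)) & MASK32

a, b = 0x12345678, 0x9ABCDEF0
print(hex(mul32_emulated(a, b)), hex((a * b) & MASK32))
```

In this model one 32-bit multiply turns into three narrow multiplies plus shifts and adds, which is the kind of overhead a native 32-bit integer multiplier removes.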

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of the 32-bit FP rate; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.
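Even without clock speeds, the ratios alone allow a rough per-clock comparison. The sketch below applies the 1/2 and 1/8 rates to the core counts quoted elsewhere in this article (512 for Fermi, 240 for GT200) and assumes one FP32 operation per core per clock. The `clock_ghz` argument and the convention of counting an FMA as two floating point operations are assumptions for illustration, not disclosed figures.

```python
from fractions import Fraction

def fp64_ops_per_clock(cores, fp64_to_fp32_ratio):
    """FP64 operations issued per clock across the whole chip."""
    return cores * fp64_to_fp32_ratio

fermi = fp64_ops_per_clock(512, Fraction(1, 2))
gt200 = fp64_ops_per_clock(240, Fraction(1, 8))
speedup_at_equal_clock = fermi / gt200

def peak_fp64_gflops(cores, ratio, clock_ghz, flops_per_op=2):
    """Peak FP64 GFLOPS for a given (hypothetical) shader clock in GHz."""
    return float(cores * ratio * flops_per_op * clock_ghz)

print(fermi, gt200, float(speedup_at_equal_clock))
```

At equal clocks this works out to 256 versus 30 FP64 operations per clock. Real peak numbers will scale with whatever clocks NVIDIA eventually ships.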

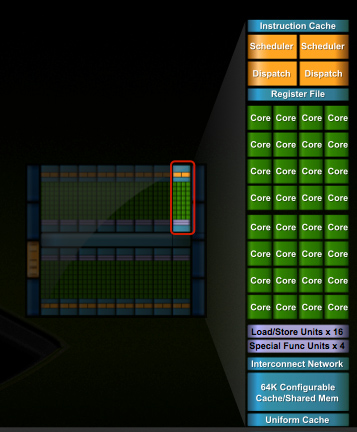

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

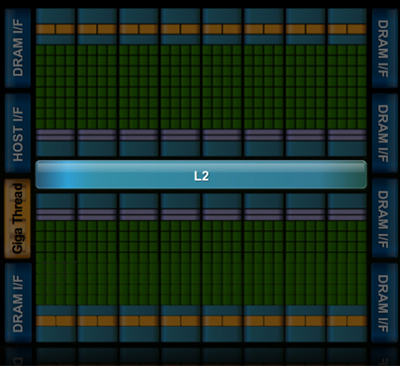

The high end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores. 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

|  | Fermi | GT200 | G80 |
| --- | --- | --- | --- |
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |
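The core counts in the table follow directly from the SM organization. As a quick check, the sketch below derives the SM counts for GT200 and G80 by dividing the table's core counts by eight cores per SM; those derived counts are my arithmetic rather than numbers quoted in this article.

```python
# (total cores, cores per SM) taken from the text and the table above
chips = {
    "Fermi": (512, 32),
    "GT200": (240, 8),
    "G80": (128, 8),
}

# Number of SMs implied by each configuration
sm_counts = {name: cores // per_sm for name, (cores, per_sm) in chips.items()}

# Fermi's core count relative to GT200 (the GeForce GTX 285)
core_ratio = chips["Fermi"][0] / chips["GT200"][0]

print(sm_counts, round(core_ratio, 2))
```

Fermi ends up with fewer SMs than GT200 but four times as many cores in each one, which is where the "more than twice the core count" figure comes from.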

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote peak single and dual-issue arithmetic rates any longer for NVIDIA GPUs.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.
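A quick way to see what that means for the balance inside an SM is to compare cores per SFU pipeline. The sketch below uses only the per-SM figures given above; the dictionary layout is just for illustration.

```python
gt200_sm = {"cores": 8, "sfu_pipelines": 2}
fermi_sm = {"cores": 32, "sfu_pipelines": 4}

core_growth = fermi_sm["cores"] / gt200_sm["cores"]
sfu_growth = fermi_sm["sfu_pipelines"] / gt200_sm["sfu_pipelines"]

def cores_per_sfu_pipeline(sm):
    """How many cores share each SFU pipeline within one SM."""
    return sm["cores"] / sm["sfu_pipelines"]

print(core_growth, sfu_growth)
print(cores_per_sfu_pipeline(gt200_sm), cores_per_sfu_pipeline(fermi_sm))
```

Each SFU pipeline now serves twice as many cores as before, so code that leans heavily on transcendental math may not scale as well as code dominated by ordinary arithmetic.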

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software-managed memory. The application would have to knowingly move data in and out of it. The benefit here is predictability: you always know if something is in shared memory because you put it there. The downside is that it doesn’t work so well if the application isn’t very predictable.

Branch heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.
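The difference between software-managed memory and a real cache can be shown with a toy model. The sketch below is not how either GPU works internally: it assumes a fully associative LRU cache and a scratchpad whose contents are fixed up front, and the address traces are made up for illustration.

```python
from collections import OrderedDict

def scratchpad_hit_rate(trace, preloaded):
    """Software-managed memory: only what the program staged is resident."""
    resident = set(preloaded)
    return sum(addr in resident for addr in trace) / len(trace)

def lru_hit_rate(trace, capacity):
    """Hardware-managed cache with least-recently-used replacement."""
    cache = OrderedDict()
    hits = 0
    for addr in trace:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)        # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used
            cache[addr] = True
    return hits / len(trace)

# Predictable kernel: the program knows it will loop over addresses 0..7.
predictable = list(range(8)) * 10
# Data-dependent kernel: the hot set (100..107) is only known at run time.
unpredictable = [100 + i for i in range(8)] * 10

print(scratchpad_hit_rate(predictable, range(8)))    # staged correctly
print(scratchpad_hit_rate(unpredictable, range(8)))  # staged the wrong data
print(lru_hit_rate(unpredictable, capacity=8))       # cache adapts on its own
```

When the access pattern is known ahead of time, the scratchpad never misses. When it isn't, the cache pays a handful of cold misses and then keeps the hot data resident without any help from the application.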

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
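The two splits can be modeled as a simple selection problem. The sketch below is only an illustration of the trade-off: `choose_partition` and its `prefer_l1` flag are hypothetical names, not part of any NVIDIA API.

```python
KB = 1024

# (shared memory bytes, L1 cache bytes) for the two splits described above
PARTITIONS = ((16 * KB, 48 * KB), (48 * KB, 16 * KB))

def choose_partition(shared_bytes_needed, prefer_l1=True):
    """Pick a split that satisfies the kernel's shared memory requirement."""
    candidates = [p for p in PARTITIONS if p[0] >= shared_bytes_needed]
    if not candidates:
        raise ValueError("kernel needs more shared memory than an SM offers")
    if prefer_l1:
        return max(candidates, key=lambda p: p[1])  # largest L1 that fits
    return max(candidates, key=lambda p: p[0])      # largest shared memory

# A GT200-era kernel that uses the full 16KB still fits, with 48KB of L1.
print(choose_partition(16 * KB))
# A kernel that wants 32KB of shared memory forces the 48KB/16KB split.
print(choose_partition(32 * KB))
```

The point of the 16KB floor is visible in the first call: existing GT200 code keeps working and gets a 48KB L1 cache for free.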

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op; Fermi is 5x to 20x faster here than GT200.

415 Comments


SiliconDoc - Friday, October 2, 2009 - link

Gee, you certainly are not a computer technician. That you even POSIT that games aren't played on INTEL GPU's is an astounding whack.

Nvidia and ati have laptop graphics, as does intel, and although I don't have the numbers for you on slot cards vs integrated, your whole idea is another absolute FUD and hogwash.

INTEL slotted graphics are still around playing games, bubbba.

You sure don't know much, and your point is 100% invalid, and contains the problem of YOUR TINY MIND.

Let me remind you Tamalero, you shrieked and wailed against nvidia for INTEGRATED GRAPHICS of theirs on a laptop !

Wow, you're a BOZO, again.

sandwiches - Friday, October 2, 2009 - link

You're a sad, pathetic troll, Silicondoc. I honestly do feel contempt for you. Your life is obviously devoid of any real substance.

shotage - Thursday, October 1, 2009 - link

The performance and awesomeness of a company compared to another is biased. Sure Nvidia probably has historically done better than ATI. I hope that ATI as a company does much better this year and next, so that there is greater competition. Competition which will consequently mean smaller profit margins, but better deals for us consumers!

At the end of the day, who cares who's winning? Shouldn't we all be hoping that each does well? Shouldn't we all hope that there will always be several major graphics providers? Do we really want a monopoly on GPU's? How would this affect the price on a performance card?

I think you should be banned SiliconDoc. You're adding no real value here. Leave.

I have a GTX260 btw. So I'm not speaking from bias. Wonder what kind of card you have? lol...

SiliconDoc - Friday, October 2, 2009 - link

What card you have or don't have doesn't matter one whit, but what you claim DOES. What you SPEW does ! and when you lie expect any card to save you.

If liars were banned, you'd all be gone, and I'd be left. ( that does not include of course those who aren't trolling jerkbots running around to my every post wailing and whining and saying ABSOLUTELY NOTHING )

-

" Sure Nvidia probably has historically done better than ATI."

since you 1. obviously haven't got a clue whether what you said is true or not 2. Why would you even say it, with your stupidity retaining, lack of knowledge, or lying caveat "probably" ?

If you're so ignorant, please shut it on the matter! Do you prefer to open your big fat yap and prove how knowledgeless you are ? I guess you do.

If you don't know, why are you even opening your piehole about it ?

It certainly doesn't do anything for me if you aren't correct and you don't know it ! I don't WANT YOUR LIES, nor your pie open when you haven't a clue. I don't want your wishy washy CRAP.

Ok ?

Got it ?

If you open the flapper, make sure it gets it right.

-

If you actually are an enthusiast, why is it that the result is, you blather on in bland generalities, get large facts tossed in a fudging, sloppy, half baked inconclusive manner, and in the end, wind up being nothing less than the very red rooster you demand I believe you are not.

What a crappy outcome, really.

---

--

Frankly, you cannot accept me even telling the facts as they actually are, that is too much for your mushy, weak, flakey head, and when I do, you attribute some far out motive to it !

There's no motive other than GET IT RIGHT, YOU IDIOTS !

--

What do you claim, though ?

Why is it, you have such an aversion to FACTS ? WHY IS THAT ?

If I point out ati is not in fact on top, but last, and NVIDIA is almost double ati, (to use the "authors" comparison techniques but not separate companies for "internal comparisons" and make CERTAIN I exaggerate) - why are you so GD bent out of shape ?

I'll tell you why...

YOU FORBID IT.

I certainly don't understand your mindset, you'd much prefer some puss filled bag of slop you can quack out so "we can come to some generalization on our desires and feelings" about "the industry".

Go suck down your estrogen pills with your girlfriends.

---

I don't care what your feelings are, what flakey desire you have for continuing competition, because, you prefer LIES over the truth.

Instead of course, after you whining in some sissy crybaby pathetic wail for the PC cliché of continuing competition, you'll turn around and screech the competition I provide to your censored mindset is the worst kind you could possibly imagine to encounter ! Then you wail aloud "destroy it! get rid of it ! ban it ! "

LOL

You're one piece of filthy work, that's for sure.

---

So, you want me to squeal like an idiot like you did, that you want lower prices and competition, and the way to get that is to LIE about ati in the good, and DISS nvidia to the bad with even bigger lies ?

I see.. I see exactly !

So when I point out the big fat lying fibs for ati and against nvidia - you perceive it as a great threat to "your bottom line" pocketbook.

LOL

Well you know what - TOO BAD ! If the card cannot survive on FACTS AND THE TRUTH, then it deserves to die.

Or is honesty banned so you can fan up ati numbers with your lies, and therefore get your cheaper nvidia card ?

--

This is WHAT YOU PEOPLE WANT - enough lies for ati and against nvidia to keep the piece of red crappin ?

LOL yeah man, just like you jerks...

---

" At the end of the day, who cares who's winning? "

Take a look at that you flaked out JERKOFF, and apply it to this site for the YEARS you didn't have your INSANE GOURD focused on me.

Come on you piece of filth, take a look in the mirror !

It's ALL ABOUT WHOSE WINNING HERE.

THE WHOLE SITE IS BASED UPON YOU LITTLE PIECE OF CRAP !

---

And of course worse than that, after claiming you don't care whose winning, you go on to spread your hope that ati market share climbs, so you can suck down a cheapo card with continuing competition.

So what that says, is all YOU care about is your money. MONEY, your money.

"Quick ban the truth! jerkoffs pocketbook is threatened by posts on anandtech because this poster won't claim he wants equal market share !"

--

Dude, you are disgusting. You take fear and personal greed to a whole new level.

sandwiches - Friday, October 2, 2009 - link

For some laughs at this poor excuse for a 29-year-old man, check out these links:

Here, he was banned from driverheaven.com for his rants against ATI. He really is a truly rabid ATI hater with horribly sycophantic traits:

http://www.driverheaven.net/members/silicondoc.htm...

More rants by him about ATI and how great NVIDIA is:

http://www.maximumpc.com/user/silicondoc

http://forums.bit-tech.net/search.php?searchid=853...

Here's some political rant by Silicondoc. Is anyone surprised to learn he's also a rabid wingnut?

http://www.danielpipes.org/comments/118289

SiliconDoc - Friday, October 2, 2009 - link

Why that's great, let's see what you have for any rebuttal to this clone you claim is me:

" Even the gtx260 uses less power than the 4870.

Pretty simple math - 295 WINS.

Cuda, PhysX, large cool die size, better power management, game profiles out of the box, forced SLI, better overclocking, 65% of GPU-Z scores and marketshare, TWIMTBP, no CCC bloat, secondary PhysX card capable, SLI monitor control "

---

Anything there you can refute ? Even just one thing ?

I'm not sure what your complaint is if you can't.

That's the text that was there, so why didn't you read it or try to claim anything in it was wrong ?

Are you just a little sourpussed spasm boy red, or do you actually have a reason ANY of that text is incorrect ?

Anything at all there ? Are you an empty shell who copies the truth then whines like a punk idiot ? Come on, prove you're not that pathetic.

wifiwolf - Thursday, October 1, 2009 - link

less margins indeed. At a business point of view though that's good anyway, not because less margins is good for business (of course not) but for what it implies. It means factories can keep on maximum production - and that's very important. There's less profit for each sale but number of consumers is bigger in an exponential way. So not bad indeed... even for them - good for all.

Zingam - Thursday, October 1, 2009 - link

Fermi he has

silverblue - Thursday, October 1, 2009 - link

Doesn't matter mate, he'll still just accuse you of bias and brown-nosing ATI.

Jamahl - Thursday, October 1, 2009 - link

Silicondoc, go see a REAL doc please.