NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the effort required to build any multi-billion transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different than a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one op per clock per core philosophy comes from a basic desire to execute single threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

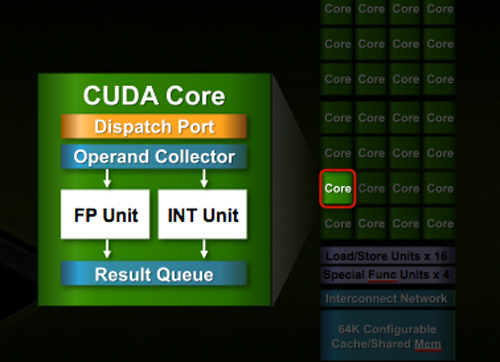

Despite the similarities, large parts of the architecture have evolved. The redesign happened as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect.
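To make those two points concrete, here is a minimal Python sketch. It is not NVIDIA's actual emulation sequence; the helper names `mul32_from_narrow` and `fused_multiply_add` are hypothetical. The first function builds the low 32 bits of a product out of narrower multiplies, which is the kind of extra work that hardware without a full 32-bit multiplier forces on you. The second models what a fused multiply-add guarantees (one rounding at the end) using exact rationals, and it uses Python's double precision floats rather than FP32, purely for convenience.

```python
from fractions import Fraction

MASK32 = 0xFFFFFFFF


def mul32_from_narrow(a: int, b: int) -> int:
    """Low 32 bits of a*b, using only multiplies with 16-bit operands."""
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    # a_hi * b_hi lands entirely above bit 31, so it never affects the result.
    cross = (a_hi * b_lo + a_lo * b_hi) & 0xFFFF
    return (a_lo * b_lo + (cross << 16)) & MASK32


def fused_multiply_add(a: float, b: float, c: float) -> float:
    """a*b + c evaluated exactly, then rounded once to a float."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


# Integer case: three narrow multiplies plus shifts and adds replace one native op.
x, y = 0x12345678, 0x9ABCDEF0
assert mul32_from_narrow(x, y) == (x * y) & MASK32

# Floating point case: rounding the product first throws away the answer.
a, b, c = 1.0 + 2.0**-27, 1.0 - 2.0**-27, -1.0
separate = a * b + c                  # product is rounded before the add
fused = fused_multiply_add(a, b, c)   # single rounding at the end
print(separate, fused)
```

With separate multiply and add, the tiny difference between `a * b` and 1 is rounded away before the addition ever sees it; the fused version keeps it.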

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of the 32-bit FP rate; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.
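Even without clocks, the ratios tell most of the story. The sketch below is back-of-the-envelope arithmetic only: it assumes the usual convention of counting one FMA per core per clock as two floating point operations, it uses the 512-core figure for the top Fermi configuration, and it plugs in a placeholder clock of 1 GHz so the results read as "per GHz of shader clock". None of these are disclosed Fermi specifications.

```python
def peak_gflops(cores: int, clock_ghz: float, flops_per_clock: int = 2) -> float:
    """Peak rate if every core retires one FMA (counted as 2 flops) per clock."""
    return cores * flops_per_clock * clock_ghz


PLACEHOLDER_CLOCK_GHZ = 1.0  # not a real Fermi clock; NVIDIA hasn't disclosed one

fp32 = peak_gflops(512, PLACEHOLDER_CLOCK_GHZ)
fp64_at_half = fp32 * 0.5       # Fermi's stated 1/2 ratio
fp64_at_eighth = fp32 * 0.125   # the older 1/8 ratio, applied to the same chip

print(fp32, fp64_at_half, fp64_at_eighth)
```

Whatever the final clock turns out to be, moving from a 1/8 ratio to a 1/2 ratio is a 4x gain in peak FP64 throughput relative to FP32 on the same hardware.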

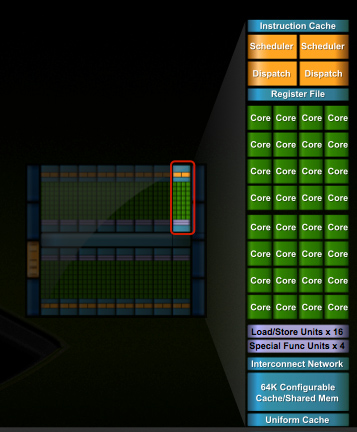

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

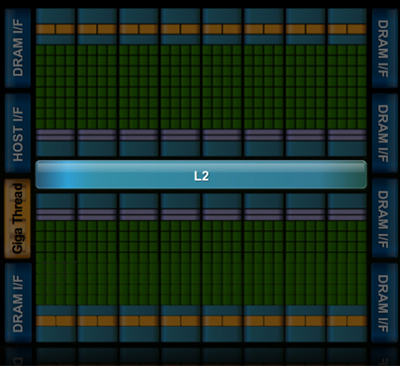

The high end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores. 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |
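The core counts in the table follow directly from the SM organization. As a quick sanity check, this sketch divides each chip's total core count by its cores per SM (8 for G80 and GT200, 32 for Fermi, as stated above) to recover the SM counts; the dictionary names are just illustrative.

```python
CORES_PER_SM = {"Fermi": 32, "GT200": 8, "G80": 8}
TOTAL_CORES = {"Fermi": 512, "GT200": 240, "G80": 128}

# SM count = total cores / cores per SM
sm_count = {gpu: TOTAL_CORES[gpu] // CORES_PER_SM[gpu] for gpu in TOTAL_CORES}

# How much bigger is Fermi than GT200 in raw core count?
core_ratio = TOTAL_CORES["Fermi"] / TOTAL_CORES["GT200"]

print(sm_count, round(core_ratio, 2))
```

That works out to 16 SMs for Fermi against 30 for GT200: fewer SMs, but a little over twice the cores.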

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
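Put as a ratio, that means each SFU pipeline now serves twice as many cores. A tiny sketch of the arithmetic, using only the per-SM figures quoted above (the function name is hypothetical):

```python
def cores_per_sfu_pipeline(cores_per_sm: int, sfu_pipelines: int) -> float:
    """How many general-purpose cores share each SFU pipeline within one SM."""
    return cores_per_sm / sfu_pipelines


gt200 = cores_per_sfu_pipeline(cores_per_sm=8, sfu_pipelines=2)
fermi = cores_per_sfu_pipeline(cores_per_sm=32, sfu_pipelines=4)

print(gt200, fermi)
```

So transcendental throughput per SM goes up, but it shrinks relative to general math throughput.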

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote both peak single-issue and dual-issue arithmetic rates any longer for NVIDIA GPUs.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus, we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software managed memory. The application would have to knowingly move data in and out of it. The benefit here is predictability: you always know if something is in shared memory because you put it there. The downside is that it doesn’t work so well if the application’s memory accesses aren’t very predictable.

Branch heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
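The two allowed splits can be modeled in a few lines. This is only an illustration of the trade-off described above, not NVIDIA's API or driver behavior: `choose_split` is a hypothetical helper, and its policy (take the largest L1 that still leaves enough shared memory) is my assumption about what a sensible default might look like.

```python
from typing import Tuple

# (shared_kb, l1_kb): the only two partitions of the 64KB block described above
VALID_SPLITS = ((16, 48), (48, 16))


def choose_split(shared_needed_kb: int) -> Tuple[int, int]:
    """Pick the split with the most L1 that still satisfies the shared memory need."""
    candidates = [split for split in VALID_SPLITS if split[0] >= shared_needed_kb]
    if not candidates:
        raise ValueError(f"{shared_needed_kb}KB of shared memory does not fit in one SM")
    return max(candidates, key=lambda split: split[1])


print(choose_split(16))  # a GT200-era kernel that needs 16KB of shared memory
print(choose_split(32))  # a kernel that wants more shared memory than GT200 offered
```

A kernel written for GT200's 16KB fits the first split and gets 48KB of L1 on top; anything needing more than 16KB of shared memory has to take the second split and live with a 16KB L1.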

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op; Fermi is 5 - 20x faster here than GT200.

415 Comments


Pirks - Monday, October 5, 2009 - link

No, Jarred, they are advancing towards 2560x1600 on every PC gamer's desk. Since they move towards that (used to be 800x600 everywhere, now it's more like 1280x1024 everywhere, in couple of years it'll be 1680x1050 everywhere and so on) they cannot be described as stagnant, hence your statement is BS, Jarred.

mejobloggs - Tuesday, October 6, 2009 - link

I think I'll agree with Jared on this one.

LCD tech isn't advancing enough to get decent high quality large screens at a decent price. 22" seems about the sweet spot which is usually 1680x1050

Pirks - Wednesday, October 7, 2009 - link

This sweet spot used to be 19" 1280x1024 a while ago, with 17" 1024x768 before that. In a couple of years sweet spot will move to 24" 1920x1200, and so on. Hence monitor resolution does progress, it does NOT stagnate, and you do listen to Jarred's fairy tales too much :P

JarredWalton - Friday, October 9, 2009 - link

What we have here is a failure to communicate. My point, which you are ignoring, is that maximum resolutions are "stagnating" in the sense that they are remaining static. It's not "BS" or a "fairy tale", unless you can provide detail that shows otherwise. I purchased a 24" LCD with a native 1920x1200 resolution six years ago, and then got a 30" 2560x1600 LCD two years later. Outside of ultra-expensive solutions, nothing is higher than 2560x1600 right now, is it?

1280x1024 was mainstream from about 7-11 years ago, and 1024x768 hasn't been the norm since around 1995 (unless you bought a crappy 14/15" LCD). We have not had a serious move to anything higher than 1920x1080 in the mainstream for a while now, but even then 1080p (or 1200p really) has been available for well over 15 years if you count the non-widescreen 1600x1200. I was running 1600x1200 back in 1995 on my 75 pound 21" CRT, for instance, and I know people that were using it in the early 90s. 2048x1536 and 2560x1600 are basically the next bump up from 1600x1200 and 1920x1200, and that's where we've stopped.

Now, Anand seems to want even higher resolutions; personally, I'd be happier if we first found a way to make those resolutions work well for every application (i.e. DPI that extends to everything, not just text). Vista was supposed to make that a lot better than XP, but really it's still a crap shoot. Some apps work well if you set your DPI to 120 instead of the default 96; others don't change at all.

Pirks - Friday, October 9, 2009 - link

I agree that maximum resolution has stagnated at 2560x1600, my point was that the average resolution of PC gamers is still moving from pretty low 1280x1024 towards this holy grail of 2560x1600 and who knows how many years will pass until every PC gamer will have such "stagnated" resolution on his/her desk.

So yeah, max resolution stagnated, but average resolution did not and will not.

I am as mad as hell - Friday, October 2, 2009 - link

Hi everyone,

first off, I am not mad as hell! I just registered this acct after being an Anandtech reader for 10+ years. That's right. It's also my #1 tech website. I read several others, but this WAS always my favorite.

I don't know what happened here lately, but it's becoming more and more of a circus in here.

I am going to make a few points suggestions:

1) In the old days reviews were reviews, these days there are a lot more PRE-views and picture shows and blog (chit chatting) entries.

2) In the old days a bunch of hardware was rounded up and compared to each other (mobos, memory, PSU's, etc..) I don't see that here much anymore. It's kind of worthless to me just to review one PSU or one motherboard at the time. Round em all up and then lets have a good ole fashioned shootout.

3) I miss the monthly buyer guides. What happened to them? I like to see CPU + Mobo + Mem + PSU combo recommendations in the MOST BANG FOR THE BUCKS categories (Something most people can afford to buy/build)

4) Time to moderate the comments section, but not censorship. My concern is not with f-words, but with trolls and comment abusers. I can't stand it. I remember the old days when then a famous site totally self-destruct, and at that time I think had maybe more readers than Anand, (hint: It had the English version of "Tiburon" as name as part of the domain name) when their forum went totally out of control because it was moderated.

5) Also, time to upgrade the comments software here at Anandtech. It needs a up/down ratings feature that even many newspaper websites offer these days.

shotage - Saturday, October 3, 2009 - link

I agree with the idea of a comments rating system (thumbs up or down). Its a democratic way of moderating. It also saves the need for those short replies when all you want to convey is that you agree or not.

Maybe also an abuse button that people can click on should things get really out of control..?

Skiprudder - Friday, October 2, 2009 - link

The up/down idea is perfect for the site. Now why didn't I think of that! =)

AnnonymousCoward - Friday, October 2, 2009 - link

I don't like commenter ratings, since they give unequal representation/visibility of comments, they affect your perception of a message before you read it, and it's one more thing to look at while skimming comments.

Skiprudder - Friday, October 2, 2009 - link

I'm sorry, but I'm going to ask for a ban of SiliconDoc as well. One person has single-handedly taken over the reply section to this article. I was actually interested in reading what other folks thought of what I feel is a rather remarkable new direction that nvidia is taking in terms of gpu design and marketing, and instead there are literally 30 pages of a single person waging a shouting matching with the world.

Right now there isn't free speech in this discussion because an individual is shouting everyone down. If the admins don't act, people are likely to stop using the reply section all together.