AMD’s Radeon HD 5850: The Other Shoe Drops

by Ryan Smith on September 30, 2009 12:00 AM EST - Posted in GPUs

Battleforge: The First DX11 Game

As we mentioned in our 5870 review, Electronic Arts pushed out the DX11 update for Battleforge the day before the 5870 launched. As we had already left for Intel’s Fall IDF, we were unable to take a look at it at the time, so now we finally have the chance.

Being the first DX11 title, Battleforge makes very limited use of DX11’s features, given that the hardware and the software are still brand-new. The only thing Battleforge uses DX11 for is Compute Shader 5.0, which replaces the use of pixel shaders for calculating ambient occlusion. Notably, this is not a use that improves the image quality of the game; pixel shaders already do this effect in Battleforge and other games. EA is using the compute shader as a faster way to calculate the ambient occlusion compared to using a pixel shader.

The use of various DX11 features to improve performance is something we’re going to see in more games than just Battleforge as additional titles pick up DX11, so this isn’t in any way an unusual use of DX11. Effectively anything can be done with existing pixel, vertex, and geometry shaders (we’ll skip the discussion of Turing completeness), just not at an appropriate speed. The fixed-function tessellator is faster than the geometry shader for tessellating objects, and in certain situations like ambient occlusion the compute shader is going to be faster than the pixel shader.
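As a rough illustration of why a compute shader can beat a pixel shader at an effect like ambient occlusion, the sketch below counts depth-buffer reads for one screen tile under a deliberately simplified model. The assumption here, which the article itself does not state, is that the gain comes from a thread group loading a tile of depth values into shared memory once, where a pixel shader would have each pixel fetch its own neighbourhood. The function names, the 16x16 tile, and the sampling radius are all hypothetical, and real SSAO kernels sample far more sparsely while texture caches narrow the gap considerably.

```python
def pixel_shader_fetches(tile: int, radius: int) -> int:
    """Every pixel in the tile reads its full (2r+1) x (2r+1) neighbourhood on its own."""
    return tile * tile * (2 * radius + 1) ** 2


def compute_shader_fetches(tile: int, radius: int) -> int:
    """A thread group reads the tile plus a border of `radius` texels once into shared memory."""
    return (tile + 2 * radius) ** 2


# Hypothetical parameters chosen only for illustration.
TILE, RADIUS = 16, 4

ps = pixel_shader_fetches(TILE, RADIUS)
cs = compute_shader_fetches(TILE, RADIUS)
print(f"pixel shader reads:   {ps}")
print(f"compute shader reads: {cs}")
print(f"ratio: {ps / cs:.0f}x")
```

The ratio this prints is an upper bound on the idealised saving in memory reads, not a prediction of frame rate.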

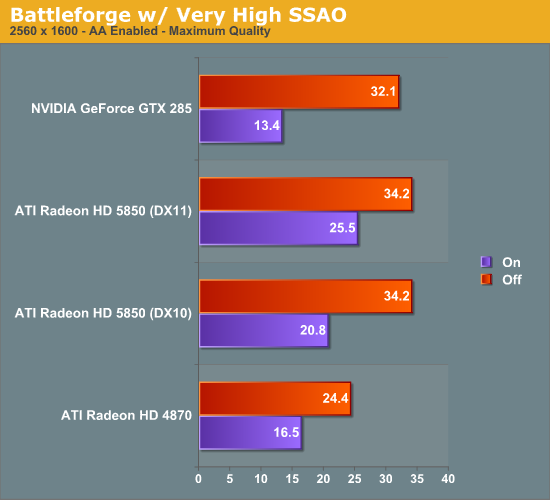

We ran Battleforge both with DX10/10.1 (pixel shader SSAO) and DX11 (compute shader SSAO) and with and without SSAO to look at the performance difference.

Update: We've finally identified the issue with our results. We've re-run the 5850, and now things make much more sense.

As Battleforge only uses the compute shader for SSAO, there is no difference in performance between DX11 and DX10.1 when we leave SSAO off. So the real magic here is when we enable SSAO. In this case we crank it up to Very High, which clobbers all the cards when run as a pixel shader.

The difference in using a compute shader is that the performance hit of SSAO is significantly reduced. As a DX10.1 pixel shader, SSAO lops off 35% of the performance of our 5850. Calculated using a compute shader, that hit becomes 25%. Or to put it another way, switching from a DX10.1 pixel shader to a DX11 compute shader improved performance by 23% when using SSAO. This is what the DX11 compute shader will initially be making possible: allowing developers to go ahead and use effects that would be too slow on earlier hardware.
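For readers who want to relate a "performance hit" to a "performance improvement", the sketch below shows the arithmetic. The helper names are hypothetical. Note that plugging in the rounded 35% and 25% hits yields a gain of roughly 15%, so the 23% figure quoted above does not follow from those rounded percentages alone; it presumably reflects the measured frame rates in the chart, which are not reproduced here.

```python
def hit_from_fps(fps_off: float, fps_on: float) -> float:
    """Fraction of performance lost when an effect is enabled."""
    return 1 - fps_on / fps_off


def relative_speedup(hit_old: float, hit_new: float) -> float:
    """Gain from moving between two implementations of the same effect,
    both measured against the same effect-off baseline."""
    return (1 - hit_new) / (1 - hit_old) - 1


# Rounded hits quoted in the text: 35% (pixel shader) and 25% (compute shader).
speedup = relative_speedup(0.35, 0.25)
print(f"implied speedup: {speedup:.1%}")
```

Given actual frame rates, `hit_from_fps` gives the unrounded hits, and feeding those into `relative_speedup` gives the exact gain.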

Our only big question at this point is whether a DX11 compute shader is really necessary here, or if a DX10/10.1 compute shader could do the job. We know there are some significant additional features available in the DX11 compute shader, but it's not at all clear when they're necessary. In this case Battleforge is an AMD-sponsored showcase title, so take an appropriate quantity of salt when it comes to this matter; other titles may not produce similar results.

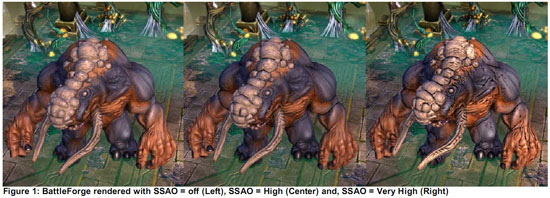

At any rate, even with the lighter performance penalty from using the compute shader, 25% for SSAO is nothing to sneeze at. AMD’s press shot is one of the best-case scenarios for the use of SSAO in Battleforge, and in the game itself the effect is very hard to notice. For the 25% drop in performance, it’s hard to justify the slightly improved visuals.

95 Comments


Seramics - Thursday, October 1, 2009 - link

HD5800 series is bandwidth limited. The 5850 being less severe than 5870. 5850 has about 77% computing power and about 83% of memory bandwidth of 5870. So normally, 5850 should perform about 77% as fast as 5870 but it wasnt the case here. If you calculate all the benchmarks performance at all resolution, its surprisingly consistent, 5850 is always around 82-85% performance of 5870. Never did it drop to below 80% performance level, let alone coming close to 77% which is where it should be. Different game has different bandwidth requirement and there's fluctuation in percentage improvement from 4800 series to 5800 series. It unstable but 5870 for eg rarely doubles 4870, let alone 4890. So in the end, its not parallelization or scaling problems, nor was it geometry or vertex limitation (possible but less likely), it is indeed the 5800 series being limited in performance due to restricted memory bandwidth. Those of you who has 5800 cards, overclock the memory and check the scaling and you'll see wht i mean.

silverblue - Thursday, October 1, 2009 - link

Overclocking the RAM is one idea, adding more RAM is another, however it remains to be seen whether ATI will introduce a wider bus for any higher spec models.

The situation is a little different to the 4830 - 4850 comparison whereby the 4830 had slightly lower clocks but only 640 SPs enabled instead of the full 800, however in the end the performance difference wasn't very large so the lack of shaders didn't cripple the 4830 too much.

Jamahl - Thursday, October 1, 2009 - link

I'm not convinced about that, many games run on 3 30" lcd's with no issues.

More likely is the games aren't pushing the cards to their maximums, that is why you aren't getting the full effect. We will find out for sure when the 2gb version is released.

Seramics - Thursday, October 1, 2009 - link

If u cant see it thats ur problem, to say games arent pushing it is noobish, 1gb is still plentiful for today's games, memory buffer was nv an issue as long as its 1gb. well in the end u will see faster ram outperforming ur 2gb version. Very amazing to see many people still cant figure out the main reasons of 5870's underperformance.

It is still a decent card and offer many features and definitely a better performance to price ratio card than GTX 285. But it is underperforming. Not living up to its nex gen architecture prowess. Unless GT300 screw up, it can easily outperform 5870 when its out. If AMD came out quickly with 5890, they will be wise to significantly bump up the GDDR5 speed as it is unlikely they will go with higher than 256bit design due to their "sweet spot" small die strategy.

Jamahl - Thursday, October 1, 2009 - link

Wait...you really think that you have it figured and ATI didn't realise it? You truly believe that ATI would lower the performance of the card instead of just strapping on a 384 bus?

No. Any bandwidth issues only exist in your head. Didn't you say that different games have different bandwith requirements?

Jamahl - Thursday, October 1, 2009 - link

Any ideas what is going on here with that?

loverboy - Thursday, October 1, 2009 - link

I would really love to know how these games run with this added xtra.

One of my main reasons for upgrading would be to play WOW on three screens (most likely in window mode).

Would it be possible to add this benchmark in the future, with the most obvious config being 3 screens in 2560/1920/1680

yolt - Wednesday, September 30, 2009 - link

I'm looking to pick one of these up relatively quickly. My question is there really a difference in which vendor I purchase from (HIS, Powercolor, Diamond, XFX, etc). I know many offer varying warranties, but if they offer the same clock speeds, what else is there? I guess I'm looking for the most reputable brand since I won't be waiting for too many specific reviews before purchasing one. Any help is appreciated.

ThePooBurner - Wednesday, September 30, 2009 - link

Where and under what conditions/server load do you test the frame rate in WoW? I've played for years and with my 4850 i can get 100fps in the game if i am in the right spot when no one else is in the zone. Knowing when and where you do you frame rate tests for WoW would help to put it into context.

Ryan Smith - Thursday, October 1, 2009 - link

This is from Anand:

"...our test isn't representative of worst case performance - it uses a very, very light server load, unfortunately in my testing I found it nearly impossible to get a repeatable worst case test scenario while testing multiple graphics cards.

I've also found that frame rate on WoW is actually more a function of server load than GPU load, it doesn't have to do with the number of people on the screen, rather the number of people on the server :)

What our test does is simply measures which GPU (or CPU) is going to be best for WoW performance. The overall performance in the game is going to be determined by a number of factors and when it comes to WoW, server load is a huge component."